Marcos Faundez-Zanuy

Speaker verification in mismatch training and testing conditions

Apr 01, 2022

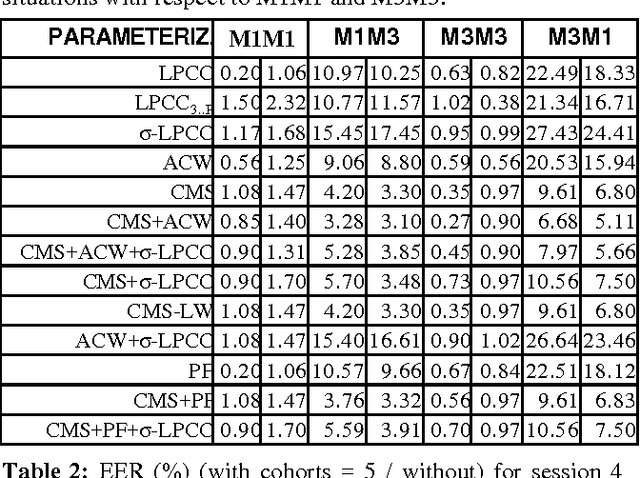

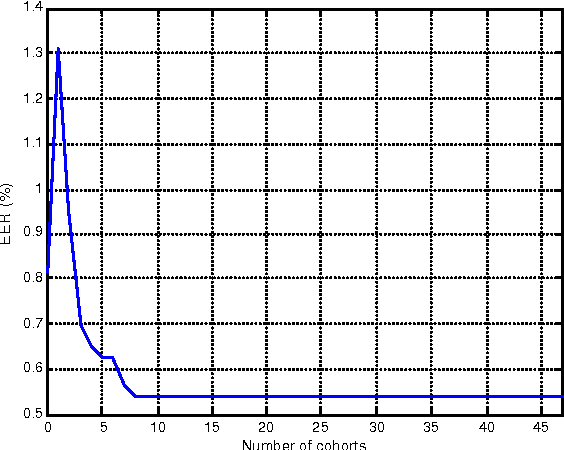

Abstract: This paper presents an exhaustive study of the robustness of several parameterizations, using a new database specially acquired for a speaker recognition application. This database includes the following variations: different recording sessions (including telephone and microphone recordings), recording rooms, and languages (it has been obtained from a bilingual set of speakers). The study has been performed with covariance matrices in a text-independent speaker verification application. It reveals that the combination of several parameterizations can improve the robustness in all the scenarios.

* 4 pages, published in 6th International Conference on Spoken Language Processing (ICSLP 2000), Vol. II, pp. 322-325. ICSLP 2000, ISBN 7-80150-144-4/G.18, Beijing (China). October 16-20, 2000. arXiv admin note: substantial text overlap with arXiv:2203.00513

Face identification by means of a neural net classifier

Apr 01, 2022

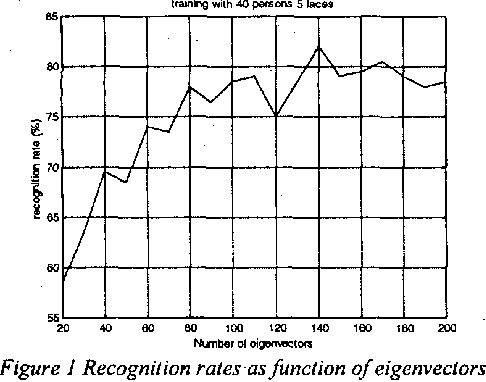

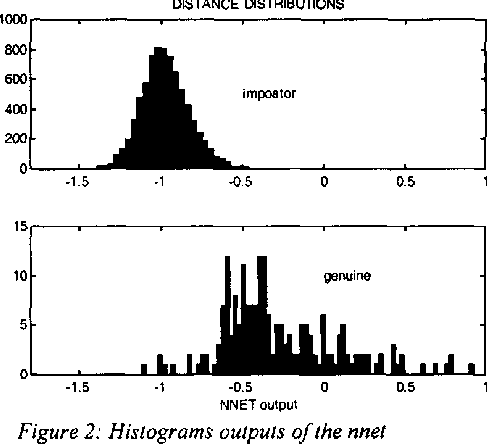

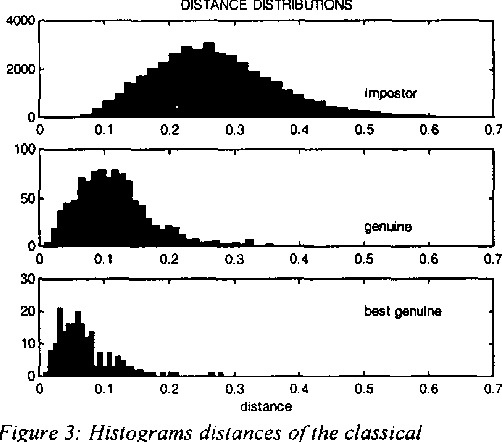

Abstract: This paper describes a novel face identification method that combines eigenfaces theory with neural networks. We use the eigenfaces methodology to reduce the dimensionality of the input image, and a neural net classifier to perform the identification. The method recognizes faces in the presence of variations in facial expression, facial details and lighting conditions. A recognition rate of more than 87% has been achieved, while the classical method of Turk and Pentland achieves 75.5%.

* 5 pages, published in Proceedings IEEE 33rd Annual 1999 International Carnahan Conference on Security Technology (Cat. No.99CH36303) Madrid (Spain)

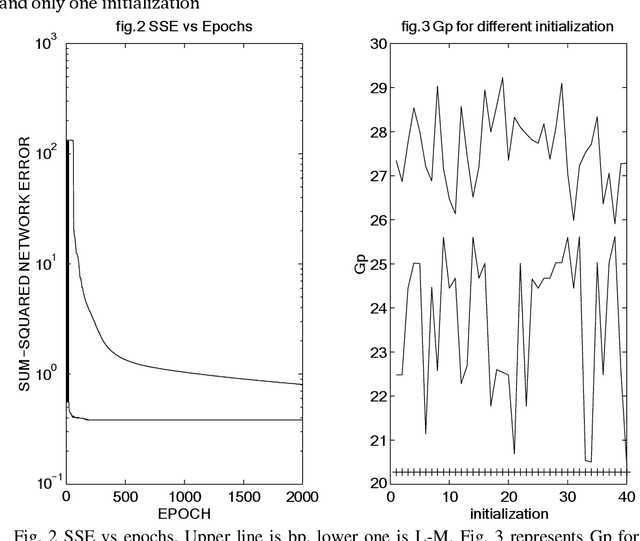

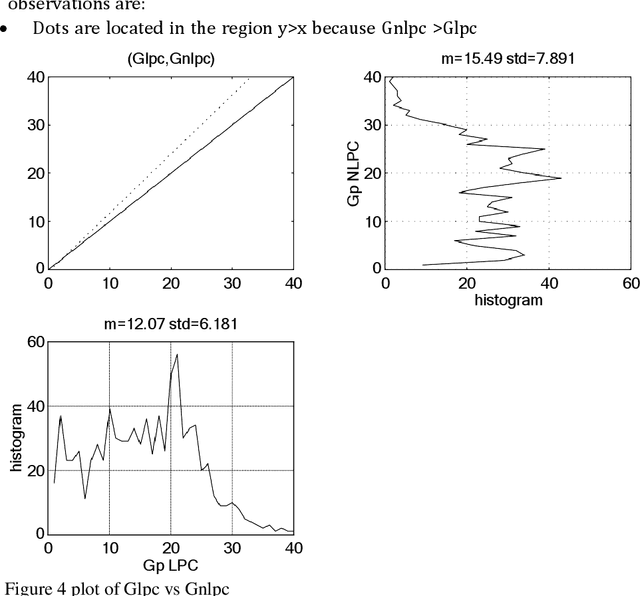

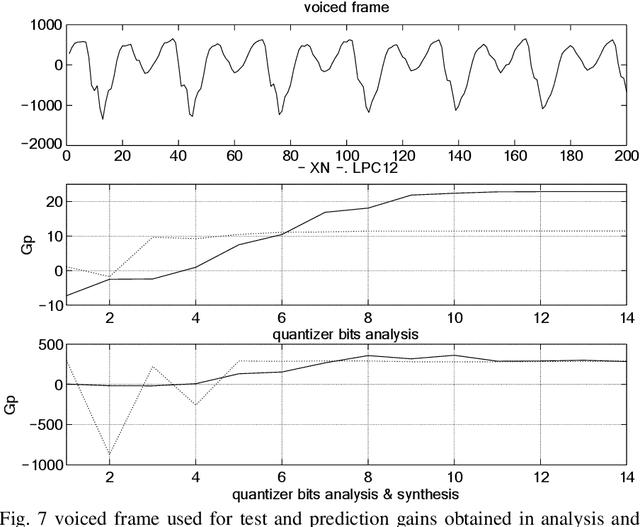

A comparative study between linear and nonlinear speech prediction

Mar 31, 2022

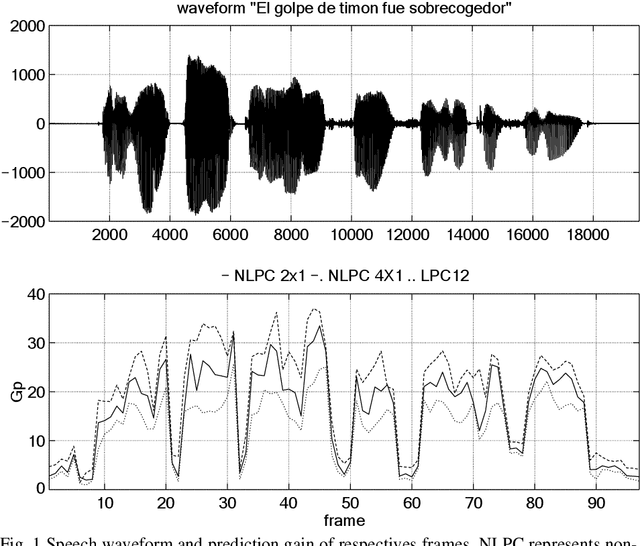

Abstract: This paper focuses on nonlinear predictive coding, which consists of predicting a speech sample from a nonlinear combination of previous samples. It is known that in the generation of the glottal pulse, the wave equation does not behave linearly [2], [10], and we model these effects by means of a nonlinear prediction of speech based on a parametric neural network model. This work is centred on the quantization of the neural net weights and on the compression gain.

* 11 pages, published in Mira, J., Moreno-Díaz, R., Cabestany, J. (eds) Biological and Artificial Computation: From Neuroscience to Technology. IWANN 1997. Lecture Notes in Computer Science, vol 1240. Springer, Berlin, Heidelberg
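As a point of reference for the comparison described in this abstract, the following is a minimal sketch of the linear baseline only: a second-order linear predictor fitted by least squares, scored by its prediction gain in dB. The predictor order, the fitting method, the synthetic test signal and all function names are assumptions made for illustration; the abstract does not specify them, and the paper's neural network predictor is not reproduced here.

```python
import math
import random

def fit_lp2(x):
    """Least-squares fit of x[n] ~ a1*x[n-1] + a2*x[n-2] (hypothetical helper)."""
    c11 = c12 = c22 = c01 = c02 = 0.0
    for n in range(2, len(x)):
        c11 += x[n - 1] * x[n - 1]
        c12 += x[n - 1] * x[n - 2]
        c22 += x[n - 2] * x[n - 2]
        c01 += x[n] * x[n - 1]
        c02 += x[n] * x[n - 2]
    # Solve the 2x2 normal equations with Cramer's rule.
    det = c11 * c22 - c12 * c12
    a1 = (c01 * c22 - c02 * c12) / det
    a2 = (c11 * c02 - c12 * c01) / det
    return a1, a2

def prediction_gain_db(x, a1, a2):
    """10*log10(signal energy / residual energy) over the predicted samples."""
    signal = sum(x[n] ** 2 for n in range(2, len(x)))
    resid = sum((x[n] - a1 * x[n - 1] - a2 * x[n - 2]) ** 2
                for n in range(2, len(x)))
    return 10.0 * math.log10(signal / resid)

def synthetic_ar2(n_samples, seed=0):
    """Synthetic stand-in for speech: an AR(2) process driven by white noise."""
    rng = random.Random(seed)
    x = [0.0, 0.0]
    for _ in range(n_samples):
        x.append(1.5 * x[-1] - 0.8 * x[-2] + rng.gauss(0.0, 1.0))
    return x

signal = synthetic_ar2(2000)
a1, a2 = fit_lp2(signal)
gain = prediction_gain_db(signal, a1, a2)
print(f"a1={a1:.3f} a2={a2:.3f} prediction gain={gain:.1f} dB")
```

A nonlinear predictor would replace the weighted sum inside `prediction_gain_db` with a nonlinear function of the same previous samples, and the two gains could then be compared on the same signal.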

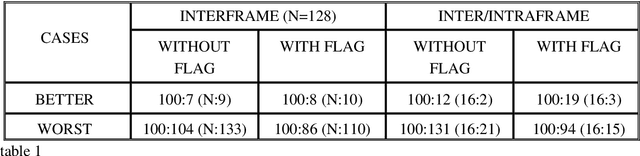

Contributions to interframe coding

Mar 31, 2022

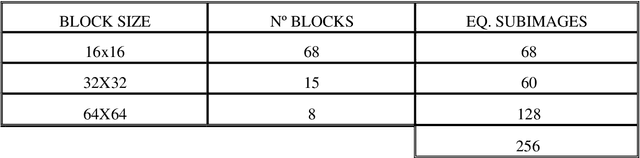

Abstract: Advanced motion models (4 or 6 parameters) are needed for a good representation of the motion experienced by the different objects contained in a sequence of images. If the image is split into very small blocks, then an accurate description of complex movements can be achieved with only 2 parameters. This alternative implies a large set of vectors per image. We propose a new approach to reduce the number of vectors, using different block sizes as a function of the local characteristics of the image, without increasing the error accepted with the smallest blocks. A second algorithm is proposed for an inter/intraframe coder.

* 6 pages, published in Workshop on image analysis & synthesis in image coding. October 1994. Berlin. pp. C3.1 to C3.6. arXiv admin note: text overlap with arXiv:2203.00445
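The idea of two-parameter (translational) motion vectors with variable block sizes can be sketched as follows. This is only one plausible reading of the abstract: the matching cost (sum of absolute differences), the exhaustive search, the quadtree-style split rule, the error threshold and every function name are assumptions made for illustration, not the paper's algorithm.

```python
def best_vector(cur, ref, bx, by, size, search):
    """Exhaustive search for the (dx, dy) minimising the SAD of one block."""
    h, w = len(ref), len(ref[0])
    best = None
    for dy in range(-search, search + 1):
        for dx in range(-search, search + 1):
            # Skip candidates that fall outside the reference frame.
            if by + dy < 0 or bx + dx < 0:
                continue
            if by + dy + size > h or bx + dx + size > w:
                continue
            cost = 0
            for y in range(size):
                for x in range(size):
                    cost += abs(cur[by + y][bx + x]
                                - ref[by + y + dy][bx + x + dx])
            if best is None or cost < best[0]:
                best = (cost, dx, dy)
    return best[1], best[2], best[0]

def variable_blocks(cur, ref, bx, by, size, min_size, search, max_err):
    """Keep one vector for a block if its per-pixel error is acceptable,
    otherwise split it into four sub-blocks (assumed split rule)."""
    dx, dy, cost = best_vector(cur, ref, bx, by, size, search)
    if size <= min_size or cost <= max_err * size * size:
        return [(bx, by, size, dx, dy)]
    half = size // 2
    blocks = []
    for oy in (0, half):
        for ox in (0, half):
            blocks += variable_blocks(cur, ref, bx + ox, by + oy,
                                      half, min_size, search, max_err)
    return blocks

def pattern(x, y):
    """Hypothetical test image: only one displacement matches exactly."""
    return x * x + 100 * y * y

# The current frame is the reference frame translated by (dx, dy) = (2, 1).
ref_frame = [[pattern(x, y) for x in range(16)] for y in range(16)]
cur_frame = [[pattern(x + 2, y + 1) for x in range(16)] for y in range(16)]

vector = best_vector(cur_frame, ref_frame, 4, 4, 4, 3)
blocks = variable_blocks(cur_frame, ref_frame, 4, 4, 8, 2, 3, 0)
print(vector, blocks)
```

With a uniform translation, the large block already matches exactly, so a single vector is kept instead of one vector per small block; a region with more complex motion would be split further.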

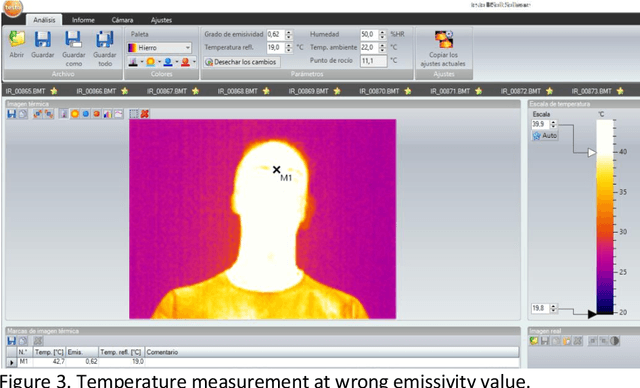

Preliminary experiments on thermal emissivity adjustment for face images

Mar 30, 2022

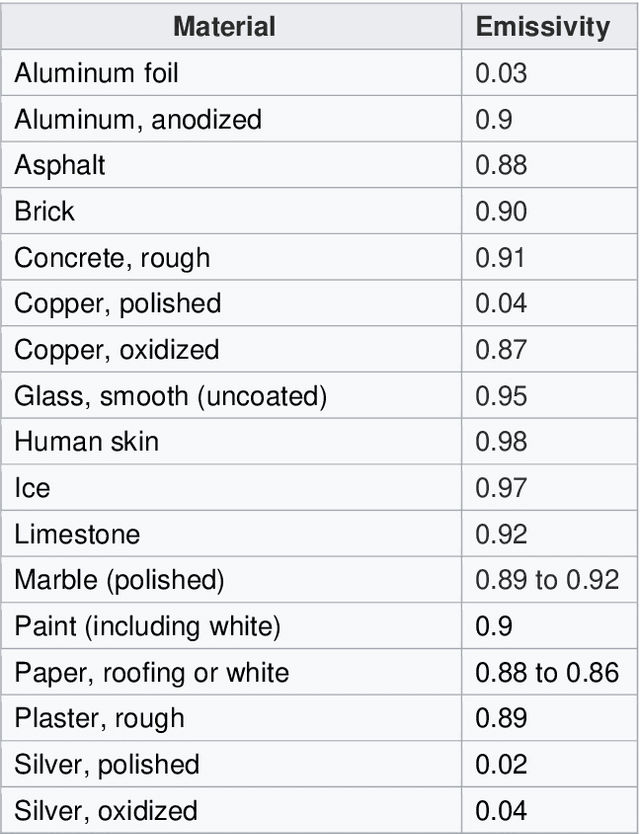

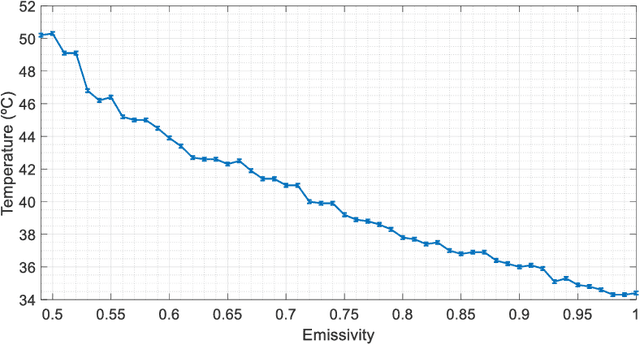

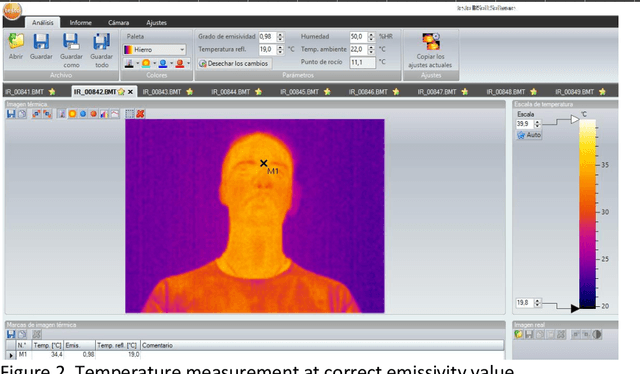

Abstract: In this paper we summarize several applications based on thermal imaging. We emphasize the importance of emissivity adjustment for proper temperature measurement. A new set of face images acquired at different emissivity values in steps of 0.01 is also presented and will be distributed free of charge for research purposes. Among its uses, we can mention: a) the possibility of applying corrections once an image has been acquired with a wrong emissivity value and it is not possible to acquire a new one; b) privacy protection in thermal images, which can be obtained with a low emissivity factor that is still suitable for several applications but hides the identity of the user; c) image processing for improving temperature detection in scenes containing objects of different emissivity.

* 8 pages, published in: Esposito, A., Faundez-Zanuy, M., Morabito, F., Pasero, E. (eds) Progresses in Artificial Intelligence and Neural Systems. Smart Innovation, Systems and Technologies, vol 184. Springer, Singapore

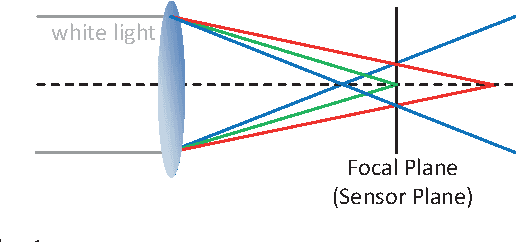

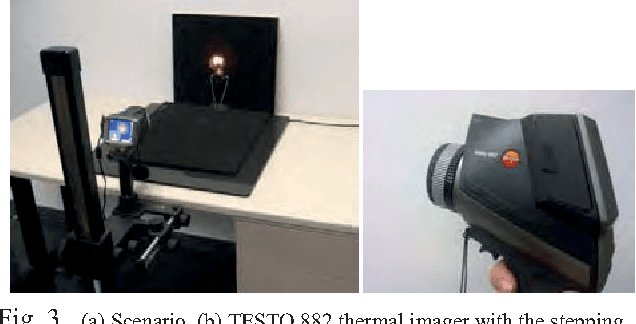

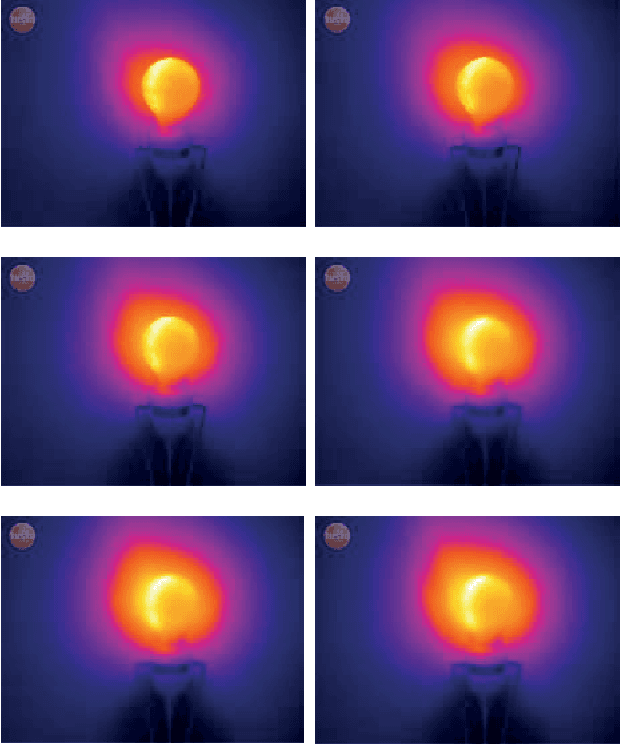

Contribution of the Temperature of the Objects to the Problem of Thermal Imaging Focusing

Mar 30, 2022

Abstract: When focusing an image, depth of field, aperture and distance from the camera to the object must be taken into account, both in the visible and in the infrared spectrum. Our experiments reveal that, in addition, the focusing problem in the thermal spectrum is also strongly dependent on the temperature of the object itself (and/or the scene).

* 5 pages, published in 2012 IEEE International Carnahan Conference on Security Technology (ICCST), 15-18 Oct. 2012 Boston (MA) USA. arXiv admin note: text overlap with arXiv:2203.08513

A Naturalistic Database of Thermal Emotional Facial Expressions and Effects of Induced Emotions on Memory

Mar 29, 2022

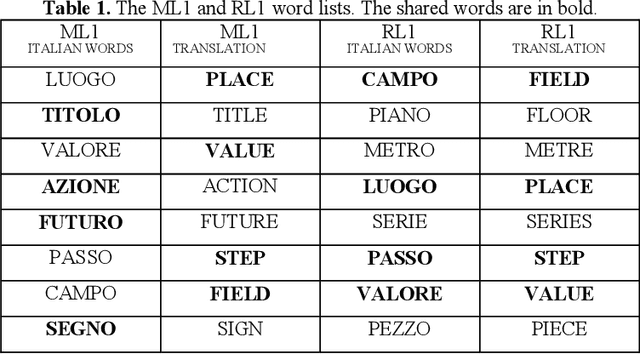

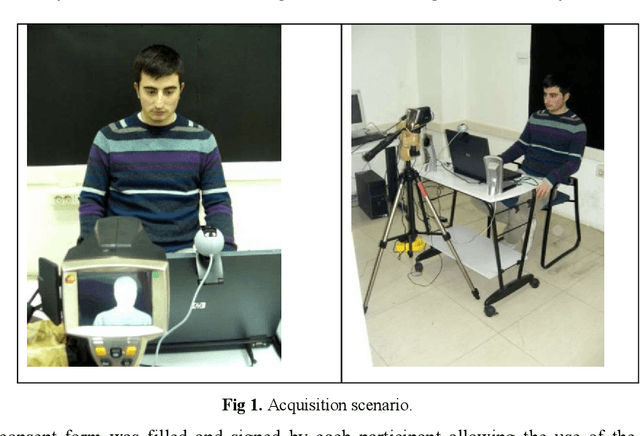

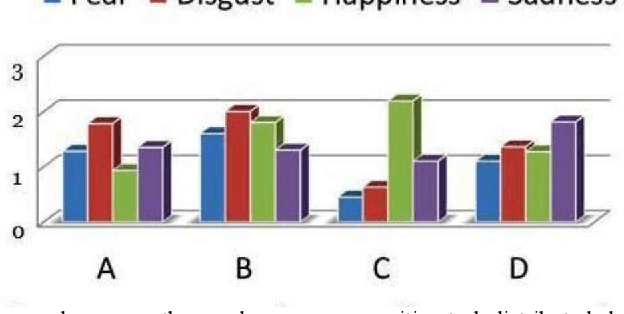

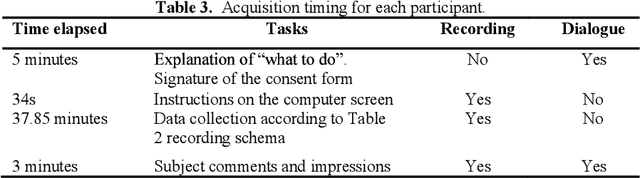

Abstract: This work defines a procedure for collecting naturally induced emotional facial expressions through the viewing of movie excerpts with high emotional content, and reports experimental data ascertaining the effects of emotions on memory word recognition tasks. The induced emotional states include the four basic emotions of sadness, disgust, happiness, and surprise, as well as the neutral emotional state. The resulting database contains both thermal and visible emotional facial expressions, portrayed by forty Italian subjects and simultaneously acquired by appropriately synchronizing a thermal and a standard visible camera. Each subject's recording session lasted 45 minutes, allowing for each mode (thermal or visible) to collect a minimum of 2000 facial expressions, from which a minimum of 400 were selected as highly expressive of each emotion category. The database is available to the scientific community and can be obtained by contacting one of the authors. For this pilot study, it was found that emotions and/or emotion categories do not affect individual performance on memory word recognition tasks, and that temperature changes in the face or in some regions of it do not discriminate among emotional states.

* 15 pages, published in Esposito, A., Esposito, A.M., Vinciarelli, A., Hoffmann, R., Müller, V.C. (eds) Cognitive Behavioural Systems. Lecture Notes in Computer Science, vol 7403. Springer, Berlin, Heidelberg

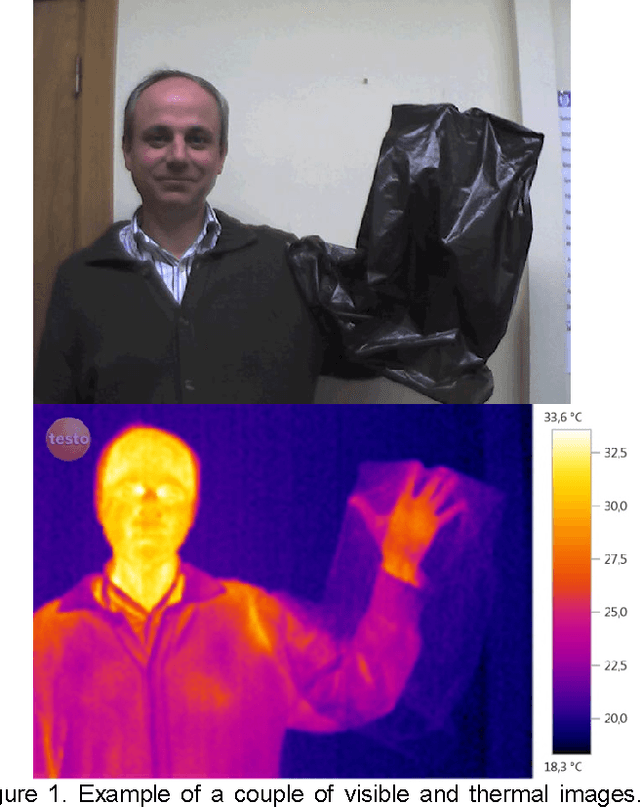

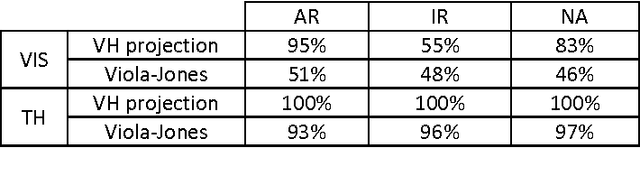

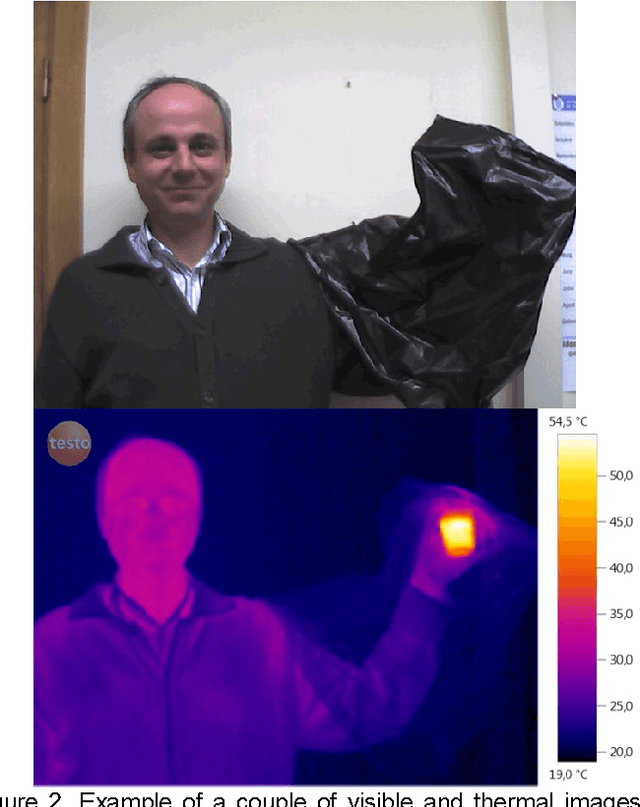

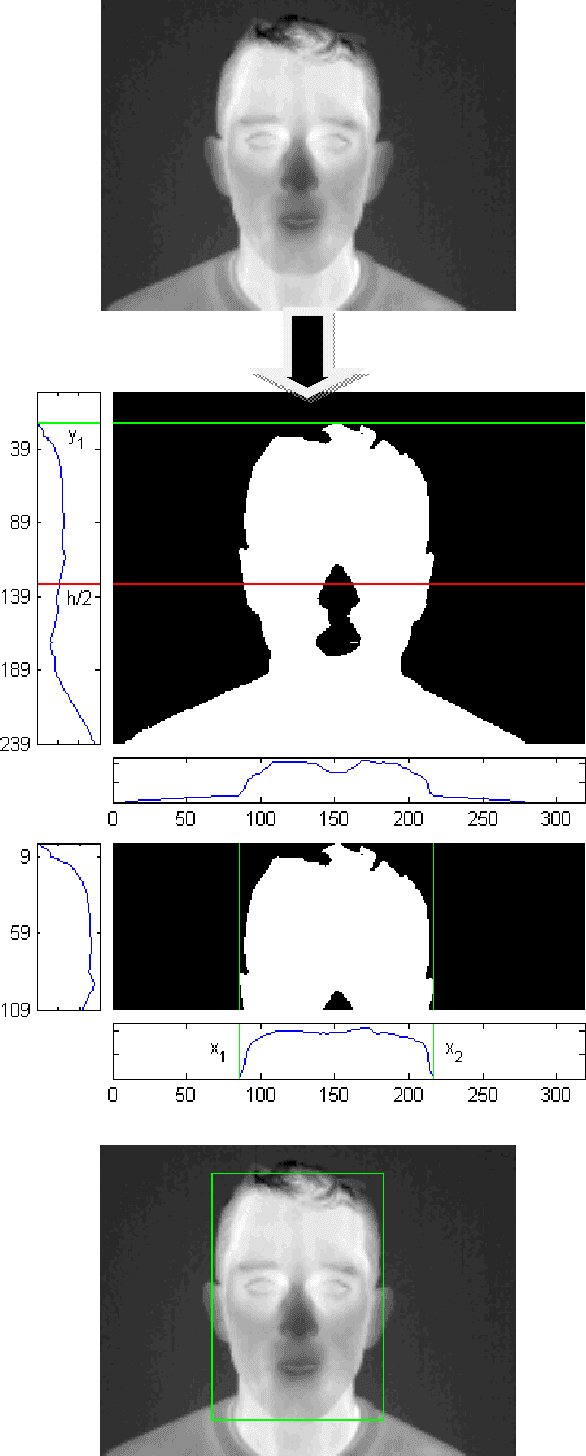

Face segmentation: A comparison between visible and thermal images

Mar 29, 2022

Abstract: Face segmentation is a first step for face biometric systems. In this paper we present a face segmentation algorithm for thermographic images. This algorithm is compared with the classic Viola and Jones algorithm used for visible images. Experimental results reveal that, when segmenting a multispectral (visible and thermal) face database, the proposed algorithm is more than 10 times faster, while the accuracy of face segmentation in thermal images is higher than in the case of Viola-Jones.

* 5 pages, published in 44th Annual 2010 IEEE International Carnahan Conference on Security Technology, 2010, pp. 185-189, 5-8 Oct. 2010 San Jose (California, USA)

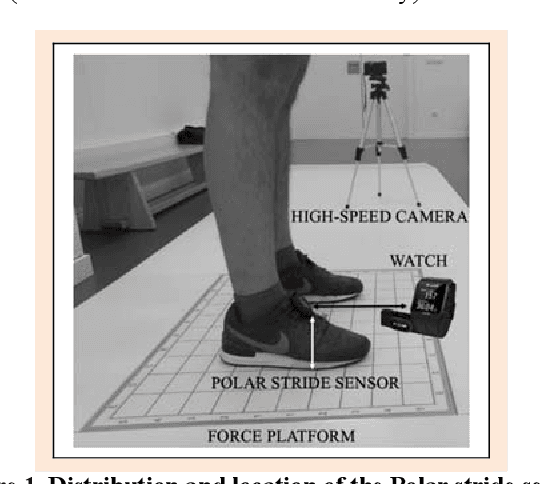

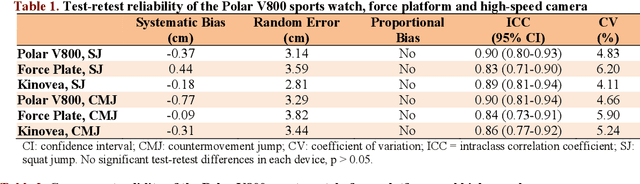

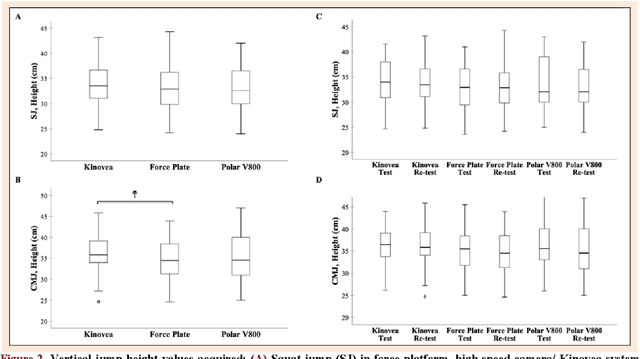

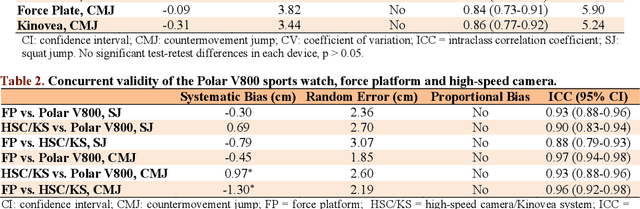

Reliability and Validity of the Polar V800 Sports Watch for Estimating Vertical Jump Height

Mar 28, 2022

Abstract: This study aimed to assess the reliability and validity of the Polar V800 for measuring vertical jump height. Twenty-two physically active healthy men (age: 22.89 ± 4.23 years; body mass: 70.74 ± 8.04 kg; height: 1.74 ± 0.76 m) were recruited for the study. Reliability was evaluated by comparing measurements acquired by the Polar V800 in two identical testing sessions one week apart. Validity was assessed by comparing measurements obtained simultaneously with a force platform (gold standard), a high-speed camera and the Polar V800 during squat jump (SJ) and countermovement jump (CMJ) tests. For test-retest reliability, high intraclass correlation coefficients (ICCs) were observed (mean: 0.90, SJ and CMJ) for the Polar V800. There was no significant systematic bias ± random error (p > 0.05) between test and retest. Low coefficients of variation (<5%) were detected in both jumps for the Polar V800. In the validity assessment, similar jump heights were detected among devices (p > 0.05). There was almost perfect agreement between the Polar V800 and the force platform for the SJ and CMJ tests (mean ICCs = 0.95; no systematic bias ± random error in SJ, mean: -0.38 ± 2.10 cm, p > 0.05). The mean ICC between the Polar V800 and the high-speed camera was 0.91 for the SJ and CMJ tests; however, a significant systematic bias ± random error (0.97 ± 2.60 cm; p = 0.01) was detected in the CMJ test. Compared with a force platform, the Polar V800 offers valid and reliable information about vertical jump height performance in physically active healthy young men.

* 9 pages, published in Journal of Sports Science and Medicine, 20, 149-157
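Two of the statistics named in this abstract can be illustrated with a short sketch. It assumes that "systematic bias ± random error" means the mean and the sample standard deviation of the paired differences, and that the coefficient of variation is computed per subject from the two sessions and then averaged; the abstract does not state the exact formulas. The jump heights below are made-up numbers, not data from the study, and the function names are hypothetical.

```python
from statistics import mean, stdev

def bias_and_random_error(device, reference):
    """Mean and sample SD of the paired differences (assumed definition)."""
    diffs = [d - r for d, r in zip(device, reference)]
    return mean(diffs), stdev(diffs)

def mean_cv_percent(session1, session2):
    """Average within-subject coefficient of variation, in percent."""
    cvs = [100.0 * stdev([a, b]) / mean([a, b])
           for a, b in zip(session1, session2)]
    return mean(cvs)

# Hypothetical jump heights in cm for four subjects (illustration only).
watch_test = [30.1, 34.8, 28.5, 41.0]
watch_retest = [30.9, 34.0, 29.3, 40.2]
platform = [30.5, 35.5, 28.0, 41.6]

bias, random_error = bias_and_random_error(watch_test, platform)
cv = mean_cv_percent(watch_test, watch_retest)
print(f"bias={bias:.2f} cm, random error={random_error:.2f} cm, CV={cv:.2f}%")
```

The intraclass correlation coefficient reported in the abstract requires an analysis-of-variance decomposition and is not covered by this sketch.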

On the Handwriting Tasks' Analysis to Detect Fatigue

Mar 28, 2022

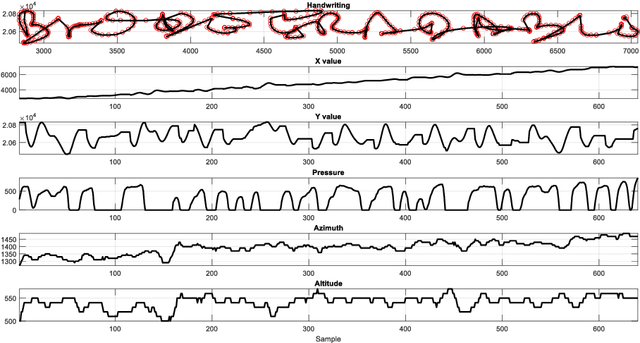

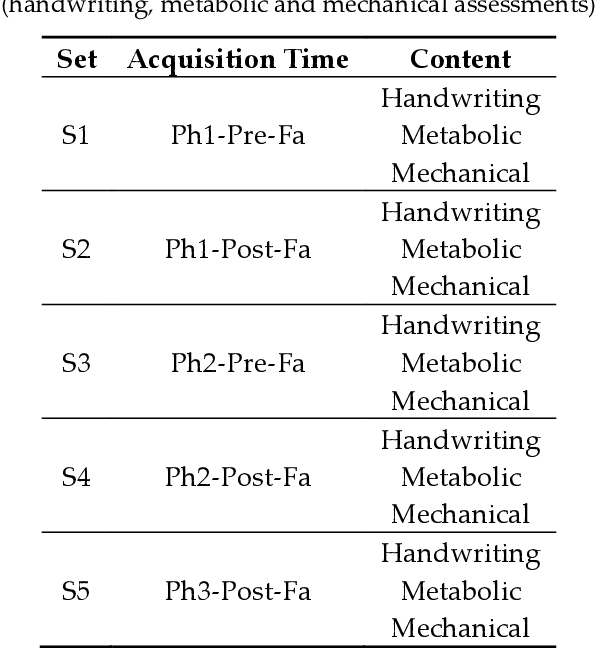

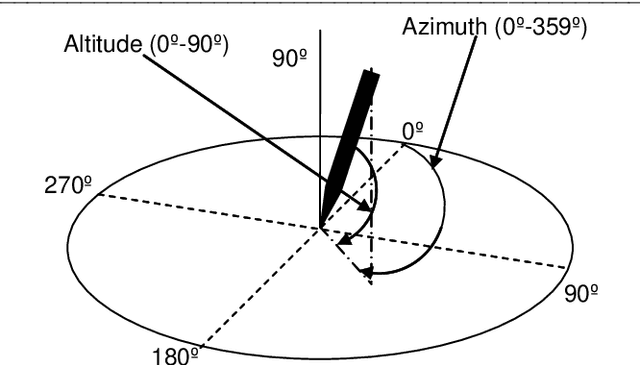

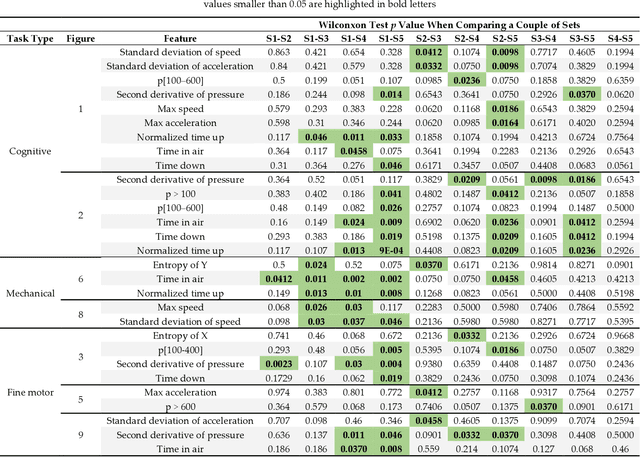

Abstract: Practical determination of physical recovery after intense exercise is a challenging topic that must include mechanical aspects as well as cognitive ones, because most physical sport activities, as well as professional activities (including brain-computer interface-operated systems), require good shape in both. This paper presents a new online handwritten database of 20 healthy subjects. The main goal was to study the influence of several physical exercise stimuli on different handwritten tasks and to evaluate the recovery after strenuous exercise. To this end, the subjects performed different handwritten tasks before and after physical exercise, along with other measurements such as metabolic and mechanical fatigue assessment. Experimental results showed that although a fast mechanical recovery happens and can be measured by lactate concentrations and mechanical fatigue, this is not the case when cognitive effort is required. Handwriting analysis revealed that statistical differences exist in handwriting performance even after lactate concentration and mechanical assessment have recovered. Conclusions: This points to a need for more recovery time in sport and professional activities than classic measurements indicate.

* 16 pages, published in Applied Sciences 10, no. 21: 7630, 2020
