Manisha J. Nene

A federated architecture for sector-led AI governance: lessons from India

Mar 27, 2026

Abstract:
Purpose: India has adopted a vertical, sector-led AI governance strategy. Although this light-touch approach promotes innovation, it risks policy fragmentation. This paper proposes a cohesive "whole-of-government" architecture to mitigate these risks and to connect policy goals with a practical implementation plan.
Design/methodology/approach: The paper applies an established five-layer conceptual framework to the Indian context. First, it constructs a national architecture for overall governance. Second, it uses a detailed case study on AI incident management to validate the architecture and demonstrate its practical utility in designing a specific operational system.
Findings: The paper develops two actionable architectures. The primary model assigns clear governance roles to India's key institutions. The second is a detailed, federated architecture for national AI incident management. It addresses the data silo problem through a common national standard that permits sector-specific data collection while facilitating cross-sectoral analysis.
Practical implications: The proposed architectures offer a clear and predictable roadmap for India's policymakers, regulators and industry to accelerate the national AI governance agenda.
Social implications: By providing a systematic path from policy to practice, the architecture helps build public trust. This structured approach ensures accountability and aligns AI development with societal values.
Originality/value: This paper proposes a detailed operational architecture for India's "whole-of-government" approach to AI. It offers a globally relevant template, with a clear implementation plan, for any nation pursuing a sector-led governance model. Furthermore, the proposed federated architecture demonstrates how adopting common standards can enable cross-border data aggregation and global sectoral risk analysis without centralising control.

* 12 pages, 2 figures, 1 table. This is the author's accepted manuscript of the article published as: Avinash Agarwal, Manisha J. Nene, "A federated architecture for sector-led AI governance: lessons from India", Transforming Government: People, Process and Policy, 2026. Available at: https://doi.org/10.1108/TG-09-2025-0310
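The federated incident-management idea in the Findings above (sector-specific collection, a common national standard, cross-sectoral analysis) can be illustrated with a minimal Python sketch. Everything in it is an assumption made for illustration and is not the paper's design: the hypothetical `CommonIncident` fields, the 1-4 severity scale, the sector record formats, and the choice to share only aggregate counts with the national layer.

```python
from collections import Counter
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class CommonIncident:
    """Hypothetical common national standard shared by all sectors."""
    sector: str
    harm_type: str  # illustrative values, e.g. "bias", "performance_degradation"
    severity: int   # assumed scale: 1 (low) to 4 (critical)


@dataclass
class SectorNode:
    """A sector regulator that keeps its own record format and raw data."""
    sector: str
    local_records: list
    to_common: Callable[[dict], CommonIncident]  # sector-specific mapping

    def aggregate(self) -> Counter:
        # Map local records to the common standard and share only counts,
        # so raw incident data never leaves the sector.
        return Counter(
            (inc.harm_type, inc.severity)
            for inc in map(self.to_common, self.local_records)
        )


def national_view(nodes: list) -> Counter:
    """Cross-sectoral analysis: sum the per-sector aggregates."""
    total = Counter()
    for node in nodes:
        total.update(node.aggregate())
    return total


# Example: two sectors with different (hypothetical) local formats.
TELECOM_LEVELS = {"low": 1, "medium": 2, "high": 3, "critical": 4}
telecom = SectorNode(
    "telecom",
    [{"kind": "bias", "level": "high"},
     {"kind": "performance_degradation", "level": "low"}],
    lambda r: CommonIncident("telecom", r["kind"], TELECOM_LEVELS[r["level"]]),
)
finance = SectorNode(
    "finance",
    [{"category": "bias", "sev": 3}],
    lambda r: CommonIncident("finance", r["category"], r["sev"]),
)
print(national_view([telecom, finance]))
```

The national layer sees that "bias" incidents of severity 3 occurred in two sectors without ever receiving either sector's raw records; only the mapping functions need to agree on the common standard.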

Incorporating AI Incident Reporting into Telecommunications Law and Policy: Insights from India

Sep 11, 2025

Abstract: The integration of artificial intelligence (AI) into telecommunications infrastructure introduces novel risks, such as algorithmic bias and unpredictable system behavior, that fall outside the scope of traditional cybersecurity and data protection frameworks. This paper introduces a precise definition and a detailed typology of telecommunications AI incidents, establishing them as a distinct category of risk that warrants recognition as a separate regulatory concern. Using India as a case study for jurisdictions that lack a horizontal AI law, the paper analyzes the country's key digital regulations. The analysis reveals that India's existing legal instruments, including the Telecommunications Act, 2023, the CERT-In Rules, and the Digital Personal Data Protection Act, 2023, focus on cybersecurity and data breaches, leaving a significant regulatory gap for AI-specific operational incidents such as performance degradation and algorithmic bias. The paper also examines structural barriers to disclosure and the limitations of existing AI incident repositories. Based on these findings, it proposes targeted policy recommendations for integrating AI incident reporting into India's existing telecom governance. Key proposals include mandating reporting for high-risk AI failures, designating an existing government body as a nodal agency to manage incident data, and developing standardized reporting frameworks. These recommendations aim to enhance regulatory clarity and strengthen long-term resilience, offering a pragmatic and replicable blueprint for other nations seeking to govern AI risks within their existing sectoral frameworks.
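The reporting logic implied by the key proposals can be sketched as a simple decision rule. This is a hypothetical illustration, not the paper's recommended rule: the incident categories, the treatment of cybersecurity incidents and personal data breaches as already covered by existing channels, and the severity threshold of 3 on a 1-4 scale are all assumptions.

```python
from enum import Enum


class IncidentType(Enum):
    CYBERSECURITY = "cybersecurity"
    PERSONAL_DATA_BREACH = "personal_data_breach"
    PERFORMANCE_DEGRADATION = "performance_degradation"
    ALGORITHMIC_BIAS = "algorithmic_bias"


# Assumption: these categories already have reporting channels under existing
# cybersecurity and data-protection rules; the others fall into the gap.
COVERED_ELSEWHERE = {IncidentType.CYBERSECURITY, IncidentType.PERSONAL_DATA_BREACH}

HIGH_RISK_THRESHOLD = 3  # hypothetical cut-off on an assumed 1-4 severity scale


def must_report_to_nodal_agency(kind: IncidentType, severity: int) -> bool:
    """Hypothetical mandate: report only high-risk, AI-specific failures."""
    if kind in COVERED_ELSEWHERE:
        return False  # reported through existing channels instead
    return severity >= HIGH_RISK_THRESHOLD


print(must_report_to_nodal_agency(IncidentType.ALGORITHMIC_BIAS, 4))
```

Keeping the rule as data (a set of covered categories plus a threshold) means a regulator could revise either without changing the decision logic.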

Enhancements for Developing a Comprehensive AI Fairness Assessment Standard

Apr 10, 2025

Abstract: As AI systems increasingly influence critical sectors such as telecommunications, finance, healthcare, and public services, ensuring fairness in decision-making is essential to prevent biased or unjust outcomes that disproportionately affect vulnerable entities or otherwise cause harm. This need is particularly pressing as the industry approaches the 6G era, in which AI will drive complex functions such as autonomous network management and hyper-personalized services. The TEC Standard for Fairness Assessment and Rating of AI Systems provides guidelines for evaluating fairness in AI, focusing primarily on tabular data and supervised learning models. However, as AI applications diversify, the standard needs enhancement to strengthen its impact and broaden its applicability. This paper proposes expanding the TEC Standard to include fairness assessments for images, unstructured text, and generative AI, including large language models, so that it keeps pace with evolving AI technologies. By incorporating these dimensions, the enhanced framework would promote responsible and trustworthy AI deployment across sectors.

* 5 pages. Published in 2025 17th International Conference on COMmunication Systems and NETworks (COMSNETS). Access: https://ieeexplore.ieee.org/abstract/document/10885551
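As a concrete example of the kind of metric a fairness assessment of tabular, supervised models can use, the sketch below computes statistical parity difference for binary predictions. It is one common group-fairness metric, chosen here as an assumption; it is not presented as the TEC Standard's rating procedure, and the function and variable names are hypothetical.

```python
def statistical_parity_difference(y_pred, groups, privileged):
    """P(y_hat = 1 | unprivileged) - P(y_hat = 1 | privileged) for 0/1 predictions."""
    priv = [p for p, g in zip(y_pred, groups) if g == privileged]
    unpriv = [p for p, g in zip(y_pred, groups) if g != privileged]
    if not priv or not unpriv:
        raise ValueError("both groups need at least one prediction")
    return sum(unpriv) / len(unpriv) - sum(priv) / len(priv)


y_pred = [1, 0, 1, 1, 0, 0, 1, 0]
groups = ["a", "a", "a", "a", "b", "b", "b", "b"]
print(statistical_parity_difference(y_pred, groups, privileged="a"))  # -0.5
```

A value of 0 indicates that both groups receive positive predictions at the same rate; here group "b" receives them at 0.25 versus 0.75 for group "a". Metrics of this kind assume a tabular protected attribute, which is part of why extending assessment to images, free text, and generative models requires new methods.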

Standardised schema and taxonomy for AI incident databases in critical digital infrastructure

Jan 28, 2025

Abstract: The rapid deployment of artificial intelligence (AI) in critical digital infrastructure introduces significant risks, which call for a robust framework for systematically collecting AI incident data to help prevent future incidents. Existing databases lack both the granularity and the standardized structure required for consistent data collection and analysis, impeding effective incident management. This work proposes a standardized schema and taxonomy for AI incident databases that address these challenges by enabling detailed, structured documentation of AI incidents across sectors. Key contributions include a unified schema, new fields such as incident severity, causes, and harms caused, and a taxonomy for classifying AI incidents in critical digital infrastructure. The proposed solution facilitates more effective incident data collection and analysis, thereby supporting evidence-based policymaking, strengthening industry safety measures, and promoting transparency. This work lays the foundation for a coordinated global response to AI incidents, fostering trust, safety, and accountability in the use of AI across regions.
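A minimal sketch of a structured incident record carrying the new fields mentioned above (severity, causes, harms) might look as follows. The field names, the four-level `Severity` scale, and the example values are hypothetical and do not reproduce the schema or taxonomy proposed in the paper.

```python
import json
from dataclasses import asdict, dataclass, field
from enum import IntEnum


class Severity(IntEnum):
    """Assumed four-level severity scale (hypothetical)."""
    LOW = 1
    MEDIUM = 2
    HIGH = 3
    CRITICAL = 4


@dataclass
class AIIncidentRecord:
    incident_id: str
    sector: str          # e.g. "telecom"
    description: str
    severity: Severity
    causes: list = field(default_factory=list)  # e.g. ["data_drift"]
    harms: list = field(default_factory=list)   # e.g. ["service_degradation"]

    def __post_init__(self):
        if not self.incident_id:
            raise ValueError("incident_id is required")
        # Accepts an int or a Severity; raises ValueError for values outside 1-4.
        self.severity = Severity(self.severity)

    def to_json(self) -> str:
        record = asdict(self)
        record["severity"] = self.severity.name  # store the label, not the number
        return json.dumps(record, sort_keys=True)


rec = AIIncidentRecord(
    "INC-0001", "telecom", "Traffic classifier misrouted calls",
    severity=3, causes=["data_drift"], harms=["service_degradation"],
)
print(rec.to_json())
```

Validating severity against a fixed scale at creation time is what makes records from different contributors comparable; free-text severity would reintroduce the inconsistency the abstract describes.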

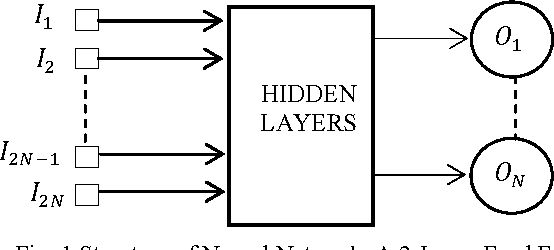

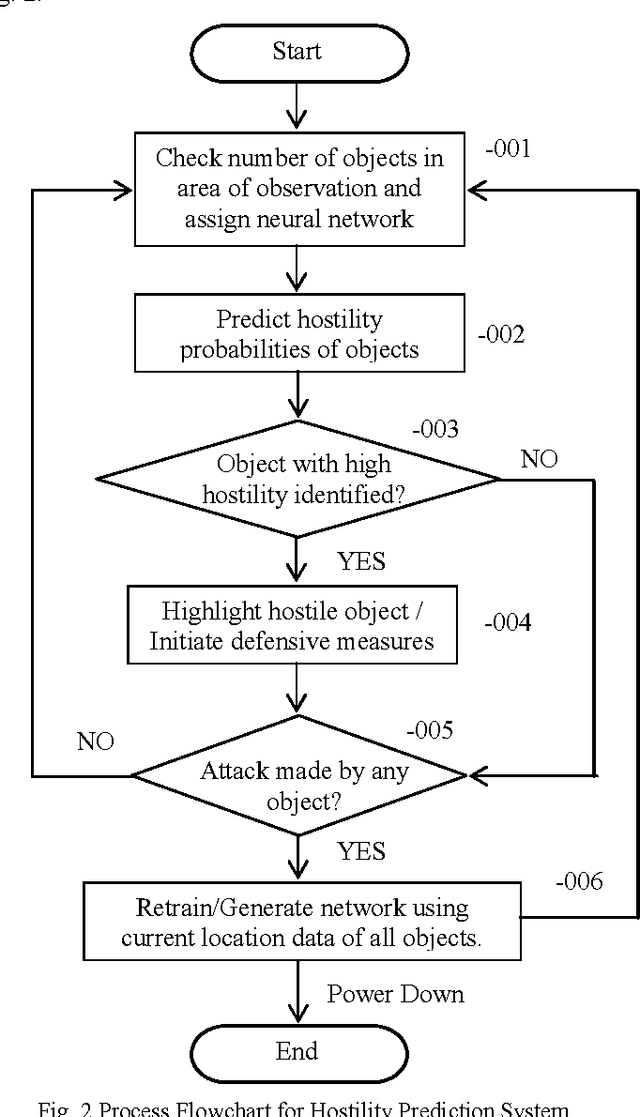

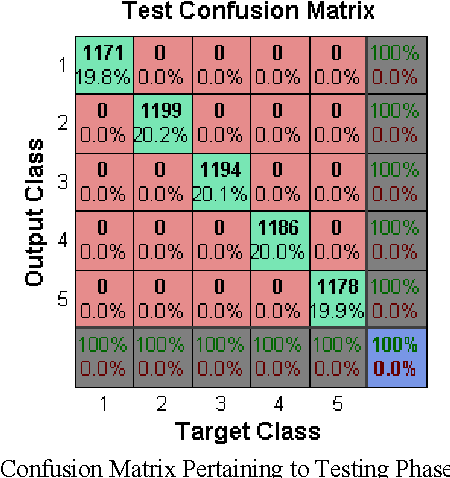

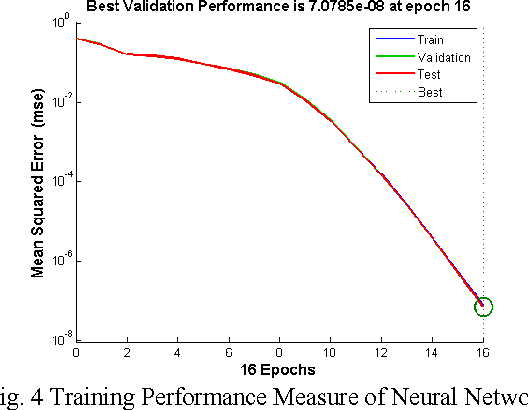

Hostile Intent Identification by Movement Pattern Analysis: Using Artificial Neural Networks

Jan 04, 2015

Abstract: In recent years, the problem of identifying suspicious behavior has gained importance, and identifying such behavior with computational systems and autonomous algorithms is highly desirable in tactical scenarios. Existing solutions have been primarily manual, relying on human observers to discern whether a situation is hostile. A number of fully and partially automated solutions also exist, but they cannot learn from experience and they operate in conjunction with human supervision, which is highly prone to error. This paper proposes a generalized methodology that predicts the hostility of a given object from its movement patterns; the methodology can learn and is modeled on the way humans learn from experience. The proposed methodology has been implemented in a computer simulation. The results show that it has the potential to be applied in real-world tactical scenarios.
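The general idea of learning hostility from movement patterns can be illustrated with a deliberately small Python sketch. It is not the paper's method: the abstract does not specify the network, features, or data, so this sketch substitutes a single-neuron perceptron over two hand-picked features (mean speed and mean absolute turn angle), and the toy tracks label fast, erratic movement as hostile purely as an assumption.

```python
import math


def movement_features(track):
    """Mean speed and mean absolute turn angle (radians) of (x, y) points at unit time steps."""
    steps = [(x2 - x1, y2 - y1) for (x1, y1), (x2, y2) in zip(track, track[1:])]
    speeds = [math.hypot(dx, dy) for dx, dy in steps]
    headings = [math.atan2(dy, dx) for dx, dy in steps]
    # math.remainder wraps each heading change into [-pi, pi].
    turns = [abs(math.remainder(h2 - h1, 2 * math.pi))
             for h1, h2 in zip(headings, headings[1:])]
    return [sum(speeds) / len(speeds), sum(turns) / len(turns) if turns else 0.0]


def predict(w, b, x):
    return 1 if sum(wj * xj for wj, xj in zip(w, x)) + b > 0 else 0


def train_perceptron(X, y, max_epochs=100):
    """Classic perceptron rule: adjust weights only on misclassified examples."""
    w, b = [0.0] * len(X[0]), 0.0
    for _ in range(max_epochs):
        mistakes = 0
        for xi, yi in zip(X, y):
            if predict(w, b, xi) != yi:
                sign = 1 if yi == 1 else -1
                w = [wj + sign * xj for wj, xj in zip(w, xi)]
                b += sign
                mistakes += 1
        if mistakes == 0:  # every training track is classified correctly
            break
    return w, b


# Toy tracks (hypothetical): 0 = benign, 1 = hostile.
tracks = [
    [(0, 0), (1, 0), (2, 0), (3, 0)],   # slow, straight
    [(0, 0), (0, 2), (0, 4), (0, 6)],   # moderate, straight
    [(0, 0), (3, 3), (6, 0), (9, 3)],   # fast zigzag
    [(0, 0), (4, 0), (4, 4), (8, 4)],   # fast, sharp turns
]
labels = [0, 0, 1, 1]
X = [movement_features(t) for t in tracks]
w, b = train_perceptron(X, labels)
print([predict(w, b, x) for x in X])  # [0, 0, 1, 1]
```

Because these toy feature vectors are linearly separable, the perceptron rule is guaranteed to stop making mistakes after finitely many updates. Real movement data would need richer features, far more examples, and a multi-layer network.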
