Lizhe Sun

Online Regression with Feature Selection in Stochastic Data Streams

Sep 24, 2018

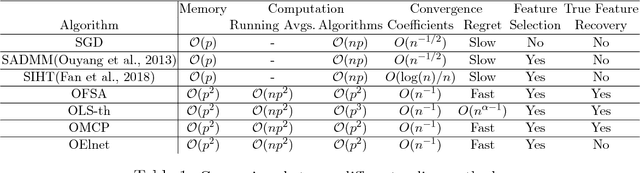

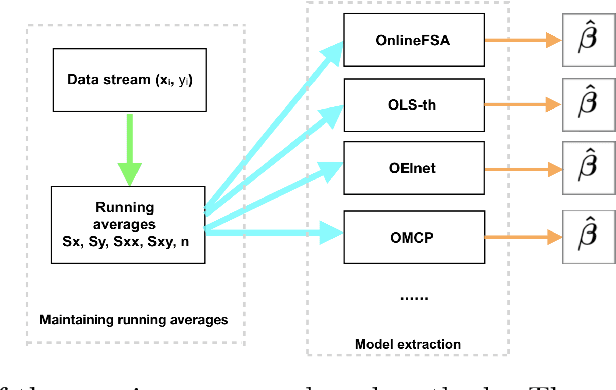

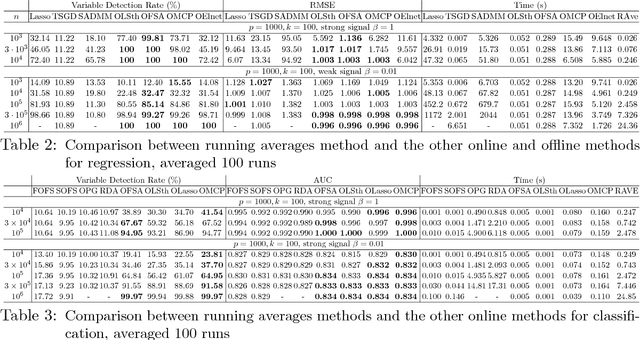

Abstract: Online learning algorithms have a wide variety of applications in large-scale machine learning problems because users can evaluate the performance of the current model trained on existing data and can update the model rapidly as the data change. However, standard online learning methods still suffer from issues such as slow convergence and a limited ability to select features or recover the true features. In this paper, we present a novel framework for online learning based on running averages and introduce online versions of several popular offline algorithms, including Elastic Net, Minimax Concave Penalty, and Feature Selection with Annealing. We prove the equivalence between our online methods and their offline counterparts and give theoretical feature selection and convergence guarantees for some of them. In contrast to existing online methods, the proposed methods can extract models with any desired sparsity level at any time. Numerical experiments indicate that the new methods achieve high feature selection accuracy and fast convergence compared with standard stochastic algorithms and offline learning algorithms. We also present applications to large datasets, where the proposed framework again shows competitive results compared with popular online and offline algorithms.
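To make the running-averages idea concrete, the following is a minimal sketch and not the paper's implementation. It assumes the running averages are the sample second moments of the features and the feature-response cross moments, and it uses plain ridge regression as a stand-in for the penalized methods named in the abstract. The class name `RunningAverages`, its methods, and the synthetic data are all hypothetical and chosen only for illustration.

```python
import numpy as np


class RunningAverages:
    """Hypothetical helper: keeps (1/n) X^T X and (1/n) X^T y over a stream."""

    def __init__(self, p):
        self.n = 0                    # number of observations seen so far
        self.Sxx = np.zeros((p, p))   # running average of x x^T
        self.Sxy = np.zeros(p)        # running average of x * y

    def update(self, X, y):
        """Fold a new batch (rows of X, entries of y) into the averages."""
        m = X.shape[0]
        total = self.n + m
        self.Sxx = (self.n * self.Sxx + X.T @ X) / total
        self.Sxy = (self.n * self.Sxy + X.T @ y) / total
        self.n = total

    def ridge(self, lam):
        """Ridge estimate computed from the running averages alone."""
        p = self.Sxx.shape[0]
        return np.linalg.solve(self.Sxx + lam * np.eye(p), self.Sxy)


# Synthetic stream (assumed data, for illustration only).
rng = np.random.default_rng(0)
p = 5
beta_true = np.array([2.0, -1.0, 0.0, 0.0, 0.5])
X_all = rng.normal(size=(300, p))
y_all = X_all @ beta_true + 0.1 * rng.normal(size=300)

# Online pass: the data arrive in batches of 50 and are then discarded.
ra = RunningAverages(p)
for start in range(0, 300, 50):
    ra.update(X_all[start:start + 50], y_all[start:start + 50])

# Offline ridge on the full data, using the same 1/n scaling.
lam = 0.1
beta_online = ra.ridge(lam)
beta_offline = np.linalg.solve(
    X_all.T @ X_all / 300 + lam * np.eye(p), X_all.T @ y_all / 300
)
```

Under these assumptions the online and offline estimates agree up to floating-point error, because the ridge objective depends on the data only through the two averages. The memory cost is independent of the number of observations, and a model can be extracted at any point in the stream.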
