Krunal Kishor Patel

Discounted Pseudocosts in MILP

Jul 07, 2024

Abstract: In this article, we introduce the concept of discounted pseudocosts, inspired by discounted total reward in reinforcement learning, and explore their application in mixed-integer linear programming (MILP). Traditional pseudocosts estimate changes in the objective function due to variable bound changes during the branch-and-bound process. By integrating reinforcement learning concepts, we propose a novel approach incorporating a forward-looking perspective into pseudocost estimation. We present the motivation behind discounted pseudocosts and discuss how they represent the anticipated reward for branching after one level of exploration in the MILP problem space. Initial experiments on MIPLIB 2017 benchmark instances demonstrate the potential of discounted pseudocosts to enhance branching strategies and accelerate the solution process for challenging MILP problems.

Revisiting column-generation-based matheuristic for learning classification trees

Aug 22, 2023

Abstract: Decision trees are highly interpretable models for solving classification problems in machine learning (ML). The standard ML algorithms for training decision trees are fast but generate suboptimal trees in terms of accuracy. Other discrete optimization models in the literature address the optimality problem but only work well on relatively small datasets. Firat et al. (2020) proposed a column-generation-based heuristic approach for learning decision trees. This approach improves scalability and can work with large datasets. In this paper, we describe improvements to this column generation approach. First, we modify the subproblem model to significantly reduce the number of subproblems in multiclass classification instances. Next, we show that the data-dependent constraints in the master problem are implied, and use them as cutting planes. Furthermore, we describe a separation model to generate data points for which the linear programming relaxation solution violates their corresponding constraints. We conclude by presenting computational results that show that these modifications result in better scalability.

Explainable prediction of Qcodes for NOTAMs using column generation

Aug 09, 2022

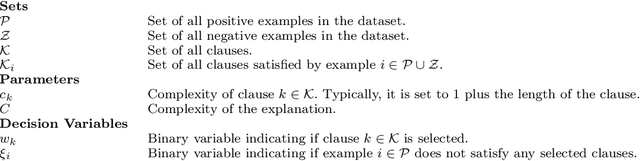

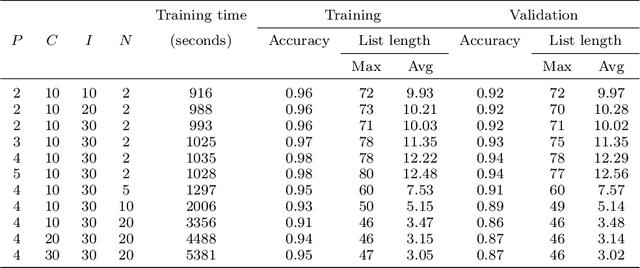

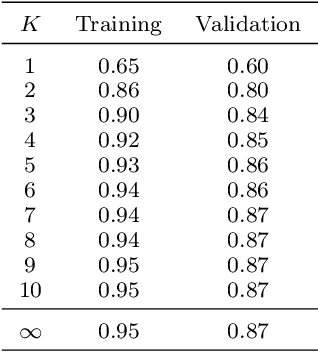

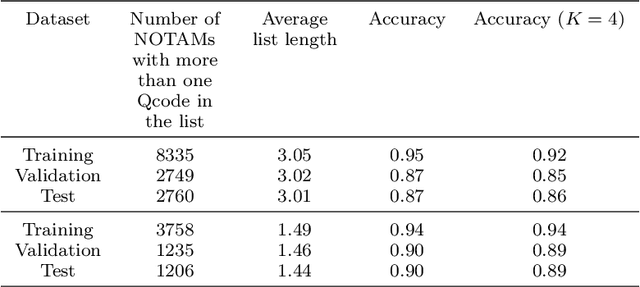

Abstract: A NOtice To AirMen (NOTAM) contains important flight-route-related information. To make NOTAMs easier to search and filter, they are grouped into categories called Qcodes. In this paper, we develop a tool to predict, with some explanations, a Qcode for a NOTAM. We present a way to extend the interpretable binary classification method using column generation, proposed in Dash, Gunluk, and Wei (2018), to a multiclass text classification method. We describe the techniques used to tackle the issues related to one-vs-rest classification, such as multiple outputs and class imbalances. Furthermore, we introduce some heuristics, including the use of a CP-SAT solver for the subproblems, to reduce the training time. Finally, we show that our approach compares favorably with state-of-the-art machine learning algorithms like Linear SVM and small neural networks while adding the needed interpretability component.
