Kenneth Odoh

Connect the dots: Dataset Condensation, Differential Privacy, and Adversarial Uncertainty

Feb 16, 2024

Abstract: Our work focuses on understanding the underlying mechanism of dataset condensation by drawing connections with ($\epsilon$, $\delta$)-differential privacy, where the optimal noise, $\epsilon$, is chosen by adversarial uncertainty \cite{Grining2017}. This lets us answer questions about the inner workings of the dataset condensation procedure. Previous work \cite{dong2022} proved the link between dataset condensation (DC) and ($\epsilon$, $\delta$)-differential privacy. However, existing work on ablating DC leaves it unclear how to obtain a lower-bound estimate of $\epsilon$ that suffices for creating high-fidelity synthetic data. We suggest that adversarial uncertainty is the most appropriate method to achieve an optimal noise level, $\epsilon$. As part of the internal dynamics of dataset condensation, we adopt a satisfactory scheme for noise estimation that guarantees high-fidelity data while providing privacy.
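
For reference, the ($\epsilon$, $\delta$)-differential privacy guarantee mentioned in the abstract is usually stated as follows. This is the standard textbook definition, not a result taken from the paper; the symbols $\mathcal{M}$ (a randomized mechanism), $D$ and $D'$ (datasets differing in one record), and $S$ (a set of outputs) are notation introduced here for illustration.

$$
\Pr[\mathcal{M}(D) \in S] \;\le\; e^{\epsilon} \, \Pr[\mathcal{M}(D') \in S] + \delta
\quad \text{for all neighboring } D, D' \text{ and all measurable } S .
$$

A smaller $\epsilon$ means the output distributions on $D$ and $D'$ are harder to tell apart, and $\delta$ bounds the probability with which the $e^{\epsilon}$ bound may fail.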

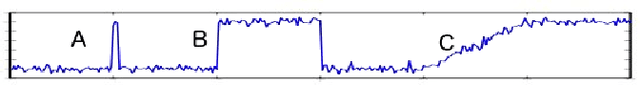

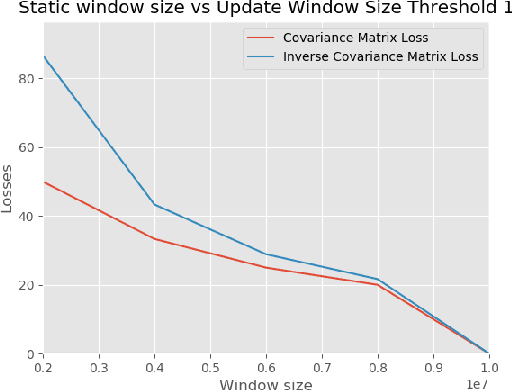

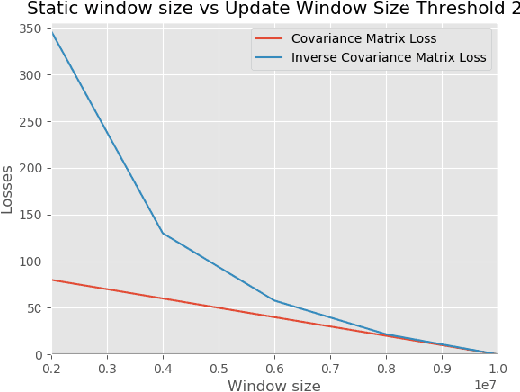

Real-time Anomaly Detection for Multivariate Data Streams

Sep 26, 2022

Abstract: We present a real-time multivariate anomaly detection algorithm for data streams based on the Probabilistic Exponentially Weighted Moving Average (PEWMA). Our formulation is resilient to abrupt transient, abrupt distributional, and gradual distributional shifts in the data. The novel anomaly detection routines use an incremental online algorithm to handle streams. Furthermore, our proposed anomaly detection algorithm works in an unsupervised manner, eliminating the need for labeled examples. Our algorithm performs well and is resilient in the face of concept drift.
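
To make the idea of PEWMA concrete, here is a minimal univariate sketch in Python. It is not the multivariate algorithm from the paper; it assumes the commonly cited scalar PEWMA update, in which the smoothing weight is reduced when the new point is probable under the current estimate. The class name `Pewma` and the parameters `alpha`, `beta`, `warmup`, and `threshold` (with their default values) are hypothetical choices made for illustration.

```python
import math


class Pewma:
    """Univariate PEWMA sketch (illustrative; not the paper's multivariate method)."""

    def __init__(self, alpha=0.98, beta=0.5, warmup=30, threshold=3.0):
        self.alpha = alpha          # base weight on the history (assumed value)
        self.beta = beta            # how strongly probability adjusts alpha (assumed)
        self.warmup = warmup        # points used only to initialise the estimates
        self.threshold = threshold  # flag a point when |z-score| exceeds this
        self.t = 0
        self.s1 = 0.0               # running estimate of E[x]
        self.s2 = 0.0               # running estimate of E[x^2]

    def update(self, x):
        """Consume one observation and return True if it looks anomalous."""
        self.t += 1
        # Standard deviation implied by the estimates from previous points.
        std = math.sqrt(max(self.s2 - self.s1 ** 2, 0.0))
        z = (x - self.s1) / std if std > 0.0 else 0.0

        if self.t <= self.warmup:
            # During warm-up, use a plain running average and never flag.
            alpha_t = 1.0 - 1.0 / self.t
            is_anomaly = False
        else:
            # Standard normal density of the z-score.
            p = math.exp(-0.5 * z * z) / math.sqrt(2.0 * math.pi)
            alpha_t = self.alpha * (1.0 - self.beta * p)
            is_anomaly = abs(z) > self.threshold

        # Incremental (online) update of the first and second moments.
        self.s1 = alpha_t * self.s1 + (1.0 - alpha_t) * x
        self.s2 = alpha_t * self.s2 + (1.0 - alpha_t) * x * x
        return is_anomaly


# Usage: a stable stream around 10 followed by one abrupt spike.
stream = [10 + 0.1 * ((i % 5) - 2) for i in range(100)] + [20.0]
detector = Pewma()
flags = [detector.update(x) for x in stream]
```

Each call to `update` does a constant amount of work and stores only two running moments, which is what makes this kind of detector suitable for streams. No labels are used at any point.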
