Jules Depersin

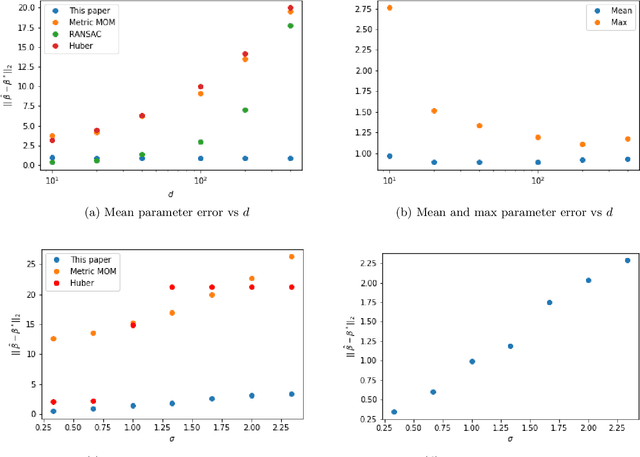

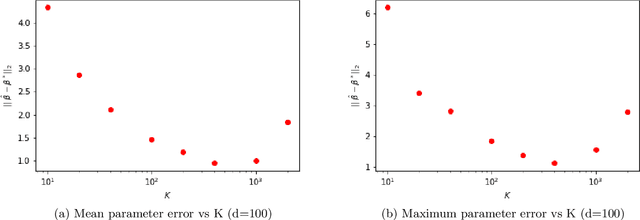

A spectral algorithm for robust regression with subgaussian rates

Jul 12, 2020

Abstract: We study a new algorithm, running in linear up to quadratic time, for linear regression in the absence of strong assumptions on the underlying distributions of samples and in the presence of outliers. The goal is to design a procedure that comes with actual working code and attains the optimal sub-gaussian error bound even though the data have only finite moments (up to $L_4$) and may contain adversarial outliers. A polynomial-time solution to this problem was recently discovered, but its runtime is high because it relies on Sum-of-Squares hierarchy programming. At the core of our algorithm is an adaptation of the spectral method, introduced for the mean estimation problem, to the linear regression problem. As a by-product, we establish a connection between the linear regression problem and the furthest hyperplane problem. From a stochastic point of view, in addition to studying the classical quadratic and multiplier processes, we introduce a third empirical process that arises naturally in the study of the statistical properties of the algorithm.

Robust subgaussian estimation with VC-dimension

Apr 24, 2020

Abstract: Median-of-means (MOM) based procedures provide non-asymptotic and strong deviation bounds even when data are heavy-tailed and/or corrupted. This work proposes a new general way to bound the excess risk for MOM estimators. The core technique is the use of VC-dimension (instead of Rademacher complexity) to measure the statistical complexity. In particular, this allows us to give the first robust estimators for sparse estimation that achieve the so-called subgaussian rate while assuming only a finite second moment for the uncorrupted data. By comparison, previous works using Rademacher complexities required a number of finite moments that grows logarithmically with the dimension. With this technique, we derive new robust subgaussian bounds for mean estimation in any norm. We also derive a new robust estimator for covariance estimation that is the first to achieve subgaussian bounds without $L_4-L_2$ norm equivalence.
