Juergen Bosse

Digital and Robotic Twinning for Validation of Proximity Operations and Formation Flying

Jul 26, 2025

Abstract: In spacecraft Rendezvous, Proximity Operations (RPO), and Formation Flying (FF), the Guidance Navigation and Control (GNC) system is safety-critical and must meet strict performance requirements. However, validating such systems is challenging due to the complexity of the space environment, necessitating a verification and validation (V&V) process that bridges simulation and real-world behavior. The key contribution of this paper is a unified, end-to-end digital and robotic twinning framework that enables software- and hardware-in-the-loop testing for multi-modal GNC systems. The robotic twin includes three testbeds at Stanford's Space Rendezvous Laboratory (SLAB): the GNSS and Radiofrequency Autonomous Navigation Testbed for Distributed Space Systems (GRAND) to validate RF-based navigation techniques, and the Testbed for Rendezvous and Optical Navigation (TRON) and Optical Stimulator (OS) to validate vision-based methods. The test article for this work is an integrated multi-modal GNC software stack for RPO and FF developed at SLAB. This paper introduces the hybrid framework and summarizes calibration and error characterization for the robotic twin. Then, the GNC stack's performance and robustness are characterized using the integrated digital and robotic twinning pipeline for a full-range RPO mission scenario in Low-Earth Orbit (LEO). The results demonstrate consistency between the digital and robotic twins, validating the hybrid twinning pipeline as a reliable framework for realistic assessment and verification of GNC systems.

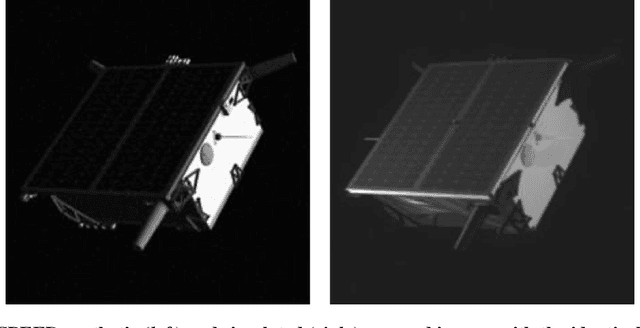

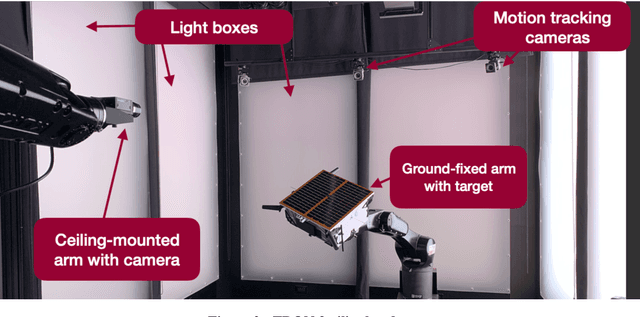

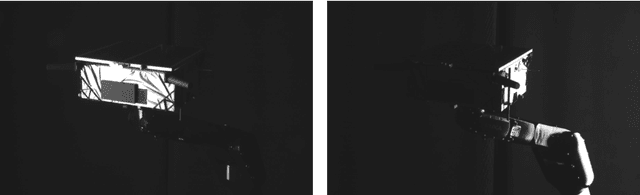

Robotic Testbed for Rendezvous and Optical Navigation: Multi-Source Calibration and Machine Learning Use Cases

Aug 12, 2021

Abstract: This work presents the most recent advances of the Robotic Testbed for Rendezvous and Optical Navigation (TRON) at Stanford University, the first robotic testbed capable of validating machine learning algorithms for spaceborne optical navigation. The TRON facility consists of two 6-degrees-of-freedom KUKA robot arms and a set of Vicon motion-tracking cameras to reconfigure an arbitrary relative pose between a camera and a target mockup model. The facility includes multiple Earth albedo light boxes and a sun lamp to recreate spaceborne illumination conditions with high fidelity. After an overview of the facility, this work details the multi-source calibration procedure, which enables estimation of the relative pose between the object and the camera with millimeter-level position and millidegree-level orientation accuracy. Finally, a comparative analysis of the synthetic and TRON-simulated imagery is performed using a Convolutional Neural Network (CNN) pre-trained on the synthetic images. The result shows a considerable gap in the CNN's performance between the two image sets, suggesting that the TRON-simulated images can be used to validate the robustness of machine learning algorithms trained on more easily accessible synthetic imagery from computer graphics.