Joshua M. Pearce

Synthetic-to-real Composite Semantic Segmentation in Additive Manufacturing

Oct 14, 2022

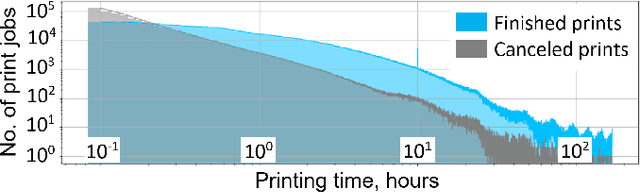

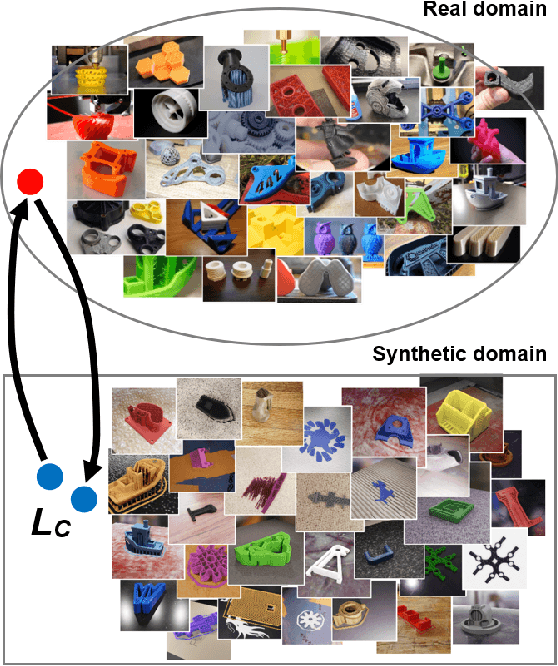

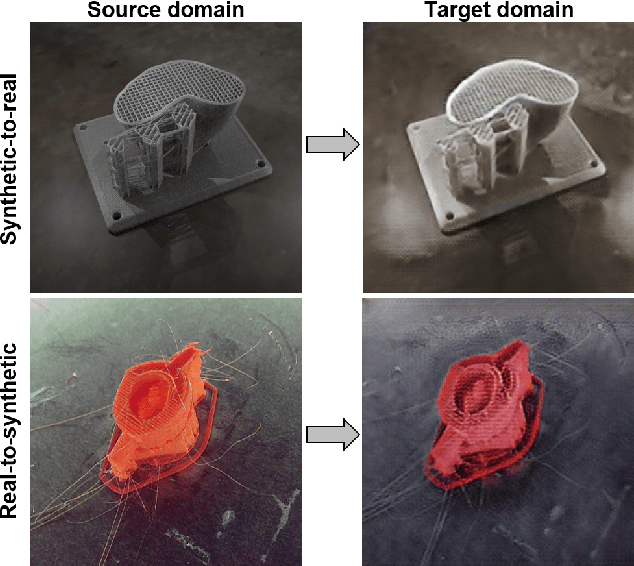

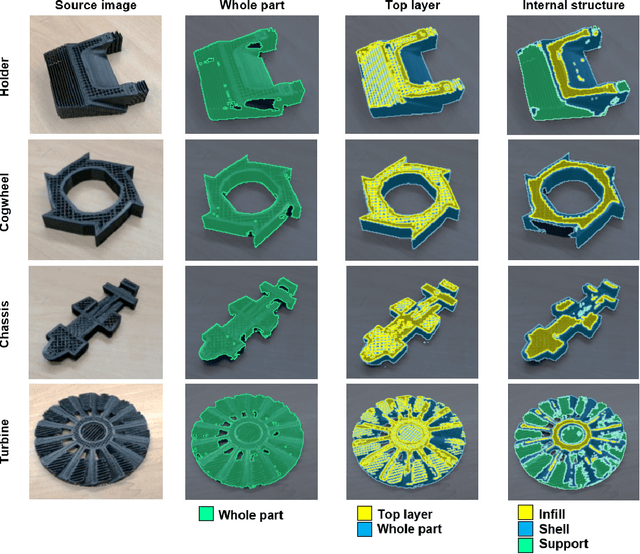

Abstract: The application of computer vision and machine learning methods in additive manufacturing (AM) for semantic segmentation of the structural elements of 3-D printed products can improve real-time failure analysis systems and potentially reduce the number of defects by enabling in situ corrections. This work demonstrates the possibilities of using physics-based rendering for labeled image dataset generation, as well as image-to-image translation capabilities to improve the accuracy of real image segmentation for AM systems. Multi-class semantic segmentation experiments were carried out based on the U-Net model and a cycle generative adversarial network. The test results demonstrated the capacity to detect such structural elements of 3-D printed parts as the top layer, infill, shell, and support. A basis for further segmentation system enhancement by utilizing image-to-image style transfer and domain adaptation technologies was also developed. The results indicate that using style transfer as a precursor to domain adaptation can significantly improve real 3-D printing image segmentation in situations where a model trained on synthetic data is the only tool available. The mean intersection over union (mIoU) scores for synthetic test datasets were 94.90% for the entire 3-D printed part, 73.33% for the top layer, 78.93% for the infill, 55.31% for the shell, and 69.45% for supports.
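
The intersection over union metric quoted in the abstract above can be illustrated with a minimal sketch. It assumes that predictions and ground truth are 2-D maps of integer class ids; the class numbering, the function names, and the averaging over classes are illustrative assumptions, not the paper's evaluation protocol, which is not described here.

```python
from typing import Dict, Sequence

LabelMap = Sequence[Sequence[int]]  # 2-D grid of integer class ids (assumed encoding)


def per_class_iou(pred: LabelMap, target: LabelMap, num_classes: int) -> Dict[int, float]:
    """Return IoU for every class that occurs in the prediction or the target."""
    intersection = [0] * num_classes
    union = [0] * num_classes
    for pred_row, target_row in zip(pred, target):
        for p, t in zip(pred_row, target_row):
            if p == t:
                # Pixel belongs to both the predicted and the true region of class p.
                intersection[p] += 1
                union[p] += 1
            else:
                # Pixel belongs to the union of class p (predicted) and class t (true).
                union[p] += 1
                union[t] += 1
    return {c: intersection[c] / union[c] for c in range(num_classes) if union[c] > 0}


def mean_iou(pred: LabelMap, target: LabelMap, num_classes: int) -> float:
    """Average the per-class IoU values; classes absent from both maps are skipped."""
    ious = per_class_iou(pred, target, num_classes)
    return sum(ious.values()) / len(ious) if ious else 0.0


# Hypothetical 2x2 example with three classes.
example_pred = [[0, 1], [1, 2]]
example_target = [[0, 1], [2, 2]]
example_ious = per_class_iou(example_pred, example_target, num_classes=3)
example_miou = mean_iou(example_pred, example_target, num_classes=3)
```

In this toy example one pixel is predicted as class 1 where the ground truth is class 2, so both of those classes score 0.5 while class 0 scores 1.0.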

Towards Smart Monitored AM: Open Source in-Situ Layer-wise 3D Printing Image Anomaly Detection Using Histograms of Oriented Gradients and a Physics-Based Rendering Engine

Nov 04, 2021

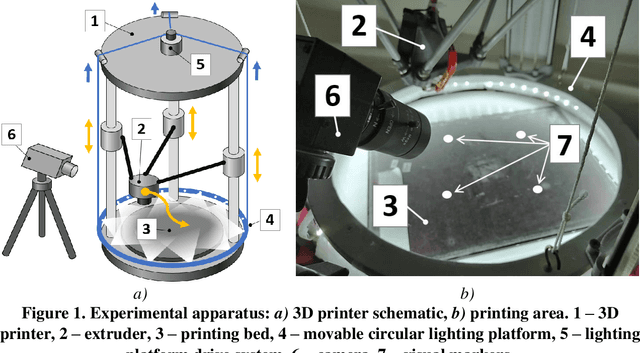

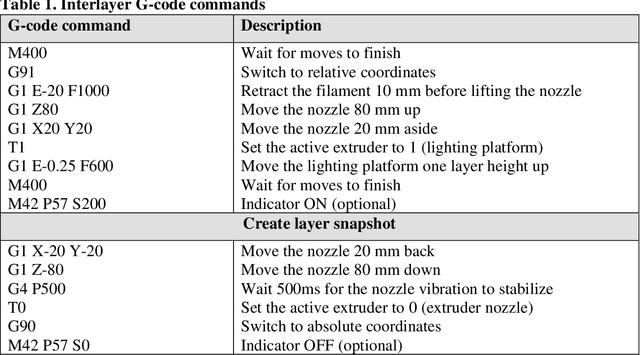

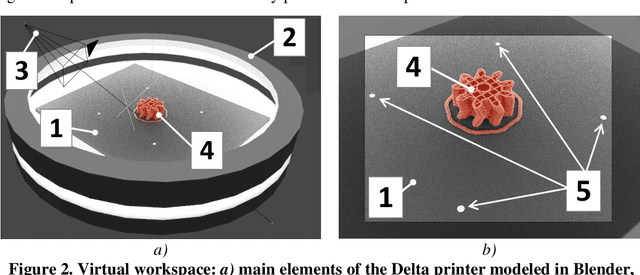

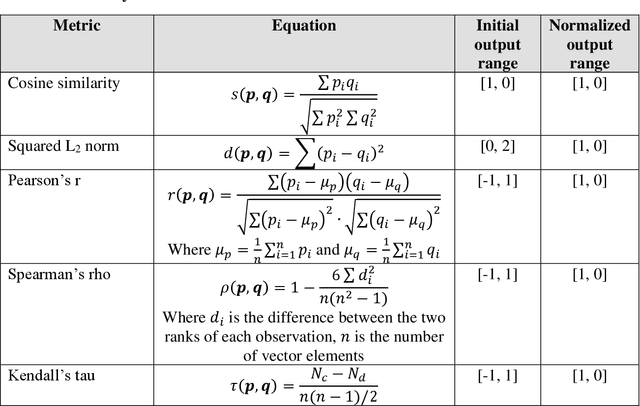

Abstract: This study presents an open source method for detecting 3D printing anomalies by comparing images of printed layers from a stationary monocular camera with G-code-based reference images of an ideal process generated with Blender, a physics-based rendering engine. Recognition of visual deviations was accomplished by analyzing the similarity of histograms of oriented gradients (HOG) of local image areas. The developed technique requires preliminary modeling of the working environment to achieve the best match for orientation, color rendering, lighting, and other parameters of the printed part. The output of the algorithm is a level of mismatch between printed and synthetic reference layers. Twelve similarity and distance measures were implemented and compared for their effectiveness at detecting 3D printing errors on six different representative failure types and their control error-free print images. The results show that although Kendall tau, Jaccard, and Sorensen similarities are the most sensitive, Pearson r, Spearman rho, cosine, and Dice similarities produce more reliable results. This open source method allows the program to notice critical errors in the early stages of their occurrence and either pause manufacturing processes for further investigation by an operator or, in the future, trigger AI-controlled automatic error correction. The implementation of this novel method does not require preliminary data for training, and the greatest efficiency can be achieved with the mass production of parts by either additive or subtractive manufacturing of the same geometric shape. It can be concluded that this open source method is a promising means of enabling smart distributed recycling for additive manufacturing using complex feedstocks as well as other challenging manufacturing environments.
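
The comparison of local HOG descriptors described above might be sketched as follows. This is a simplified illustration rather than the published implementation: the single-cell histogram, the 9 orientation bins, the central-difference gradients, and all function names are assumptions, and only two of the twelve measures mentioned (cosine and Pearson r) are shown.

```python
import math
from typing import List, Sequence

Patch = Sequence[Sequence[float]]  # grayscale image cell, row-major


def hog_cell(patch: Patch, bins: int = 9) -> List[float]:
    """Magnitude-weighted histogram of unsigned gradient orientations (0 to 180 degrees)."""
    hist = [0.0] * bins
    bin_width = 180.0 / bins
    for y in range(1, len(patch) - 1):
        for x in range(1, len(patch[0]) - 1):
            gx = patch[y][x + 1] - patch[y][x - 1]  # central difference in x
            gy = patch[y + 1][x] - patch[y - 1][x]  # central difference in y
            angle = math.degrees(math.atan2(gy, gx)) % 180.0
            hist[int(angle / bin_width) % bins] += math.hypot(gx, gy)
    return hist


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine of the angle between two histograms; 0.0 if either is all zeros."""
    norm = math.sqrt(sum(v * v for v in a)) * math.sqrt(sum(v * v for v in b))
    return sum(u * v for u, v in zip(a, b)) / norm if norm else 0.0


def pearson_r(a: Sequence[float], b: Sequence[float]) -> float:
    """Pearson correlation coefficient; 0.0 if either histogram is constant."""
    mean_a, mean_b = sum(a) / len(a), sum(b) / len(b)
    cov = sum((u - mean_a) * (v - mean_b) for u, v in zip(a, b))
    var = sum((u - mean_a) ** 2 for u in a) * sum((v - mean_b) ** 2 for v in b)
    return cov / math.sqrt(var) if var else 0.0


# Hypothetical cells: the rendered reference shows a vertical edge,
# while the camera image shows a horizontal edge at the same location.
reference_cell = [[0, 0, 10, 10]] * 4
printed_cell = [[0] * 4, [0] * 4, [10] * 4, [10] * 4]

mismatch = 1.0 - cosine_similarity(hog_cell(reference_cell), hog_cell(printed_cell))
```

A mismatch near 0 means the printed cell resembles the reference; here the two edges are perpendicular, so the cosine-based mismatch reaches 1. How a mismatch level would be thresholded to pause a print is not specified in the abstract and is left open here.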

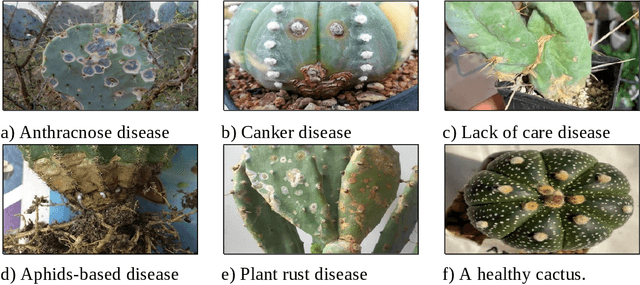

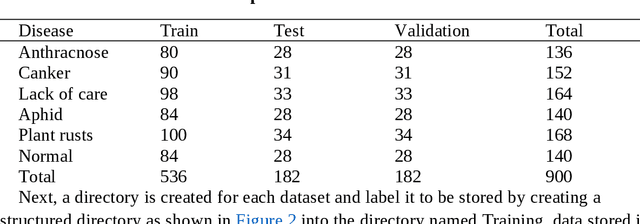

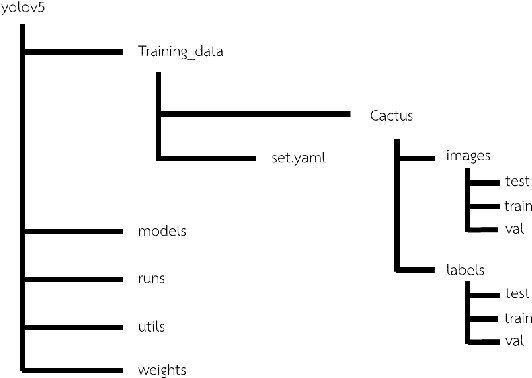

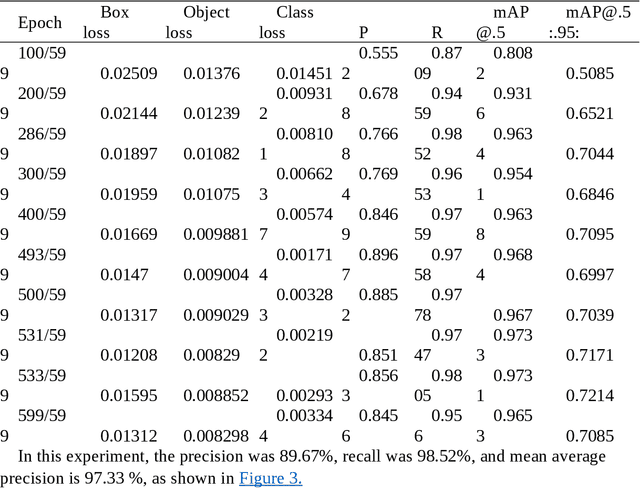

Open source disease analysis system of cactus by artificial intelligence and image processing

Jun 07, 2021

Abstract: There is a growing interest in cactus cultivation because of the numerous uses of cacti, from houseplants to food and medicinal applications. Various diseases impact the growth of cacti. The aim of this study is to develop an automated model for the analysis of cactus disease so that damage to the cactus can be treated quickly and prevented. The Faster R-CNN and YOLO algorithms were used to automatically classify cactus diseases into six groups: 1) anthracnose, 2) canker, 3) lack of care, 4) aphid, 5) rusts and 6) normal group. Based on the experimental results, the YOLOv5 algorithm was found to be more effective at detecting and identifying cactus disease than the Faster R-CNN algorithm. Data training and testing with the YOLOv5S model resulted in a precision of 89.7% and an accuracy (recall) of 98.5%, which is effective enough for further use in a number of applications in cactus cultivation. Overall, the YOLOv5 algorithm had a test time per image of only 26 milliseconds. Therefore, the YOLOv5 algorithm was found to be suitable for mobile applications, and this model could be further developed into a program for analyzing cactus disease.
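
For readers unfamiliar with the detection metrics quoted above, the following sketch shows how precision and recall are computed from detection counts and how the two can be combined into an F1 score. The function names are illustrative, and the F1 value is derived here from the two reported percentages; it is not a figure reported in the abstract.

```python
def precision(true_positives: int, false_positives: int) -> float:
    """Fraction of detections that are correct."""
    total = true_positives + false_positives
    return true_positives / total if total else 0.0


def recall(true_positives: int, false_negatives: int) -> float:
    """Fraction of ground-truth objects that were detected."""
    total = true_positives + false_negatives
    return true_positives / total if total else 0.0


def f1_score(p: float, r: float) -> float:
    """Harmonic mean of precision and recall."""
    return 2.0 * p * r / (p + r) if (p + r) else 0.0


# Precision and recall as reported in the abstract for the YOLOv5S model.
reported_precision = 0.897
reported_recall = 0.985
derived_f1 = f1_score(reported_precision, reported_recall)
```

With the reported values, the derived F1 score works out to roughly 0.94.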

Open Source 3-D Filament Diameter Sensor for Recycling, Winding and Additive Manufacturing Machines

Dec 01, 2020

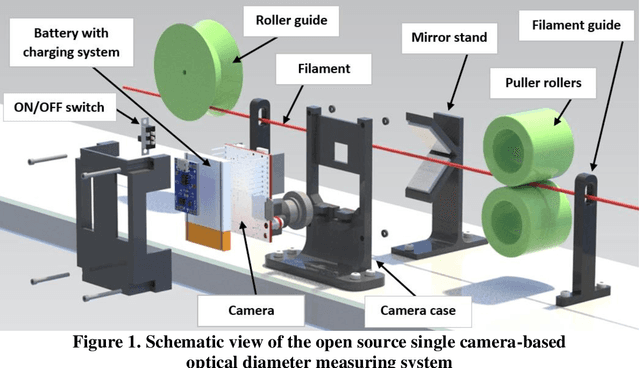

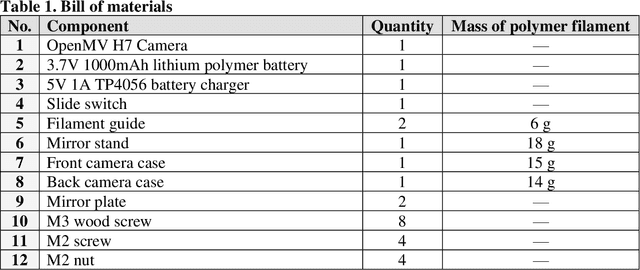

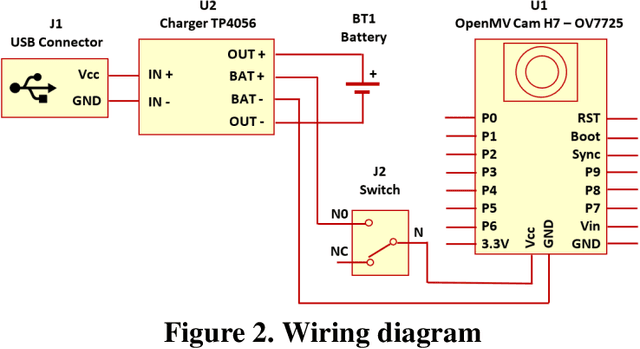

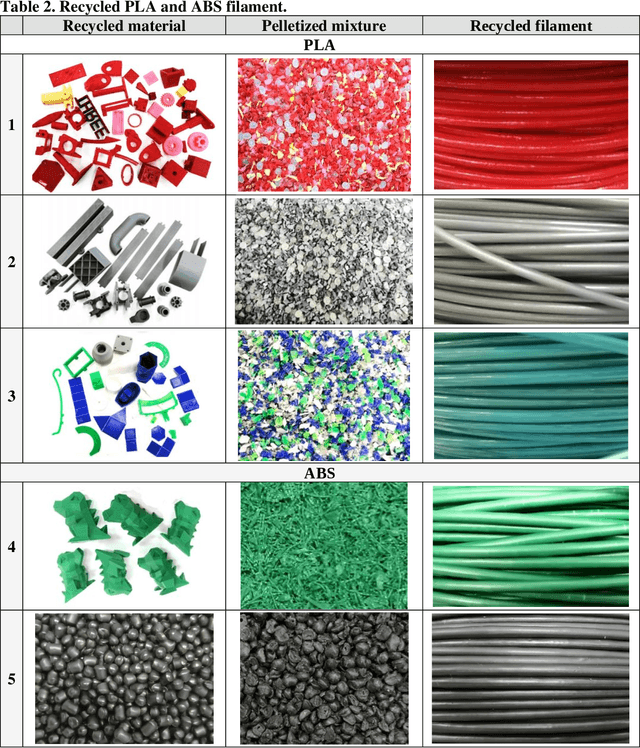

Abstract: To overcome the challenge of upcycling plastic waste into 3-D printing filament in distributed recycling and additive manufacturing systems, this study designs, builds, tests and validates an open source 3-D filament diameter sensor for recycling and winding machines. The modular system for multi-axis optical control of the diameter of the recycled 3-D printer filament makes it possible to analyze the surface structure of the processed filament, save the history of measurements along the entire length of the spool, and mark defective areas. The sensor is developed as an independent module and integrated into a recyclebot. The diameter sensor was tested on different kinds of polymers (ABS, PLA), different sources of plastic (recycled 3-D prints and virgin plastic waste), and different colors, including clear plastic. The results of the diameter measurements using the camera were compared with manual measurements and with measurements obtained with a one-dimensional digital light caliper. The results show that the developed open source filament sensing method allows users to obtain significantly more information than basic one-dimensional light sensors and to use the received data not only for more accurate diameter measurements, but also for a detailed analysis of the recycled filament surface. The developed method ensures greater availability of plastics recycling technologies for the manufacturing community and stimulates the growth of composite materials creation. The presented system can greatly expand what users can do and serve as a starting point for a complete recycling control system that will regulate motor parameters to achieve the desired filament diameter with acceptable deviations and even control the extrusion rate on a printer to recover from filament irregularities.
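
The idea of measuring diameter optically and marking defective areas along the spool could be sketched as below. This is a hypothetical simplification, not the sensor's actual algorithm: it assumes a backlit silhouette in which the filament appears darker than the background along one camera scanline, a known calibration factor `mm_per_pixel`, and a fixed tolerance around a nominal diameter.

```python
from typing import List, Sequence


def diameter_mm(profile: Sequence[int], threshold: int, mm_per_pixel: float) -> float:
    """Estimate diameter as the longest run of below-threshold (dark) pixels in a scanline."""
    best = run = 0
    for value in profile:
        run = run + 1 if value < threshold else 0
        best = max(best, run)
    return best * mm_per_pixel


def flag_defects(diameters: Sequence[float], nominal: float, tolerance: float) -> List[int]:
    """Return indices of measurements that deviate from nominal by more than the tolerance."""
    return [i for i, d in enumerate(diameters) if abs(d - nominal) > tolerance]


# Hypothetical scanline: bright background, 7 dark filament pixels, bright background.
scanline = [255] * 3 + [20] * 7 + [255] * 3
estimate = diameter_mm(scanline, threshold=128, mm_per_pixel=0.25)

# Hypothetical measurement history along the spool, in millimeters.
history = [1.75, 1.74, 1.60, 1.76]
defective = flag_defects(history, nominal=1.75, tolerance=0.05)
```

Keeping the full measurement history, as the abstract describes, is what allows defective positions to be looked up later; in this sketch the list index stands in for position along the spool.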

Open Source Computer Vision-based Layer-wise 3D Printing Analysis

Mar 12, 2020

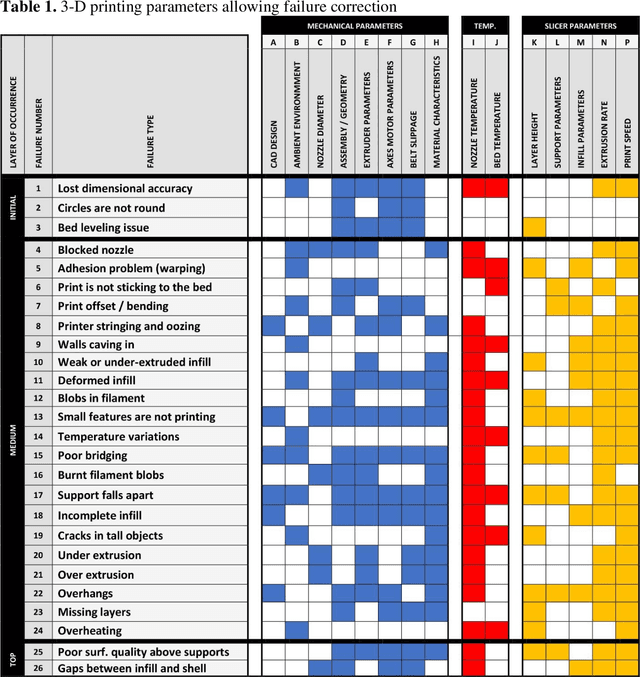

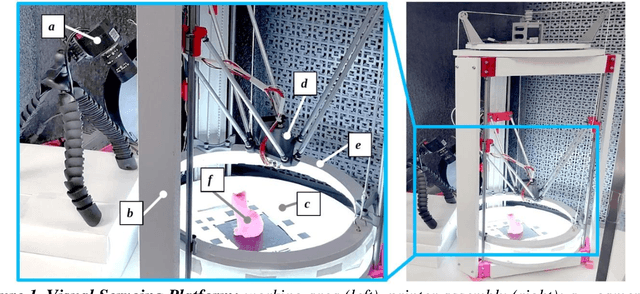

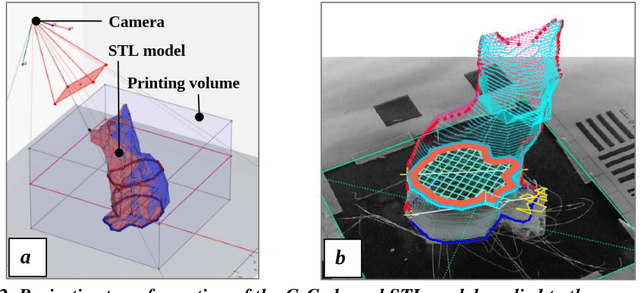

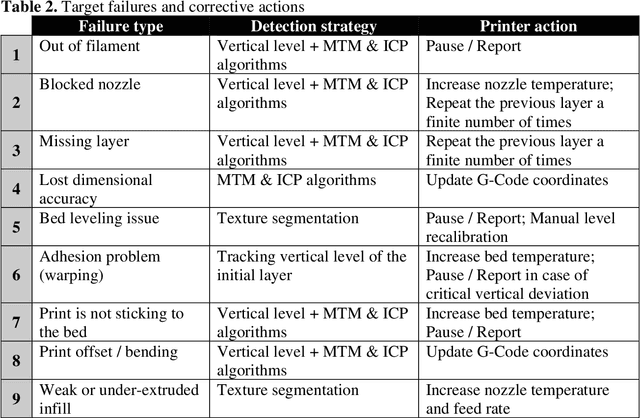

Abstract: The paper describes an open source computer vision-based hardware structure and software algorithm, which analyzes 3-D printing processes layer-wise, tracks printing errors, and generates appropriate printer actions to improve reliability. This approach is built upon multiple-stage monocular image examination, which allows monitoring of both the external shape of the printed object and the internal structure of its layers. Starting with the side-view height validation, the developed program analyzes the virtual top view for outer shell contour correspondence using the multi-template matching and iterative closest point algorithms, as well as for inner layer texture quality, clustering the spatial-frequency filter responses with Gaussian mixture models and segmenting structural anomalies with the agglomerative hierarchical clustering algorithm. This allows evaluation of both global and local parameters of the printing modes. The experimentally verified analysis time per layer is less than one minute, which can be considered a quasi-real-time process for large prints. The system can work as an intelligent printing suspension tool designed to save time and material. More broadly, the results show the algorithm provides a means to systematize in situ printing data as a first step in a fully open source failure correction algorithm for additive manufacturing.
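
The first stage named above, side-view height validation, might look like the following minimal sketch. It assumes that the expected part height is the layer count times a constant layer height and that a measured height in millimeters has already been extracted from the side-view image; the names, the tolerance, and the pass/fail logic are illustrative assumptions rather than the paper's implementation.

```python
from dataclasses import dataclass


@dataclass
class HeightCheck:
    expected_mm: float
    measured_mm: float
    deviation_mm: float
    ok: bool


def validate_height(layer_number: int, layer_height_mm: float,
                    measured_mm: float, tolerance_mm: float) -> HeightCheck:
    """Compare the measured part height with the height implied by the layer count."""
    expected = layer_number * layer_height_mm
    deviation = measured_mm - expected
    return HeightCheck(expected, measured_mm, deviation, abs(deviation) <= tolerance_mm)


# Hypothetical print: layer 50 at 0.2 mm per layer should be about 10 mm tall.
good = validate_height(50, 0.2, measured_mm=10.1, tolerance_mm=0.3)
bad = validate_height(50, 0.2, measured_mm=9.4, tolerance_mm=0.3)
```

A failed check such as `bad` would be the kind of result that lets a monitoring system suspend the print before more time and material are spent.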
