Joao Paixao

GSVD for Geometry-Grounded Dataset Comparison: An Alignment Angle Is All You Need

Mar 10, 2026

Abstract: Geometry-grounded learning asks models to respect structure in the problem domain rather than treating observations as arbitrary vectors. Motivated by this view, we revisit a classical but underused primitive for comparing datasets: linear relations between two data matrices, expressed via the co-span constraint $Ax = By = z$ in a shared ambient space. To operationalize this comparison, we use the generalized singular value decomposition (GSVD) as a joint coordinate system for two subspaces. In particular, we exploit the GSVD form $A = HCU$, $B = HSV$ with $C^{\top}C + S^{\top}S = I$, which separates shared versus dataset-specific directions through the diagonal structure of $(C, S)$. From these factors we derive an interpretable *angle score* $\theta(z) \in [0, \pi/2]$ for a sample $z$, quantifying whether $z$ is explained relatively more by $A$, more by $B$, or comparably by both. The primary role of $\theta(z)$ is as a *per-sample geometric diagnostic*. We illustrate the behavior of the score on MNIST through angle distributions and representative GSVD directions. A binary classifier derived from $\theta(z)$ is presented as an illustrative application of the score as an interpretable diagnostic tool.
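The factorization described in the abstract can be sketched in NumPy. This is only an illustration under stated assumptions, not the paper's implementation: the columns of `A` and `B` are taken to be samples in a shared ambient space of dimension `d`, each dataset is assumed to have at least `d` samples, and the stacked matrix is assumed to have full column rank. The function names `gsvd_factors` and `angle_score` are hypothetical, and the per-sample score shown here (an arctangent of the `S`-weighted versus `C`-weighted coordinate norms) is one plausible reading of an angle in $[0, \pi/2]$; the abstract does not give the exact definition of $\theta(z)$.

```python
import numpy as np

def gsvd_factors(A, B):
    """Return H, c, s with A A^T = H diag(c^2) H^T and B B^T = H diag(s^2) H^T.

    Assumes A is (d, n_a) and B is (d, n_b) with n_a >= d, n_b >= d,
    and that the stacked matrix [A^T; B^T] has full column rank.
    """
    n_a = A.shape[1]
    M = np.vstack([A.T, B.T])          # (n_a + n_b, d)
    Q, R = np.linalg.qr(M)             # reduced QR: Q is (n_a + n_b, d)
    Q1 = Q[:n_a]                       # block of Q belonging to A^T
    # SVD of the top block yields the cosines; the sines follow from
    # Q1^T Q1 + Q2^T Q2 = I.
    _, c, Wt = np.linalg.svd(Q1, full_matrices=False)
    c = np.clip(c, 0.0, 1.0)
    s = np.sqrt(1.0 - c**2)
    H = R.T @ Wt.T                     # shared left factor, (d, d)
    return H, c, s

def angle_score(z, H, c, s):
    """Hypothetical per-sample angle in [0, pi/2] (not the paper's definition).

    Values near 0 mean z lies mostly along directions dominated by A,
    values near pi/2 mean directions dominated by B.
    """
    g = np.linalg.solve(H, z)          # coordinates of z in the H basis
    return np.arctan2(np.linalg.norm(s * g), np.linalg.norm(c * g))

# Small synthetic demo (random data, purely illustrative).
rng = np.random.default_rng(0)
A = rng.normal(size=(5, 20))
B = rng.normal(size=(5, 30))
H, c, s = gsvd_factors(A, B)
theta = angle_score(A[:, 0], H, c, s)
```

Computing the factors through a QR decomposition of the stacked matrix followed by an SVD of one block avoids relying on a dedicated GSVD routine, which NumPy does not provide.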

Vector Field Neural Networks

May 16, 2019

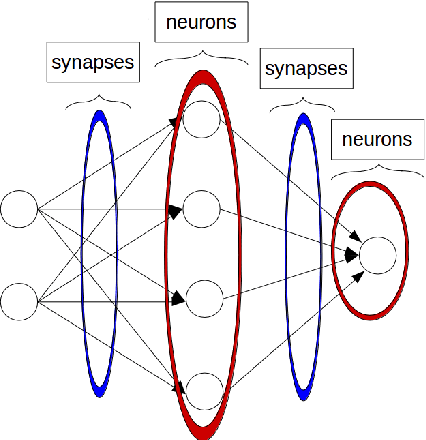

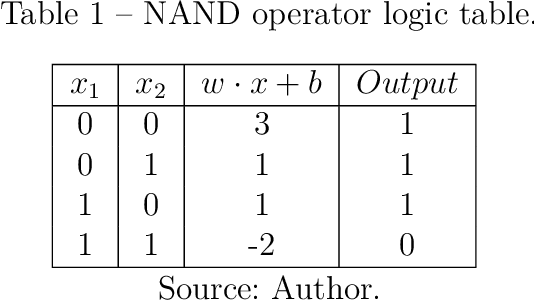

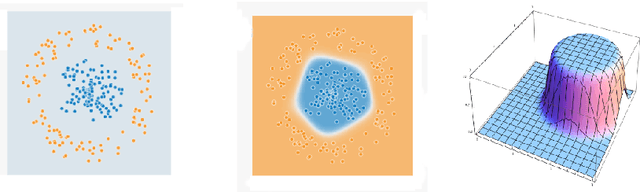

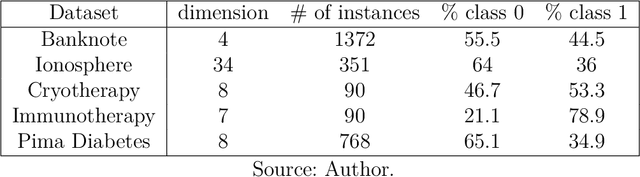

Abstract: This work begins by formalizing the mathematical relationship between different geometrical interpretations of Neural Networks, which is its first contribution. From this starting point, a new interpretation is explored, using the idea of implicit vector fields moving data as particles in a flow. A new architecture, Vector Field Neural Networks (VFNN), is proposed based on this interpretation, with the vector field becoming explicit. A specific implementation of the VFNN, using Euler's method to solve ordinary differential equations (ODEs) and Gaussian vector fields, is tested. The first experiments present visual results highlighting the important features of the new architecture and provide a second contribution: a geometrically interpretable regularization of model parameters. Then, the new architecture is evaluated for different hyperparameters and inputs, with the objective of measuring their influence on model performance, computational time, and complexity. The VFNN model is compared against the well-known baseline models Naive Bayes, Feed Forward Neural Networks, and Support Vector Machines (SVM), showing comparable or better results on different datasets. Finally, the conclusion raises new questions and ideas for improving the model's performance.
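The idea of moving data points along a Gaussian vector field with Euler's method can be sketched as follows. This is a minimal illustration, not the authors' implementation: the field is assumed to be a sum of isotropic Gaussian bumps, each carrying a direction vector, and the names `gaussian_field`, `euler_flow`, `centers`, `weights`, `sigma`, `step`, and `n_steps` are all hypothetical. Training of the parameters is omitted.

```python
import numpy as np

def gaussian_field(X, centers, weights, sigma=1.0):
    """Evaluate an assumed field V(x) = sum_k w_k * exp(-||x - mu_k||^2 / (2 sigma^2)).

    X: (n, d) points, centers: (k, d) bump locations mu_k,
    weights: (k, d) direction vectors w_k. Returns (n, d) velocities.
    """
    diff = X[:, None, :] - centers[None, :, :]     # (n, k, d)
    sq_dist = np.sum(diff**2, axis=-1)             # (n, k)
    phi = np.exp(-sq_dist / (2.0 * sigma**2))      # Gaussian activations
    return phi @ weights                           # (n, d)

def euler_flow(X, centers, weights, sigma=1.0, step=0.1, n_steps=10):
    """Move each point along the field with explicit Euler steps."""
    X = np.array(X, dtype=float)
    for _ in range(n_steps):
        X = X + step * gaussian_field(X, centers, weights, sigma)
    return X

# One bump at the origin pushing points in the +x direction.
centers = np.array([[0.0, 0.0]])
weights = np.array([[1.0, 0.0]])
points = np.array([[0.0, 0.0], [5.0, 5.0]])
moved = euler_flow(points, centers, weights, step=0.1, n_steps=1)
```

In this demo the point sitting on the bump is displaced by roughly one step length along the bump's direction, while the distant point barely moves, since the Gaussian activation decays with distance from the center.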

Vector Field Based Neural Networks

Feb 22, 2018

Abstract: A novel Neural Network architecture is proposed using the mathematically and physically rich idea of vector fields as hidden layers to perform nonlinear transformations on the data. The data points are interpreted as particles moving along a flow defined by the vector field, which intuitively represents the movement needed to enable classification. The architecture moves the data points from their original configuration to a new one following the streamlines of the vector field, with the objective of reaching a final configuration where the classes are separable. An optimization problem is solved through gradient descent to learn this vector field.
