Jim Weatherall

A Decision-Theoretic Formalisation of Steganography With Applications to LLM Monitoring

Feb 26, 2026Abstract:Large language models are beginning to show steganographic capabilities. Such capabilities could allow misaligned models to evade oversight mechanisms. Yet principled methods to detect and quantify such behaviours are lacking. Classical definitions of steganography, and detection methods based on them, require a known reference distribution of non-steganographic signals. For the case of steganographic reasoning in LLMs, knowing such a reference distribution is not feasible; this renders these approaches inapplicable. We propose an alternative, \textbf{decision-theoretic view of steganography}. Our central insight is that steganography creates an asymmetry in usable information between agents who can and cannot decode the hidden content (present within a steganographic signal), and this otherwise latent asymmetry can be inferred from the agents' observable actions. To formalise this perspective, we introduce generalised $\mathcal{V}$-information: a utilitarian framework for measuring the amount of usable information within some input. We use this to define the \textbf{steganographic gap} -- a measure that quantifies steganography by comparing the downstream utility of the steganographic signal to agents that can and cannot decode the hidden content. We empirically validate our formalism, and show that it can be used to detect, quantify, and mitigate steganographic reasoning in LLMs.

Revolutionizing Clinical Trials: A Manifesto for AI-Driven Transformation

Jun 10, 2025Abstract:This manifesto represents a collaborative vision forged by leaders in pharmaceuticals, consulting firms, clinical research, and AI. It outlines a roadmap for two AI technologies - causal inference and digital twins - to transform clinical trials, delivering faster, safer, and more personalized outcomes for patients. By focusing on actionable integration within existing regulatory frameworks, we propose a way forward to revolutionize clinical research and redefine the gold standard for clinical trials using AI.

TrialGraph: Machine Intelligence Enabled Insight from Graph Modelling of Clinical Trials

Dec 15, 2021

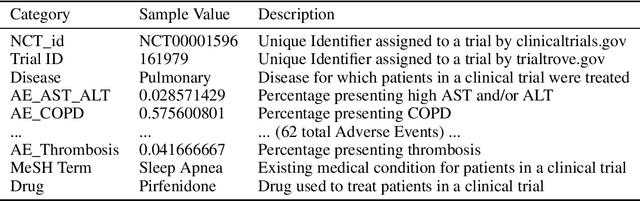

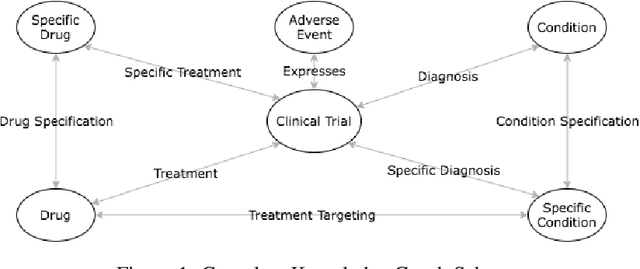

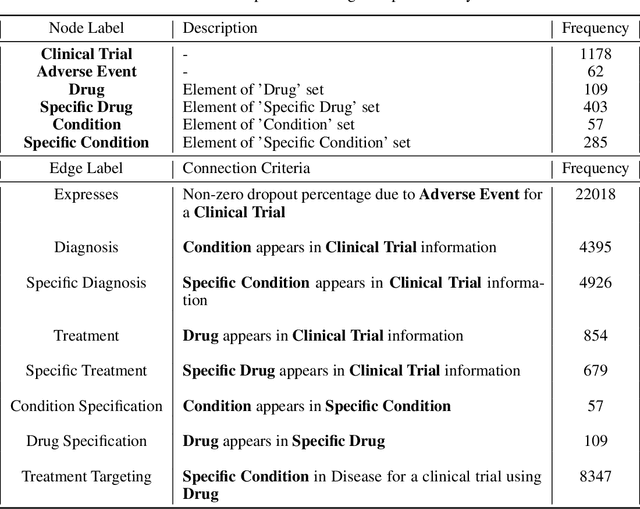

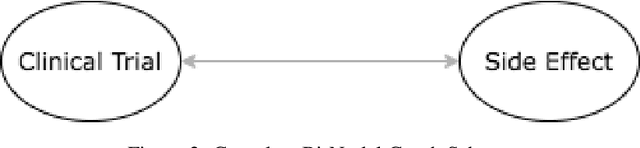

Abstract:A major impediment to successful drug development is the complexity, cost, and scale of clinical trials. The detailed internal structure of clinical trial data can make conventional optimization difficult to achieve. Recent advances in machine learning, specifically graph-structured data analysis, have the potential to enable significant progress in improving the clinical trial design. TrialGraph seeks to apply these methodologies to produce a proof-of-concept framework for developing models which can aid drug development and benefit patients. In this work, we first introduce a curated clinical trial data set compiled from the CT.gov, AACT and TrialTrove databases (n=1191 trials; representing one million patients) and describe the conversion of this data to graph-structured formats. We then detail the mathematical basis and implementation of a selection of graph machine learning algorithms, which typically use standard machine classifiers on graph data embedded in a low-dimensional feature space. We trained these models to predict side effect information for a clinical trial given information on the disease, existing medical conditions, and treatment. The MetaPath2Vec algorithm performed exceptionally well, with standard Logistic Regression, Decision Tree, Random Forest, Support Vector, and Neural Network classifiers exhibiting typical ROC-AUC scores of 0.85, 0.68, 0.86, 0.80, and 0.77, respectively. Remarkably, the best performing classifiers could only produce typical ROC-AUC scores of 0.70 when trained on equivalent array-structured data. Our work demonstrates that graph modelling can significantly improve prediction accuracy on appropriate datasets. Successive versions of the project that refine modelling assumptions and incorporate more data types can produce excellent predictors with real-world applications in drug development.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge