Jeena Kleenankandy

Department of Computer Science and Engineering, National Institute of Technology Calicut, Kerala, India

Recognizing semantic relation in sentence pairs using Tree-RNNs and Typed dependencies

Jan 13, 2022

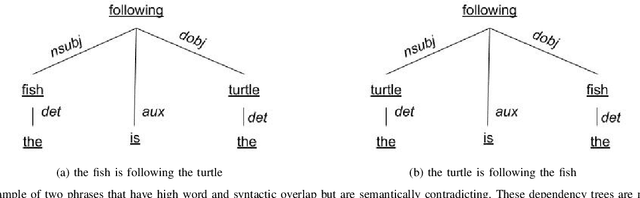

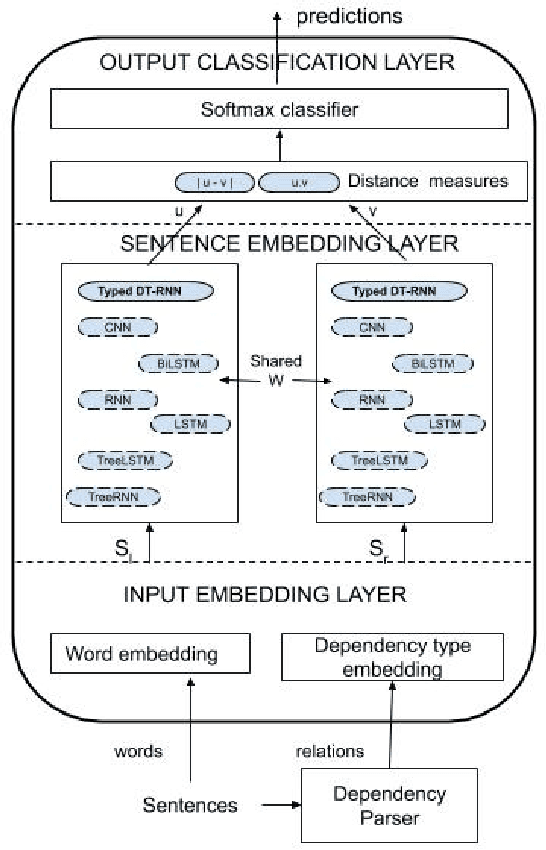

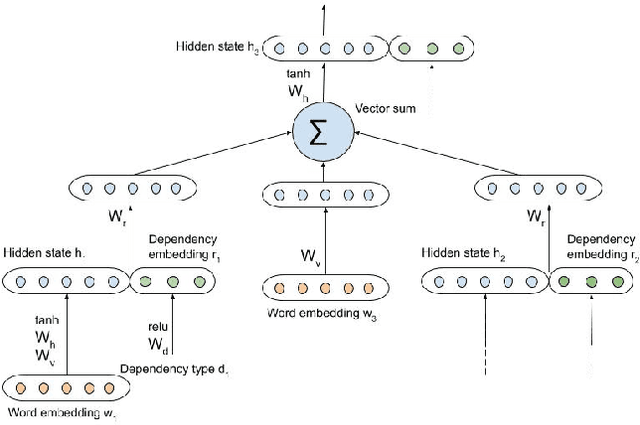

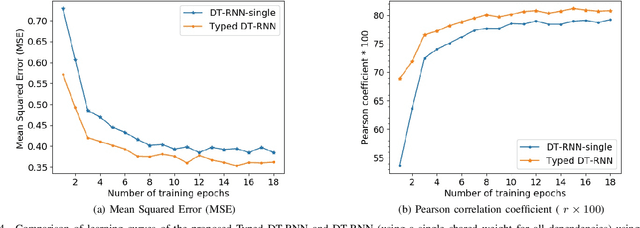

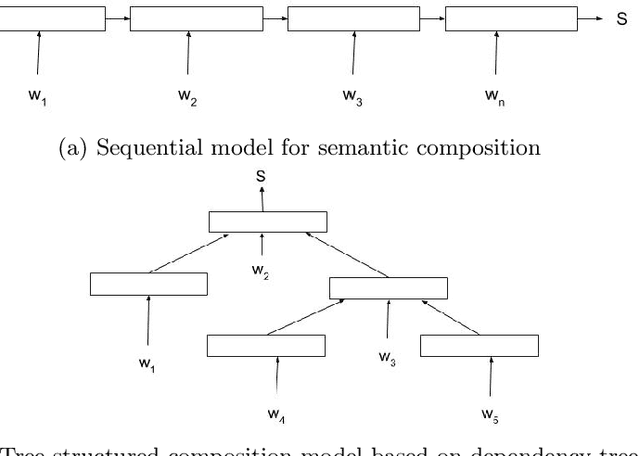

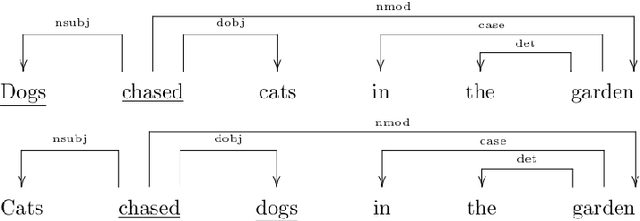

Abstract: Recursive neural networks (Tree-RNNs) based on dependency trees are ubiquitous in modeling sentence meaning, as they effectively capture semantic relationships between words that are not adjacent in the sentence. However, recognizing semantically dissimilar sentences that share the same words and syntax remains a challenge for Tree-RNNs. This work proposes an improvement to the Dependency Tree-RNN (DT-RNN) that uses the grammatical relationship type identified in the dependency parse. Our experiments on semantic relatedness scoring (SRS) and recognizing textual entailment (RTE) in sentence pairs from the SICK (Sentences Involving Compositional Knowledge) dataset show encouraging results. The model achieved a 2% improvement in classification accuracy over the DT-RNN model on the RTE task. The Pearson's and Spearman's correlations between the model's predicted similarity scores and human ratings are also higher than those of standard DT-RNNs.
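The relatedness results are reported as Pearson's and Spearman's correlations between predicted scores and human ratings. As a minimal illustration of how these two measures are computed (standard textbook definitions, not code from the paper), here is a plain-Python sketch; the function names and the sample scores are hypothetical.

```python
import math
from typing import List, Sequence


def pearson(x: Sequence[float], y: Sequence[float]) -> float:
    """Pearson's r: covariance divided by the product of standard deviations."""
    n = len(x)
    if n != len(y) or n < 2:
        raise ValueError("need two sequences of equal length >= 2")
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = math.sqrt(sum((a - mx) ** 2 for a in x))
    sy = math.sqrt(sum((b - my) ** 2 for b in y))
    return cov / (sx * sy)


def ranks(values: Sequence[float]) -> List[float]:
    """1-based ranks; tied values share the mean of their positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    result = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        # Extend j over the run of values tied with values[order[i]].
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        avg = (i + j) / 2 + 1  # mean of 1-based positions i+1 .. j+1
        for k in range(i, j + 1):
            result[order[k]] = avg
        i = j + 1
    return result


def spearman(x: Sequence[float], y: Sequence[float]) -> float:
    """Spearman's rho: Pearson's r computed on the ranks."""
    return pearson(ranks(x), ranks(y))


# Hypothetical predicted scores and human ratings for four sentence pairs.
pred = [4.5, 3.2, 1.1, 2.8]
gold = [4.8, 3.6, 1.0, 2.5]
print("Pearson:", pearson(pred, gold))
print("Spearman:", spearman(pred, gold))
```

In this toy sample both lists order the four pairs identically, so Spearman's rho is (up to floating-point rounding) 1.0, while Pearson's r is slightly below 1 because the gaps between scores are not perfectly linear. Pearson rewards matching the actual score values; Spearman only rewards matching their order.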

An enhanced Tree-LSTM architecture for sentence semantic modeling using typed dependencies

Feb 18, 2020

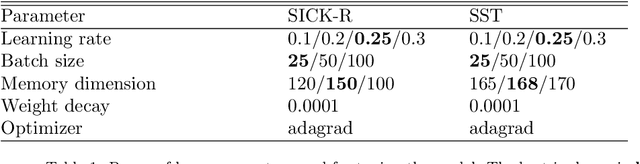

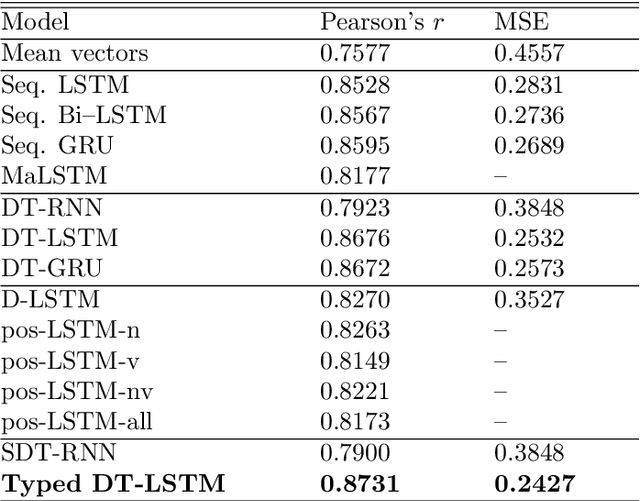

Abstract: Tree-based Long Short-Term Memory (LSTM) networks have become the state of the art for modeling the meaning of text, as they can effectively exploit grammatical syntax and thereby capture non-linear dependencies among the words of a sentence. However, most of these models cannot recognize a difference in meaning caused by a change in the semantic roles of words or phrases, because they do not take into account the type of grammatical relation, also known as the typed dependency, in the sentence structure. This paper proposes an enhanced LSTM architecture, called the relation gated LSTM, which can model the relationship between two inputs of a sequence using a control input. We also introduce a Tree-LSTM model, called the Typed Dependency Tree-LSTM, that uses both the dependency parse structure of a sentence and the dependency types to embed sentence meaning into a dense vector. The proposed model outperformed its type-unaware counterpart on two typical NLP tasks, Semantic Relatedness Scoring and Sentiment Analysis, in fewer training epochs. The results were comparable to or competitive with other state-of-the-art models. Qualitative analysis showed that changes in the voice of sentences had little effect on the model's predicted scores, while changes in nominal (noun) words had a more significant impact. The model recognized subtle semantic relationships in sentence pairs, and the magnitudes of the learned typed-dependency embeddings agreed with human intuition. These findings indicate the significance of grammatical relations in sentence modeling, and the proposed models can serve as a base for future research in this direction.
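The abstract describes the relation gated LSTM only at a high level (a control input that models the relationship between two inputs of a sequence) and does not give its equations. The following is a hypothetical sketch, not the paper's formulation: a standard LSTM cell in which a control vector, for instance an embedding of the dependency type, drives an extra sigmoid gate that scales the previous hidden state before the usual LSTM updates. The class name `RelationGatedCell`, the gate placement, and the random initialization are all assumptions made for illustration.

```python
import math
import random
from typing import List, Sequence, Tuple

Vector = List[float]
Matrix = List[List[float]]


def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))


def affine(W: Matrix, v: Vector, b: Vector) -> Vector:
    """Compute W @ v + b with plain Python lists."""
    return [sum(w * x for w, x in zip(row, v)) + bi for row, bi in zip(W, b)]


def init(rows: int, cols: int, rng: random.Random) -> Matrix:
    return [[rng.uniform(-0.1, 0.1) for _ in range(cols)] for _ in range(rows)]


class RelationGatedCell:
    """Hypothetical LSTM cell whose recurrent input is gated by a control vector."""

    def __init__(self, x_dim: int, h_dim: int, r_dim: int, seed: int = 0):
        rng = random.Random(seed)
        in_dim = x_dim + h_dim
        # Assumed relation gate: reads the control input and the previous hidden state.
        self.W_r = init(h_dim, r_dim + h_dim, rng)
        self.b_r = [0.0] * h_dim
        # Standard LSTM weights: input (i), forget (f), output (o), candidate (u).
        self.W = {g: init(h_dim, in_dim, rng) for g in "ifou"}
        self.b = {g: [0.0] * h_dim for g in "ifou"}

    def step(self, x: Vector, r: Vector, h_prev: Vector,
             c_prev: Vector) -> Tuple[Vector, Vector]:
        # 1. Relation gate in (0, 1): how much of h_prev passes, given relation r.
        gate = [sigmoid(z) for z in affine(self.W_r, r + h_prev, self.b_r)]
        h_gated = [g * h for g, h in zip(gate, h_prev)]
        # 2. Ordinary LSTM update on the concatenation [x; gated h_prev].
        z = x + h_gated
        i = [sigmoid(v) for v in affine(self.W["i"], z, self.b["i"])]
        f = [sigmoid(v) for v in affine(self.W["f"], z, self.b["f"])]
        o = [sigmoid(v) for v in affine(self.W["o"], z, self.b["o"])]
        u = [math.tanh(v) for v in affine(self.W["u"], z, self.b["u"])]
        c = [fi * cp + ii * ui for fi, cp, ii, ui in zip(f, c_prev, i, u)]
        h = [oi * math.tanh(ci) for oi, ci in zip(o, c)]
        return h, c


def run_sequence(cell: RelationGatedCell, words: Sequence[Vector],
                 relations: Sequence[Vector], h_dim: int) -> Tuple[Vector, Vector]:
    """Feed (word vector, relation vector) pairs through the cell from a zero state."""
    h, c = [0.0] * h_dim, [0.0] * h_dim
    for x, r in zip(words, relations):
        h, c = cell.step(x, r, h, c)
    return h, c


# Hypothetical toy inputs: 4-d word vectors, one-hot 2-d relation types.
words = [[0.1, 0.2, 0.0, -0.1], [0.3, -0.2, 0.1, 0.0]]
relations = [[1.0, 0.0], [0.0, 1.0]]
cell = RelationGatedCell(x_dim=4, h_dim=3, r_dim=2, seed=0)
h, c = run_sequence(cell, words, relations, h_dim=3)
print(h)
```

Because the gate depends on the relation vector, the same two words linked by different relation types produce different hidden states from the second step onward. In a tree-structured variant, the child node's hidden state would plausibly take the place of `h_prev`, but that mapping is likewise an assumption here.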
