Jean-Marie Chauvet

Memory Traces: Are Transformers Tulving Machines?

Apr 12, 2024

Abstract: Memory traces (changes in the memory system that result from the perception and encoding of an event) were measured in pioneering studies by Endel Tulving and Michael J. Watkins in 1975. These and further experiments informed the maturation of Tulving's memory model, from the GAPS (General Abstract Processing System) to the SPI (Serial-Parallel Independent) model. Having current top-of-the-line LLMs revisit the original Tulving-Watkins tests may help assess whether or not foundation models completely instantiate this class of psychological models.

Memory GAPS: Would LLMs pass the Tulving Test?

Feb 28, 2024

Abstract: The Tulving Test was designed to investigate memory performance in recognition and recall tasks. Its results help assess the relevance of the "Synergistic Ecphory Model" of memory and of similar Remember/Know (RK) paradigms in human performance. This paper begins to investigate whether this more-than-forty-year-old framework sheds some light on LLMs' acts of remembering.

The 30-Year Cycle In The AI Debate

Oct 08, 2018

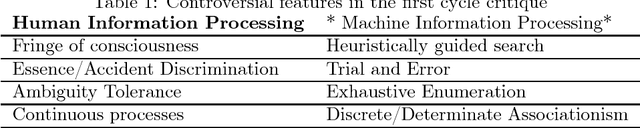

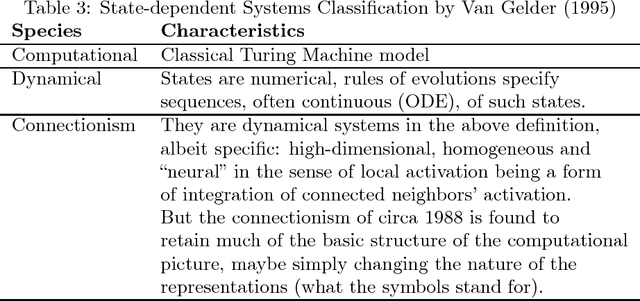

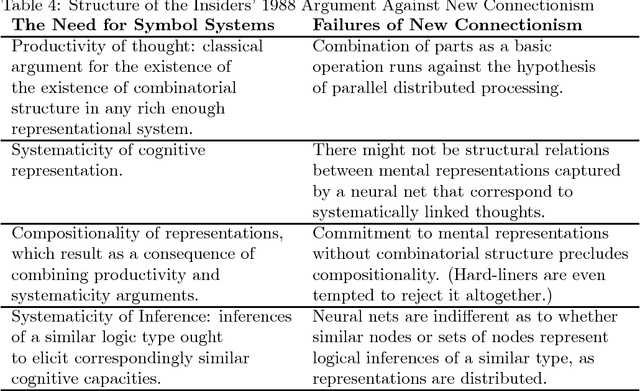

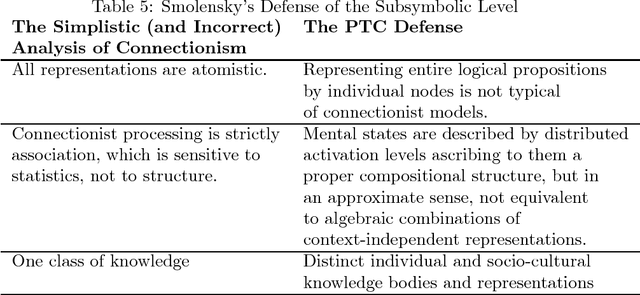

Abstract: In the last couple of years, the rise of Artificial Intelligence and the successes of academic breakthroughs in the field have been inescapable. Vast sums of money have been poured into AI start-ups. Many existing tech companies (including giants like Google, Amazon, Facebook, and Microsoft) have opened new research labs. The rapid changes in everyday work and entertainment tools have fueled a rising interest in the underlying technology itself; journalists write about AI tirelessly, and companies, whether in tech or not, brand themselves with AI, Machine Learning or Deep Learning whenever they get a chance. Squarely confronting this media coverage, several analysts are starting to voice concerns about over-interpretation of AI's blazing successes and the sometimes poor public reporting on the topic. This paper briefly reviews the track record in AI and Machine Learning and finds a recurring pattern of early dramatic successes, followed by philosophical critique and unexpected difficulties, if not downright stagnation, returning almost like clockwork in 30-year cycles since 1958.

Combinatorial Explorations in Su-Doku

Mar 29, 2008

Abstract: Su-Doku, a popular combinatorial puzzle, provides an excellent testbed for heuristic explorations. Several interesting questions arise from its deceptively simple set of rules. How many distinct Su-Doku grids are there? How can one find a solution to a Su-Doku puzzle? Does a given Su-Doku puzzle have a unique solution? What is a good estimate of a puzzle's difficulty? What is the minimum puzzle size (the number of "givens")? This paper explores how these questions relate to the well-known alldifferent constraint, which emerges in a wide variety of Constraint Satisfaction Problems (CSP), and compares various algorithmic approaches based on different formulations of Su-Doku.
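
As a hedged illustration only (not the paper's algorithm or any of its formulations), the Python sketch below encodes the 27 alldifferent constraints of a 9x9 grid (9 rows, 9 columns, 9 boxes) directly and uses plain backtracking with a most-constrained-cell heuristic to address two of the questions above: finding a solution, and checking uniqueness by stopping as soon as a second solution appears. The names `candidates`, `count_solutions` and `is_valid_solution` are hypothetical.

```python
from typing import List

Grid = List[List[int]]  # 9x9 grid; 0 marks an empty cell


def candidates(grid: Grid, r: int, c: int) -> List[int]:
    """Digits that keep row r, column c and the enclosing 3x3 box all-different."""
    used = set(grid[r])                                   # row unit
    used |= {grid[i][c] for i in range(9)}                # column unit
    br, bc = 3 * (r // 3), 3 * (c // 3)
    used |= {grid[i][j] for i in range(br, br + 3) for j in range(bc, bc + 3)}  # box unit
    return [d for d in range(1, 10) if d not in used]


def count_solutions(grid: Grid, limit: int = 2) -> int:
    """Backtracking search that stops after `limit` solutions (limit=2 tests uniqueness)."""
    best = None
    for r in range(9):
        for c in range(9):
            if grid[r][c] == 0:
                cands = candidates(grid, r, c)
                # most-constrained-cell heuristic: branch where the fewest digits remain
                if best is None or len(cands) < len(best[2]):
                    best = (r, c, cands)
    if best is None:
        return 1  # no empty cell left: the grid is a complete assignment
    r, c, cands = best
    total = 0
    for d in cands:
        grid[r][c] = d
        total += count_solutions(grid, limit - total)
        grid[r][c] = 0  # undo the assignment before trying the next digit
        if total >= limit:
            break
    return total


def is_valid_solution(grid: Grid) -> bool:
    """Check the 27 alldifferent constraints (9 rows, 9 columns, 9 boxes) on a full grid."""
    rows = [list(row) for row in grid]
    cols = [list(col) for col in zip(*grid)]
    boxes = [[grid[r][c] for r in range(br, br + 3) for c in range(bc, bc + 3)]
             for br in (0, 3, 6) for bc in (0, 3, 6)]
    return all(sorted(unit) == list(range(1, 10)) for unit in rows + cols + boxes)


if __name__ == "__main__":
    # A valid completed grid built from a shifting pattern (for testing only)
    solved = [[(r * 3 + r // 3 + c) % 9 + 1 for c in range(9)] for r in range(9)]
    puzzle = [row[:] for row in solved]
    puzzle[0] = [0] * 9
    puzzle[1] = [0] * 9  # blanking two rows of one band admits a row swap
    print(is_valid_solution(solved), count_solutions(puzzle))  # True 2
```

Capping the count at two is enough to answer the uniqueness question without enumerating every completion; a real study of difficulty or of minimal givens would need richer propagation than this direct encoding.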

Memory As A Monadic Control Construct In Problem-Solving

Feb 16, 2004

Abstract: Recent advances in the study and design of programming languages have established a standard way of grounding the representation of computational systems in category theory. These formal results led to a better understanding of issues of control and side effects in functional and imperative languages. This framework can be successfully applied to investigating the performance of Artificial Intelligence (AI) inference and cognitive systems. In this paper, we delineate a categorical formalisation of memory as a control structure driving performance in inference systems. Abstracting away control mechanisms from three widely used representations of memory in cognitive systems (scripts, production rules and clusters), we explain how categorical triples capture the interaction between learning and problem-solving.
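
The abstract does not spell out the construction. As one illustrative, assumption-laden reading of "memory as a control structure driving performance", the Python sketch below uses the state monad (a triple whose unit and bind thread a memory through a computation). Memoised Fibonacci stands in for a problem-solving task: `bind` passes the memory along implicitly, and remembered results mean each subproblem is solved only once. `Stateful`, `lookup`, `store` and `fib` are hypothetical names; the paper itself treats scripts, production rules and clusters, not this example.

```python
from dataclasses import dataclass
from typing import Callable, Dict, Generic, Optional, Tuple, TypeVar

A = TypeVar("A")
B = TypeVar("B")
Memory = Dict[int, int]  # hypothetical memory: subproblem -> remembered result


@dataclass(frozen=True)
class Stateful(Generic[A]):
    """A computation that reads a memory and returns a value plus an updated memory."""
    run: Callable[[Memory], Tuple[A, Memory]]

    def bind(self, f: "Callable[[A], Stateful[B]]") -> "Stateful[B]":
        # Thread the memory: run self, then feed its result and new memory to f
        def step(mem: Memory) -> Tuple[B, Memory]:
            a, mem1 = self.run(mem)
            return f(a).run(mem1)
        return Stateful(step)


def unit(a: A) -> Stateful[A]:
    """Return a value without touching memory (the triple's unit)."""
    return Stateful(lambda mem: (a, mem))


def lookup(key: int) -> Stateful[Optional[int]]:
    """Read a remembered result, or None if the subproblem has not been solved yet."""
    return Stateful(lambda mem: (mem.get(key), mem))


def store(key: int, value: int) -> Stateful[int]:
    # Copy-on-write so earlier memories are never mutated
    return Stateful(lambda mem: (value, {**mem, key: value}))


def fib(n: int) -> Stateful[int]:
    """Stand-in 'problem-solving' task: each subproblem is solved at most once."""
    if n < 2:
        return unit(n)

    def solve(cached: Optional[int]) -> Stateful[int]:
        if cached is not None:
            return unit(cached)  # memory hit: reuse, no recomputation
        return fib(n - 1).bind(lambda x:
               fib(n - 2).bind(lambda y:
               store(n, x + y)))
    return lookup(n).bind(solve)


value, memory = fib(30).run({})
print(value, len(memory))  # 832040 29
```

The memory never appears in `fib`'s own arguments; it is carried entirely by `bind`, which is the sense in which the triple, rather than the task code, controls how remembered results shape the computation.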

Monadic Style Control Constructs for Inference Systems

Nov 25, 2002

Abstract: Recent advances in the study and design of programming languages have established a standard way of grounding the representation of computational systems in category theory. These formal results led to a better understanding of issues of control and side effects in functional and imperative languages. Another benefit is a better way of modelling computational effects in logical frameworks. With this analogy in mind, we embark on an investigation of inference systems, considering inference behaviour as a form of computation. We delineate a categorical formalisation of control constructs in inference systems. This representation emphasises the parallel between the modular articulation of the categorical building blocks (triples) used to account for the inference architecture and the modular composition of cognitive processes.
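
The abstract does not give the construction itself. As a hedged sketch of what a triple in Kleisli form (a type constructor, a unit and an extension operation) can look like in code, the Python below uses the list triple to model nondeterministic inference: each step returns every conclusion a rule licenses, and Kleisli composition chains steps. The rule base, `infer`, `eta`, `extend` and `kleisli` are hypothetical; this is not the paper's formalisation.

```python
from typing import Callable, Dict, List, TypeVar

X = TypeVar("X")
Y = TypeVar("Y")
Z = TypeVar("Z")


def eta(x: X) -> List[X]:
    """Unit of the list triple: a single, certain alternative."""
    return [x]


def extend(f: Callable[[X], List[Y]]) -> Callable[[List[X]], List[Y]]:
    """Kleisli extension f*: apply f to every alternative and flatten the results."""
    return lambda xs: [y for x in xs for y in f(x)]


def kleisli(f: Callable[[X], List[Y]], g: Callable[[Y], List[Z]]) -> Callable[[X], List[Z]]:
    """Compose two nondeterministic steps: run g on each alternative produced by f."""
    return lambda x: extend(g)(f(x))


# Hypothetical rule base: a fact maps to the conclusions one rule application licenses
RULES: Dict[str, List[str]] = {
    "rain": ["wet_ground", "cloudy"],
    "sprinkler": ["wet_ground"],
    "wet_ground": ["slippery"],
}


def infer(fact: str) -> List[str]:
    """One inference step; facts with no matching rule yield no alternatives."""
    return RULES.get(fact, [])


two_steps = kleisli(infer, infer)
print(two_steps("rain"))  # ['slippery']
```

Because `infer` only produces alternatives and the triple decides how they are combined, swapping the list triple for another one (say, one that keeps at most a single alternative) would change the search behaviour without touching the rules, which loosely mirrors the modularity the abstract describes.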
