Jan Van lent

The Monge-Kantorovich Optimal Transport Distance for Image Comparison

Apr 08, 2018

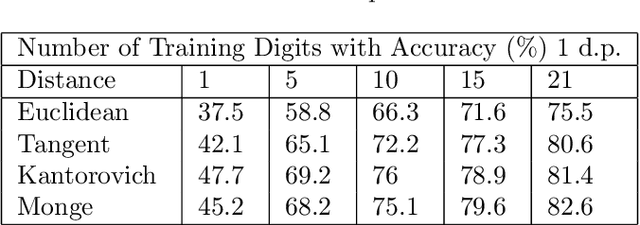

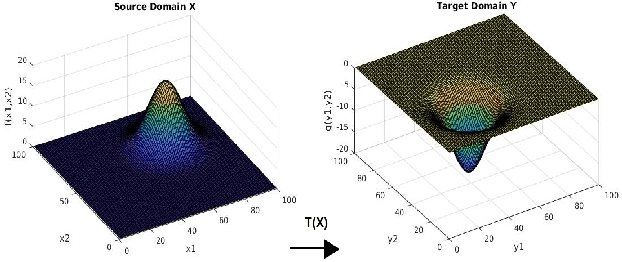

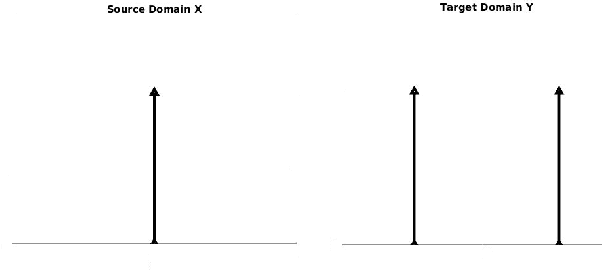

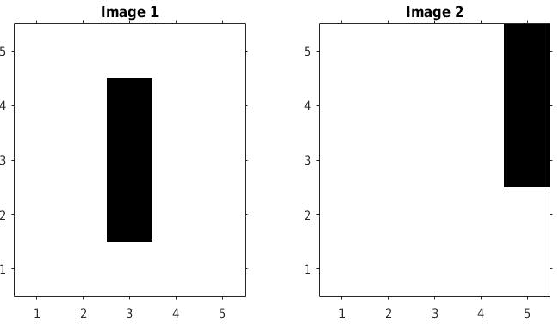

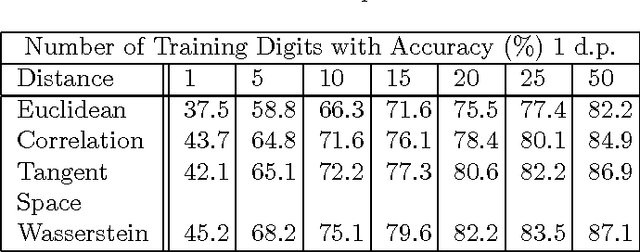

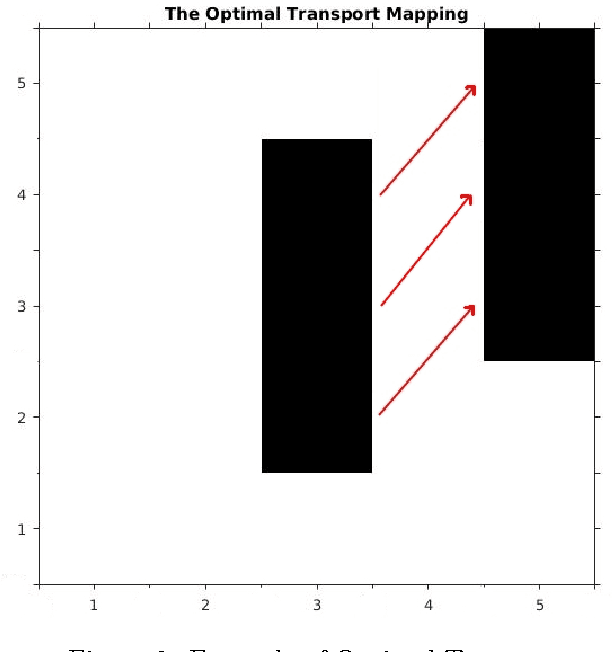

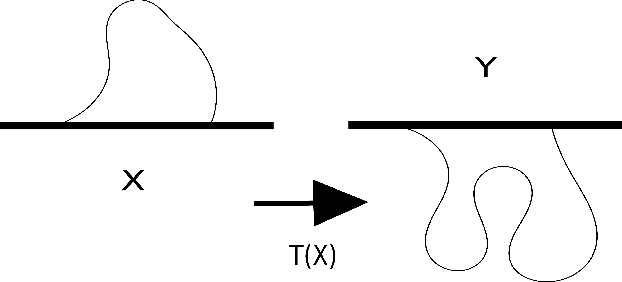

Abstract: This paper focuses on the Monge-Kantorovich formulation of the optimal transport problem and the associated $L^2$ Wasserstein distance. We use the $L^2$ Wasserstein distance in the Nearest Neighbour (NN) machine learning architecture to demonstrate the potential power of the optimal transport distance for image comparison. We compare the Wasserstein distance to other established distances, including the partial differential equation (PDE) formulation of the optimal transport problem, and demonstrate that it achieves excellent results on the well-known MNIST optical character recognition dataset.
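A minimal sketch of the idea, assuming each grayscale image is treated as a discrete probability measure on its pixel grid with squared Euclidean ground cost. It solves the discrete Kantorovich linear program with SciPy's `linprog` (HiGHS backend), which is not necessarily the solver used in the paper, and plugs the result into a 1-NN classifier. The helper names `image_to_measure`, `wasserstein2` and `nearest_neighbour_label` are hypothetical.

```python
import numpy as np
from scipy.optimize import linprog


def image_to_measure(img):
    """Treat a non-negative 2D image as a discrete probability measure.

    Returns the (row, col) coordinates of the positive pixels and their
    intensities normalised to sum to 1.
    """
    img = np.asarray(img, dtype=float)
    rows, cols = np.nonzero(img > 0)
    mass = img[rows, cols]
    coords = np.stack([rows, cols], axis=1).astype(float)
    return coords, mass / mass.sum()


def wasserstein2(img_a, img_b):
    """L^2 Wasserstein distance between two images via the Kantorovich LP.

    Builds a dense LP with n*m variables, so it is only suitable for small
    or downsampled images.
    """
    x, a = image_to_measure(img_a)
    y, b = image_to_measure(img_b)
    n, m = len(a), len(b)
    # Ground cost c_ij = |x_i - y_j|^2, flattened row-major like the plan P.
    cost = ((x[:, None, :] - y[None, :, :]) ** 2).sum(axis=2)
    # Marginal constraints: row sums of P equal a, column sums equal b.
    a_eq = np.vstack([
        np.kron(np.eye(n), np.ones((1, m))),
        np.kron(np.ones((1, n)), np.eye(m)),
    ])
    b_eq = np.concatenate([a, b])
    res = linprog(cost.ravel(), A_eq=a_eq, b_eq=b_eq,
                  bounds=(0, None), method="highs")
    if not res.success:
        raise RuntimeError(res.message)
    # res.fun is the minimal transport cost W_2^2; clip tiny negative round-off.
    return float(np.sqrt(max(res.fun, 0.0)))


def nearest_neighbour_label(query, train_images, train_labels, dist=wasserstein2):
    """1-NN: return the label of the training image closest to `query`."""
    dists = [dist(query, img) for img in train_images]
    return train_labels[int(np.argmin(dists))]


if __name__ == "__main__":
    left = np.zeros((4, 4))
    left[1, 0] = 1.0
    right = np.zeros((4, 4))
    right[1, 3] = 1.0
    query = np.zeros((4, 4))
    query[1, 1] = 1.0
    print(wasserstein2(left, query))  # about 1.0: all mass moves one pixel
    print(nearest_neighbour_label(query, [left, right], ["left", "right"]))  # left
```

The dense constraint matrix has $(n+m) \times nm$ entries, so for full-size datasets a sparse formulation or a dedicated optimal transport solver would likely be preferable; the distance function is passed as a parameter so that other metrics can be swapped into the same 1-NN loop for comparison.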

Monge's Optimal Transport Distance for Image Classification

Apr 08, 2018

Abstract: This paper focuses on a similarity measure for comparing images, known as the Wasserstein distance. The Wasserstein distance results from a partial differential equation (PDE) formulation of Monge's optimal transport problem. We present an efficient numerical method for solving Monge's problem. To demonstrate the measure's discriminatory power when comparing images, we use a $1$-Nearest Neighbour ($1$-NN) machine learning algorithm to illustrate its potential benefits over more traditional distance metrics and over the Tangent Space distance, which was designed specifically to perform well on the well-known MNIST dataset. To our knowledge, the PDE formulation of the Wasserstein metric has not previously been applied to image comparison, nor has the Wasserstein distance been used within the $1$-nearest neighbour architecture.
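For reference, the standard PDE formulation of Monge's problem with quadratic cost is the Monge-Ampère equation with the second boundary condition, which follows from Brenier's theorem. We assume this is the formulation meant here; the abstract does not state the exact equation or discretisation used.

```latex
% Source density f on X, target density g on Y (both normalised to unit mass).
% Brenier: the optimal map is T = \nabla u for a convex potential u, where
\det\big(D^2 u(x)\big) = \frac{f(x)}{g(\nabla u(x))}, \quad x \in X,
\qquad \nabla u(X) = Y,
% and the L^2 Wasserstein distance is then
W_2(f, g)^2 = \int_X \lvert x - \nabla u(x) \rvert^2 \, f(x) \, dx.
```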
