Ibrahim Alshubaily

TextCNN with Attention for Text Classification

Aug 04, 2021

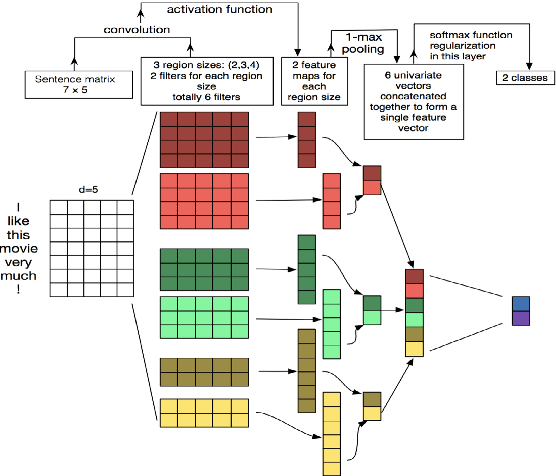

Abstract: The vast majority of textual content is unstructured, making automated classification an important task for many applications. The goal of text classification is to automatically assign text documents to one or more predefined categories. Recently proposed simple architectures for text classification, such as Convolutional Neural Networks for Sentence Classification by Yoon Kim, have shown promising results. In this paper, we propose incorporating an attention mechanism into the network to boost its performance. We also propose WordRank for vocabulary selection, which reduces the network's embedding parameters and speeds up training with minimal accuracy loss. With the proposed ideas, TextCNN accuracy on 20News increased from 94.79 to 96.88. Moreover, WordRank substantially reduces the number of embedding-layer parameters with little accuracy loss: using it for vocabulary selection cuts the number of parameters by more than 5x, from 7.9M to 1.5M, while accuracy decreases by only 1.2%.

Efficient Neural Architecture Search with Performance Prediction

Aug 04, 2021

Abstract: Neural networks are powerful models with a remarkable ability to extract patterns that are too complex to be noticed by humans or other machine learning models. Neural networks are the first class of models that can train end-to-end systems with large learning capacities. However, designing the network itself remains a difficult challenge that requires human experience and a long process of trial and error. As a solution, we can use neural architecture search (NAS) to find the best network architecture for the task at hand. Existing NAS algorithms generally evaluate the fitness of a new architecture by fully training it from scratch, which results in prohibitive computational cost even on high-performance computers. In this paper, an end-to-end offline performance predictor is proposed to accelerate the evaluation of sampled architectures. Index Terms: Learning Curve Prediction, Neural Architecture Search, Reinforcement Learning.
