Hoang-An Le

EDEN: Multimodal Synthetic Dataset of Enclosed GarDEN Scenes

Nov 10, 2020

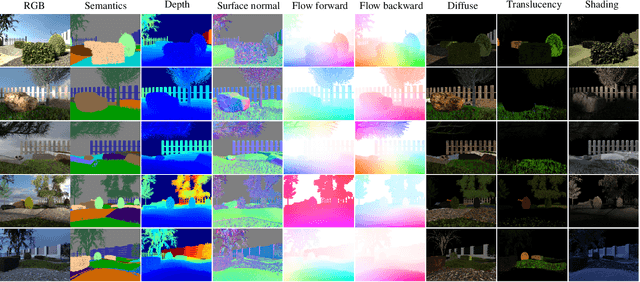

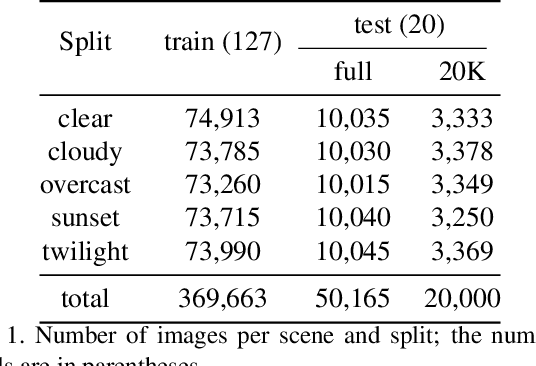

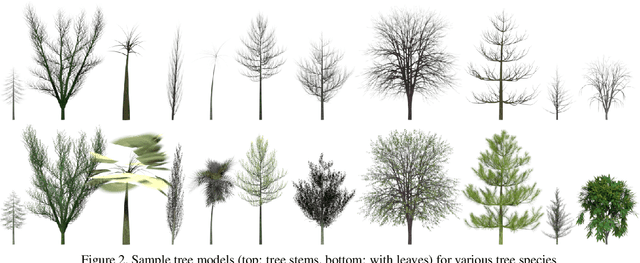

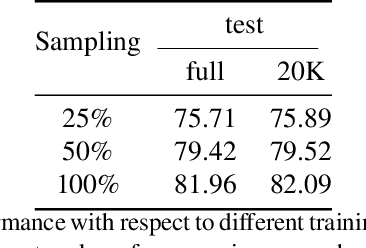

Abstract: Multimodal large-scale datasets for outdoor scenes are mostly designed for urban driving problems. The scenes are highly structured and semantically different from scenarios seen in nature-centered scenes such as gardens or parks. To promote machine learning methods for nature-oriented applications, such as agriculture and gardening, we propose the multimodal synthetic dataset for Enclosed garDEN scenes (EDEN). The dataset features more than 300K images captured from more than 100 garden models. Each image is annotated with various low/high-level vision modalities, including semantic segmentation, depth, surface normals, intrinsic colors, and optical flow. Experimental results on the state-of-the-art methods for semantic segmentation and monocular depth prediction, two important tasks in computer vision, show a positive impact of pre-training deep networks on our dataset for unstructured natural scenes. The dataset and related materials will be available at https://lhoangan.github.io/eden.

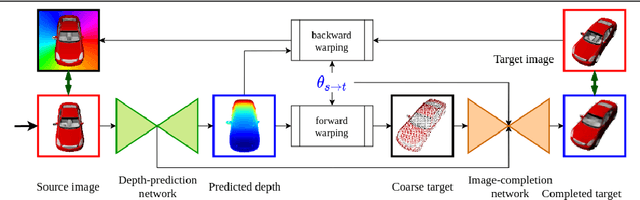

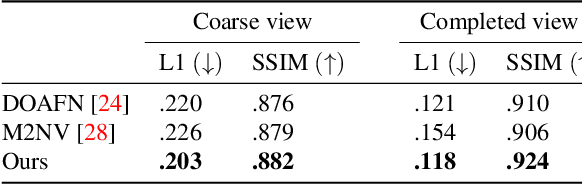

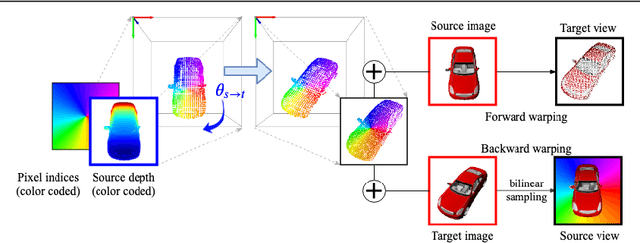

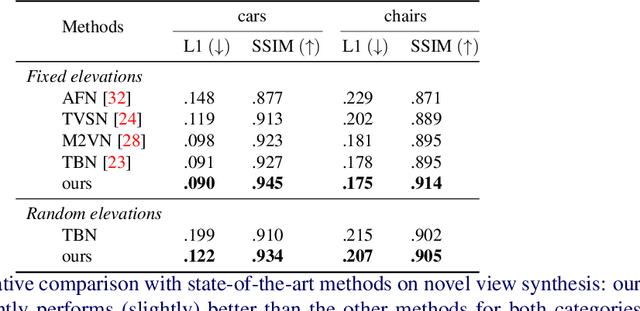

Novel View Synthesis from Single Images via Point Cloud Transformation

Sep 18, 2020

Abstract: In this paper the argument is made that for true novel view synthesis of objects, where the object can be synthesized from any viewpoint, an explicit 3D shape representation is desired. Our method estimates point clouds to capture the geometry of the object, which can be freely rotated into the desired view and then projected into a new image. This image, however, is sparse by nature and hence this coarse view is used as the input of an image completion network to obtain the dense target view. The point cloud is obtained using the predicted pixel-wise depth map, estimated from a single RGB input image, combined with the camera intrinsics. By using forward warping and backward warping between the input view and the target view, the network can be trained end-to-end without supervision on depth. The benefit of using point clouds as an explicit 3D shape for novel view synthesis is experimentally validated on the 3D ShapeNet benchmark. Source code and data will be available at https://lhoangan.github.io/pc4novis/.
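The geometric part of this pipeline (depth map plus intrinsics to point cloud, rotation, re-projection) can be illustrated with a minimal sketch. It assumes a standard pinhole camera with focal lengths `fx`, `fy` and principal point `cx`, `cy`; the function names, the choice of a vertical rotation axis, and the nearest-point rule for overlapping pixels are illustrative assumptions, not the authors' implementation. The depth prediction and image completion networks are not covered.

```python
import math

def unproject(depth, fx, fy, cx, cy):
    """Back-project a depth map (list of rows) into camera-space 3D points."""
    points = []
    for v, row in enumerate(depth):
        for u, z in enumerate(row):
            # Pinhole model: pixel (u, v) at depth z maps to (x, y, z).
            x = (u - cx) * z / fx
            y = (v - cy) * z / fy
            points.append((x, y, z))
    return points

def rotate_y(points, angle_rad, pivot_z):
    """Rotate points about a vertical axis through (0, 0, pivot_z)."""
    c, s = math.cos(angle_rad), math.sin(angle_rad)
    rotated = []
    for x, y, z in points:
        zc = z - pivot_z  # shift so the rotation axis passes through the object
        rotated.append((c * x + s * zc, y, -s * x + c * zc + pivot_z))
    return rotated

def project(points, fx, fy, cx, cy, width, height):
    """Project points into a sparse depth image, keeping the nearest point per pixel."""
    image = [[None] * width for _ in range(height)]
    for x, y, z in points:
        if z <= 0:
            continue  # point is behind the camera
        u = int(round(fx * x / z + cx))
        v = int(round(fy * y / z + cy))
        if 0 <= u < width and 0 <= v < height:
            if image[v][u] is None or z < image[v][u]:
                image[v][u] = z
    return image

# Toy example: a flat 2x2 depth map, viewed again after a 30 degree rotation.
depth = [[2.0, 2.0], [2.0, 2.0]]
cloud = unproject(depth, fx=1.0, fy=1.0, cx=0.5, cy=0.5)
novel_view = project(rotate_y(cloud, math.radians(30), pivot_z=2.0),
                     fx=1.0, fy=1.0, cx=0.5, cy=0.5, width=2, height=2)
```

Pixels left as `None` in `novel_view` are the holes that make the projected view sparse, which is the gap the abstract assigns to the image completion network.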

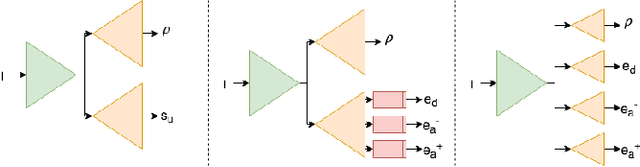

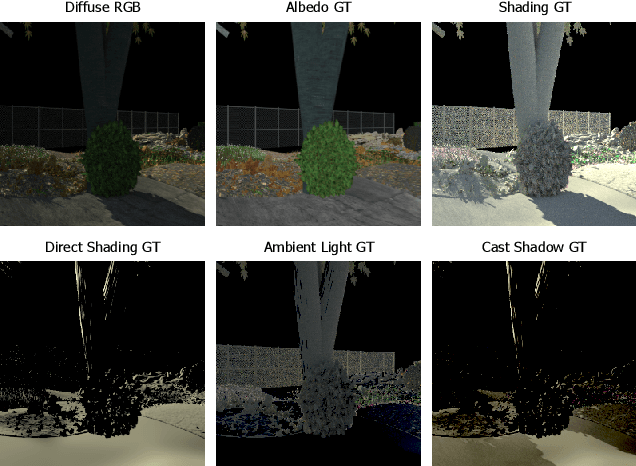

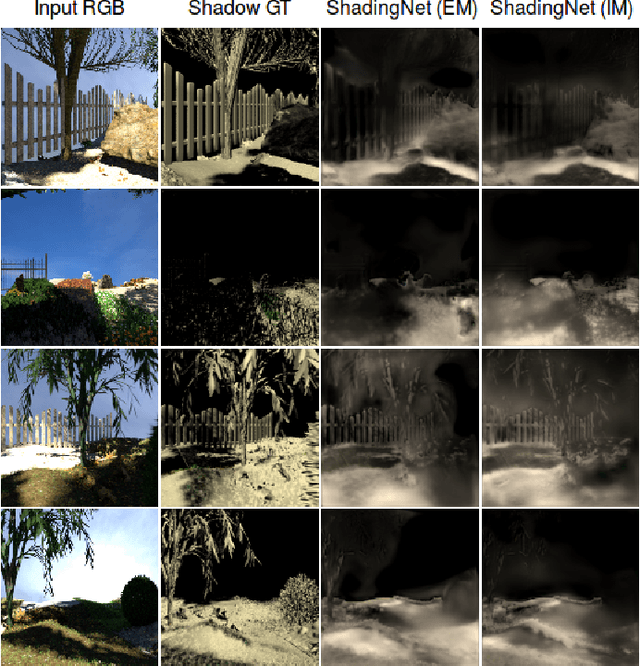

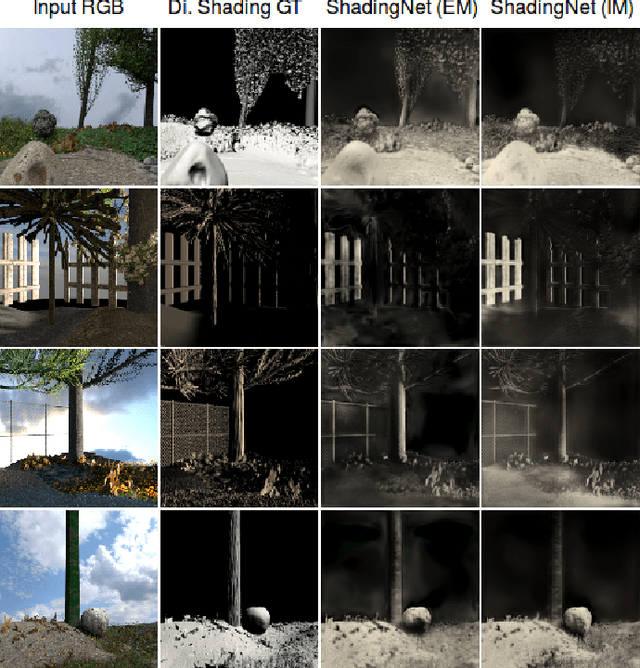

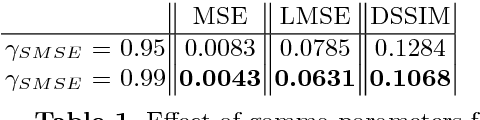

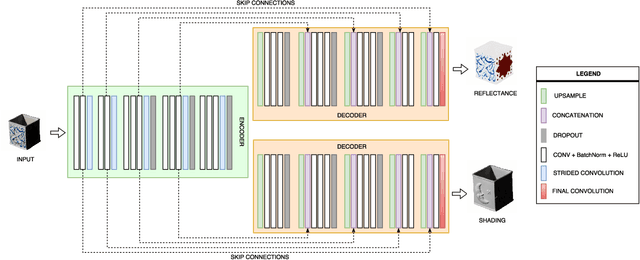

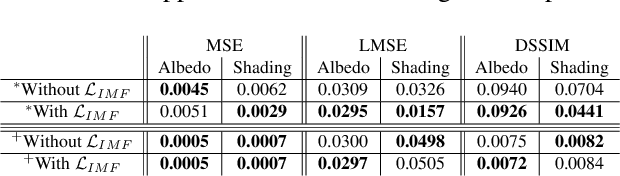

ShadingNet: Image Intrinsics by Fine-Grained Shading Decomposition

Dec 09, 2019

Abstract: In general, intrinsic image decomposition algorithms interpret shading as one unified component including all photometric effects. As shading transitions are generally smoother than albedo changes, these methods may fail to distinguish strong (cast) shadows from albedo variations. As a result, shadows may leak into albedo map predictions. Therefore, in this paper, we propose to decompose the shading component into direct (illumination) and indirect shading (ambient light and shadows). The aim is to distinguish strong cast shadows from reflectance variations. Two end-to-end supervised CNN models (ShadingNets) are proposed exploiting the fine-grained shading model. Furthermore, surface normal features are jointly learned by the proposed networks. Surface normals are expected to assist the decomposition task. A large-scale dataset of scene-level synthetic images of outdoor natural environments is provided with intrinsic image ground-truths. Large-scale experiments show that our CNN approach using fine-grained shading decomposition outperforms state-of-the-art methods using unified shading.
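The ambiguity between cast shadows and albedo changes can be seen in a toy per-pixel example. The sketch below assumes the common Lambertian formation model in which intensity is the product of albedo and a single unified shading term; the function name and the numeric values are illustrative only and are not taken from the paper.

```python
import math

def render(albedo, shading):
    """Lambertian image formation for one pixel: intensity = albedo * shading."""
    return albedo * shading

# A bright surface inside a strong cast shadow ...
bright_surface_in_shadow = render(albedo=0.8, shading=0.25)
# ... and a dark surface under full illumination.
dark_surface_fully_lit = render(albedo=0.2, shading=1.0)

# Both pixels have the same observed intensity, so intensity alone
# cannot tell a shadow boundary from an albedo change.
ambiguous = math.isclose(bright_surface_in_shadow, dark_surface_fully_lit)
```

Separating shadows into their own component, as the abstract proposes, is one way to give a model an explicit place to put the first case instead of explaining it as a darker albedo.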

Improving Face Detection Performance with 3D-Rendered Synthetic Data

Dec 18, 2018

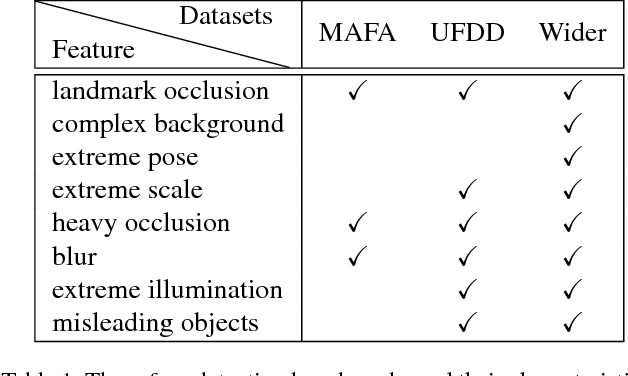

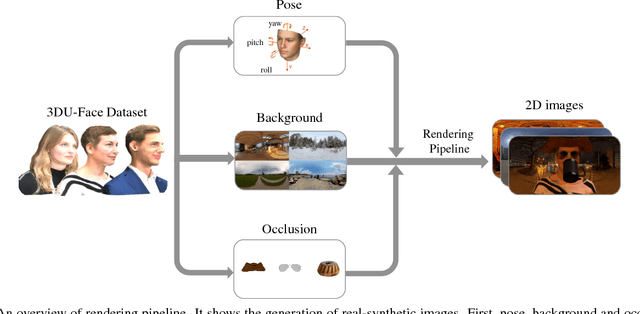

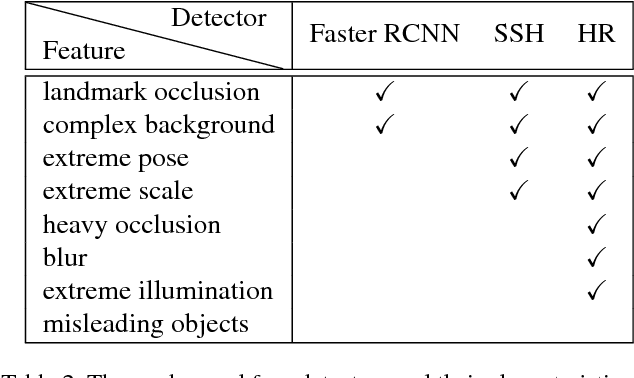

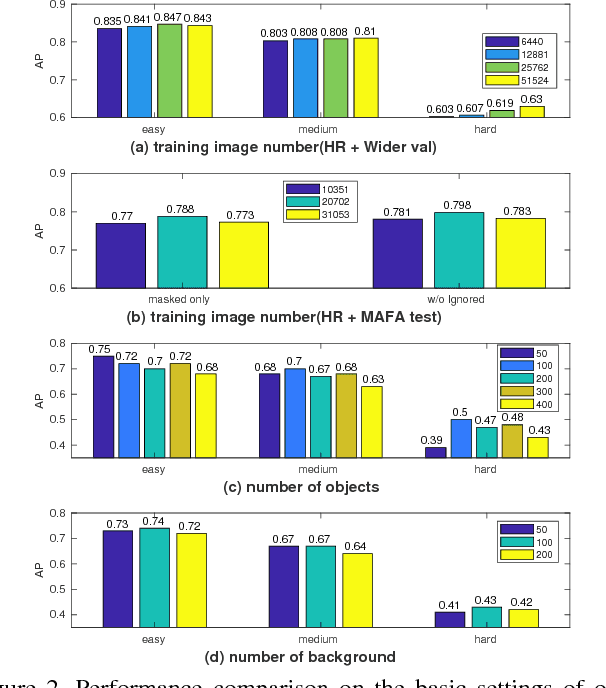

Abstract: In this paper, we provide a synthetic data generator methodology with fully controlled, multifaceted variations based on a new 3D face dataset (3DU-Face). We customize synthetic datasets to address specific types of variations (scale, pose, occlusion, blur, etc.), and systematically investigate the influence of different variations on face detection performance. We examine whether and how these factors contribute to better face detection performance. We validate our synthetic data augmentation for different face detectors (Faster RCNN, SSH and HR) on various face datasets (MAFA, UFDD and Wider Face).

Unsupervised Generation of Optical Flow Datasets from Videos in the Wild

Dec 10, 2018

Abstract: Dense optical flow ground truths of non-rigid motion for real-world images are not available due to the non-intuitive annotation. Aiming at training optical flow deep networks, we present an unsupervised algorithm to generate optical flow ground truth from real-world videos. The algorithm extracts and matches objects of interest from pairs of images in videos to find initial constraints, and applies as-rigid-as-possible deformation over the objects of interest to obtain dense flow fields. The ground truth correctness is enforced by warping the objects in the first frames using the flow fields. We apply the algorithm on the DAVIS dataset to obtain optical flow ground truths for non-rigid movement of real-world objects, using either ground truth or predicted segmentation. We discuss several methods to increase the optical flow variations in the dataset. Extensive experimental results show that training on non-rigid real motion is beneficial compared to training on rigid synthetic data. Moreover, we show that our pipeline generates training data suitable for successfully training FlowNet-S, PWC-Net, and LiteFlowNet deep networks.
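The warping step mentioned in the abstract can be sketched as a forward warp: each pixel of the first frame is moved along its flow vector, so the resulting second frame agrees with the flow field by construction. The sketch below is a simplified illustration using nearest-pixel rounding and a hypothetical `forward_warp` helper; it does not reproduce the as-rigid-as-possible deformation or the object matching described by the authors.

```python
def forward_warp(image, flow, fill=-1):
    """Move each pixel of `image` by its flow vector (dx, dy), rounded to the nearest pixel.

    `image` is a list of rows; `flow` has the same shape and holds (dx, dy) tuples.
    Target pixels that receive no source pixel keep the `fill` value.
    If several pixels land on the same target, the last one written wins.
    """
    height, width = len(image), len(image[0])
    warped = [[fill] * width for _ in range(height)]
    for y in range(height):
        for x in range(width):
            dx, dy = flow[y][x]
            tx, ty = x + int(round(dx)), y + int(round(dy))
            if 0 <= tx < width and 0 <= ty < height:
                warped[ty][tx] = image[y][x]
    return warped

# Toy example: shift a one-row image one pixel to the right.
frame1 = [[5, 0, 0]]
flow = [[(1, 0), (1, 0), (1, 0)]]
frame2 = forward_warp(frame1, flow)
```

Because `frame2` is produced from `frame1` and `flow`, the pair (`frame1`, `frame2`) together with `flow` forms a consistent training sample in this toy setting.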

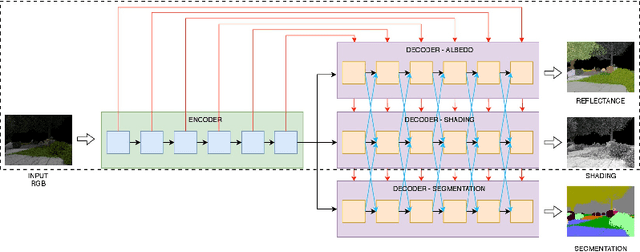

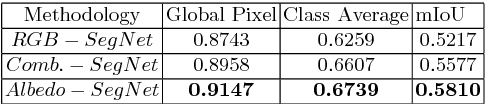

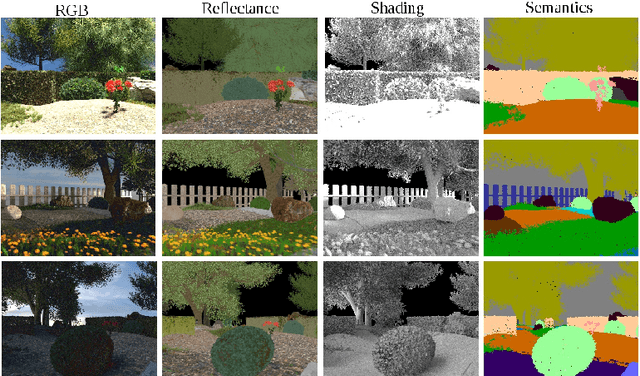

Joint Learning of Intrinsic Images and Semantic Segmentation

Jul 31, 2018

Abstract: Semantic segmentation of outdoor scenes is problematic when there are variations in imaging conditions. It is known that albedo (reflectance) is invariant to all kinds of illumination effects. Thus, using reflectance images for the semantic segmentation task can be favorable. Additionally, not only may segmentation benefit from reflectance, but segmentation may also be useful for reflectance computation. Therefore, in this paper, the tasks of semantic segmentation and intrinsic image decomposition are considered as a combined process by exploring their mutual relationship in a joint fashion. To that end, we propose a supervised end-to-end CNN architecture to jointly learn intrinsic image decomposition and semantic segmentation. We analyze the gains of addressing those two problems jointly. Moreover, new cascade CNN architectures for intrinsic-for-segmentation and segmentation-for-intrinsic are proposed as single tasks. Furthermore, a dataset of 35K synthetic images of natural environments is created with corresponding albedo and shading (intrinsics), as well as semantic labels (segmentation) assigned to each object/scene. The experiments show that joint learning of intrinsic image decomposition and semantic segmentation is beneficial for both tasks for natural scenes. Dataset and models are available at: https://ivi.fnwi.uva.nl/cv/intrinseg

Three for one and one for three: Flow, Segmentation, and Surface Normals

Jul 19, 2018

Abstract: Optical flow, semantic segmentation, and surface normals represent different information modalities, yet together they bring better cues for scene understanding problems. In this paper, we study the influence between the three modalities: how one impacts on the others and their efficiency in combination. We employ a modular approach using a convolutional refinement network which is trained supervised but isolated from RGB images to enforce joint modality features. To assist the training process, we create a large-scale synthetic outdoor dataset that supports dense annotation of semantic segmentation, optical flow, and surface normals. The experimental results show positive influence among the three modalities, especially for objects' boundaries, region consistency, and scene structures.

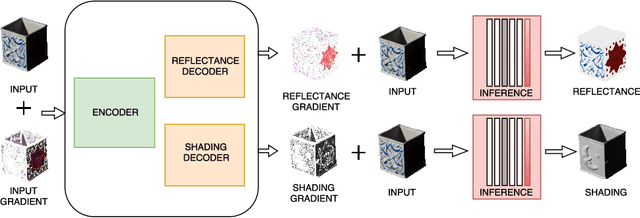

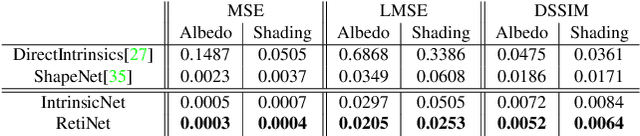

CNN based Learning using Reflection and Retinex Models for Intrinsic Image Decomposition

Apr 03, 2018

Abstract: Most of the traditional work on intrinsic image decomposition relies on deriving priors about scene characteristics. On the other hand, recent research uses deep learning models as in-and-out black boxes and does not consider the well-established, traditional image formation process as the basis of the intrinsic learning process. As a consequence, although current deep learning approaches show superior performance when considering quantitative benchmark results, traditional approaches are still dominant in achieving high qualitative results. In this paper, the aim is to exploit the best of the two worlds. A method is proposed that (1) is empowered by deep learning capabilities, (2) considers a physics-based reflection model to steer the learning process, and (3) exploits the traditional approach to obtain intrinsic images by exploiting reflectance and shading gradient information. The proposed model is fast to compute and allows for the integration of all intrinsic components. To train the new model, an object-centered large-scale dataset with intrinsic ground-truth images is created. The evaluation results demonstrate that the new model outperforms existing methods. Visual inspection shows that the image formation loss function augments color reproduction and the use of gradient information produces sharper edges. Datasets, models and higher resolution images are available at https://ivi.fnwi.uva.nl/cv/retinet.
