Hirotaka Takano

Tackling Unit Commitment and Load Dispatch Problems Considering All Constraints with Evolutionary Computation

Mar 06, 2019

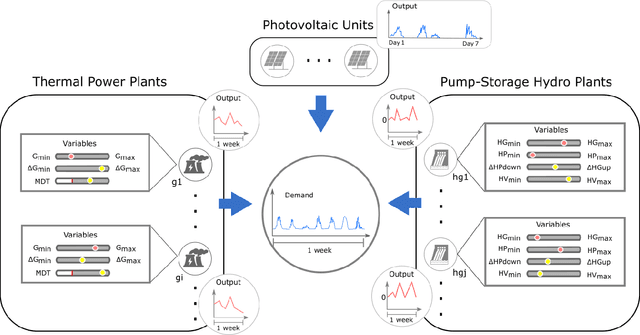

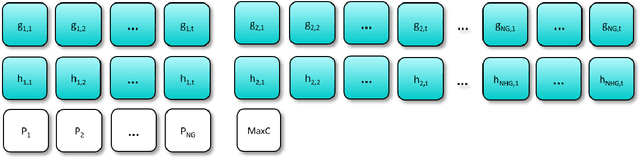

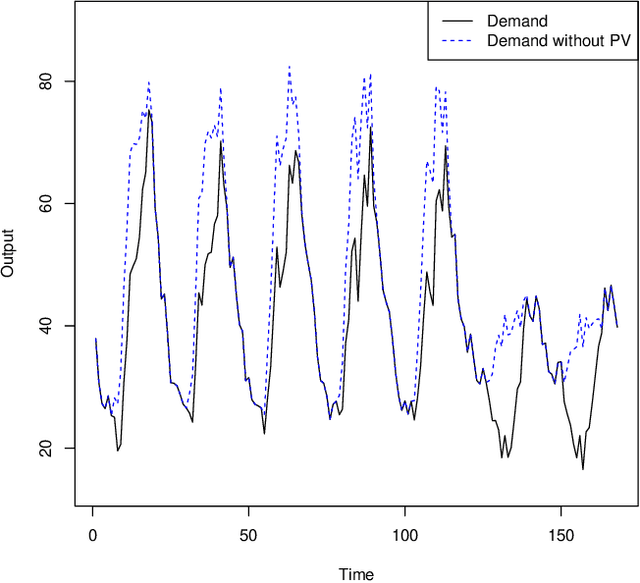

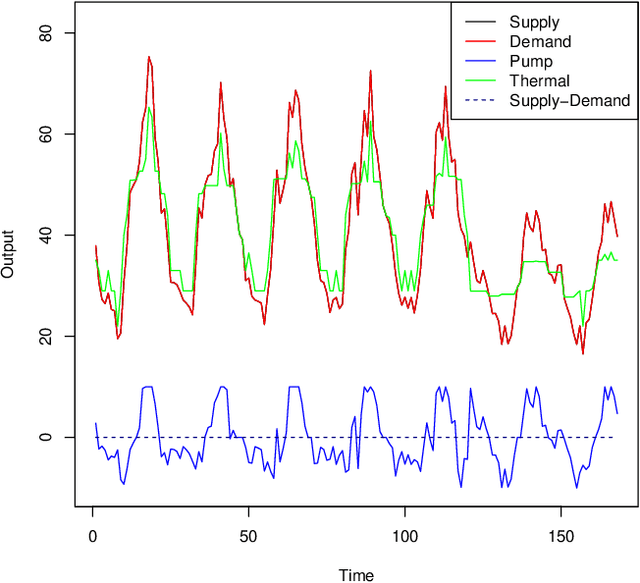

Abstract: Unit commitment and load dispatch problems are important and complex problems in power system operations that have traditionally been solved separately. In this paper, both problems are solved together without approximations or simplifications. In fact, the problem solved has a massive number of grid-connected photovoltaic units, four pumped-storage hydro plants as energy storage units and ten thermal power plants, each with its own set of operation requirements that need to be satisfied. To face such a complex constrained optimization problem, an adaptive repair method is proposed. By including the repair method itself as a parameter to be optimized, the proposed adaptive repair method avoids any bias in repair choices. Moreover, this results in a repair method that adapts to the problem and improves together with the solution during optimization. Experiments reveal that the proposed method surpasses exact-method solutions on a simplified version of the problem with approximations, and that it also solves the complete problem without simplifications, which is otherwise intractable. Moreover, since the proposed approach can be applied to other problems in general and it may not be obvious how to choose the constraint handling for a certain constraint, a guideline is provided explaining the reasoning behind it. Thus, this paper opens further possibilities for dealing with ever-changing types of generation units and other similarly complex operation/schedule optimization problems with many difficult constraints.
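
The core idea of the adaptive repair method, letting the choice of repair method evolve as one more gene of each individual, can be sketched on a toy dispatch problem. This is a minimal Python sketch under stated assumptions, not the paper's implementation: the bounds-plus-demand constraint, the quadratic cost, the two repair rules (`repair_proportional`, `repair_greedy`) and the (mu + lambda) loop in `evolve` are all hypothetical illustrations.

```python
import random

# Toy stand-in for load dispatch (assumption, not the paper's model):
# unit outputs p[i] must lie in [lo[i], hi[i]] and sum exactly to `demand`.

def clip(p, lo, hi):
    return [min(max(x, l), h) for x, l, h in zip(p, lo, hi)]

def repair_proportional(p, lo, hi, demand):
    """Spread the supply/demand mismatch over units in proportion to their free range."""
    p = clip(p, lo, hi)
    gap = demand - sum(p)
    room = [h - x for x, h in zip(p, hi)] if gap >= 0 else [x - l for x, l in zip(p, lo)]
    total = sum(room)
    return p if total == 0 else [x + gap * r / total for x, r in zip(p, room)]

def repair_greedy(p, lo, hi, demand):
    """Close the mismatch unit by unit in index order (a merit-order-like rule)."""
    p = clip(p, lo, hi)
    gap = demand - sum(p)
    for i in range(len(p)):
        step = min(gap, hi[i] - p[i]) if gap > 0 else max(gap, lo[i] - p[i])
        p[i] += step
        gap -= step
    return p

REPAIRS = [repair_proportional, repair_greedy]

def evolve(lo, hi, cost_coef, demand, pop_size=20, generations=200, seed=0):
    rng = random.Random(seed)

    def evaluate(ind):
        # The individual's own gene decides which repair is applied before costing.
        p = REPAIRS[ind["repair"]](ind["p"], lo, hi, demand)
        return sum(c * x * x for c, x in zip(cost_coef, p)), p

    pop = [{"p": [rng.uniform(l, h) for l, h in zip(lo, hi)],
            "repair": rng.randrange(len(REPAIRS))} for _ in range(pop_size)]
    for _ in range(generations):
        children = []
        for parent in pop:
            child = {"p": [x + rng.gauss(0, 0.05 * (h - l))
                           for x, l, h in zip(parent["p"], lo, hi)],
                     "repair": parent["repair"]}
            if rng.random() < 0.1:  # the repair choice mutates like any other gene
                child["repair"] = rng.randrange(len(REPAIRS))
            children.append(child)
        # (mu + lambda) survivor selection on the cost of the repaired solution.
        pop = sorted(pop + children, key=lambda ind: evaluate(ind)[0])[:pop_size]
    best = pop[0]
    return evaluate(best)[1], best["repair"]
```

For example, `evolve([10, 10, 10], [50, 50, 50], [1.0, 2.0, 3.0], 90)` returns a dispatch that satisfies the bounds and sums to the demand, together with the index of the repair rule carried by the best individual. Because every individual is repaired before it is costed, selection acts on the dispatch and the repair choice at the same time.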

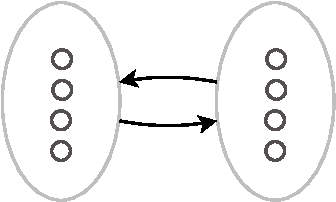

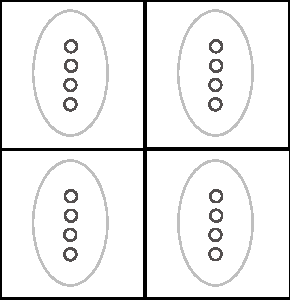

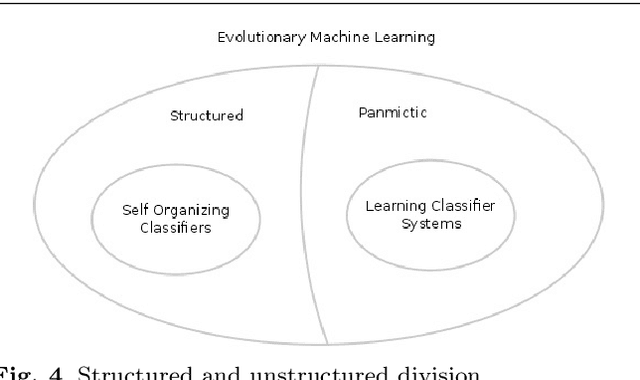

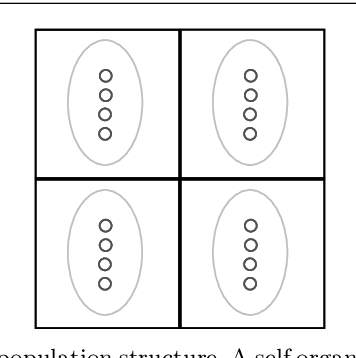

General Subpopulation Framework and Taming the Conflict Inside Populations

Jan 02, 2019

Abstract: Structured evolutionary algorithms have been investigated for some time. However, they have been under-explored, especially in the field of multi-objective optimization. Despite their good results, their complex dynamics and structures make them hard to understand and keep their adoption rate low. Here, we propose the general subpopulation framework, which can integrate optimization algorithms without restrictions and aid the design of structured algorithms. The proposed framework can generalize most structured evolutionary algorithms, such as cellular algorithms, island models, spatial predator-prey and restricted mating based algorithms, under its formalization. Moreover, we propose two algorithms based on the general subpopulation framework, demonstrating that the results improve greatly with the simple addition of a number of single-objective differential evolution algorithms for each objective, even when the combined algorithms perform poorly when evaluated alone in the tests. Most importantly, the comparison between the subpopulation algorithms and their related panmictic algorithms suggests that competition between different strategies inside one population can have deleterious consequences for an algorithm, and reveals a strong benefit of using the subpopulation framework. The code for SAN, the proposed multi-objective algorithm, which has the current best results in the hardest benchmark, is available at https://github.com/zweifel/zweifel
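
A rough way to picture the general subpopulation framework is a set of subpopulations, each paired with its own update rule, that share results through migration and a common archive. The sketch below assumes a toy bi-objective problem and uses one DE/rand/1/bin subpopulation per objective plus a non-dominated archive; the names `run`, `de_step` and `update_archive`, the ring migration, and all parameter values are hypothetical, and this is not SAN itself.

```python
import random

# Hypothetical bi-objective test problem (assumption): minimise both objectives.
def objectives(x):
    return (sum(v * v for v in x), sum((v - 2) ** 2 for v in x))

def dominates(a, b):
    return all(u <= v for u, v in zip(a, b)) and any(u < v for u, v in zip(a, b))

def de_step(pop, score, rng, f=0.5, cr=0.9):
    """One generation of DE/rand/1/bin that minimises the scalar function `score`."""
    new = []
    for i, target in enumerate(pop):
        a, b, c = rng.sample([p for j, p in enumerate(pop) if j != i], 3)
        jr = rng.randrange(len(target))  # at least one gene comes from the mutant
        trial = [a[k] + f * (b[k] - c[k]) if (k == jr or rng.random() < cr) else target[k]
                 for k in range(len(target))]
        new.append(trial if score(trial) <= score(target) else target)
    return new

def update_archive(archive, candidates):
    """Keep only mutually non-dominated solutions."""
    for x in candidates:
        fx = objectives(x)
        if any(dominates(objectives(a), fx) or objectives(a) == fx for a in archive):
            continue
        archive[:] = [a for a in archive if not dominates(fx, objectives(a))] + [x]
    return archive

def run(dim=2, size=10, generations=50, migrate_every=10, seed=1):
    rng = random.Random(seed)
    # Each subpopulation is paired with its own update rule; here, one
    # single-objective DE per objective. Any other optimizer could be plugged in.
    scorers = [lambda x, m=m: objectives(x)[m] for m in range(2)]
    subpops = [[[rng.uniform(-5, 5) for _ in range(dim)] for _ in range(size)]
               for _ in scorers]
    archive = []
    for g in range(1, generations + 1):
        subpops = [de_step(sp, s, rng) for sp, s in zip(subpops, scorers)]
        for sp in subpops:
            update_archive(archive, sp)
        if g % migrate_every == 0:
            # Ring migration: each subpopulation receives the previous one's best.
            bests = [min(sp, key=s) for sp, s in zip(subpops, scorers)]
            for i, sp in enumerate(subpops):
                sp[rng.randrange(size)] = list(bests[i - 1])
    return archive
```

Keeping each strategy in its own subpopulation means the two DE instances never compete for the same slots, which is the kind of intra-population conflict the abstract argues against.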

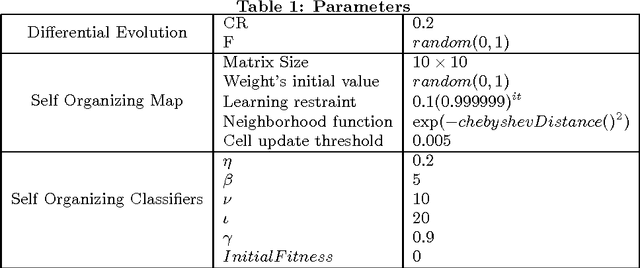

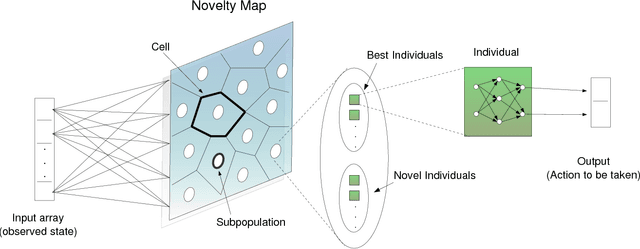

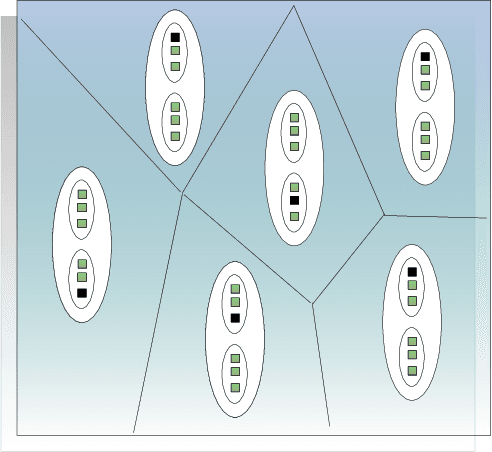

Self Organizing Classifiers and Niched Fitness

Nov 20, 2018

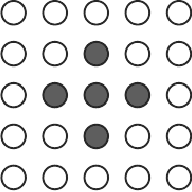

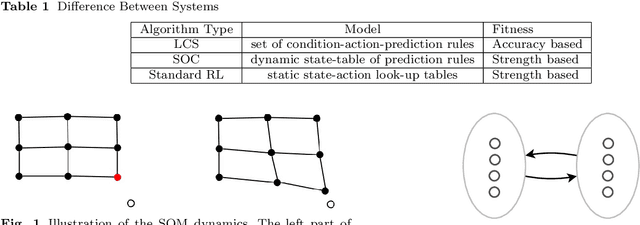

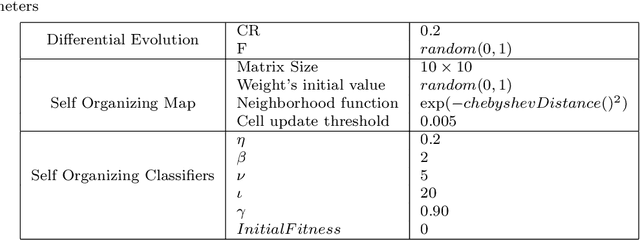

Abstract: Learning classifier systems are adaptive learning systems that have been widely applied in a multitude of application domains. However, some generalization problems remain unsolved. The hurdle is that fitness and niching pressures are difficult to balance. Here, a new algorithm called Self Organizing Classifiers is proposed, which approaches this problem from a different perspective. Instead of balancing the pressures, it separates them, so no balance is necessary. In fact, the proposed algorithm possesses a dynamical population structure that self-organizes to better project the input space into a map. The niched fitness concept is defined along with its dynamical population structure; both are indispensable for understanding the proposed method. Promising results are shown on two continuous multi-step problems, one of which is more challenging than previous problems of this class in the literature.

* arXiv admin note: text overlap with arXiv:1811.08225
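
The separation of pressures can be illustrated with a small hypothetical sketch: a 1-D self-organizing map handles where the niches are (structure), while each node keeps its own action classifiers whose fitness is only ever compared within that node (niched fitness). The class `NichedSOM`, the toy task where action 1 is rewarded iff x > 0.5, the epsilon-greedy exploration and every parameter value are assumptions for illustration, not the published algorithm.

```python
import math
import random

class NichedSOM:
    """Hypothetical sketch: a 1-D self-organizing map whose nodes each hold
    their own action classifiers. Fitness is compared only inside a node."""

    def __init__(self, n_nodes=5, n_actions=2, lr=0.1, sigma=0.5,
                 beta=0.1, eps=0.2, seed=0):
        self.w = [(i + 0.5) / n_nodes for i in range(n_nodes)]
        self.fit = [[0.0] * n_actions for _ in range(n_nodes)]
        self.lr, self.sigma, self.beta, self.eps = lr, sigma, beta, eps
        self.rng = random.Random(seed)

    def winner(self, x):
        return min(range(len(self.w)), key=lambda i: abs(x - self.w[i]))

    def act(self, x, explore=True):
        node = self.winner(x)
        if explore and self.rng.random() < self.eps:
            return node, self.rng.randrange(len(self.fit[node]))
        f = self.fit[node]
        return node, max(range(len(f)), key=f.__getitem__)

    def learn(self, x, node, action, reward):
        # Niching pressure: the map structure moves toward the inputs it sees.
        for i in range(len(self.w)):
            h = math.exp(-((i - node) ** 2) / (2 * self.sigma ** 2))
            self.w[i] += self.lr * h * (x - self.w[i])
        # Fitness pressure: only the acting classifier of the winning node is
        # updated, so classifiers never compete with those of other nodes.
        f = self.fit[node]
        f[action] += self.beta * (reward - f[action])

# Toy single-step task (assumption): action 1 is rewarded iff x > 0.5.
som = NichedSOM()
for _ in range(2000):
    x = som.rng.random()
    node, a = som.act(x)
    som.learn(x, node, a, 1.0 if a == int(x > 0.5) else 0.0)
```

Because the map never uses fitness and the fitness update never moves the map, neither pressure has to be traded off against the other in this sketch.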

Self Organizing Classifiers: First Steps in Structured Evolutionary Machine Learning

Nov 20, 2018

Abstract: Learning classifier systems (LCSs) are evolutionary machine learning algorithms flexible enough to be applied to reinforcement, supervised and unsupervised learning problems with good performance. Recently, self organizing classifiers were proposed, which are similar to LCSs but have the advantage that their structured population requires no balance between niching and fitness pressure. However, more tests and analyses are required to verify their benefits. Here, a variation of the first algorithm is proposed which uses a parameterless self organizing map (SOM). This algorithm is applied, with good performance, to challenging problems such as big, noisy and dynamically changing continuous input-action mazes (including growing and compressing mazes). Moreover, a genetic operator is proposed which utilizes the topological information of the SOM's population structure, improving the results. Thus, the first steps in structured evolutionary machine learning are shown; nonetheless, the problems faced are already more difficult than state-of-the-art continuous input-action multi-step ones.
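
For reference, a parameterless SOM removes the annealed learning-rate and neighborhood schedules by scaling both with the current normalized error. The sketch below follows the PLSOM formulation as commonly stated: error eps = ||x - w_c||^2 / r, with r the largest squared error seen so far, a neighborhood width that grows with eps between `theta_min` and `beta`, and the update w_i += eps * h_ci * (x - w_i). Whether the paper uses exactly this variant is an assumption, and the 1-D node grid and the `beta` and `theta_min` values are illustrative.

```python
import math
import random

def sq_dist(a, b):
    return sum((u - v) ** 2 for u, v in zip(a, b))

class PLSOM:
    """Sketch of a parameterless SOM (PLSOM-style) on a 1-D grid of nodes."""

    def __init__(self, n_nodes, dim, beta=2.0, theta_min=0.5, seed=0):
        rng = random.Random(seed)
        self.w = [[rng.random() for _ in range(dim)] for _ in range(n_nodes)]
        self.beta, self.theta_min = beta, theta_min
        self.r = 0.0  # largest squared quantization error seen so far

    def winner(self, x):
        return min(range(len(self.w)), key=lambda i: sq_dist(x, self.w[i]))

    def update(self, x):
        c = self.winner(x)
        err = sq_dist(x, self.w[c])
        self.r = max(self.r, err)
        eps = err / self.r if self.r > 0 else 0.0  # normalized error in [0, 1]
        # Neighborhood width grows with the error instead of following a schedule.
        theta = ((self.beta - self.theta_min) * math.log(1 + eps * (math.e - 1))
                 + self.theta_min)
        for i, wi in enumerate(self.w):
            h = math.exp(-((i - c) ** 2) / theta ** 2)
            for k in range(len(wi)):
                wi[k] += eps * h * (x[k] - wi[k])  # step size is eps * h, no learning rate
        return eps
```

The first call always returns 1.0, since r starts at that first error; afterwards large surprises produce large, wide updates and familiar inputs produce small, local ones, which is what lets such a map keep adapting in mazes that grow or shrink.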

Contingency Training

Nov 20, 2018

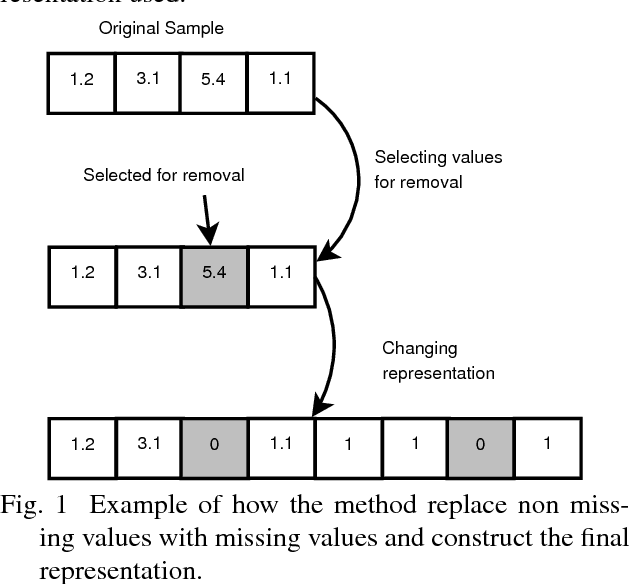

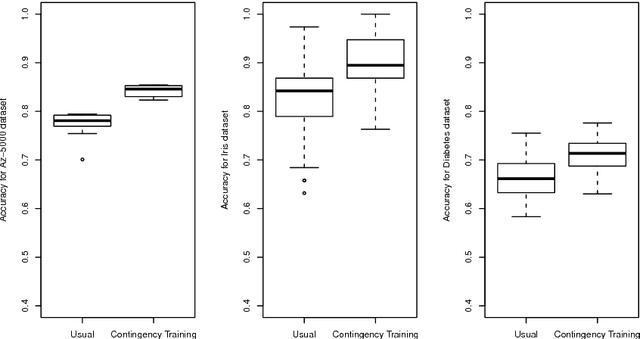

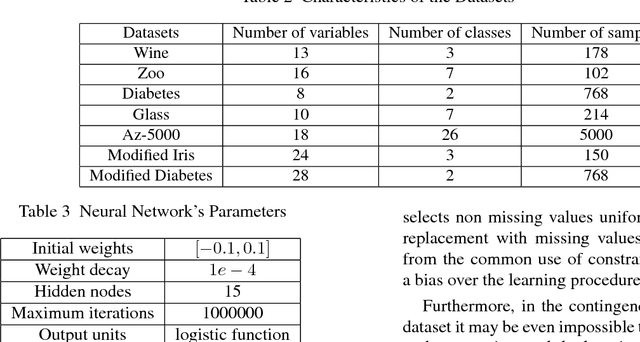

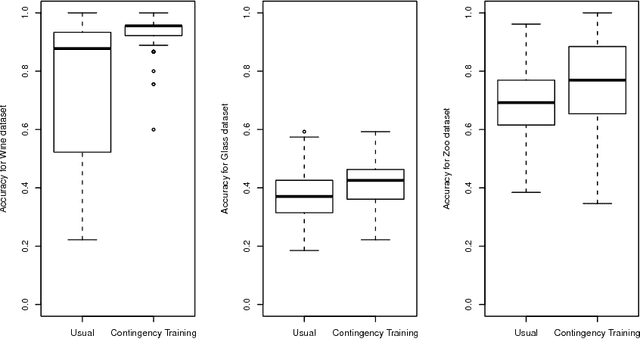

Abstract: When applied to high-dimensional datasets, feature selection algorithms might still leave dozens of irrelevant variables in the dataset. Therefore, even after feature selection has been applied, classifiers must be prepared for the presence of irrelevant variables. This paper investigates a new training method called Contingency Training, which increases accuracy as well as robustness against irrelevant attributes. Contingency training is classifier-independent. By subsampling and removing information from each sample, it creates a set of constraints. These constraints help the method automatically find proper importance weights for the dataset's features. Experiments are conducted with contingency training applied to neural networks over traditional datasets as well as datasets with additional irrelevant variables. In all tests, contingency training surpassed the unmodified training on datasets with irrelevant variables and even slightly outperformed it when only a few or no irrelevant variables were present.
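
One possible reading of "subsampling and removing information from each sample" is an augmentation step that adds masked copies of every sample while keeping its label, so that a model of any type must learn which features still carry the class. The function `contingency_augment`, the independent per-feature masking, the fill value and the number of copies are all assumptions made for this sketch; the paper's actual procedure may differ.

```python
import random

def contingency_augment(X, y, copies=3, drop_prob=0.3, fill=0.0, seed=0):
    """Hypothetical sketch: return the original samples plus `copies` variants
    of each, where every feature is independently replaced by `fill` with
    probability `drop_prob`. Labels are kept, so the class must still be
    predicted from whatever information remains."""
    rng = random.Random(seed)
    X_aug, y_aug = [list(row) for row in X], list(y)
    for row, label in zip(X, y):
        for _ in range(copies):
            X_aug.append([fill if rng.random() < drop_prob else v for v in row])
            y_aug.append(label)
    return X_aug, y_aug
```

The augmented lists can be passed to any classifier's usual training routine, which matches the classifier-independent framing of the abstract.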

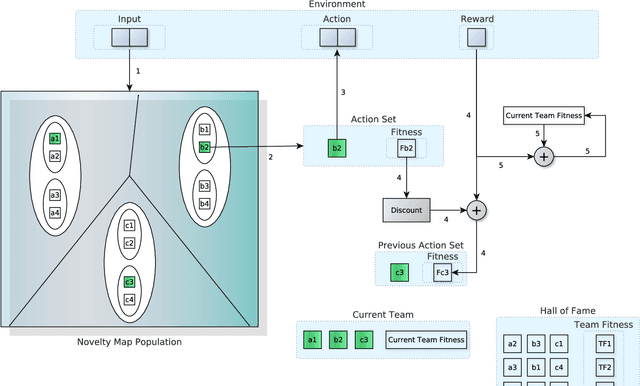

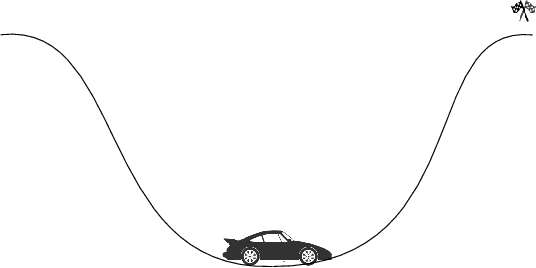

Novelty-organizing team of classifiers in noisy and dynamic environments

Sep 19, 2018

Abstract: In the real world, the environment is constantly changing and the input variables are affected by noise. However, few algorithms have been shown to work under those circumstances. Here, Novelty-Organizing Team of Classifiers (NOTC) is applied to the continuous action mountain car as well as two variations of it: a noisy mountain car and an unstable weather mountain car. These problems take noise and changing problem dynamics into account, respectively. Moreover, NOTC is compared with NeuroEvolution of Augmenting Topologies (NEAT) on these problems, revealing a trade-off between the approaches. While NOTC achieves the best performance in all of the problems, NEAT needs fewer trials to converge. It is demonstrated that NOTC achieves better performance because of its division of the input space (creating easier problems). Unfortunately, this division of the input space also requires some time to bootstrap.
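
The idea of dividing the input space by novelty can be sketched as a map that opens a new cell, with its own untrained controller slot, whenever an input lies farther than a threshold from every existing cell. `NoveltyMap`, the Euclidean distance and the fixed `threshold` are hypothetical choices for illustration; NOTC's actual novelty map and team structure are not specified in the abstract.

```python
import math

class NoveltyMap:
    """Hypothetical sketch of novelty-based input-space division: a new cell is
    created whenever an input is farther than `threshold` from every existing
    cell centre; each cell owns a (here empty) controller slot."""

    def __init__(self, threshold):
        self.threshold = threshold
        self.centres = []
        self.controllers = []

    def assign(self, x):
        if self.centres:
            i = min(range(len(self.centres)),
                    key=lambda j: math.dist(x, self.centres[j]))
            if math.dist(x, self.centres[i]) <= self.threshold:
                return i  # familiar input: reuse the nearest cell
        # Novel input: open a new cell with an untrained controller.
        self.centres.append(list(x))
        self.controllers.append(None)  # placeholder for a per-cell policy
        return len(self.centres) - 1
```

For a mountain car state (position, velocity), each call to `assign` returns the index of the cell whose controller should act. Every new cell starts with an empty controller, which gives one intuition for why such a division needs time to bootstrap.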
