Hiro Taiyo Hamada

Psychological Concept Neurons: Can Neural Control Bias Probing and Shift Generation in LLMs?

Apr 13, 2026

Abstract: Using psychological constructs such as the Big Five, large language models (LLMs) can imitate specific personality profiles and predict a user's personality. While LLMs can exhibit behaviors consistent with these constructs, it remains unclear where and how they are represented inside the model and how they relate to behavioral outputs. To address this gap, we focus on questionnaire-operationalized Big Five concepts, analyze the formation and localization of their internal representations, and use interventions to examine how these representations relate to behavioral outputs. In our experiment, we first use probing to examine where Big Five information emerges across model depth. We then identify neurons that respond selectively to each Big Five concept and test whether enhancing or suppressing their activations can bias latent representations and label generation in intended directions. We find that Big Five information becomes rapidly decodable in early layers and remains detectable through the final layers, while concept-selective neurons are most prevalent in mid layers and exhibit limited overlap across domains. Interventions on these neurons consistently shift probe readouts toward targeted concepts, with targeted success rates exceeding 0.8 for some concepts, indicating that the model's internal separation of Big Five personality traits can be causally steered. At the label-generation level, the same interventions often bias generated label distributions in the intended directions, but the effects are weaker, more concept-dependent, and often accompanied by cross-trait spillover, indicating that comparable control over generated labels is difficult even with interventions on a large fraction of concept-selective neurons. Overall, our findings reveal a gap between representational control and behavioral control in LLMs.
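The abstract does not say how concept-selective neurons are scored or how their activations are enhanced or suppressed. The following is a minimal, hypothetical sketch in plain Python under stated assumptions: (1) a neuron's selectivity for a concept is the difference between its mean activation on prompts for that concept and on prompts for all other concepts, divided by the pooled standard deviation; (2) an intervention multiplies the selected neurons' activations by a factor (above 1 to enhance, below 1 to suppress); and (3) overlap between two concepts' neuron sets is measured with Jaccard similarity. The function names `selectivity_scores`, `top_k_neurons`, `intervene`, and `jaccard`, and the toy activation values, are illustrative assumptions, not the authors' method.

```python
from statistics import mean, pstdev


def selectivity_scores(acts_by_concept, target):
    """Hypothetical score per neuron: (mean on target - mean on others) / pooled std.

    acts_by_concept maps a concept label to a list of activation vectors,
    one vector per prompt, all of the same length (one entry per neuron).
    """
    on = acts_by_concept[target]
    off = [v for c, vs in acts_by_concept.items() if c != target for v in vs]
    scores = []
    for j in range(len(on[0])):
        a = [v[j] for v in on]
        b = [v[j] for v in off]
        spread = pstdev(a + b) or 1e-8  # avoid dividing by zero for constant neurons
        scores.append((mean(a) - mean(b)) / spread)
    return scores


def top_k_neurons(scores, k):
    """Indices of the k neurons with the highest selectivity scores."""
    return sorted(range(len(scores)), key=lambda j: scores[j], reverse=True)[:k]


def intervene(activation, neurons, factor):
    """Return a copy of the activation vector with the chosen neurons scaled.

    factor > 1 enhances, 0 <= factor < 1 suppresses (assumed scheme).
    """
    out = list(activation)
    for j in neurons:
        out[j] *= factor
    return out


def jaccard(a, b):
    """Overlap between two neuron-index sets (1.0 = identical, 0.0 = disjoint)."""
    a, b = set(a), set(b)
    return len(a & b) / len(a | b) if a | b else 0.0


# Toy activations (made up for illustration): 2 prompts per concept, 3 neurons.
acts = {
    "extraversion": [[2.0, 0.1, 0.5], [1.8, 0.0, 0.4]],
    "neuroticism": [[0.2, 1.9, 0.5], [0.1, 2.1, 0.6]],
}
extra = top_k_neurons(selectivity_scores(acts, "extraversion"), 1)
neuro = top_k_neurons(selectivity_scores(acts, "neuroticism"), 1)
print(extra, neuro, jaccard(extra, neuro))
print(intervene([1.0, 1.0, 1.0], extra, 2.0))  # enhance the extraversion neuron
print(intervene([1.0, 1.0, 1.0], extra, 0.0))  # suppress (ablate) it
```

In a real model the scaling would be applied inside the forward pass at the chosen layer (for example, with a forward hook) rather than on a detached list; the toy version only shows the selection and scaling bookkeeping.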

AI agents for facilitating social interactions and wellbeing

Feb 26, 2022

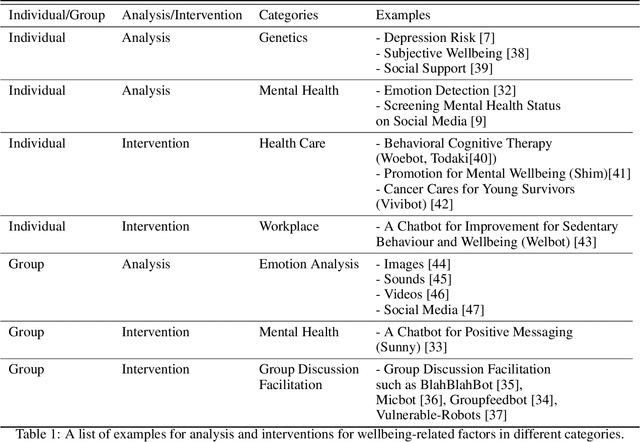

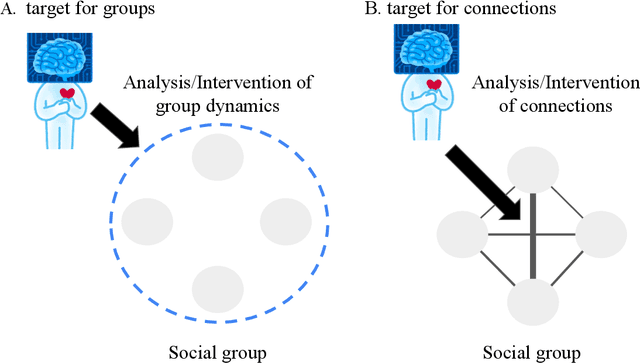

Abstract: Wellbeing AI has become a new trend in supporting individuals' mental health, organizational health, and the flourishing of our societies. Various applications of wellbeing AI have been introduced into our daily lives. While social relationships within groups are a critical factor for wellbeing, the development of wellbeing AI for social interactions remains relatively scarce. In this paper, we provide an overview of the mediating role of AI-augmented agents in social interactions. First, we discuss a two-dimensional framework for classifying wellbeing AI: individual/group and analysis/intervention. Furthermore, because positive social relationships are key to human wellbeing, wellbeing AI extends to intervening in human-human social relationships. Such intervention may raise technical and ethical challenges. We discuss the opportunities and challenges of a relational approach to wellbeing AI for promoting wellbeing in our societies.
