Hieu X. Le

Transfer learning with class-weighted and focal loss function for automatic skin cancer classification

Sep 13, 2020

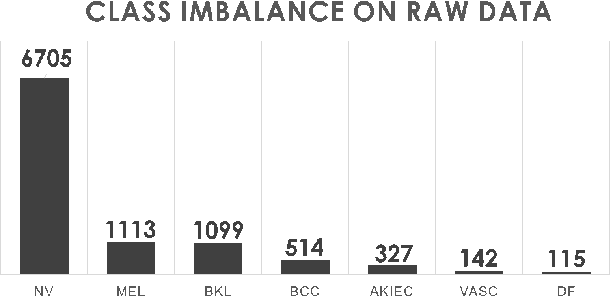

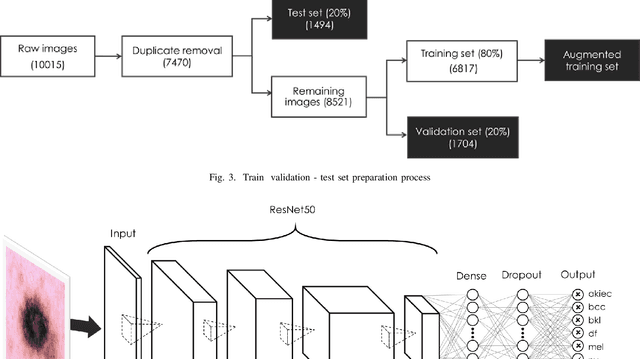

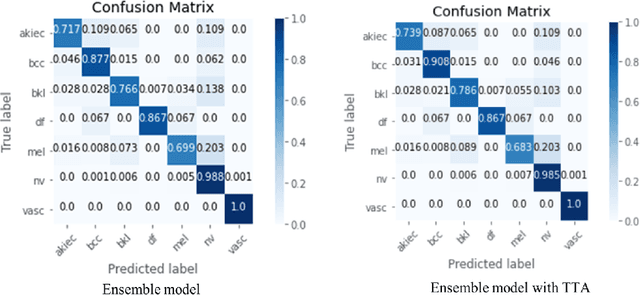

Abstract: Skin cancer is among the three most common cancers worldwide. Among the different skin cancer types, melanoma is particularly dangerous because of its ability to metastasize. Early detection is the key to successful skin cancer treatment. However, skin cancer diagnosis remains a challenge, even for experienced dermatologists, because benign and malignant lesions closely resemble each other. To aid dermatologists in skin cancer diagnosis, we developed a deep learning system that can effectively and automatically classify skin lesions into one of seven classes: (1) Actinic Keratoses, (2) Basal Cell Carcinoma, (3) Benign Keratosis, (4) Dermatofibroma, (5) Melanocytic Nevi, (6) Melanoma, (7) Vascular Skin Lesion. The HAM10000 dataset was used to train the system. The classification pipeline is an end-to-end deep learning process that applies transfer learning with multiple pre-trained models, combined with class-weighted and focal loss. Our ensemble of modified ResNet50 models classifies skin lesions into one of the seven classes with top-1, top-2, and top-3 accuracy of 93%, 97%, and 99%, respectively. This deep learning system can potentially be integrated into computer-aided diagnosis systems that support dermatologists in skin cancer diagnosis.
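To make the loss named in the abstract concrete, the following is a minimal sketch of a class-weighted focal loss for a single sample, in the standard form FL = -w_t (1 - p_t)^gamma log(p_t). The inverse-frequency weighting scheme, the value gamma = 2.0, and all function names are assumptions for illustration; the abstract does not state which weights or hyperparameters the authors used.

```python
import math
from collections import Counter


def inverse_frequency_weights(labels, num_classes):
    """Assumed weighting scheme: n_samples / (num_classes * class_count)."""
    counts = Counter(labels)
    n = len(labels)
    return [
        n / (num_classes * counts[c]) if counts[c] else 0.0
        for c in range(num_classes)
    ]


def softmax(logits):
    """Numerically stable softmax over a list of logits."""
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def weighted_focal_loss(logits, target, class_weights, gamma=2.0):
    """Focal loss for one sample: -w_t * (1 - p_t)^gamma * log(p_t).

    With gamma = 0 and all weights equal to 1 this reduces to
    ordinary cross-entropy.
    """
    p_t = softmax(logits)[target]
    return -class_weights[target] * (1.0 - p_t) ** gamma * math.log(p_t)
```

The (1 - p_t)^gamma factor shrinks the loss of well-classified samples, while the class weight raises the contribution of rare classes, which is why the two are often paired on imbalanced datasets.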

Interpretation of smartphone-captured radiographs utilizing a deep learning-based approach

Sep 13, 2020

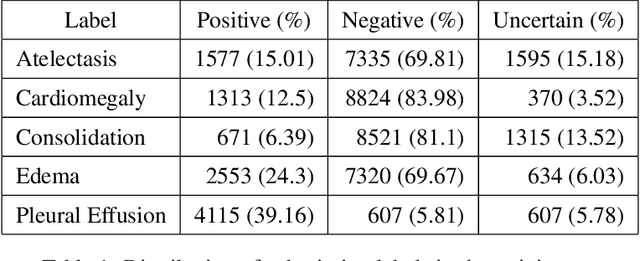

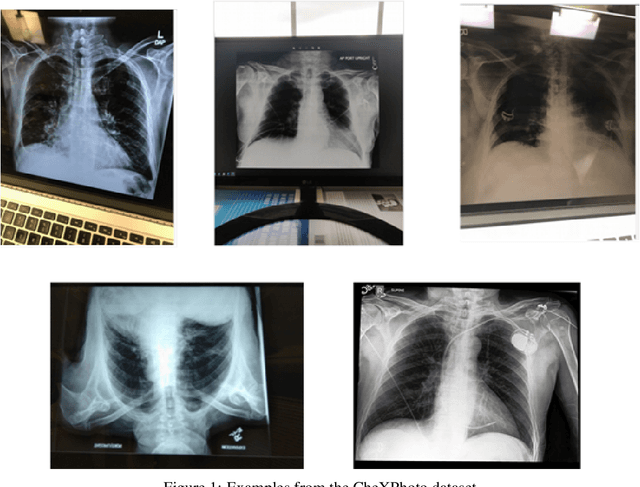

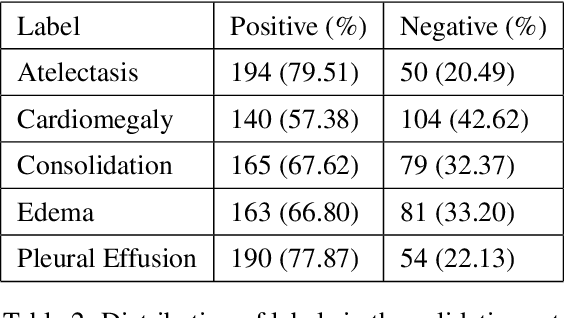

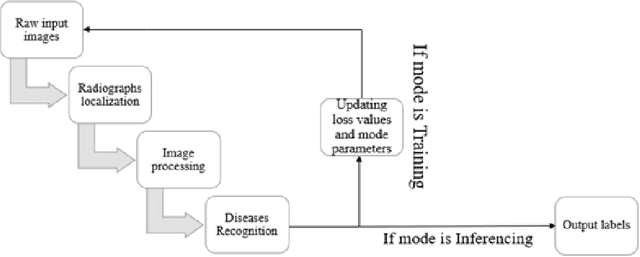

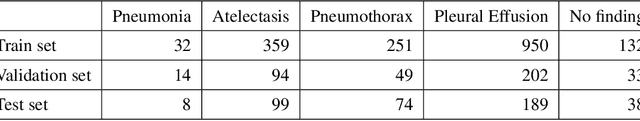

Abstract: Computer-aided diagnostic systems (CADs) that can automatically and effectively interpret medical images have recently attracted growing academic attention. For radiographs, several deep learning-based systems or models have been developed for multi-label disease recognition. However, none of them has been trained to work on smartphone-captured chest radiographs. In this study, we propose a system comprising a sequence of deep learning-based neural networks trained on the newly released CheXphoto dataset to address this gap. The proposed approach achieved promising results of 0.684 in AUC and 0.699 in average F1 score. To the best of our knowledge, this is the first published study shown to be capable of processing smartphone-captured radiographs.
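As an illustration of how an average F1 score can be computed for a multi-label task, here is a minimal sketch that computes F1 per label and then takes the unweighted mean (macro averaging). The abstract does not say which averaging the authors used, so macro averaging and the function names are assumptions.

```python
def f1_for_label(y_true, y_pred, label):
    """F1 for one label column of binary multi-label annotations."""
    pairs = [(t[label], p[label]) for t, p in zip(y_true, y_pred)]
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)
    denom = 2 * tp + fp + fn
    # Convention assumed here: F1 is 0 when the label never occurs
    # in either the ground truth or the predictions.
    return 2 * tp / denom if denom else 0.0


def macro_f1(y_true, y_pred):
    """Unweighted mean of the per-label F1 scores."""
    n_labels = len(y_true[0])
    scores = [f1_for_label(y_true, y_pred, k) for k in range(n_labels)]
    return sum(scores) / n_labels
```

Each row is one radiograph and each column one finding, so a single image can be positive for several labels at once.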

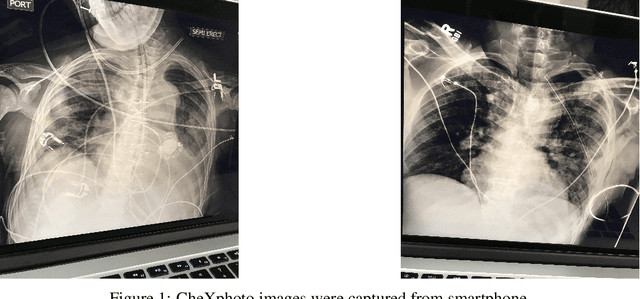

A novel approach to remove foreign objects from chest X-ray images

Aug 16, 2020

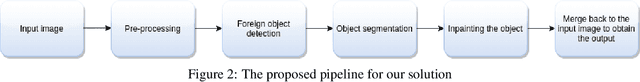

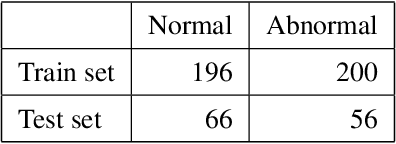

Abstract: We propose a deep learning approach for inpainting foreign objects in smartphone-camera-captured chest radiographs, using the CheXphoto dataset. Foreign objects, which can significantly degrade the quality of a computer-aided diagnostic prediction, are captured under various settings. In this paper, we combine multiple methods to handle both the removal of foreign objects and the inpainting of chest radiographs. First, an object detection model is trained to separate the foreign objects from the given image. Next, the binary mask of each object is extracted using a segmentation model. Each pair of binary mask and extracted object is then used for inpainting. Finally, the inpainted regions are merged back into the original image, producing a clean output free of foreign objects. We achieved state-of-the-art accuracy. The experimental results suggest new possible applications of this method for detection in chest X-ray images.
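The four stages described in the abstract (detect, segment, inpaint, merge) can be sketched as a small pipeline. This is only a structural outline under stated assumptions: `detect`, `segment`, and `inpaint` are hypothetical callables standing in for the trained models, images are plain nested lists of pixel values, and masks are full-size binary grids. None of these names or data layouts come from the paper.

```python
from typing import Callable, List, Tuple

Image = List[List[int]]
Box = Tuple[int, int, int, int]  # hypothetical (top, left, bottom, right)


def merge_inpainted(original: Image, inpainted: Image, mask: Image) -> Image:
    """Take inpainted pixels where mask is 1, original pixels elsewhere."""
    return [
        [inp if m else org for org, inp, m in zip(o_row, i_row, m_row)]
        for o_row, i_row, m_row in zip(original, inpainted, mask)
    ]


def remove_foreign_objects(
    image: Image,
    detect: Callable[[Image], List[Box]],
    segment: Callable[[Image, Box], Image],
    inpaint: Callable[[Image, Image], Image],
) -> Image:
    """Run detect -> segment -> inpaint -> merge for each detected object."""
    result = [row[:] for row in image]  # copy so the input is untouched
    for box in detect(result):
        mask = segment(result, box)      # binary mask of one object
        filled = inpaint(result, mask)   # model output for the masked area
        result = merge_inpainted(result, filled, mask)
    return result
```

Merging only the masked pixels keeps every pixel outside the detected objects identical to the original radiograph.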
