Heewon Park

Fed-ADE: Adaptive Learning Rate for Federated Post-adaptation under Distribution Shift

Mar 01, 2026

Abstract: Federated learning (FL) in post-deployment settings must adapt to non-stationary data streams across heterogeneous clients without access to ground-truth labels. A major challenge is learning rate selection under client-specific, time-varying distribution shifts, where fixed learning rates often lead to underfitting or divergence. We propose Fed-ADE (Federated Adaptation with Distribution Shift Estimation), an unsupervised federated adaptation framework that leverages lightweight estimators of distribution dynamics. Specifically, Fed-ADE employs uncertainty dynamics estimation to capture changes in predictive uncertainty and representation dynamics estimation to detect covariate-level feature drift, combining them into a per-client, per-timestep adaptive learning rate. We provide theoretical analyses showing that our dynamics estimation approximates the underlying distribution shift and yields dynamic regret and convergence guarantees. Experiments on image and text benchmarks under diverse distribution shifts (label and covariate) demonstrate consistent improvements over strong baselines. These results highlight that distribution shift-aware adaptation enables effective and robust federated post-adaptation under real-world non-stationarity.

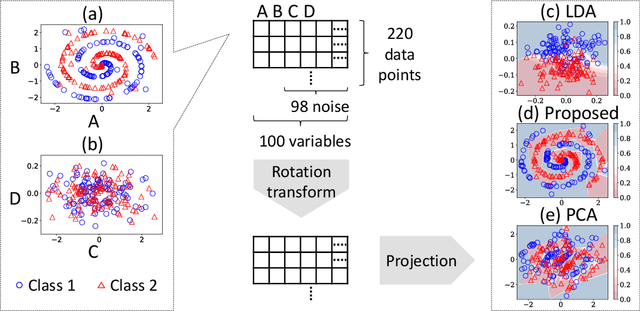

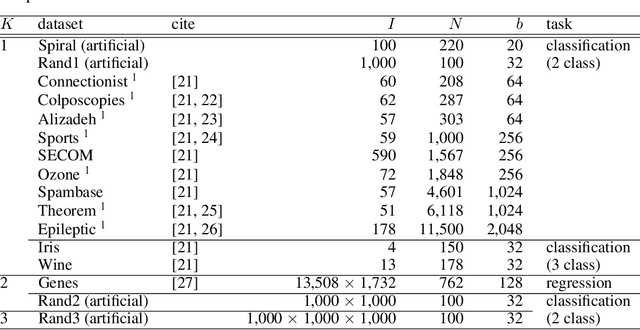

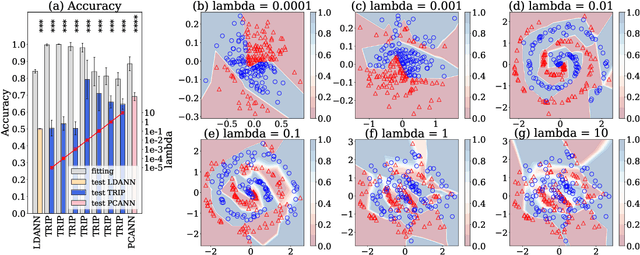

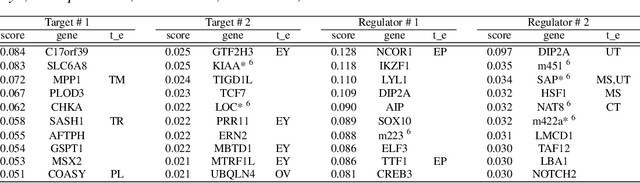

Linear Tensor Projection Revealing Nonlinearity

Jul 08, 2020

Abstract: Dimensionality reduction is an effective method for learning high-dimensional data, which can provide better understanding of decision boundaries in human-readable low-dimensional subspace. Linear methods, such as principal component analysis and linear discriminant analysis, make it possible to capture the correlation between many variables; however, there is no guarantee that the correlations that are important in predicting data can be captured. Moreover, if the decision boundary has strong nonlinearity, the guarantee becomes increasingly difficult. This problem is exacerbated when the data are matrices or tensors that represent relationships between variables. We propose a learning method that searches for a subspace that maximizes the prediction accuracy while retaining as much of the original data information as possible, even if the prediction model in the subspace has strong nonlinearity. This makes it easier to interpret the mechanism of the group of variables behind the prediction problem that the user wants to know. We show the effectiveness of our method by applying it to various types of data including matrices and tensors.
