Hamimah Ujir

The Analysis of Facial Feature Deformation using Optical Flow Algorithm

Oct 23, 2020

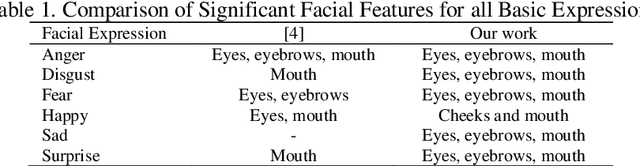

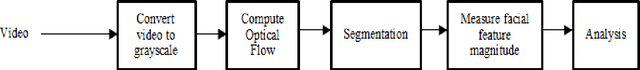

Abstract: Facial features deform according to the intended facial expression. Specific facial features are associated with specific facial expressions, e.g., a happy expression involves deformation of the mouth. This paper presents a study of facial feature deformation for each facial expression using an optical flow algorithm, with the face segmented into three regions of interest. The deformation of facial features shows the relation between facial features and facial expression. Based on the experiments, the deformations of the eyes and mouth are significant in all expressions except happy. For the happy expression, the cheeks and mouth are the significant regions. This work also suggests that the intensities of different facial features vary in how they contribute to the recognition of different facial expression intensities. The maximum magnitude across all expressions is shown by the mouth for the surprise expression, at 9 × 10⁻⁴, while the minimum magnitude is shown by the mouth for the angry expression, at 0.4 × 10⁻⁴.

* 8 pages
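The per-region comparison described in this abstract can be illustrated with a minimal sketch. It assumes the optical flow field is already available as per-pixel `(u, v)` vectors and that each region of interest is a rectangle. The function name `mean_flow_magnitude`, the region boxes, and the toy flow values are hypothetical and are not taken from the paper.

```python
import math

def mean_flow_magnitude(flow, region):
    """Average magnitude of (u, v) flow vectors inside a rectangular region.

    flow   : 2-D list of (u, v) tuples, indexed as flow[row][col]
    region : (top, left, bottom, right), with bottom/right exclusive
    """
    top, left, bottom, right = region
    magnitudes = [
        math.hypot(u, v)
        for row in flow[top:bottom]
        for (u, v) in row[left:right]
    ]
    if not magnitudes:
        raise ValueError("region contains no pixels")
    return sum(magnitudes) / len(magnitudes)

# Toy 4x4 flow field (assumption): the top half is static, the bottom half moves.
flow = [[(0.0, 0.0)] * 4 for _ in range(2)] + [[(3.0, 4.0)] * 4 for _ in range(2)]

# Hypothetical regions of interest, given as (top, left, bottom, right).
regions = {"eyes": (0, 0, 2, 4), "mouth": (2, 0, 4, 4)}

per_region = {name: mean_flow_magnitude(flow, box) for name, box in regions.items()}
most_deformed = max(per_region, key=per_region.get)
```

Ranking the regions by mean magnitude is one simple way to decide which facial feature deforms most for a given expression. The paper may aggregate the flow differently.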

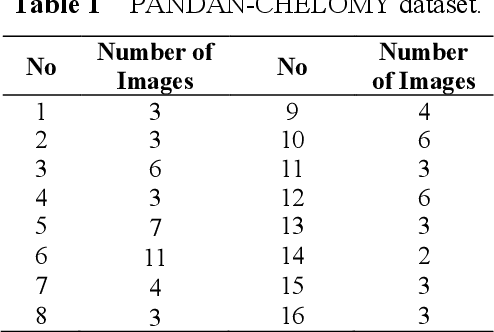

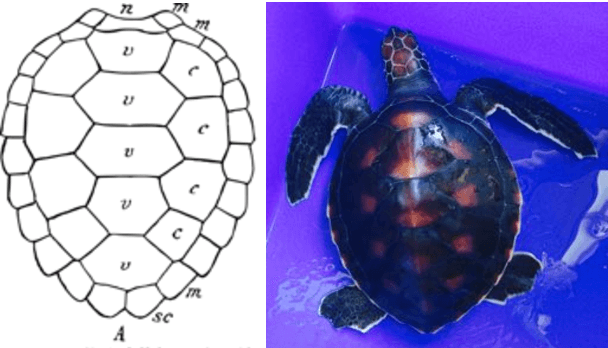

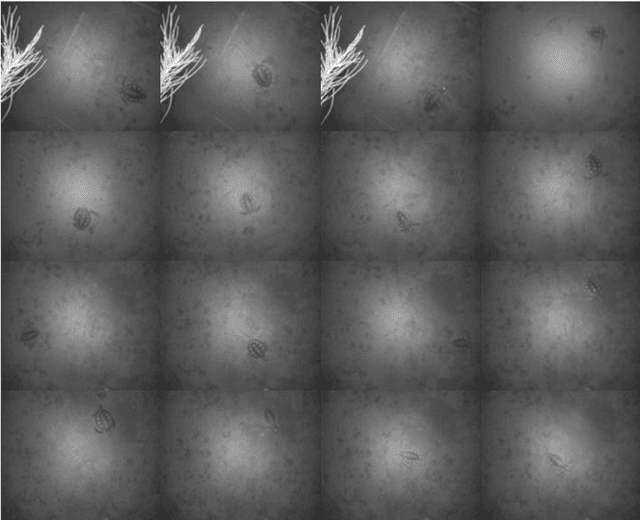

Towards Automated Biometric Identification of Sea Turtles (Chelonia mydas)

Sep 25, 2019

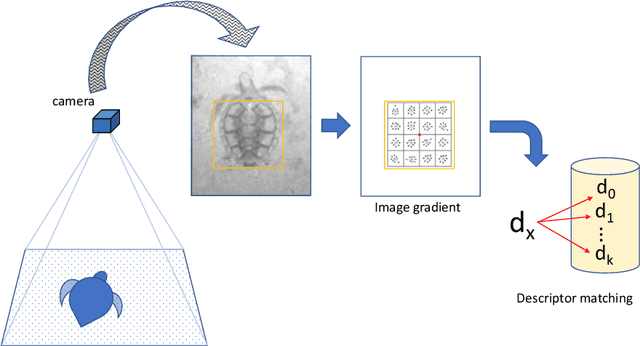

Abstract: Passive biometric identification enables wildlife monitoring with minimal disturbance. Using a motion-activated camera placed at an elevated position and facing downwards, we collected images of sea turtle carapaces, each belonging to one of sixteen Chelonia mydas juveniles. We then learned co-variant and robust image descriptors from these images, enabling indexing and retrieval. In this work, we present several classification results for sea turtle carapaces using the learned image descriptors. We found that a template-based descriptor, i.e., Histogram of Oriented Gradients (HOG), performed considerably better during classification than keypoint-based descriptors. For our dataset, a high-dimensional descriptor is necessary because the carapace images contain minimal gradient and color information. Using HOG, we obtained an average classification accuracy of 65%.

* This is the draft version. Final version published in Journal of ICT Research and Applications, [S.l.], v. 12, n. 3, p. 256-266, Dec. 2018.

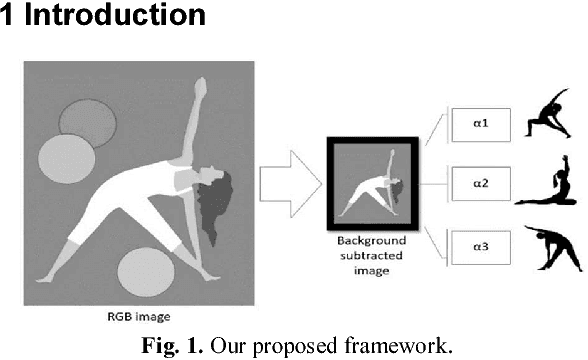

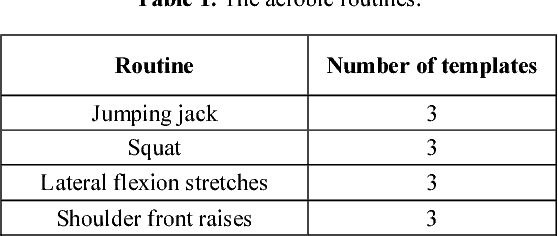

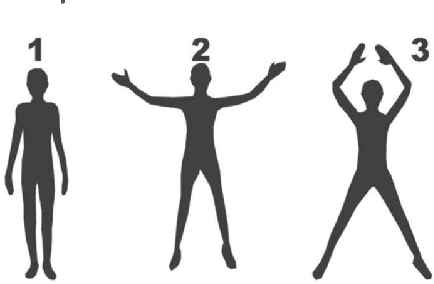

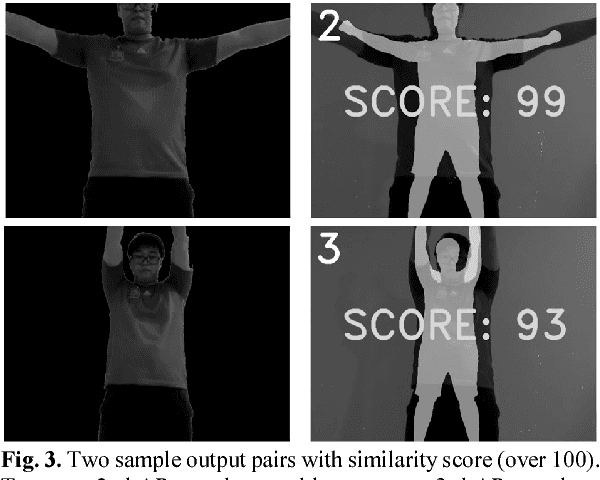

Assessing Performance of Aerobic Routines using Background Subtraction and Intersected Image Region

Oct 03, 2018

Abstract: It is recommended that a novice engage a trained and experienced person, i.e., a coach, before starting an unfamiliar aerobic or weight routine. The coach's task is to provide real-time feedback to ensure that the routine is performed correctly. This greatly reduces the risk of injury and maximises physical gains. We present a simple image similarity measure based on intersected image region to assess a subject's performance of an aerobic routine. The method is implemented inside an Augmented Reality (AR) desktop app that employs a single RGB camera to capture still images of the subject as he or she progresses through the routine. The background-subtracted body pose image is compared against the exemplar body pose image (i.e., the AR template) at specific intervals. Based on a limited dataset, our pose matching function is reported to have an accuracy of 93.67%.
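A similarity measure of this kind can be sketched as follows. The abstract does not give the formula, so this example assumes one plausible form: the fraction of template foreground pixels that are also foreground in the background-subtracted pose mask. The names `intersection_score` and `pose_matches`, the threshold of 0.8, and the toy masks are hypothetical.

```python
def intersection_score(pose_mask, template_mask):
    """Fraction of template foreground pixels also covered by the pose mask.

    Both masks are 2-D lists of 0/1 values with identical dimensions.
    """
    overlap = 0
    template_area = 0
    for pose_row, tmpl_row in zip(pose_mask, template_mask):
        for p, t in zip(pose_row, tmpl_row):
            if t:
                template_area += 1
                if p:
                    overlap += 1
    if template_area == 0:
        raise ValueError("template mask has no foreground pixels")
    return overlap / template_area

def pose_matches(pose_mask, template_mask, threshold=0.8):
    """Accept the pose when enough of the template is covered (threshold is an assumption)."""
    return intersection_score(pose_mask, template_mask) >= threshold

# Toy masks (assumption): the subject's lower body is shifted one pixel to the right.
template = [[0, 1, 1, 0],
            [0, 1, 1, 0],
            [0, 1, 1, 0]]
pose = [[0, 1, 1, 0],
        [0, 1, 1, 0],
        [0, 0, 1, 1]]

score = intersection_score(pose, template)  # 5 of 6 template pixels are covered
```

This form ignores pose pixels that fall outside the template. Dividing by the union of the two masks instead (intersection over union) would also penalise those pixels.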

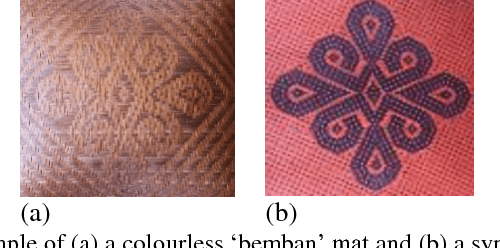

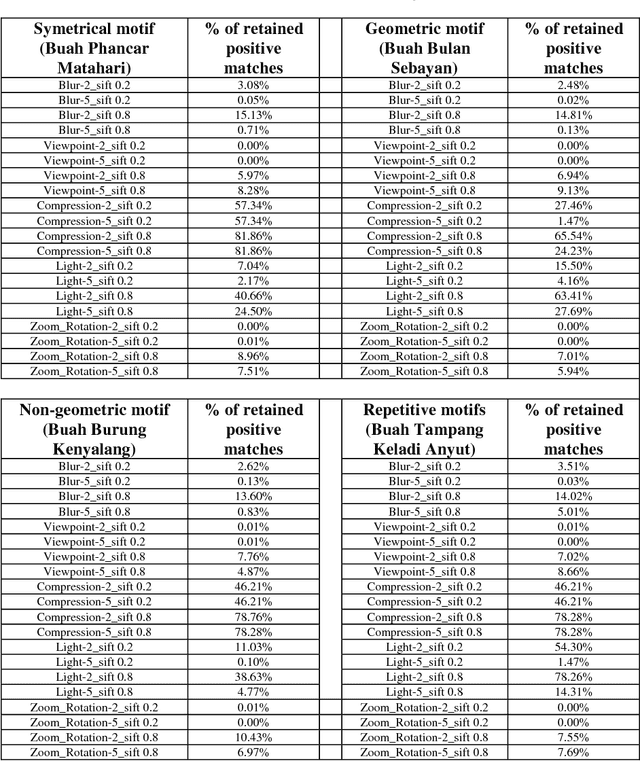

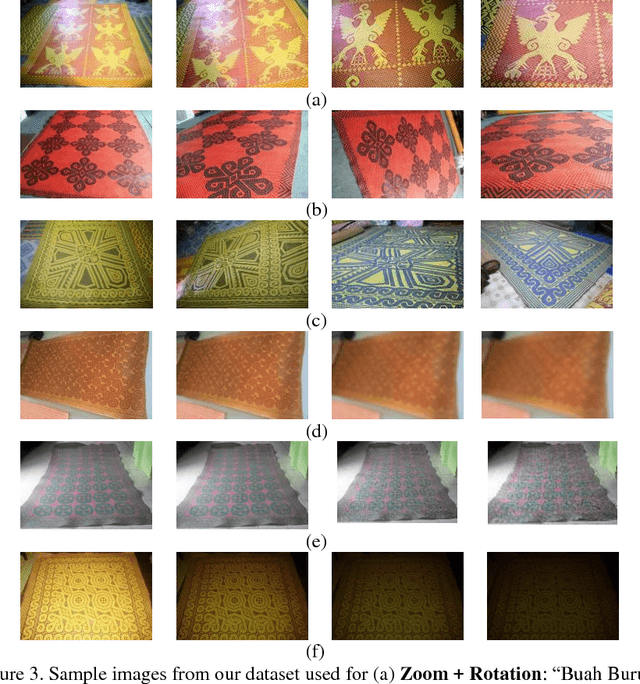

Performance Evaluation of SIFT Descriptor against Common Image Deformations on Iban Plaited Mat Motifs

Oct 03, 2018

Abstract: Borneo's indigenous communities are blessed with a rich craft heritage. One such example is the Iban's plaited mat craft. There have been many efforts by UNESCO and the Sarawak Government to preserve and promote the craft. One such method is to develop a mobile app capable of recognising the different mat motifs. As a first step towards this aim, we present a novel image dataset consisting of seven mat motif classes. Each class possesses a unique variation of chevrons, diagonal shapes, and symmetrical, repetitive, geometric and non-geometric patterns. In this study, the performance of the Scale-Invariant Feature Transform (SIFT) descriptor is evaluated against five common image deformations, i.e., zoom and rotation, viewpoint, image blur, JPEG compression and illumination. Using our dataset, SIFT performed favourably on the test sequences for illumination changes, viewpoint changes, JPEG compression, and zoom and rotation. However, it did not perform well on the image blur test sequences, retaining an average of 1.61 percent of pairwise matches after blurring with a Gaussian kernel of radius 8.0.
