Gustavo Assunção

Approaching Metaheuristic Deep Learning Combos for Automated Data Mining

Oct 16, 2024

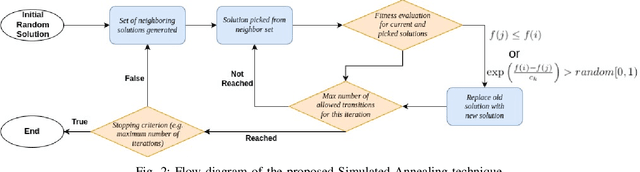

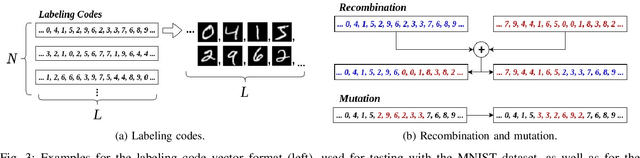

Abstract: Lack of data on which to perform experimentation is a recurring issue in many areas of research, particularly in machine learning. The inability of most automated data mining techniques to generalize to all types of data is inherently related to their dependence on those types, which renders them ineffective against anything slightly different. Metaheuristics are algorithms that attempt to optimize some solution independently of the type of data used, whilst classifiers and neural networks focus on feature extraction and dimensionality reduction to fit a model to data arranged in a particular way. Together, these two algorithmic fields encompass a set of characteristics that, when combined, seem capable of achieving data mining regardless of how the data is arranged. To this end, this work proposes a means of combining metaheuristic methods with conventional classifiers and neural networks to perform automated data mining. Experiments were performed on the MNIST dataset for handwritten digit recognition, and it was empirically observed that validation accuracy on a ground-truth labeled dataset is inadequate for correcting the labels of other, previously unseen data instances.
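The abstract does not describe the method in detail. The sketch below is only a rough illustration of the kind of combination it describes. A metaheuristic (here a basic genetic algorithm) searches over candidate labels for unlabeled points. Each candidate is scored by the validation accuracy of a simple classifier (here nearest-centroid) trained on the labeled data plus those candidate labels. The genetic algorithm, the nearest-centroid classifier, the 2-D toy points and all function names (`genetic_label_search`, `fitness`, `fit_centroids`, `predict`) are hypothetical choices for illustration. They are not the paper's implementation.

```python
import random
from typing import List, Sequence, Tuple

Point = Tuple[float, float]


def fit_centroids(xs: Sequence[Point], ys: Sequence[int], n_classes: int) -> List[Point]:
    """Nearest-centroid 'training': one mean point per class (origin if a class is empty)."""
    sums = [[0.0, 0.0] for _ in range(n_classes)]
    counts = [0] * n_classes
    for (a, b), y in zip(xs, ys):
        sums[y][0] += a
        sums[y][1] += b
        counts[y] += 1
    return [(s[0] / c, s[1] / c) if c else (0.0, 0.0) for s, c in zip(sums, counts)]


def predict(centroids: Sequence[Point], p: Point) -> int:
    """Assign p to the class whose centroid is closest (squared Euclidean distance)."""
    return min(range(len(centroids)),
               key=lambda k: (p[0] - centroids[k][0]) ** 2 + (p[1] - centroids[k][1]) ** 2)


def fitness(candidate, train_x, train_y, unl_x, val_x, val_y, n_classes) -> float:
    """Validation accuracy of a classifier trained on labeled data plus candidate-labeled data."""
    centroids = fit_centroids(list(train_x) + list(unl_x), list(train_y) + list(candidate), n_classes)
    hits = sum(predict(centroids, p) == y for p, y in zip(val_x, val_y))
    return hits / len(val_y)


def genetic_label_search(train_x, train_y, unl_x, val_x, val_y, n_classes,
                         pop_size=20, generations=30, mutation_rate=0.1, seed=0):
    """Evolve label assignments for unl_x, keeping the fitter half each generation (elitism)."""
    rng = random.Random(seed)
    n = len(unl_x)

    def score(c):
        return fitness(c, train_x, train_y, unl_x, val_x, val_y, n_classes)

    population = [[rng.randrange(n_classes) for _ in range(n)] for _ in range(pop_size)]
    for _ in range(generations):
        population.sort(key=score, reverse=True)
        elite = population[: pop_size // 2]  # pop_size >= 4 so two parents can be sampled
        children = []
        while len(elite) + len(children) < pop_size:
            p1, p2 = rng.sample(elite, 2)
            cut = rng.randrange(1, n) if n > 1 else 0  # one-point crossover
            child = p1[:cut] + p2[cut:]
            # Mutation: resample each label with probability mutation_rate.
            child = [rng.randrange(n_classes) if rng.random() < mutation_rate else g for g in child]
            children.append(child)
        population = elite + children
    best = max(population, key=score)
    return best, score(best)


if __name__ == "__main__":
    train_x = [(0.0, 0.0), (0.5, 0.2), (5.0, 5.0), (5.2, 4.8)]
    train_y = [0, 0, 1, 1]
    unl_x = [(0.3, 0.1), (4.9, 5.1)]  # intended labels: [0, 1]
    val_x, val_y = [(0.1, 0.2), (5.1, 5.0)], [0, 1]
    for labels in ([0, 1], [1, 0]):
        print(labels, fitness(labels, train_x, train_y, unl_x, val_x, val_y, 2))
    print(genetic_label_search(train_x, train_y, unl_x, val_x, val_y, 2))
```

On this toy data, the intended labels `[0, 1]` and the fully swapped labels `[1, 0]` both reach a validation accuracy of 1.0, so the fitness cannot tell them apart. This is one concrete way validation accuracy on ground-truth data can fall short as a signal for correcting other labels. It is consistent in spirit with the finding reported in the abstract, though it is not a reproduction of the MNIST experiments.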

Self-mediated exploration in artificial intelligence inspired by cognitive psychology

Feb 13, 2023

Abstract: Exploration of the physical environment is an indispensable precursor to data acquisition and enables knowledge generation via analytical or direct trialing. Artificial intelligence lacks the exploratory capabilities of even the most underdeveloped organisms, which hinders its autonomy and adaptability. Supported by cognitive psychology, this work links human behavior and artificial agents to promote self-development. In accordance with reported data, paradigms of epistemic and achievement emotion are embedded into machine-learning methodology, contingent on their impact on decision making. A study is subsequently designed to mirror previous human trials, which artificial agents are made to undergo repeatedly until convergence. Results show that the vast majority of agents learn a causal relationship between their internal states and exploration that matches the one reported for human counterparts. The ramifications of these findings are considered for both research into human cognition and the improvement of artificial intelligence.

Bio-Inspired Modality Fusion for Active Speaker Detection

Feb 28, 2020

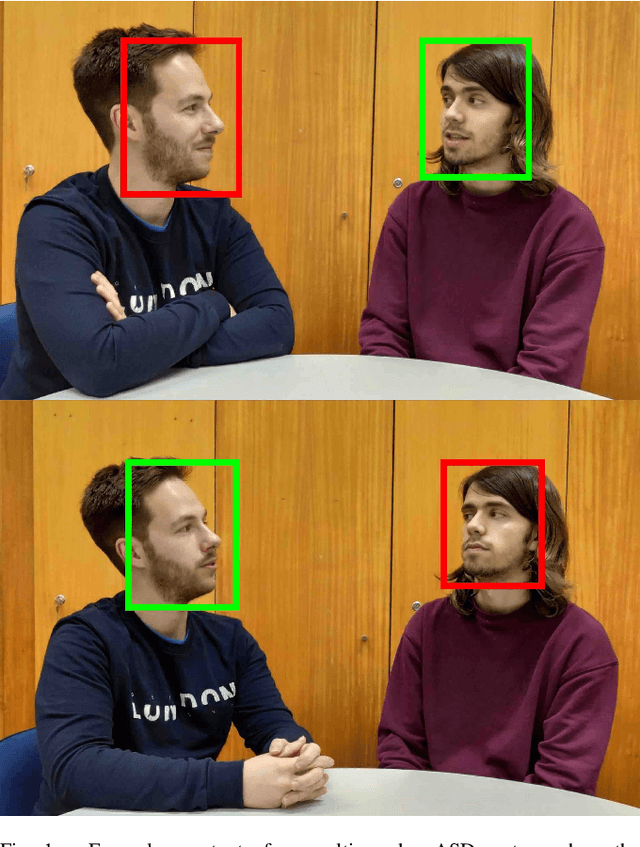

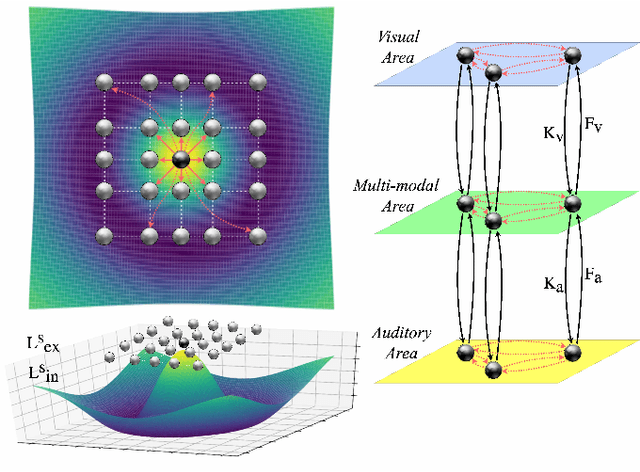

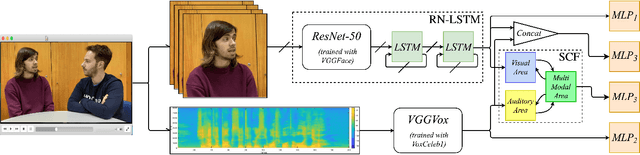

Abstract: Human beings have developed remarkable abilities to integrate information from various sensory sources by exploiting their inherent complementarity. Perceptual capabilities are therefore heightened, enabling, for instance, the well-known "cocktail party" and McGurk effects, i.e., speech disambiguation from a panoply of sound signals. This fusion ability is also key to refining the perception of sound source location, as in distinguishing whose voice is being heard in a group conversation. Furthermore, neuroscience has successfully identified the superior colliculus region of the brain as the one responsible for this modality fusion, and a handful of biological models have been proposed to model its underlying neurophysiological process. Drawing inspiration from one of these models, this paper presents a methodology for effectively fusing correlated auditory and visual information for active speaker detection. Such an ability has a wide range of applications, from teleconferencing systems to social robotics. The detection approach first routes auditory and visual information through two specialized neural network structures. The resulting embeddings are then fused via a novel layer based on the superior colliculus, whose topological structure emulates the spatial cross-mapping of neurons between unimodal perceptual fields. Validation employed two publicly available datasets, and the results confirmed and greatly surpassed initial expectations.
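The abstract does not give the fusion layer's equations. The sketch below is a generic multiplicative (outer-product) cross-modal fusion, offered only as a stand-in for the idea of cross-mapping two unimodal fields. It is not the paper's superior colliculus layer. The embedding sizes, the weight grid, the sigmoid read-out and the names `cross_modal_map` and `fuse_speaking_score` are all hypothetical.

```python
import math
from typing import List, Sequence


def cross_modal_map(audio: Sequence[float], visual: Sequence[float]) -> List[List[float]]:
    """Outer product: cell (i, j) couples audio unit i with visual unit j."""
    return [[a * v for v in visual] for a in audio]


def fuse_speaking_score(audio: Sequence[float], visual: Sequence[float],
                        weights: Sequence[Sequence[float]], bias: float = 0.0) -> float:
    """Weighted sum over the cross-modal map, squashed by a sigmoid into a (0, 1) score."""
    grid = cross_modal_map(audio, visual)
    z = bias + sum(w * g
                   for w_row, g_row in zip(weights, grid)
                   for w, g in zip(w_row, g_row))
    return 1.0 / (1.0 + math.exp(-z))


if __name__ == "__main__":
    audio_emb = [0.9, 0.1]        # hypothetical audio-branch embedding
    visual_emb = [0.8, 0.2, 0.0]  # hypothetical visual-branch embedding
    w = [[2.0, 0.5, 0.0], [0.5, 0.1, 0.0]]  # hypothetical fusion weights (one per map cell)
    print(fuse_speaking_score(audio_emb, visual_emb, w, bias=-1.0))
```

Because each cell is a product, it is nonzero only when both its audio unit and its visual unit are active, so a silent modality adds nothing to the weighted sum. In a trained detector, the fusion weights and the two branch networks would be learned jointly, which this sketch omits.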