Guo JinHong

Instance Attack:An Explanation-based Vulnerability Analysis Framework Against DNNs for Malware Detection

Sep 06, 2022

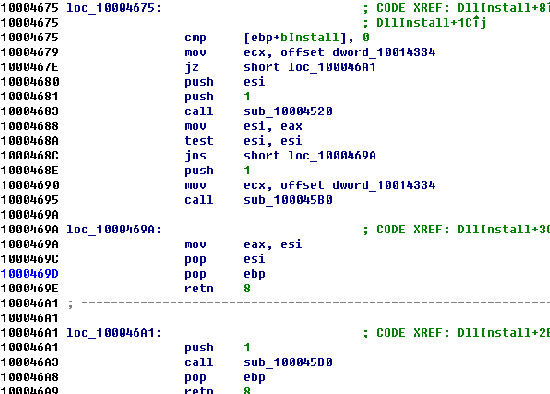

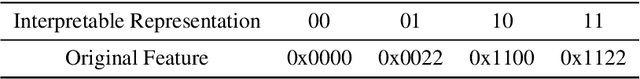

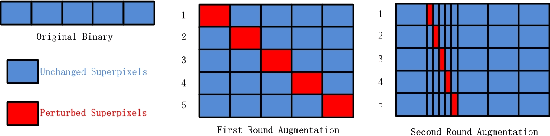

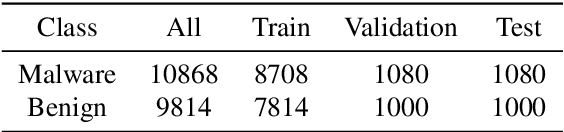

Abstract:Deep neural networks (DNNs) are increasingly being applied in malware detection and their robustness has been widely debated. Traditionally an adversarial example generation scheme relies on either detailed model information (gradient-based methods) or lots of samples to train a surrogate model, neither of which are available in most scenarios. We propose the notion of the instance-based attack. Our scheme is interpretable and can work in a black-box environment. Given a specific binary example and a malware classifier, we use the data augmentation strategies to produce enough data from which we can train a simple interpretable model. We explain the detection model by displaying the weight of different parts of the specific binary. By analyzing the explanations, we found that the data subsections play an important role in Windows PE malware detection. We proposed a new function preserving transformation algorithm that can be applied to data subsections. By employing the binary-diversification techniques that we proposed, we eliminated the influence of the most weighted part to generate adversarial examples. Our algorithm can fool the DNNs in certain cases with a success rate of nearly 100\%. Our method outperforms the state-of-the-art method . The most important aspect is that our method operates in black-box settings and the results can be validated with domain knowledge. Our analysis model can assist people in improving the robustness of malware detectors.

Fun2Vec:a Contrastive Learning Framework of Function-level Representation for Binary

Sep 06, 2022

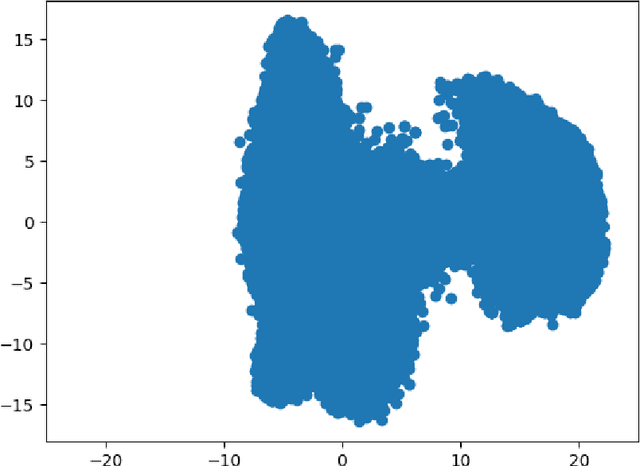

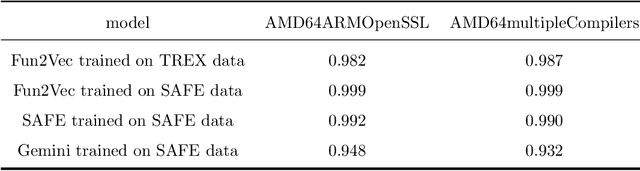

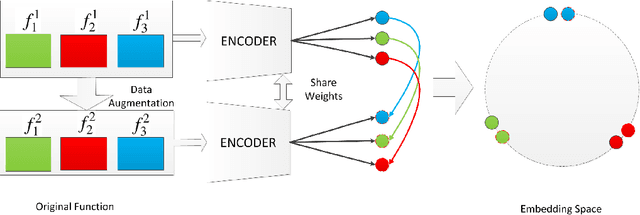

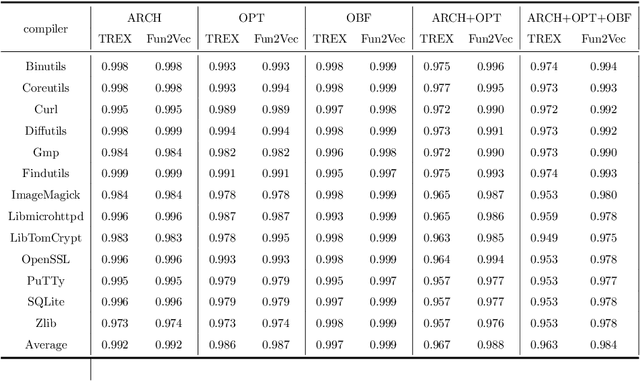

Abstract:Function-level binary code similarity detection is essential in the field of cyberspace security. It helps us find bugs and detect patent infringements in released software and plays a key role in the prevention of supply chain attacks. A practical embedding learning framework relies on the robustness of vector representation system of assembly code and the accuracy of the annotation of function pairs. Supervised learning based methods are traditionally emploied. But annotating different function pairs with accurate labels is very difficult. These supervised learning methods are easily overtrained and suffer from vector robustness issues. To mitigate these problems, we propose Fun2Vec: a contrastive learning framework of function-level representation for binary. We take an unsupervised learning approach and formulate the binary code similarity detection as instance discrimination. Fun2Vec works directly on disassembled binary functions, and could be implemented with any encoder. It does not require manual labeled similar or dissimilar information. We use the compiler optimization options and code obfuscation techniques to generate augmented data. Our experimental results demonstrate that our method surpasses the state-of-the-art in accuracy and have great advantage in few-shot settings.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge