Glen Evenbly

Improved Wavelets for Image Compression from Unitary Circuits

Mar 04, 2022

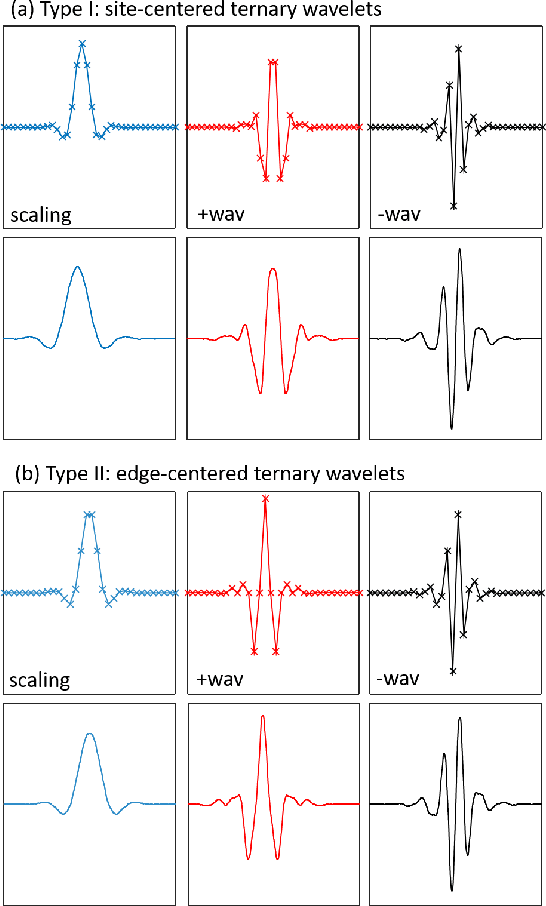

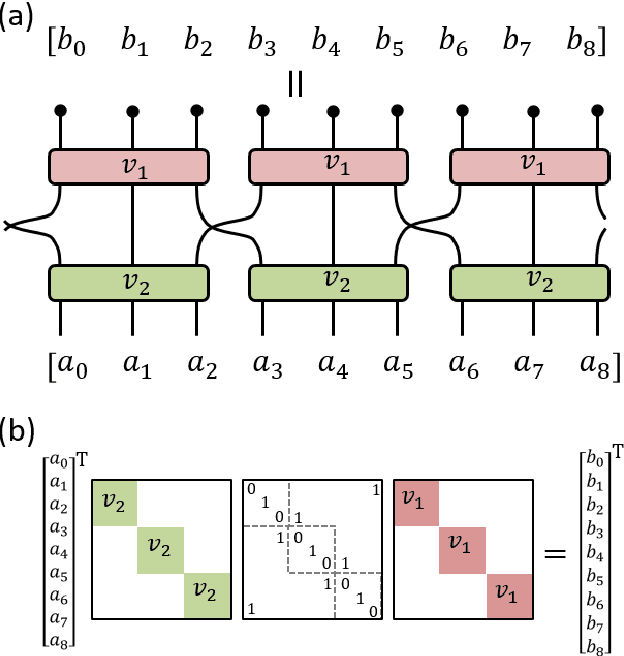

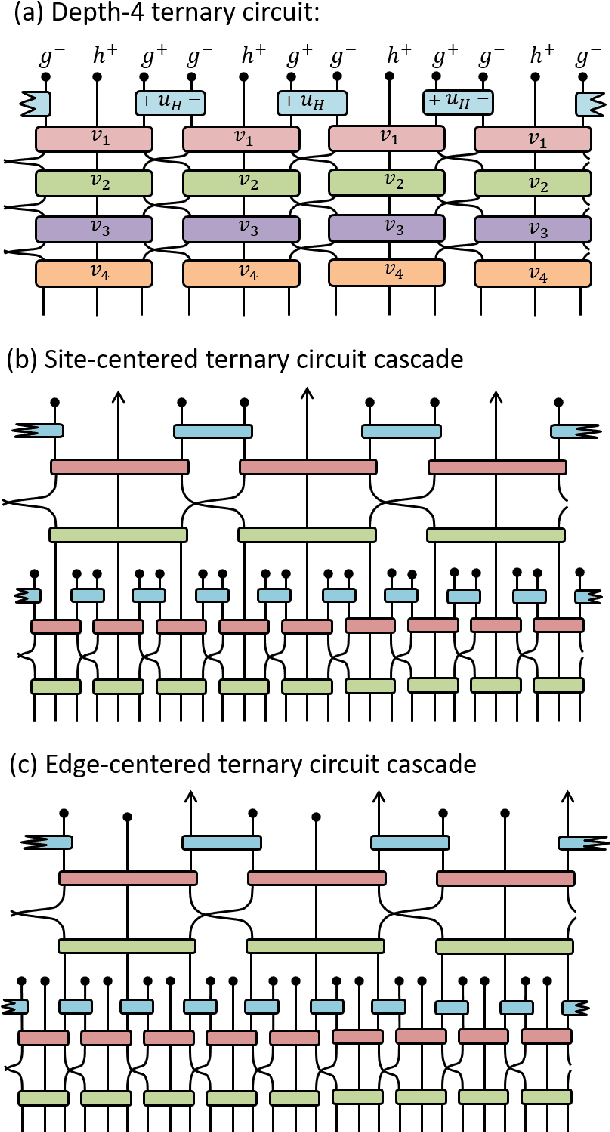

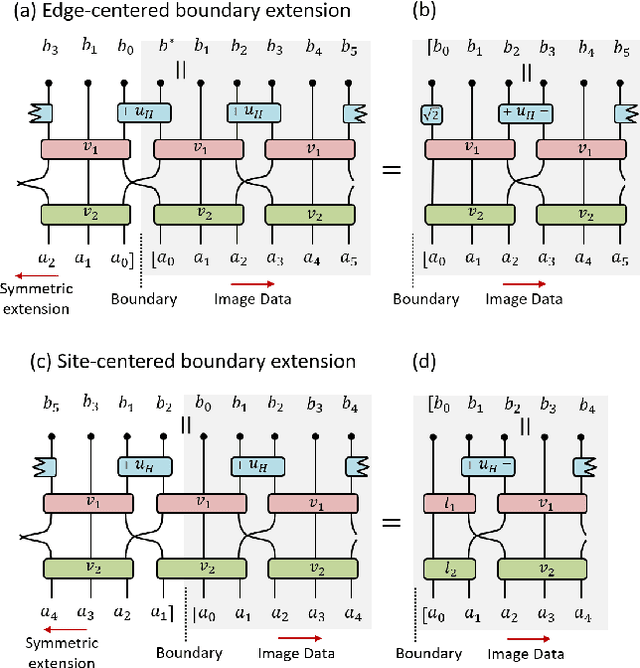

Abstract: We benchmark the efficacy of several novel orthogonal, symmetric, dilation-3 wavelets, derived from a unitary-circuit-based construction, for image compression. The performance of these wavelets is compared across several photo databases against the CDF-9/7 wavelets in terms of the minimum number of non-zero wavelet coefficients needed to obtain a specified image quality, as measured by the multi-scale structural similarity index (MS-SSIM). The new wavelets are found to consistently offer better compression efficiency than the CDF-9/7 wavelets across a broad range of image resolutions and quality requirements, averaging 7-8% improved compression efficiency on high-resolution photo images when high quality (MS-SSIM = 0.99) is required.
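The benchmark described in this abstract (the fewest non-zero wavelet coefficients that still reach a target quality) can be illustrated with a minimal one-dimensional sketch. It rests on assumptions that are not from the paper: an orthonormal Haar wavelet stands in for the dilation-3 and CDF-9/7 wavelets, root-mean-square error stands in for MS-SSIM, and the function names are hypothetical.

```python
import math

SQRT_HALF = 1 / math.sqrt(2)


def haar_forward(signal):
    """Multi-level orthonormal Haar transform of a list whose length is a power of 2.

    Assumption: Haar is only a stand-in for the wavelets named in the abstract.
    """
    coeffs = list(signal)
    n = len(coeffs)
    while n > 1:
        half = n // 2
        avg = [(coeffs[2 * i] + coeffs[2 * i + 1]) * SQRT_HALF for i in range(half)]
        diff = [(coeffs[2 * i] - coeffs[2 * i + 1]) * SQRT_HALF for i in range(half)]
        coeffs[:n] = avg + diff  # coarse part first, detail part after it
        n = half
    return coeffs


def haar_inverse(coeffs):
    """Invert haar_forward by undoing the levels from coarsest to finest."""
    out = list(coeffs)
    n = 1
    while n < len(out):
        merged = []
        for a, d in zip(out[:n], out[n:2 * n]):
            merged.append((a + d) * SQRT_HALF)
            merged.append((a - d) * SQRT_HALF)
        out[:2 * n] = merged
        n *= 2
    return out


def keep_largest(coeffs, k):
    """Zero every coefficient except the k largest in magnitude."""
    order = sorted(range(len(coeffs)), key=lambda i: abs(coeffs[i]), reverse=True)
    kept = set(order[:k])
    return [c if i in kept else 0.0 for i, c in enumerate(coeffs)]


def min_coeffs_for_quality(signal, max_rmse):
    """Smallest number of retained coefficients whose reconstruction meets max_rmse.

    Assumption: RMSE is a simple stand-in for the MS-SSIM target used in the paper.
    """
    coeffs = haar_forward(signal)
    for k in range(len(coeffs) + 1):
        approx = haar_inverse(keep_largest(coeffs, k))
        rmse = math.sqrt(sum((a - b) ** 2 for a, b in zip(signal, approx)) / len(signal))
        if rmse <= max_rmse:
            return k
    return len(coeffs)


if __name__ == "__main__":
    step = [1.0, 1.0, 1.0, 1.0, 5.0, 5.0, 5.0, 5.0]
    print(min_coeffs_for_quality(step, 1e-9))
```

Comparing two wavelets in this spirit would amount to running the same search with each transform on the same inputs and comparing the returned counts; a wavelet that needs fewer coefficients for the same quality target compresses more efficiently by this measure.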

Number-State Preserving Tensor Networks as Classifiers for Supervised Learning

May 15, 2019

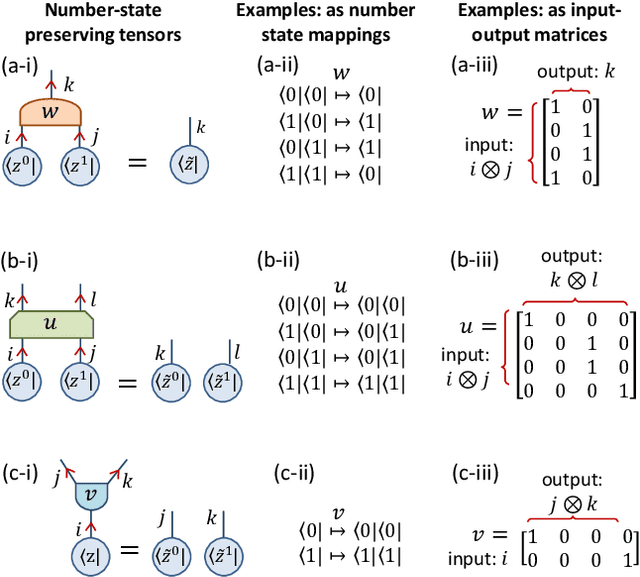

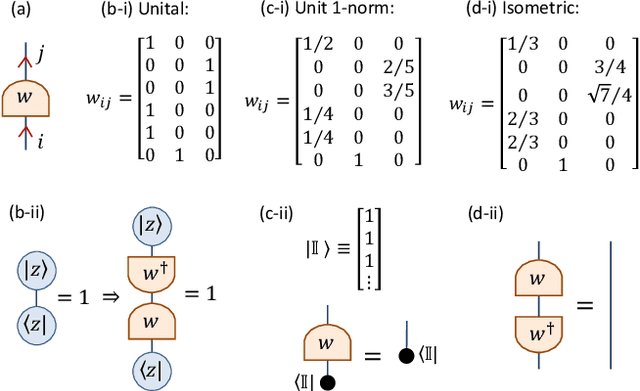

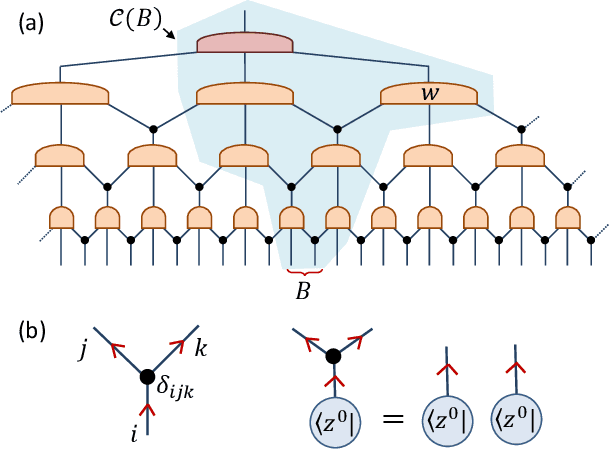

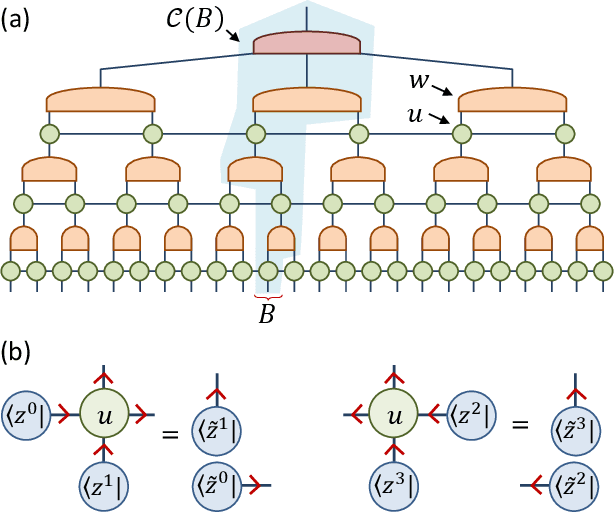

Abstract: We propose a restricted class of tensor network states, built from number-state preserving tensors, for supervised learning tasks. These networks are argued to be a natural choice for classifiers because (i) they map classical data to classical data, and thus preserve the interpretability of data under tensor transformations, (ii) they can be efficiently trained to maximize their scalar product against classical data sets, and (iii) they seem to be as powerful as generic (unrestricted) tensor networks in this task. Our proposal is demonstrated using a variety of benchmark classification problems, where number-state preserving versions of commonly used networks (including MPS, TTN and MERA) are trained as effective classifiers. This work opens the path for powerful tensor network methods such as MERA, which were previously computationally intractable as classifiers, to be employed for difficult tasks such as image recognition.
