Gabriele Gianini

Geometry-Aware Physics-Informed PointNets for Modeling Flows Across Porous Structures

Feb 15, 2026Abstract:Predicting flows that occur both through and around porous bodies is challenging due to coupled physics across fluid and porous regions and the need to generalize across diverse geometries and boundary conditions. We address this problem using two Physics Informed learning approaches: Physics Informed PointNets (PIPN) and Physics Informed Geometry Aware Neural Operator (P-IGANO). We enforce the incompressible Navier Stokes equations in the free-flow region and a Darcy Forchheimer extension in the porous region within a unified loss and condition the networks on geometry and material parameters. Datasets are generated with OpenFOAM on 2D ducts containing porous obstacles and on 3D windbreak scenarios with tree canopies and buildings. We first verify the pipeline via the method of manufactured solutions, then assess generalization to unseen shapes, and for PI-GANO, to variable boundary conditions and parameter settings. The results show consistently low velocity and pressure errors in both seen and unseen cases, with accurate reproduction of the wake structures. Performance degrades primarily near sharp interfaces and in regions with large gradients. Overall, the study provides a first systematic evaluation of PIPN/PI-GANO for simultaneous through-and-around porous flows and shows their potential to accelerate design studies without retraining per geometry.

Analysing Neural Network Topologies: a Game Theoretic Approach

Apr 17, 2019

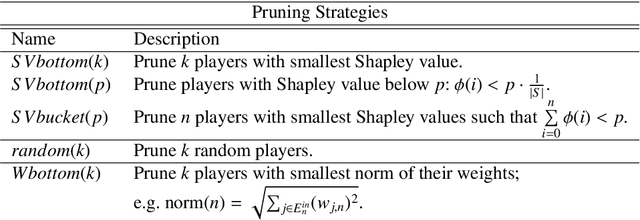

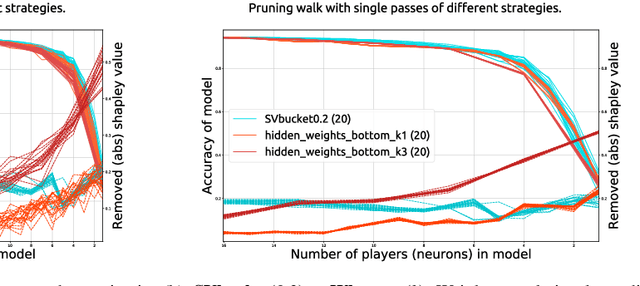

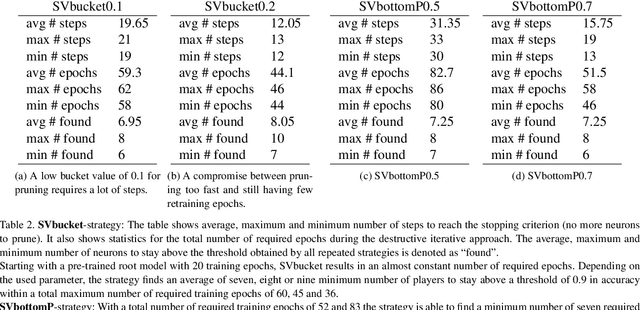

Abstract:Artificial Neural Networks have shown impressive success in very different application cases. Choosing a proper network architecture is a critical decision for a network's success, usually done in a manual manner. As a straightforward strategy, large, mostly fully connected architectures are selected, thereby relying on a good optimization strategy to find proper weights while at the same time avoiding overfitting. However, large parts of the final network are redundant. In the best case, large parts of the network become simply irrelevant for later inferencing. In the worst case, highly parameterized architectures hinder proper optimization and allow the easy creation of adverserial examples fooling the network. A first step in removing irrelevant architectural parts lies in identifying those parts, which requires measuring the contribution of individual components such as neurons. In previous work, heuristics based on using the weight distribution of a neuron as contribution measure have shown some success, but do not provide a proper theoretical understanding. Therefore, in our work we investigate game theoretic measures, namely the Shapley value (SV), in order to separate relevant from irrelevant parts of an artificial neural network. We begin by designing a coalitional game for an artificial neural network, where neurons form coalitions and the average contributions of neurons to coalitions yield to the Shapley value. In order to measure how well the Shapley value measures the contribution of individual neurons, we remove low-contributing neurons and measure its impact on the network performance. In our experiments we show that the Shapley value outperforms other heuristics for measuring the contribution of neurons.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge