G. Iosifidis

Improving IoT Analytics through Selective Edge Execution

Mar 07, 2020

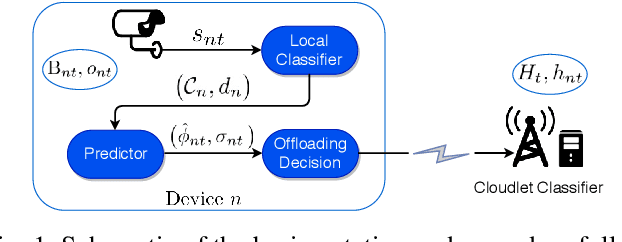

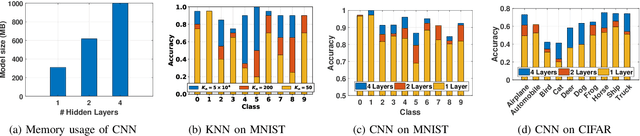

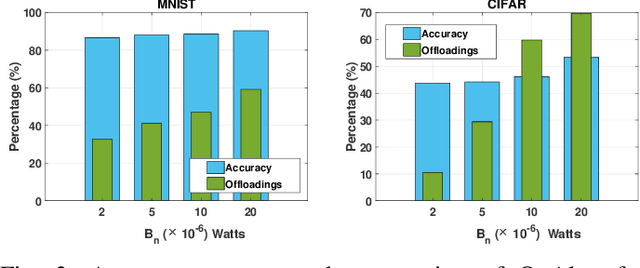

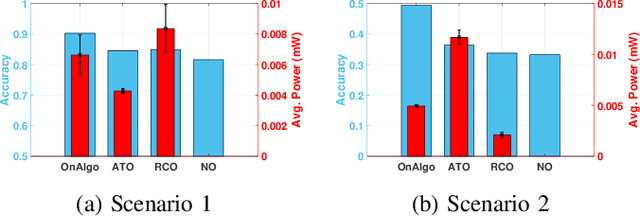

Abstract: A large number of emerging IoT applications rely on machine learning routines for analyzing data. Executing such tasks on the user devices improves response time and economizes network resources. However, due to power and computing limitations, the devices often cannot support such resource-intensive routines and fail to execute the analytics accurately. In this work, we propose to improve the performance of analytics by leveraging edge infrastructure. We devise an algorithm that enables the IoT devices to execute their routines locally and then outsource them to cloudlet servers only if they predict a significant performance improvement. The algorithm uses an approximate dual subgradient method and makes minimal assumptions about the statistical properties of the system's parameters. Our analysis demonstrates that the proposed algorithm can intelligently leverage the cloudlet, adapting to the service requirements.
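The selective-offloading idea in this abstract can be illustrated with a minimal sketch. It is not the paper's algorithm: it assumes a single cloudlet with an average per-slot capacity budget and uses a plain (not approximate) dual subgradient update. The names `run_offloading`, `gains`, `costs`, `capacity`, and `step` are hypothetical and chosen only for illustration.

```python
def run_offloading(gains, costs, capacity, step=0.05):
    """Decide per task whether to outsource to the cloudlet.

    gains[t]  : predicted performance improvement if task t is offloaded (assumed given)
    costs[t]  : cloudlet load consumed by task t if offloaded (assumed given)
    capacity  : average cloudlet load allowed per slot (assumed constraint)
    step      : subgradient step size
    """
    lam = 0.0  # dual variable: the current "price" of cloudlet capacity
    decisions = []
    for gain, cost in zip(gains, costs):
        # Offload only if the predicted gain outweighs the priced cloudlet cost.
        offload = gain - lam * cost > 0
        decisions.append(offload)
        used = cost if offload else 0.0
        # Subgradient step on the capacity constraint, projected onto lam >= 0.
        lam = max(0.0, lam + step * (used - capacity))
    return decisions, lam
```

When demand exceeds the budget, the price `lam` rises until only tasks with a sufficiently large predicted gain are outsourced; when the cloudlet is underused, the price decays toward zero and more tasks are offloaded.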

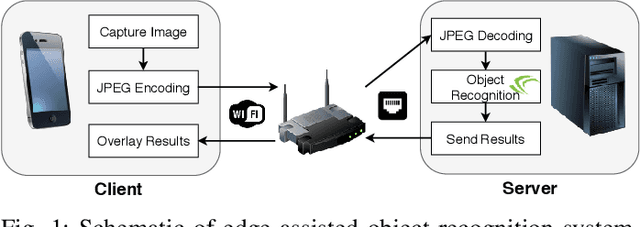

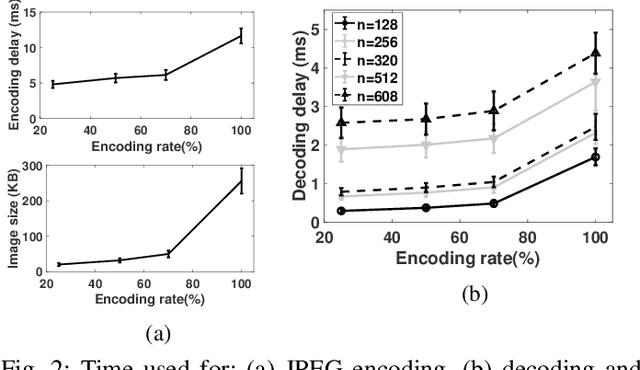

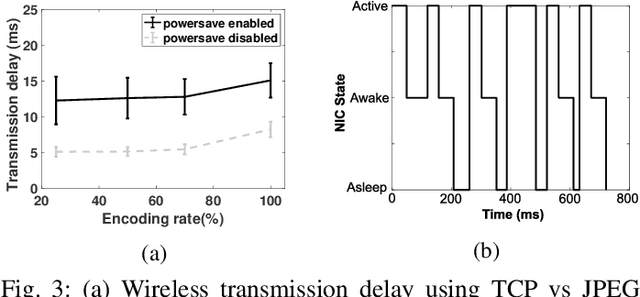

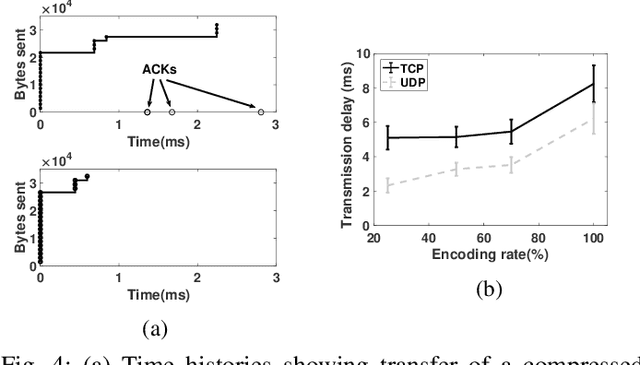

Measurement-driven Analysis of an Edge-Assisted Object Recognition System

Mar 07, 2020

Abstract: We develop an edge-assisted object recognition system with the aim of studying the system-level trade-offs between end-to-end latency and object recognition accuracy. We focus on developing techniques that optimize the transmission delay of the system and demonstrate the effect of image encoding rate and neural network size on these two performance metrics. We explore optimal trade-offs between these metrics by measuring the performance of our real-time object recognition application. Our measurements reveal hitherto unknown parameter effects and sharp trade-offs, hence paving the way for optimizing this key service. Finally, we formulate two optimization problems using our measurement-based models and, following a Pareto analysis, find that careful tuning of the system operation yields at least 33% better performance under real-time conditions than the standard transmission method.
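The Pareto analysis mentioned in this abstract can be sketched as follows. This is a generic illustration of selecting non-dominated operating points, not the paper's formulation. The function name `pareto_front` and the configuration tuples are hypothetical, and the numbers in the usage note are made up for illustration, not measurements from the paper.

```python
def pareto_front(configs):
    """Return the non-dominated configurations.

    configs: list of (name, latency_ms, accuracy) tuples (hypothetical layout).
    Lower latency is better; higher accuracy is better.
    """
    front = []
    for name, lat, acc in configs:
        # A configuration is dominated if another one is at least as good
        # on both metrics and strictly better on at least one.
        dominated = any(
            (l2 <= lat and a2 >= acc) and (l2 < lat or a2 > acc)
            for _, l2, a2 in configs
        )
        if not dominated:
            front.append((name, lat, acc))
    # Sort by latency so the trade-off curve reads left to right.
    return sorted(front, key=lambda c: c[1])
```

For example, with the illustrative points `[("A", 50, 0.60), ("B", 80, 0.75), ("C", 90, 0.70), ("D", 120, 0.80)]`, configuration C is dropped because B is both faster and more accurate, leaving A, B, and D as the candidate operating points.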
