Franz Pernkopf

Graz University of Technology

Complex-valued Convolutional Neural Networks for Enhanced Radar Signal Denoising and Interference Mitigation

Apr 29, 2021

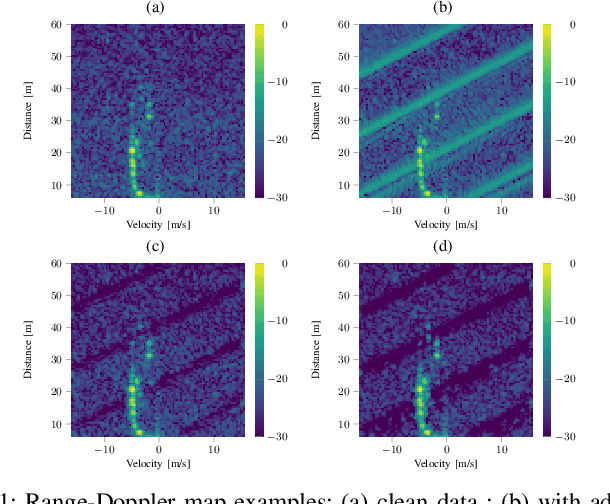

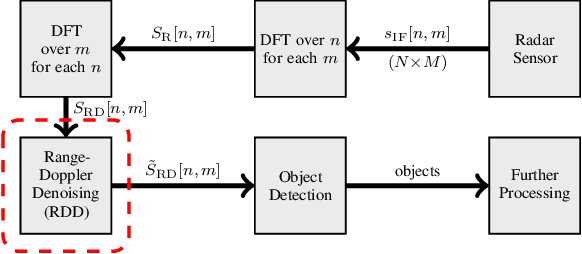

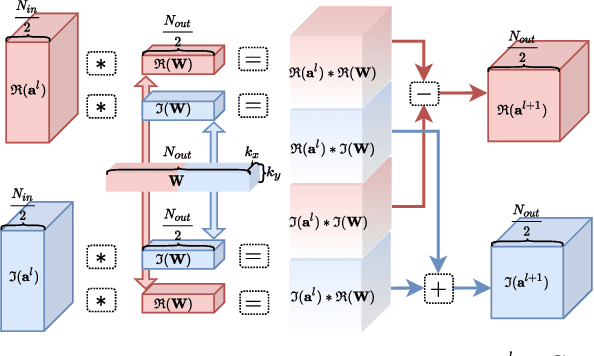

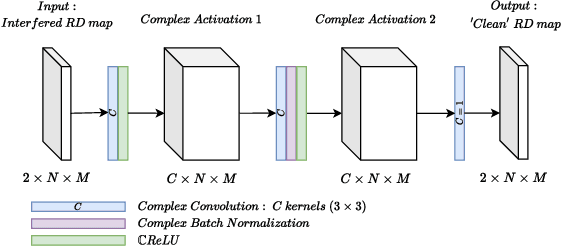

Abstract:Autonomous driving highly depends on capable sensors to perceive the environment and to deliver reliable information to the vehicles' control systems. To increase its robustness, a diversified set of sensors is used, including radar sensors. Radar is a vital contribution of sensory information, providing high resolution range as well as velocity measurements. The increased use of radar sensors in road traffic introduces new challenges. As the so far unregulated frequency band becomes increasingly crowded, radar sensors suffer from mutual interference between multiple radar sensors. This interference must be mitigated in order to ensure a high and consistent detection sensitivity. In this paper, we propose the use of Complex-Valued Convolutional Neural Networks (CVCNNs) to address the issue of mutual interference between radar sensors. We extend previously developed methods to the complex domain in order to process radar data according to its physical characteristics. This not only increases data efficiency, but also improves the conservation of phase information during filtering, which is crucial for further processing, such as angle estimation. Our experiments show, that the use of CVCNNs increases data efficiency, speeds up network training and substantially improves the conservation of phase information during interference removal.

End-to-end Keyword Spotting using Neural Architecture Search and Quantization

Apr 14, 2021

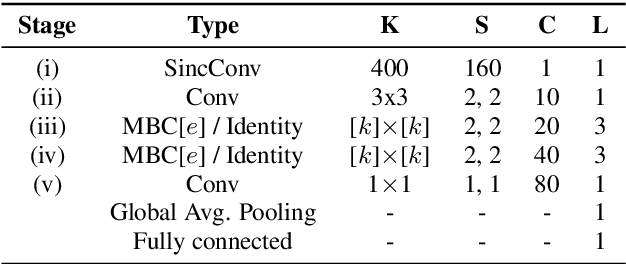

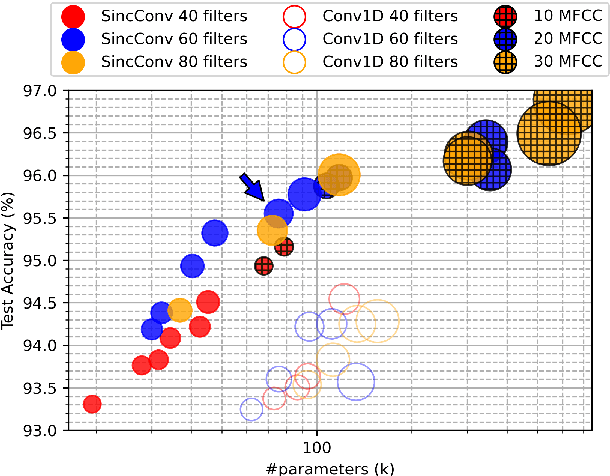

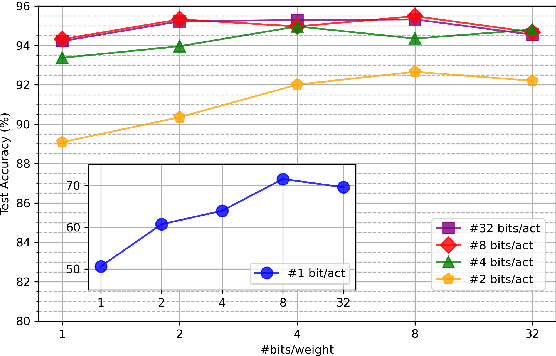

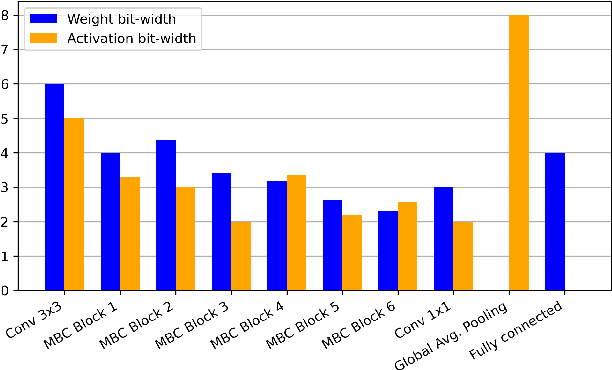

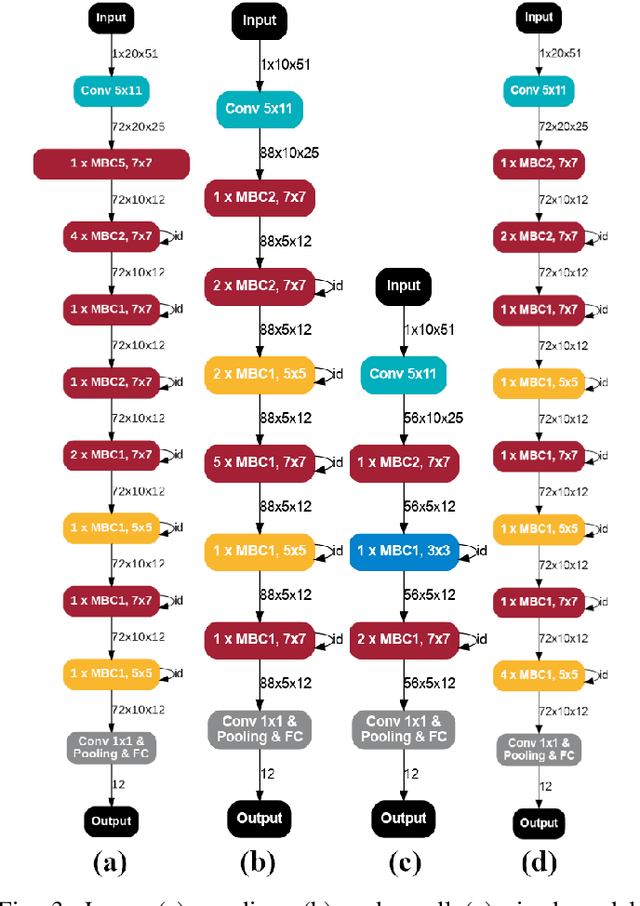

Abstract:This paper introduces neural architecture search (NAS) for the automatic discovery of end-to-end keyword spotting (KWS) models in limited resource environments. We employ a differentiable NAS approach to optimize the structure of convolutional neural networks (CNNs) operating on raw audio waveforms. After a suitable KWS model is found with NAS, we conduct quantization of weights and activations to reduce the memory footprint. We conduct extensive experiments on the Google speech commands dataset. In particular, we compare our end-to-end approach to mel-frequency cepstral coefficient (MFCC) based systems. For quantization, we compare fixed bit-width quantization and trained bit-width quantization. Using NAS only, we were able to obtain a highly efficient model with an accuracy of 95.55% using 75.7k parameters and 13.6M operations. Using trained bit-width quantization, the same model achieves a test accuracy of 93.76% while using on average only 2.91 bits per activation and 2.51 bits per weight.

Blind Speech Separation and Dereverberation using Neural Beamforming

Mar 24, 2021

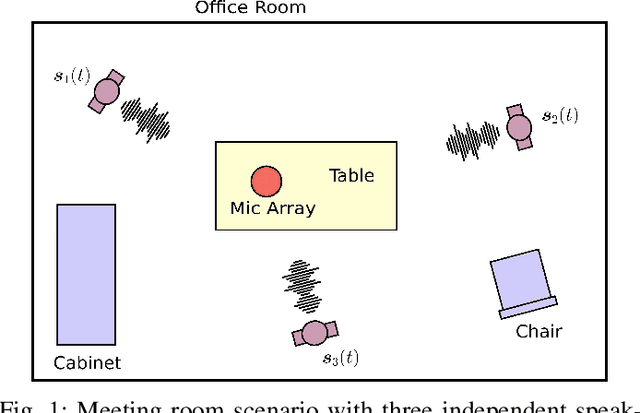

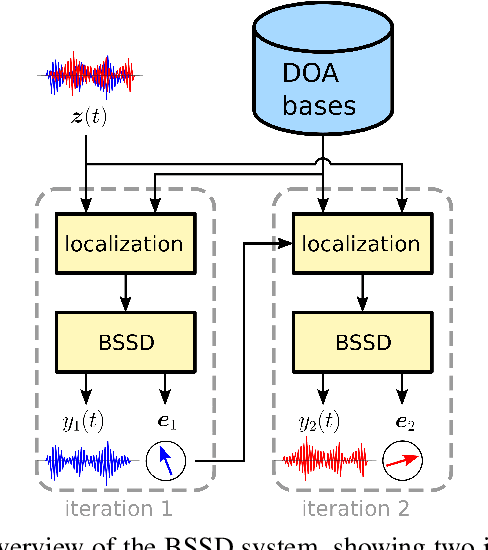

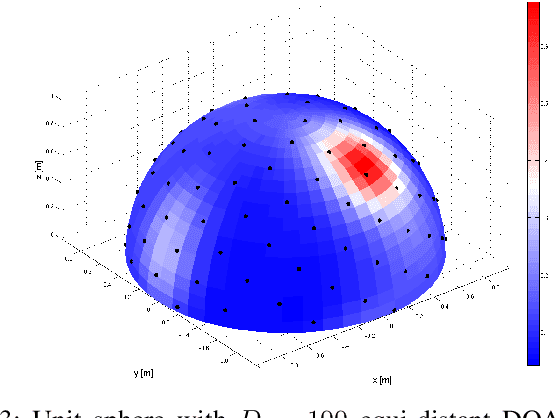

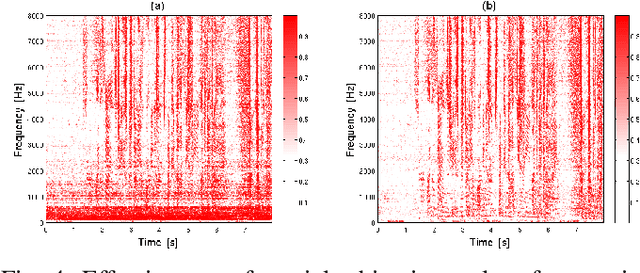

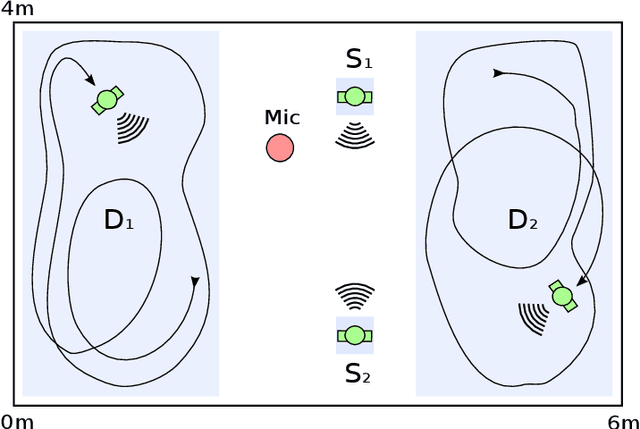

Abstract:In this paper, we present the Blind Speech Separation and Dereverberation (BSSD) network, which performs simultaneous speaker separation, dereverberation and speaker identification in a single neural network. Speaker separation is guided by a set of predefined spatial cues. Dereverberation is performed by using neural beamforming, and speaker identification is aided by embedding vectors and triplet mining. We introduce a frequency-domain model which uses complex-valued neural networks, and a time-domain variant which performs beamforming in latent space. Further, we propose a block-online mode to process longer audio recordings, as they occur in meeting scenarios. We evaluate our system in terms of Scale Independent Signal to Distortion Ratio (SI-SDR), Word Error Rate (WER) and Equal Error Rate (EER).

Resource-efficient DNNs for Keyword Spotting using Neural Architecture Search and Quantization

Dec 18, 2020

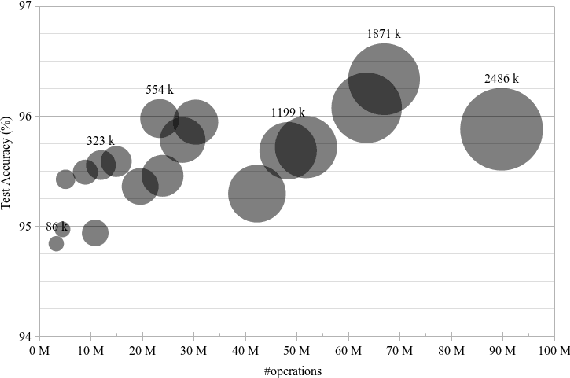

Abstract:This paper introduces neural architecture search (NAS) for the automatic discovery of small models for keyword spotting (KWS) in limited resource environments. We employ a differentiable NAS approach to optimize the structure of convolutional neural networks (CNNs) to maximize the classification accuracy while minimizing the number of operations per inference. Using NAS only, we were able to obtain a highly efficient model with 95.4% accuracy on the Google speech commands dataset with 494.8 kB of memory usage and 19.6 million operations. Additionally, weight quantization is used to reduce the memory consumption even further. We show that weight quantization to low bit-widths (e.g. 1 bit) can be used without substantial loss in accuracy. By increasing the number of input features from 10 MFCC to 20 MFCC we were able to increase the accuracy to 96.3% at 340.1 kB of memory usage and 27.1 million operations.

Deep Interference Mitigation and Denoising of Real-World FMCW Radar Signals

Dec 04, 2020

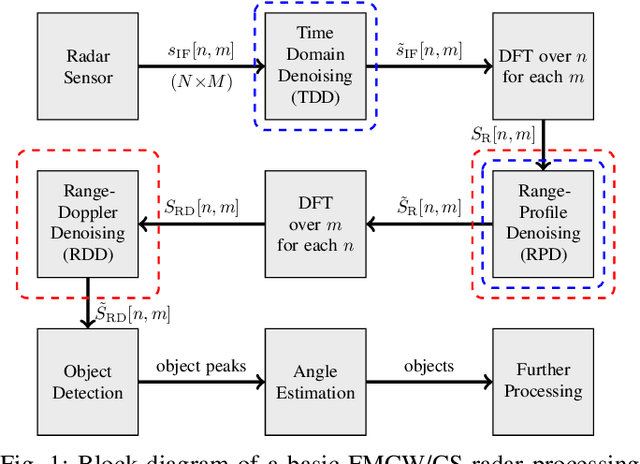

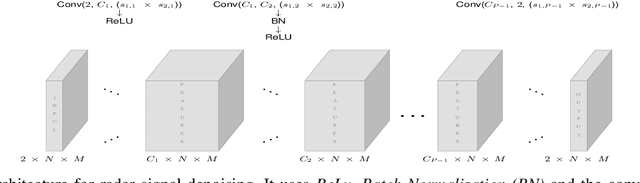

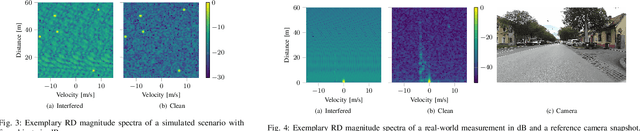

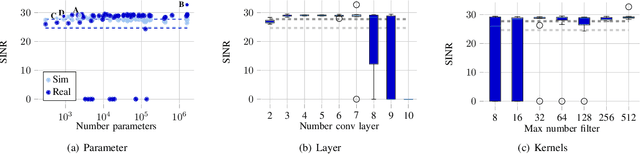

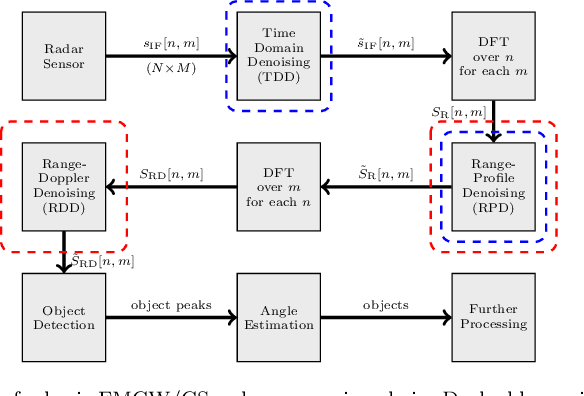

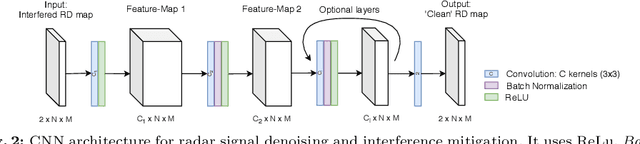

Abstract:Radar sensors are crucial for environment perception of driver assistance systems as well as autonomous cars. Key performance factors are a fine range resolution and the possibility to directly measure velocity. With a rising number of radar sensors and the so far unregulated automotive radar frequency band, mutual interference is inevitable and must be dealt with. Sensors must be capable of detecting, or even mitigating the harmful effects of interference, which include a decreased detection sensitivity. In this paper, we evaluate a Convolutional Neural Network (CNN)-based approach for interference mitigation on real-world radar measurements. We combine real measurements with simulated interference in order to create input-output data suitable for training the model. We analyze the performance to model complexity relation on simulated and measurement data, based on an extensive parameter search. Further, a finite sample size performance comparison shows the effectiveness of the model trained on either simulated or real data as well as for transfer learning. A comparative performance analysis with the state of the art emphasizes the potential of CNN-based models for interference mitigation and denoising of real-world measurements, also considering resource constraints of the hardware.

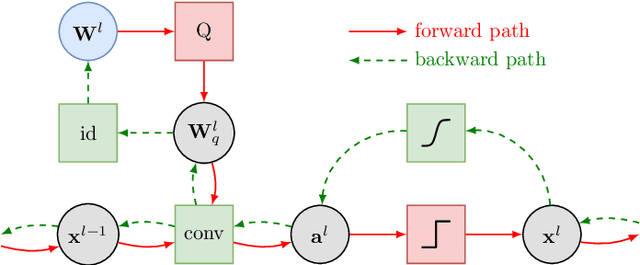

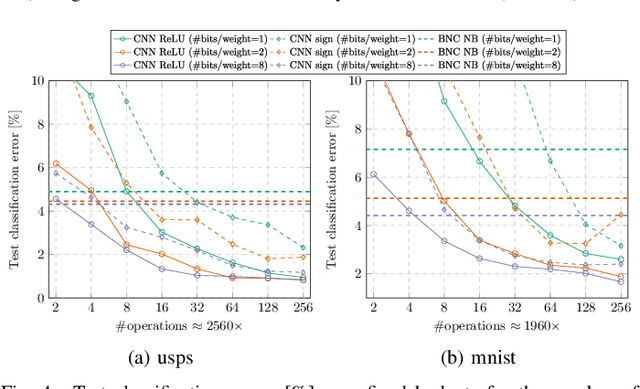

Quantized Neural Networks for Radar Interference Mitigation

Dec 01, 2020

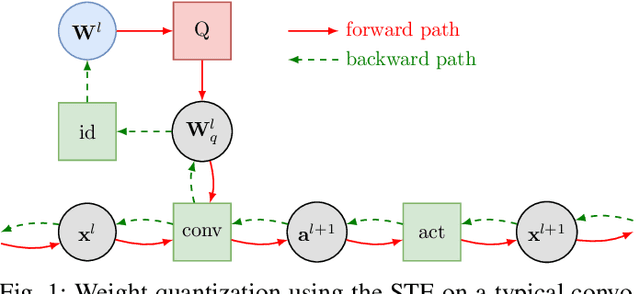

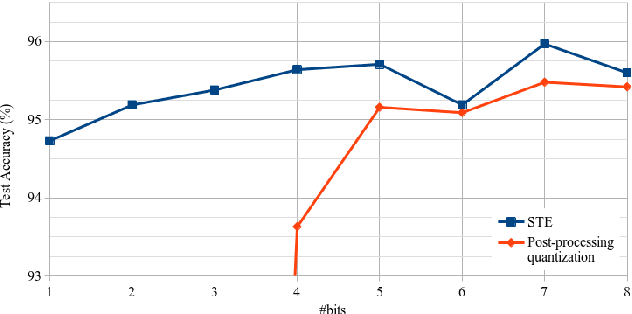

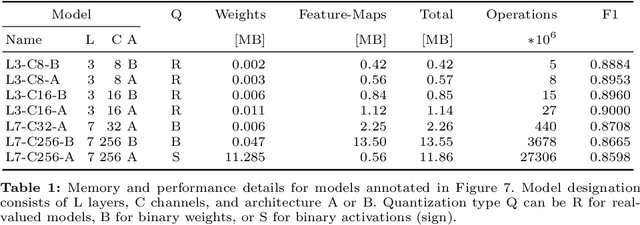

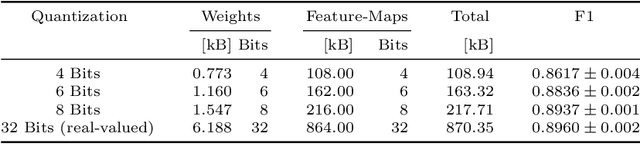

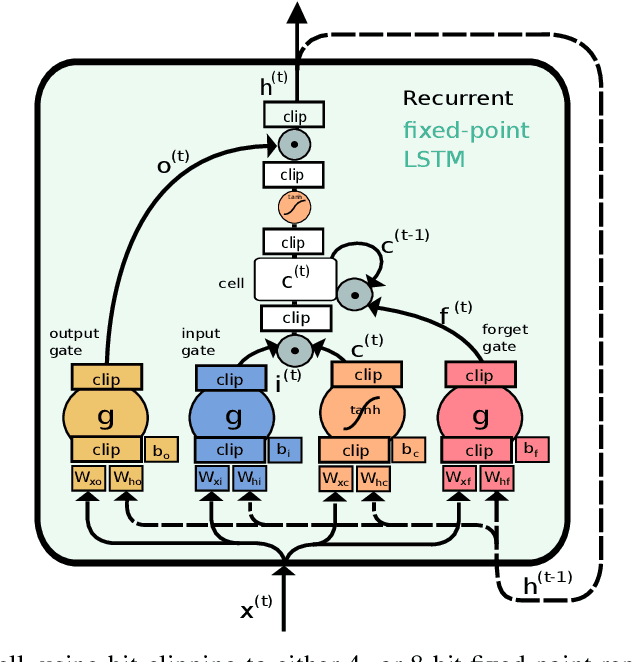

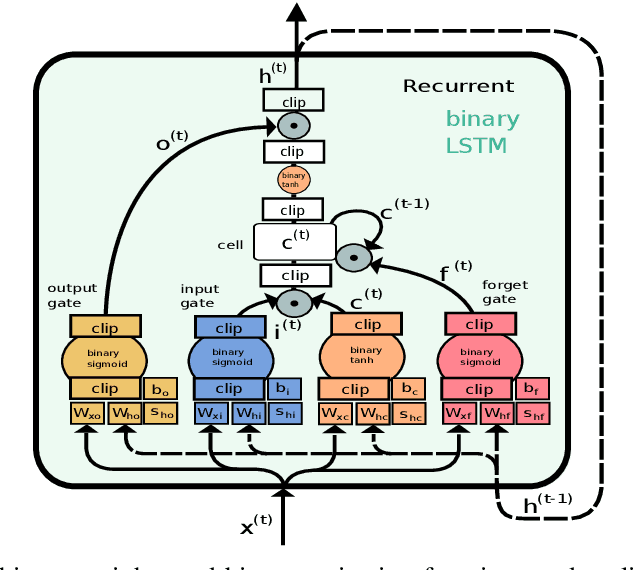

Abstract:Radar sensors are crucial for environment perception of driver assistance systems as well as autonomous vehicles. Key performance factors are weather resistance and the possibility to directly measure velocity. With a rising number of radar sensors and the so far unregulated automotive radar frequency band, mutual interference is inevitable and must be dealt with. Algorithms and models operating on radar data in early processing stages are required to run directly on specialized hardware, i.e. the radar sensor. This specialized hardware typically has strict resource-constraints, i.e. a low memory capacity and low computational power. Convolutional Neural Network (CNN)-based approaches for denoising and interference mitigation yield promising results for radar processing in terms of performance. However, these models typically contain millions of parameters, stored in hundreds of megabytes of memory, and require additional memory during execution. In this paper we investigate quantization techniques for CNN-based denoising and interference mitigation of radar signals. We analyze the quantization potential of different CNN-based model architectures and sizes by considering (i) quantized weights and (ii) piecewise constant activation functions, which results in reduced memory requirements for model storage and during the inference step respectively.

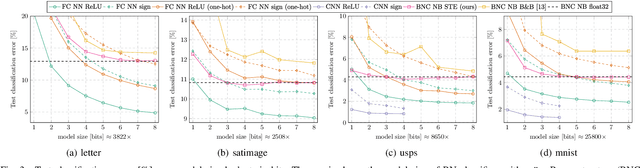

On Resource-Efficient Bayesian Network Classifiers and Deep Neural Networks

Oct 22, 2020

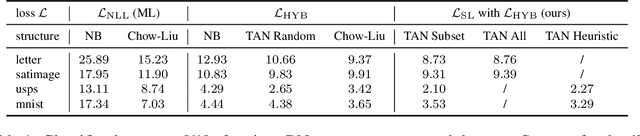

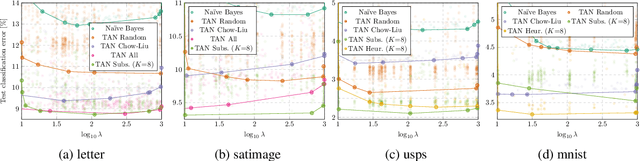

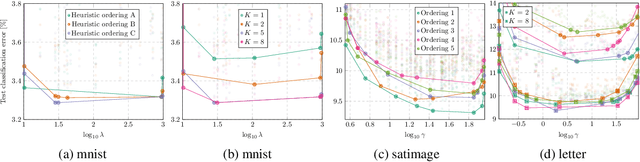

Abstract:We present two methods to reduce the complexity of Bayesian network (BN) classifiers. First, we introduce quantization-aware training using the straight-through gradient estimator to quantize the parameters of BNs to few bits. Second, we extend a recently proposed differentiable tree-augmented naive Bayes (TAN) structure learning approach by also considering the model size. Both methods are motivated by recent developments in the deep learning community, and they provide effective means to trade off between model size and prediction accuracy, which is demonstrated in extensive experiments. Furthermore, we contrast quantized BN classifiers with quantized deep neural networks (DNNs) for small-scale scenarios which have hardly been investigated in the literature. We show Pareto optimal models with respect to model size, number of operations, and test error and find that both model classes are viable options.

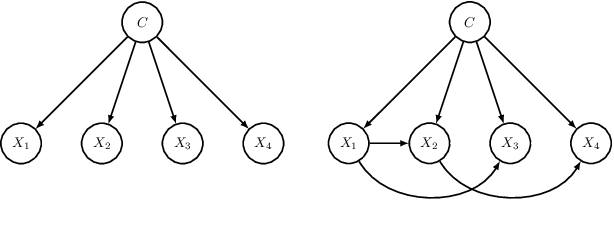

Differentiable TAN Structure Learning for Bayesian Network Classifiers

Aug 21, 2020

Abstract:Learning the structure of Bayesian networks is a difficult combinatorial optimization problem. In this paper, we consider learning of tree-augmented naive Bayes (TAN) structures for Bayesian network classifiers with discrete input features. Instead of performing a combinatorial optimization over the space of possible graph structures, the proposed method learns a distribution over graph structures. After training, we select the most probable structure of this distribution. This allows for a joint training of the Bayesian network parameters along with its TAN structure using gradient-based optimization. The proposed method is agnostic to the specific loss and only requires that it is differentiable. We perform extensive experiments using a hybrid generative-discriminative loss based on the discriminative probabilistic margin. Our method consistently outperforms random TAN structures and Chow-Liu TAN structures.

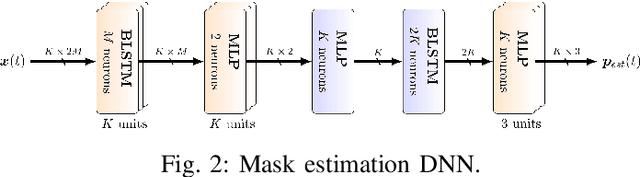

Resource-Efficient Speech Mask Estimation for Multi-Channel Speech Enhancement

Jul 22, 2020

Abstract:While machine learning techniques are traditionally resource intensive, we are currently witnessing an increased interest in hardware and energy efficient approaches. This need for resource-efficient machine learning is primarily driven by the demand for embedded systems and their usage in ubiquitous computing and IoT applications. In this article, we provide a resource-efficient approach for multi-channel speech enhancement based on Deep Neural Networks (DNNs). In particular, we use reduced-precision DNNs for estimating a speech mask from noisy, multi-channel microphone observations. This speech mask is used to obtain either the Minimum Variance Distortionless Response (MVDR) or Generalized Eigenvalue (GEV) beamformer. In the extreme case of binary weights and reduced precision activations, a significant reduction of execution time and memory footprint is possible while still obtaining an audio quality almost on par to single-precision DNNs and a slightly larger Word Error Rate (WER) for single speaker scenarios using the WSJ0 speech corpus.

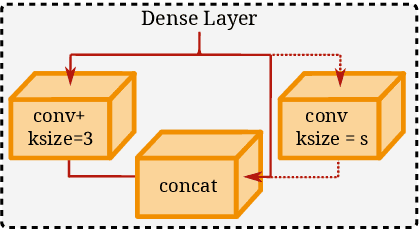

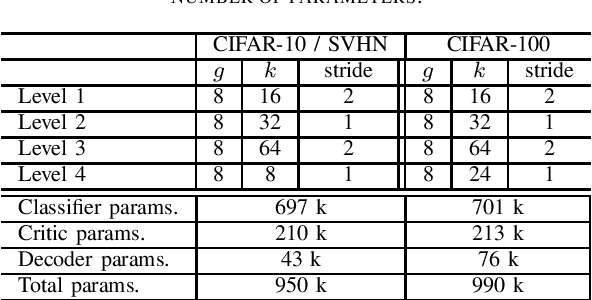

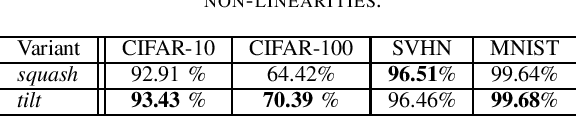

Wasserstein Routed Capsule Networks

Jul 22, 2020

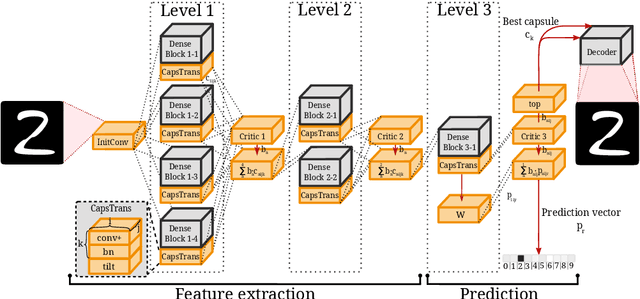

Abstract:Capsule networks offer interesting properties and provide an alternative to today's deep neural network architectures. However, recent approaches have failed to consistently achieve competitive results across different image datasets. We propose a new parameter efficient capsule architecture, that is able to tackle complex tasks by using neural networks trained with an approximate Wasserstein objective to dynamically select capsules throughout the entire architecture. This approach focuses on implementing a robust routing scheme, which can deliver improved results using little overhead. We perform several ablation studies verifying the proposed concepts and show that our network is able to substantially outperform other capsule approaches by over 1.2 % on CIFAR-10, using fewer parameters.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge