Firouz Abdullah Al-Wassai

The Classification Accuracy of Multiple-Metric Learning Algorithm on Multi-Sensor Fusion

Sep 25, 2013

Abstract: This paper addresses two main issues. The first is the impact of similarity search on learning from training samples in metric space and on searching based on supervised learning classification. In particular, four metric-space classifiers based on spatial information are introduced: Chebyshev Distance (CD), Bray Curtis Distance (BCD), Manhattan Distance (MD) and Euclidean Distance (ED). The second issue is the effect of combining multi-sensor images on supervised classification accuracy. QuickBird multispectral (MS) and panchromatic (PAN) data were used in this study to demonstrate the enhancement and to assess the accuracy of the fused image relative to the original images. Supervised classification of the fused image gave better results than classification of the original QuickBird MS image, and the ED classifier gave the best results among the four classifiers.

* This paper has been withdrawn by the author due to a crucial sign error in the title of the paper
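The four distances named in the abstract have standard textbook definitions, sketched below for two pixel vectors (one value per spectral band). The `classify` helper is a hypothetical minimum-distance-to-class-mean rule added for illustration only; the abstract does not state how the paper's classifiers assign labels, and the class names and values in the usage note are made up.

```python
import math

def chebyshev(a, b):
    # Largest absolute difference over all bands.
    return max(abs(x - y) for x, y in zip(a, b))

def bray_curtis(a, b):
    # Sum of absolute differences divided by sum of absolute sums.
    num = sum(abs(x - y) for x, y in zip(a, b))
    den = sum(abs(x + y) for x, y in zip(a, b))
    return num / den if den else 0.0

def manhattan(a, b):
    # Sum of absolute differences over all bands.
    return sum(abs(x - y) for x, y in zip(a, b))

def euclidean(a, b):
    # Square root of the sum of squared differences.
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))

def classify(pixel, class_means, metric):
    # Hypothetical rule: pick the class whose mean vector is closest
    # to the pixel under the chosen metric.
    return min(class_means, key=lambda label: metric(pixel, class_means[label]))
```

For example, `classify([12, 25, 28], {"water": [10, 20, 30], "vegetation": [40, 80, 30]}, euclidean)` selects `"water"`, because that class mean is closer to the pixel under the Euclidean metric.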

Image Fusion Technologies In Commercial Remote Sensing Packages

Jul 09, 2013

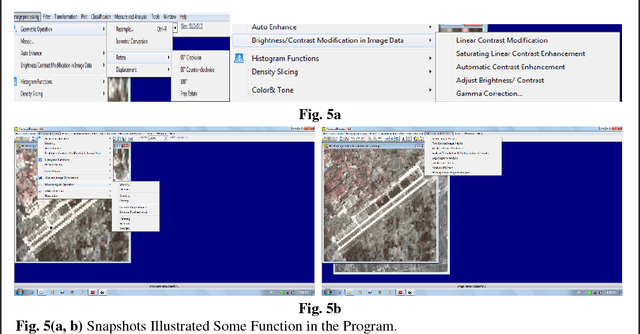

Abstract: Several remote sensing software packages are used for the explicit purpose of analyzing and visualizing remotely sensed data, following the development of remote sensing sensor technologies over the last ten years. According to the literature, remote sensing still lacks software tools for effective information extraction from remote sensing data. This paper therefore reviews the state of the art of multi-sensor image fusion technologies, as well as the quality evaluation of single or fused images, in commercial remote sensing packages. It also introduces a program (ALwassaiProcess) developed for image fusion and classification.

* Keywords: Commercial Processing Systems, Image Fusion, quality evaluation

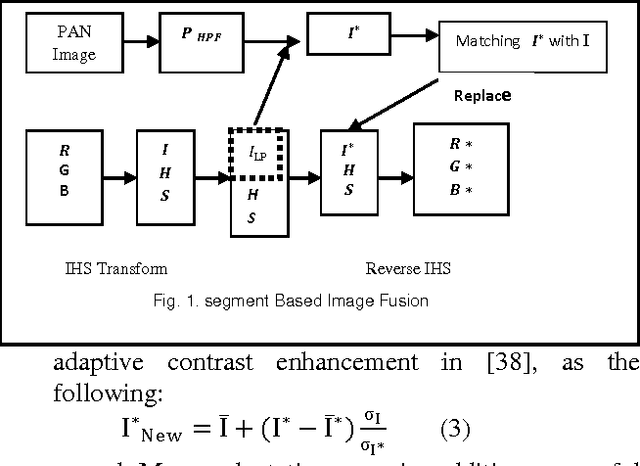

The Segmentation Fusion Method On 10 Multi-Sensors

Aug 23, 2012

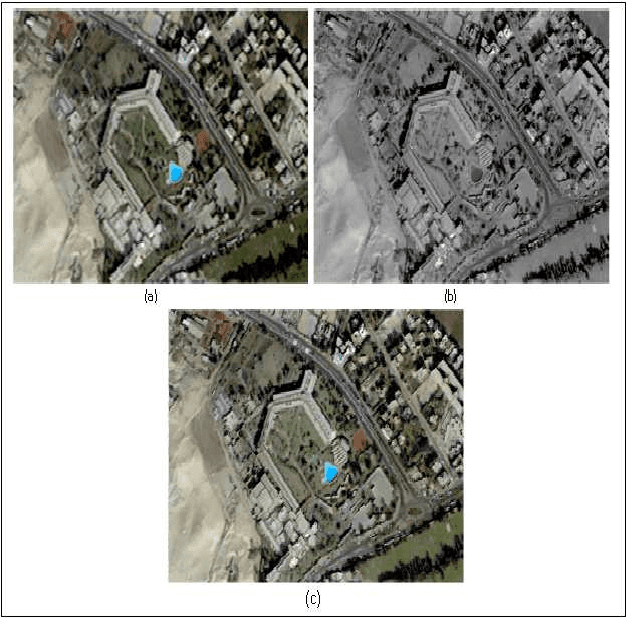

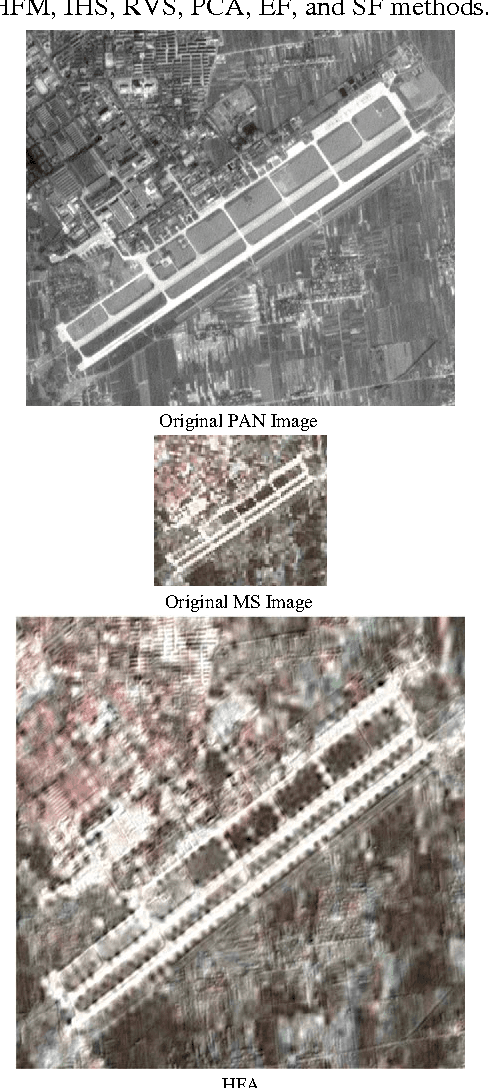

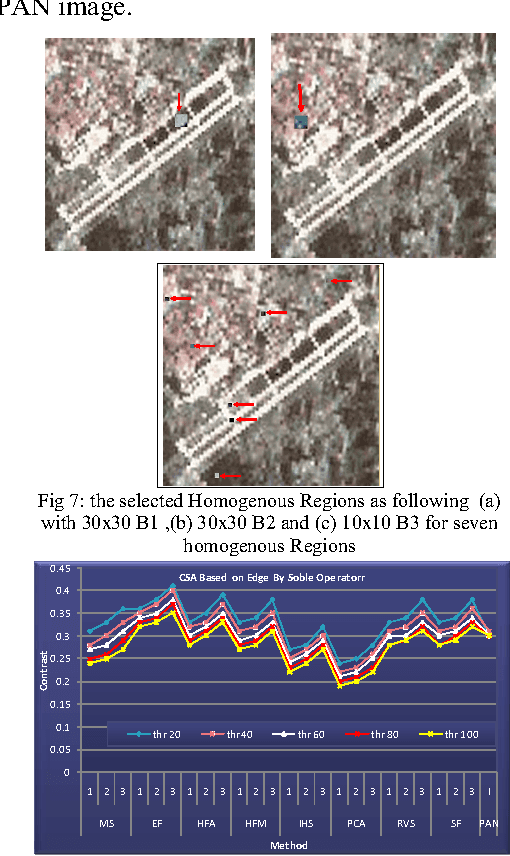

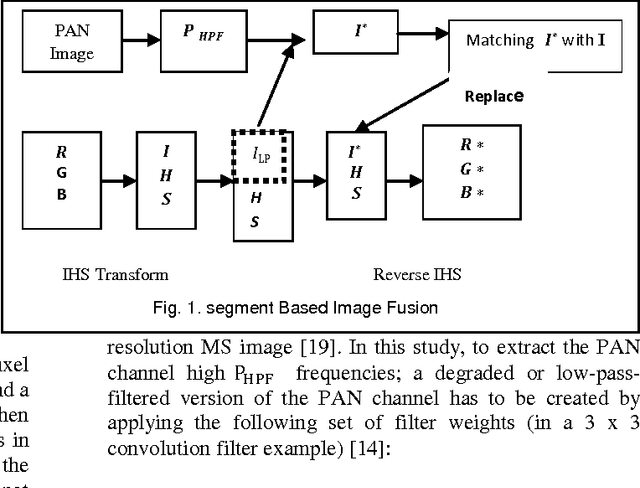

Abstract: The most significant problem may be the undesirable effects on the spectral signatures of fused images; moreover, the benefits of fused images have mostly been assessed against source images acquired at the same time by one sensor. Methods evaluated in this way may or may not be suitable for the fusion of other images. It is therefore increasingly important to investigate techniques that allow multi-sensor, multi-date image fusion, so that final conclusions can be drawn on the most suitable fusion method. In this study we present a new Segmentation Fusion (SF) method for remotely sensed images that considers the physical characteristics of the sensors and uses a feature-level processing paradigm. In particular, the study tests the proposed method on 10 multi-sensor images and compares it with different fusion techniques, estimating the quality and degree of information improvement quantitatively using various spatial and spectral metrics.

* http://www.ijltemas.in/new-icae-2012-?df=1&t=1345561079771

A Novel Metric Approach Evaluation For The Spatial Enhancement Of Pan-Sharpened Images

Jul 20, 2012

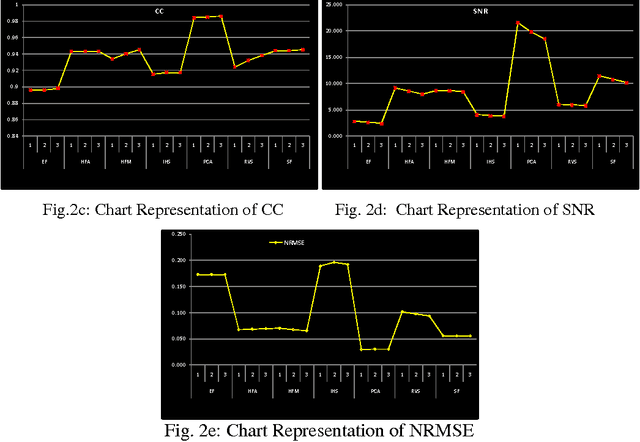

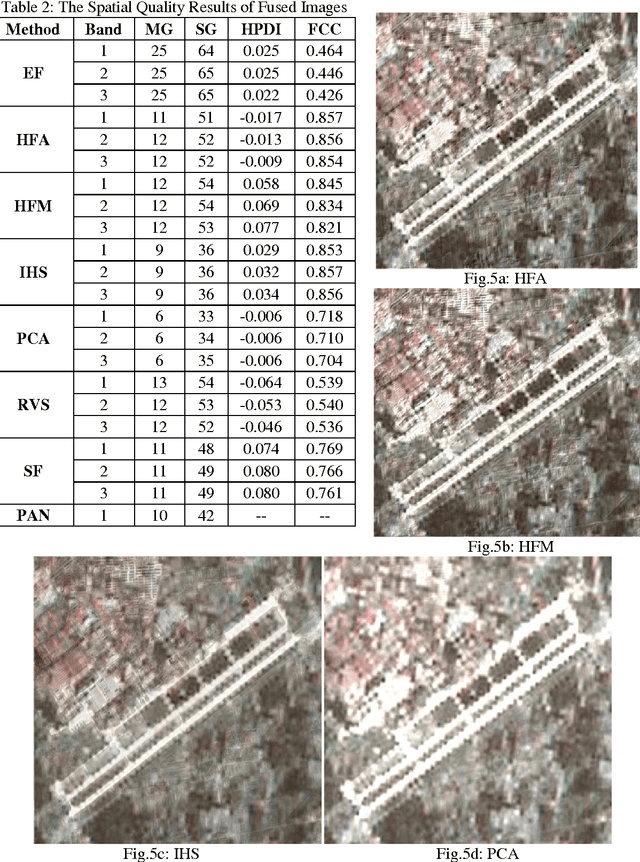

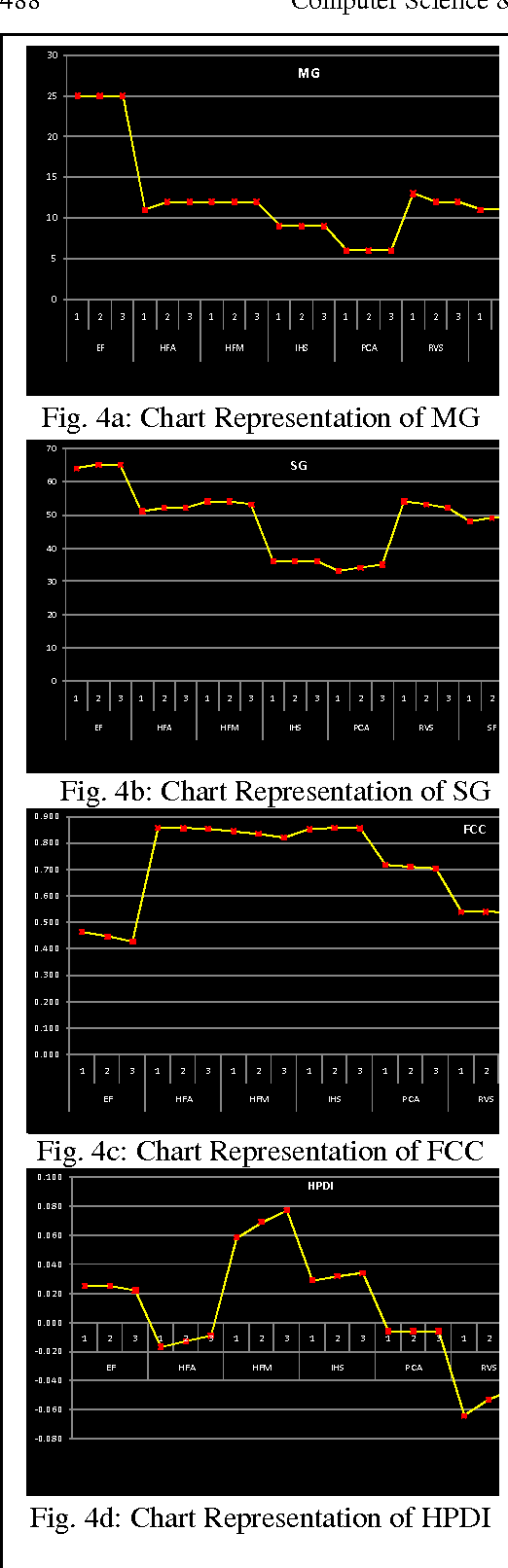

Abstract: Various methods can be used to produce high-resolution multispectral images from a high-resolution panchromatic (PAN) image and low-resolution multispectral (MS) images, mostly at the pixel level. The quality of image fusion is an essential determinant of the value of fused images for many applications. Spatial and spectral quality are the two important indexes used to evaluate any fused image. However, the jury is still out on the benefits of a fused image compared with its original images. In addition, there is a lack of measures for objectively assessing the spatial resolution achieved by fusion methods, so an objective assessment of the spatial resolution of fused images is required. This paper therefore describes a new approach for estimating the spatial resolution improvement, the High Past Division Index (HPDI), which is based on calculating the spatial frequency of the edge regions of the image. It also compares various analytical techniques for evaluating spatial quality and for estimating the colour distortion added by image fusion, including MG, SG, FCC, SD, En, SNR, CC and NRMSE. In addition, the paper compares various image fusion techniques based on pixel-level and feature-level fusion.

* arXiv admin note: substantial text overlap with arXiv:1110.4970

Spatial And Spectral Quality Evaluation Based On Edges Regions Of Satellite Image Fusion

Jul 08, 2012

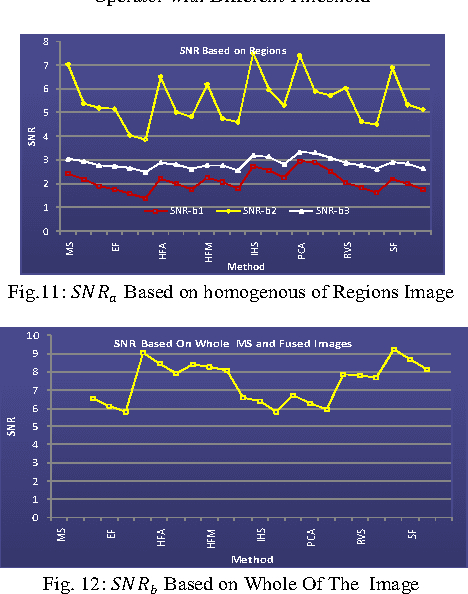

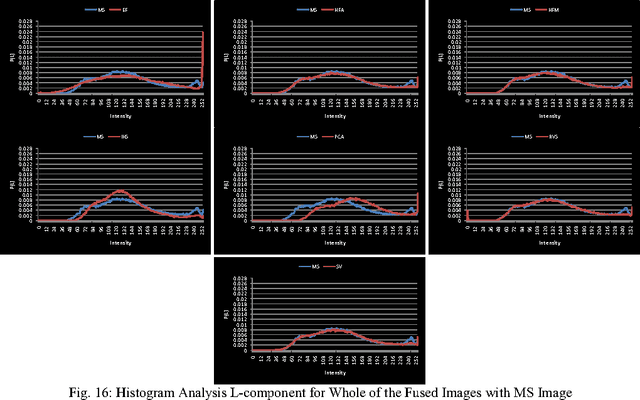

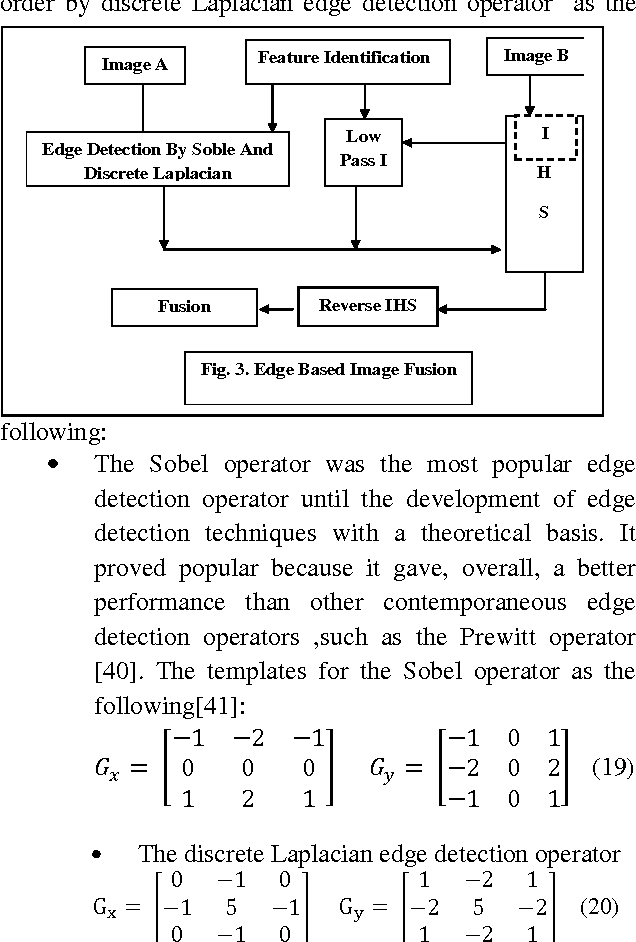

Abstract: The quality of image fusion is an essential determinant of the value of fused images for many applications. Spatial and spectral quality are the two important indexes used to evaluate any fused image. However, the jury is still out on the benefits of a fused image compared with its original images. In addition, there is a lack of measures for objectively assessing the spatial resolution achieved by fusion methods, so an objective assessment of the spatial resolution of fused images is required. The most important details of an image lie in its edge regions, but most image quality measures do not identify the edges in the image and measure them; instead, they rely on a general estimate or on estimates over uniform regions. This study therefore proposes a new method for estimating spatial resolution, Contrast Statistical Analysis (CSA), which is based on calculating the contrast of the edge regions, the contrast of the non-edge regions, and the rate of edge regions. The edges in the image are identified using the Sobel operator with different threshold values. In addition, the colour distortion added by image fusion is estimated using Histogram Analysis of the edge brightness values of all RGB colour bands and the L-component.

* 2nd International Conference on Advanced Computing & Communication Technologies (ACCT12), 7-8 January 2012

Studying Satellite Image Quality Based on the Fusion Techniques

Oct 22, 2011

Abstract: Various methods can be used to produce high-resolution multispectral images from a high-resolution panchromatic (PAN) image and low-resolution multispectral (MS) images, mostly at the pixel level. However, the jury is still out on the benefits of a fused image compared with its original images. There is also a lack of measures for objectively assessing the spatial resolution achieved by fusion methods, so an objective assessment of the spatial resolution of fused images is required. This study therefore attempts to develop a new qualitative assessment that evaluates the spatial quality of pan-sharpened images using many spatial quality metrics. The paper also compares various image fusion techniques based on pixel-level and feature-level fusion.

Multisensor Images Fusion Based on Feature-Level

Aug 20, 2011

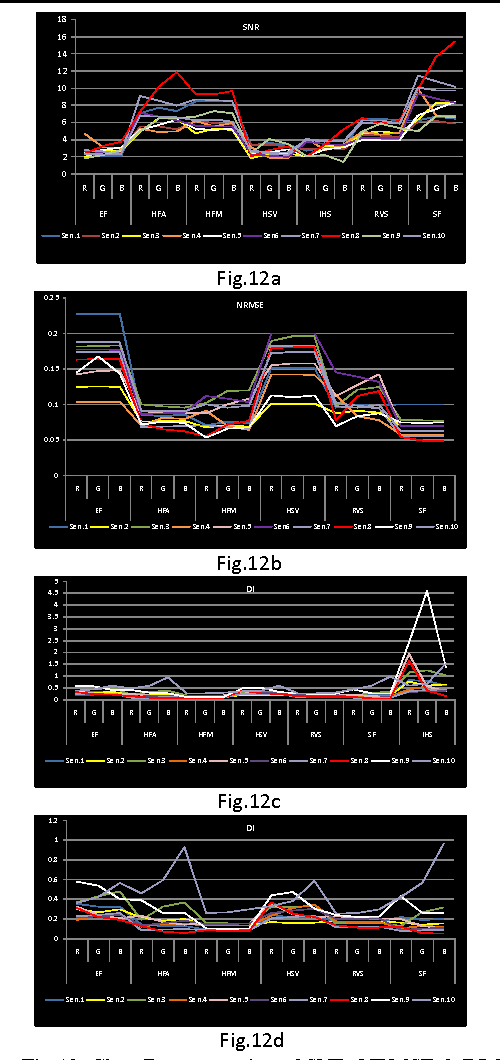

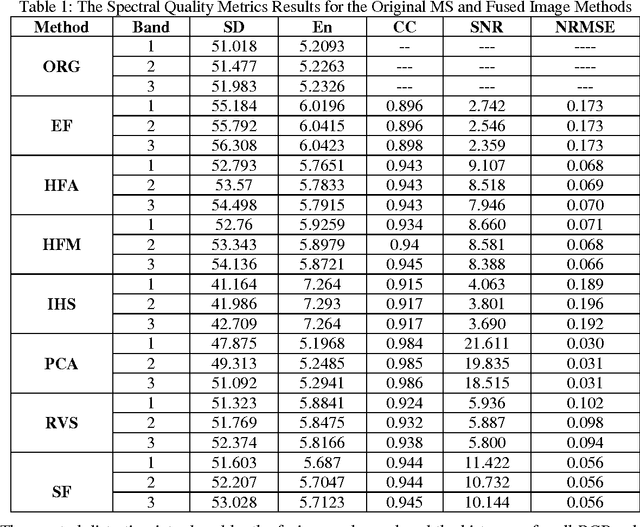

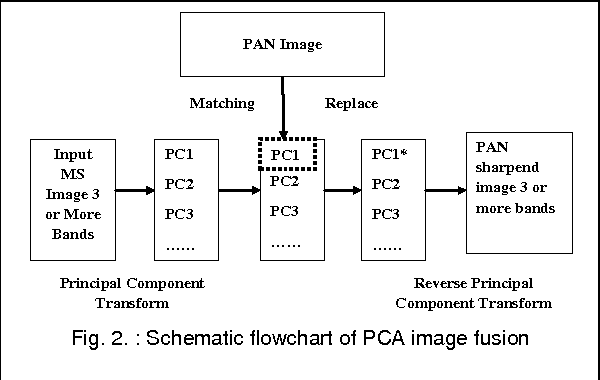

Abstract: Until now, pixel-level image fusion techniques have been of highest relevance for remote sensing data processing and analysis. This paper therefore undertakes a study of feature-level image fusion. For this purpose, feature-based fusion techniques, which are usually based on empirical or heuristic rules, are employed. The paper considers feature extraction (FE) for fusion, which aims at finding a transformation of the original space that produces new features that preserve or improve as much of the information as possible. The study introduces three types of image fusion techniques: Principal Component Analysis based Feature Fusion (PCA), Segment Fusion (SF) and Edge Fusion (EF). The paper also covers analytical techniques for evaluating the quality of the fused image (F), using (SD), (En), (CC), (SNR), (NRMSE) and (DI) to estimate the quality and degree of information improvement of a fused image quantitatively.

* Keywords: Image fusion, Feature, Edge Fusion, Segment Fusion, IHS, PCA

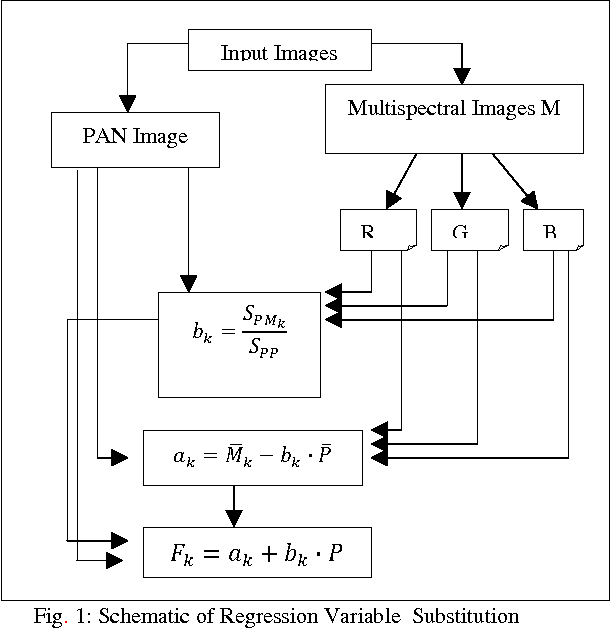

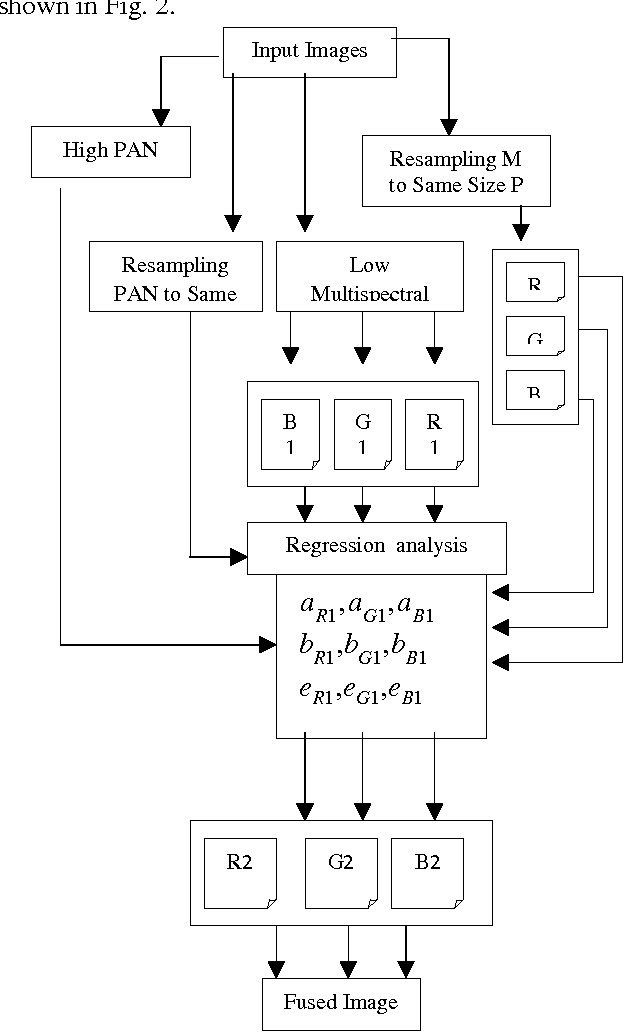

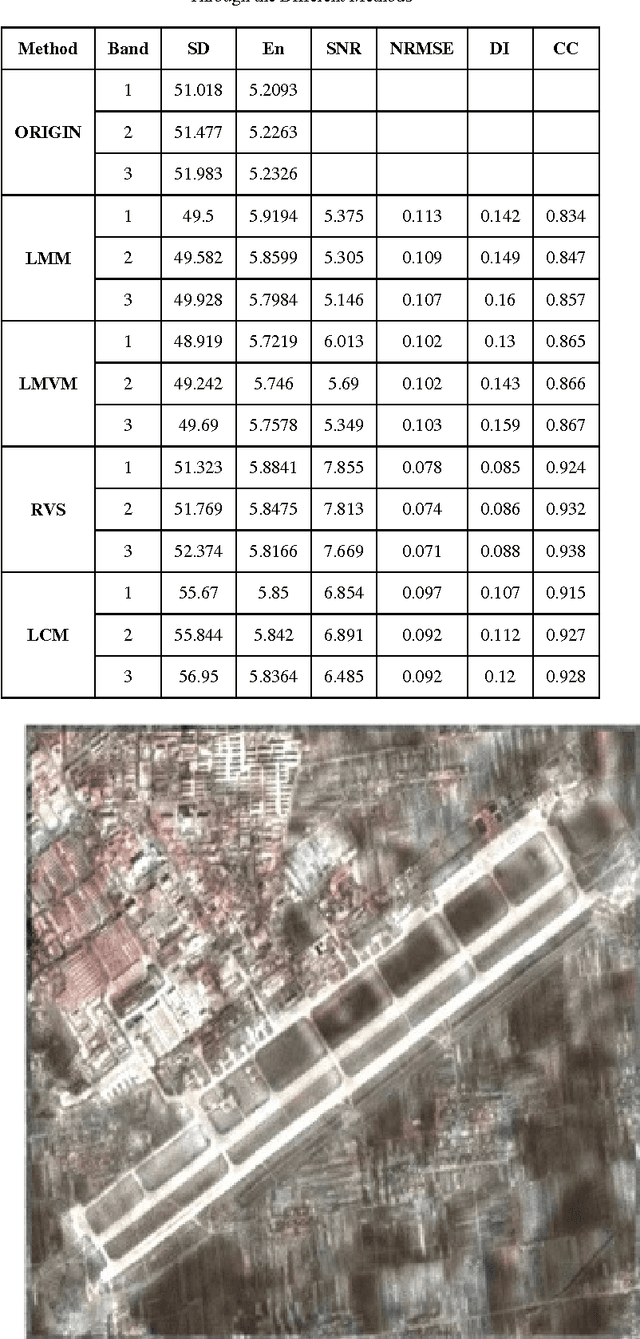

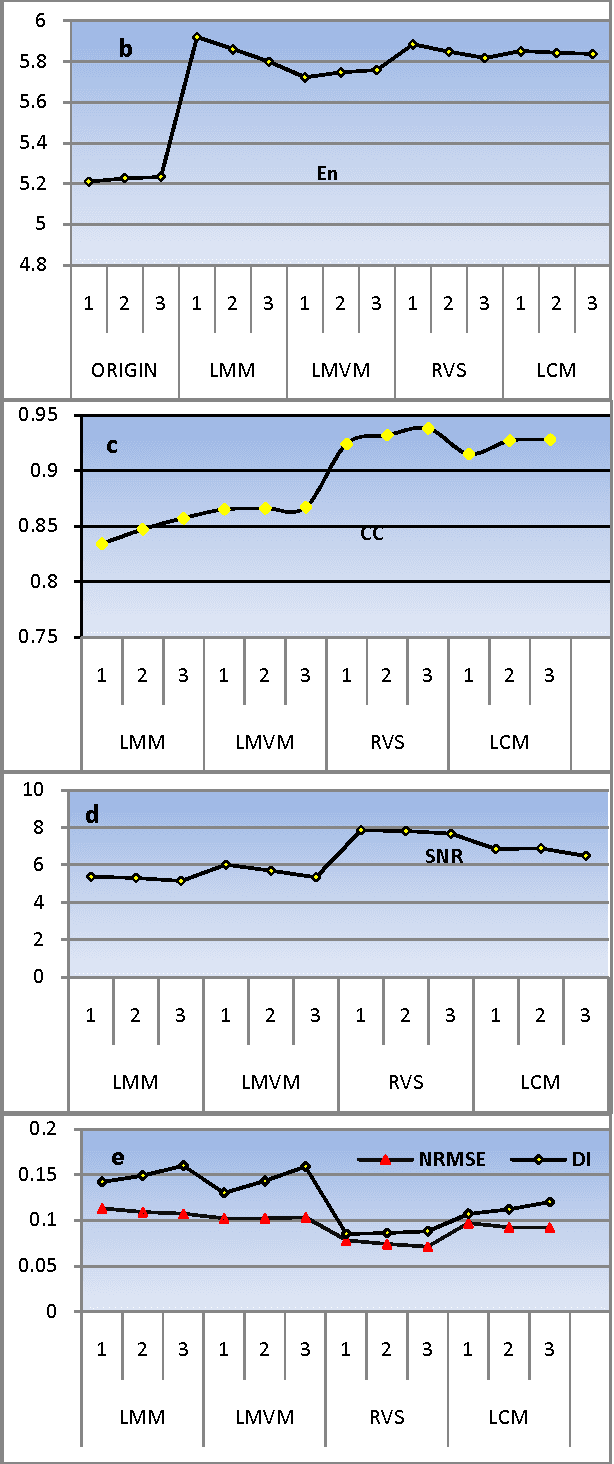

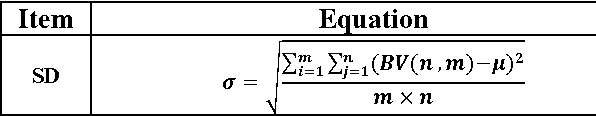

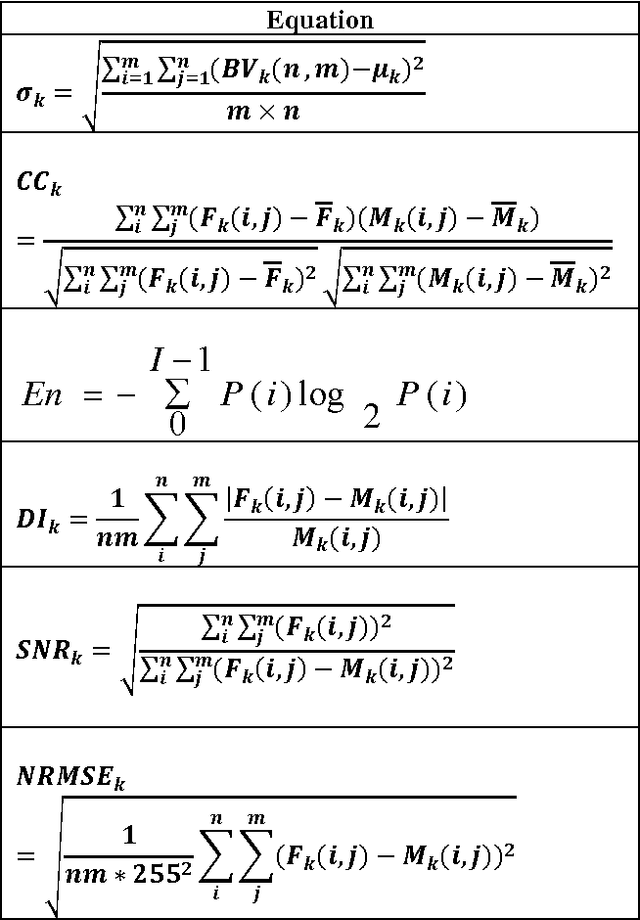

The Statistical methods of Pixel-Based Image Fusion Techniques

Aug 12, 2011

Abstract: There are many image fusion methods that can be used to produce high-resolution multispectral images from a high-resolution panchromatic (PAN) image and low-resolution multispectral (MS) remotely sensed images. This paper studies image fusion with different statistical techniques, namely Local Mean Matching (LMM), Local Mean and Variance Matching (LMVM), Regression Variable Substitution (RVS) and Local Correlation Modeling (LCM), and compares them with one another in order to choose the best technique for multi-resolution satellite images. The paper also covers analytical techniques for evaluating the quality of the fused image (F), using Standard Deviation (SD), Entropy (En), Correlation Coefficient (CC), Signal-to-Noise Ratio (SNR), Normalization Root Mean Square Error (NRMSE) and Deviation Index (DI) to estimate the quality and degree of information improvement of a fused image quantitatively.

* Keywords: Data Fusion, Resolution Enhancement, Statistical fusion, Correlation Modeling, Matching, pixel based fusion
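Three of the quality measures listed in the abstract (SD, En and CC) have widely used textbook forms, sketched below for a single band given as a flat list of pixel values. This is only an illustration under those standard definitions; the paper's exact formulas may differ, and SNR, NRMSE and DI are left out because their definitions vary between authors.

```python
import math
from collections import Counter

def standard_deviation(band):
    # Population standard deviation of the pixel values in one band.
    n = len(band)
    mean = sum(band) / n
    return math.sqrt(sum((v - mean) ** 2 for v in band) / n)

def entropy(band):
    # Shannon entropy (in bits) of the grey-level histogram.
    n = len(band)
    counts = Counter(band)
    return -sum((c / n) * math.log2(c / n) for c in counts.values())

def correlation_coefficient(a, b):
    # Pearson correlation between two bands of equal length,
    # e.g. a fused band and the matching original MS band.
    # Assumes neither band is constant (otherwise the denominator is zero).
    n = len(a)
    mean_a, mean_b = sum(a) / n, sum(b) / n
    cov = sum((x - mean_a) * (y - mean_b) for x, y in zip(a, b))
    var_a = sum((x - mean_a) ** 2 for x in a)
    var_b = sum((y - mean_b) ** 2 for y in b)
    return cov / math.sqrt(var_a * var_b)
```

A correlation close to 1 between a fused band and its source MS band is usually read as good spectral preservation, while higher SD and entropy are usually read as more contrast and information content.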

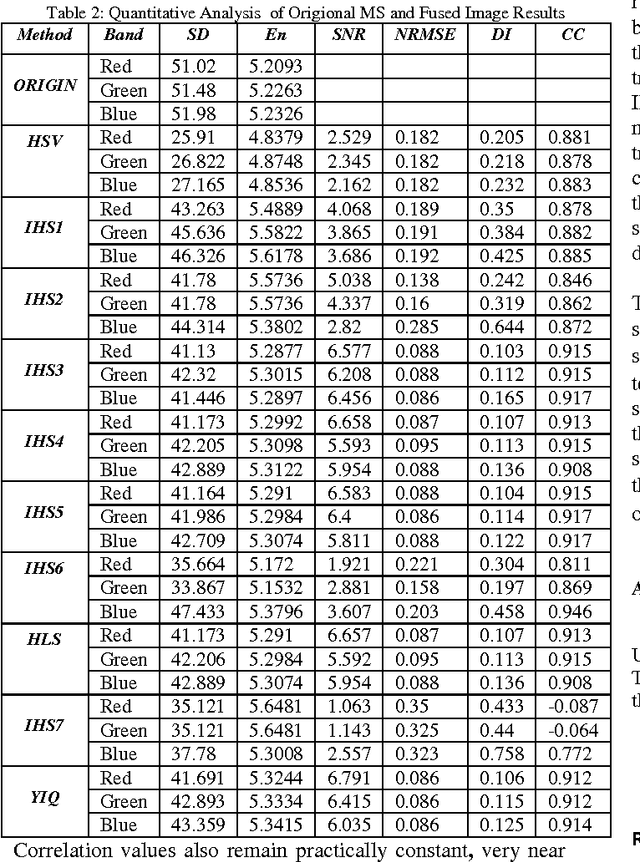

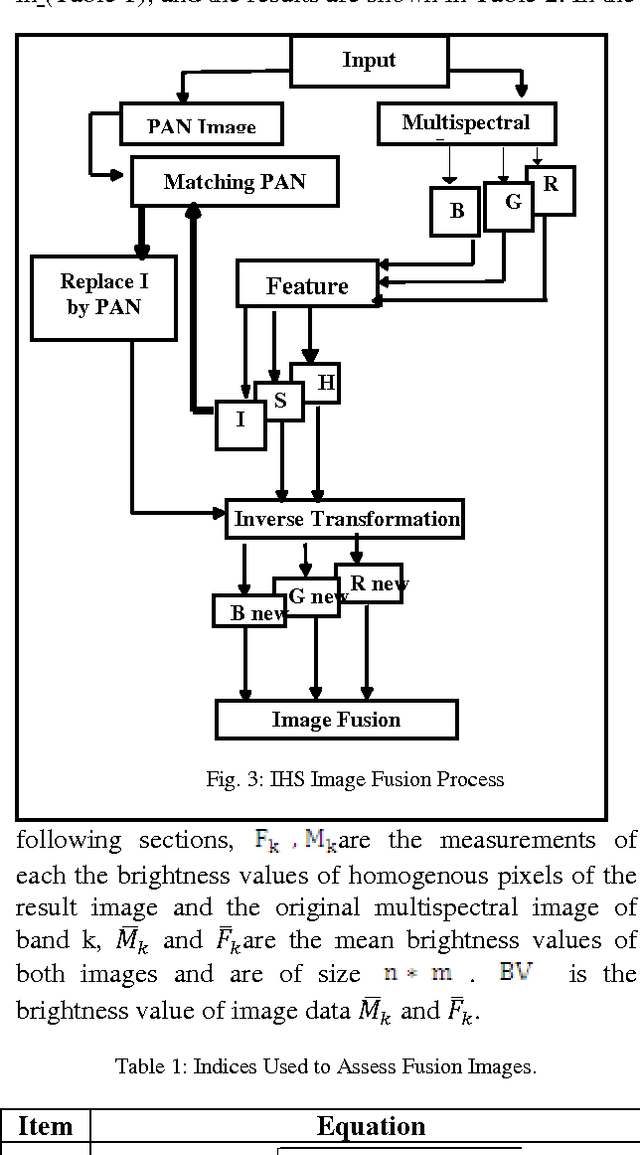

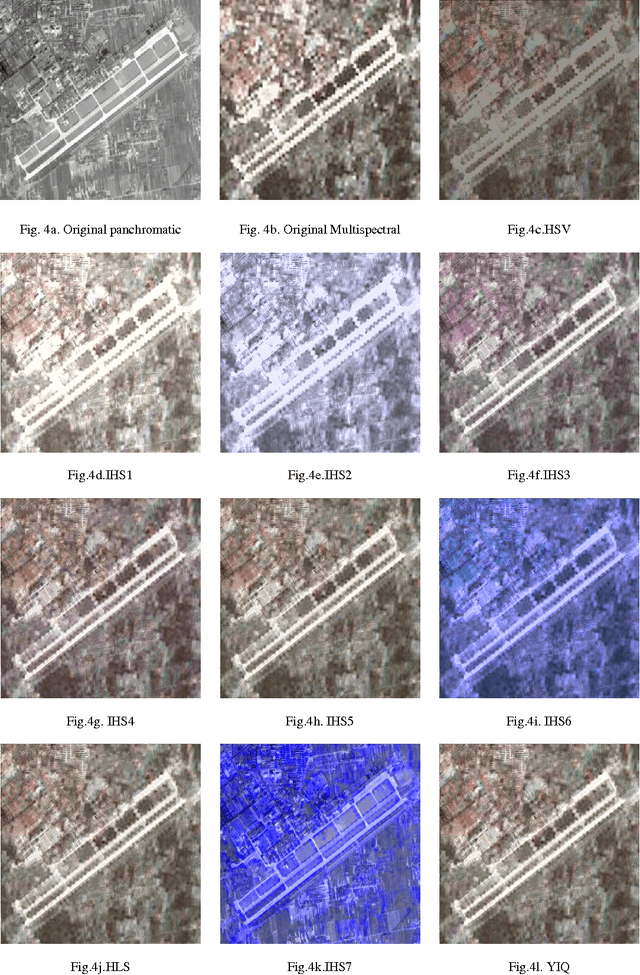

The IHS Transformations Based Image Fusion

Jul 26, 2011

Abstract: The IHS sharpening technique is one of the most commonly used sharpening techniques. Different transformations have been developed to convert a color image from RGB space to IHS space. In the literature, various scientists have proposed alternative IHS transformations; many papers report good results whereas others report bad ones, and some do not state which IHS transformation formula was used. In addition, many papers show different formulas for the IHS transformation matrix. This leads to confusion: what is the exact formula of the IHS transformation? The main purpose of this work is therefore to explore different IHS transformation techniques and test them for IHS-based image fusion. The image fusion performance was evaluated using various methods to estimate the quality and degree of information improvement of a fused image quantitatively.

* Keywords: Image Fusion, Color Models, IHS, HSV, HSL, YIQ, transformations
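As a concrete reference point, the sketch below shows one commonly cited variant, the additive ("fast") form of IHS fusion with a linear intensity I = (R + G + B) / 3. It is an assumption chosen for illustration and is not necessarily one of the transformations compared in the paper; the function names are hypothetical, and the MS image is assumed to be already resampled to the PAN grid.

```python
def ihs_fuse_pixel(rgb, pan):
    # Linear intensity of the multispectral pixel (assumed definition).
    r, g, b = rgb
    intensity = (r + g + b) / 3.0
    # Replacing I with PAN in a linear IHS model is equivalent to adding
    # the difference (PAN - I) to every band.
    delta = pan - intensity
    return (r + delta, g + delta, b + delta)

def ihs_fuse_image(ms_pixels, pan_pixels):
    # ms_pixels: list of (R, G, B) tuples; pan_pixels: list of PAN values
    # at the same pixel positions.
    return [ihs_fuse_pixel(rgb, pan) for rgb, pan in zip(ms_pixels, pan_pixels)]
```

With this variant the mean of the fused bands equals the PAN value at each pixel, which is why the result inherits PAN's spatial detail; nonlinear IHS models (for example HSV or HSL based) behave differently.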

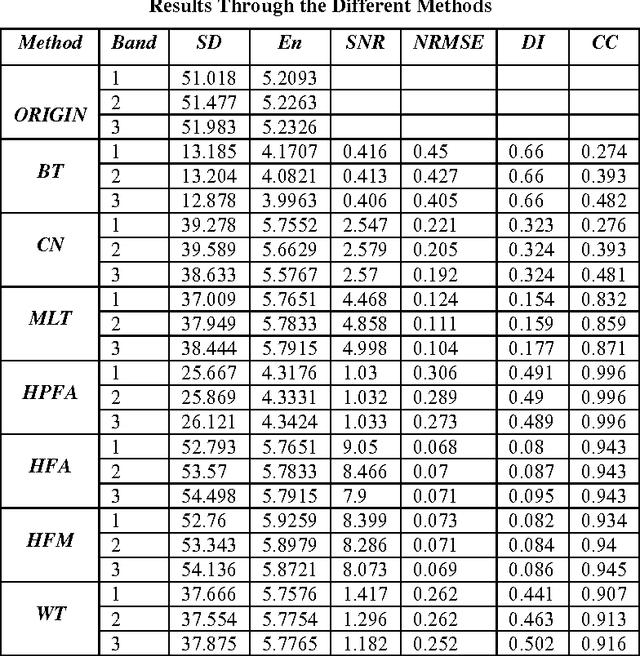

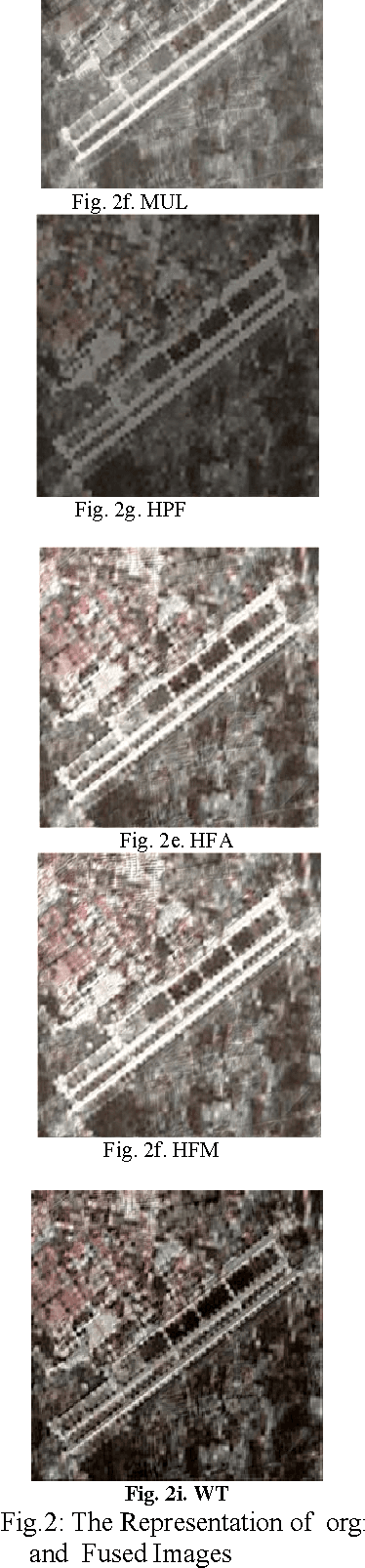

Arithmetic and Frequency Filtering Methods of Pixel-Based Image Fusion Techniques

Jul 19, 2011

Abstract: In remote sensing, image fusion is a useful tool for fusing high spatial resolution panchromatic (PAN) images with lower spatial resolution multispectral (MS) images to create a high spatial resolution multispectral fused image (F) while preserving the spectral information of the MS image. Many PAN-sharpening, or pixel-based, image fusion techniques have been developed to enhance the spatial resolution while preserving the spectral properties of the MS image. This paper studies image fusion using two types of pixel-based techniques: arithmetic combination methods and frequency filtering methods. The first type includes the Brovey Transform (BT), the Color Normalized Transformation (CN) and the Multiplicative Method (MLT). The second type includes the High-Pass Filter Additive Method (HPFA), the High-Frequency-Addition Method (HFA), the High Frequency Modulation Method (HFM) and the Wavelet transform-based fusion method (WT). The paper also covers analytical techniques for evaluating the quality of the fused image (F), using Standard Deviation (SD), Entropy (En), Correlation Coefficient (CC), Signal-to-Noise Ratio (SNR), Normalization Root Mean Square Error (NRMSE) and Deviation Index (DI) to estimate the quality and degree of information improvement of a fused image quantitatively.

* Image Fusion, Pixel-Based Fusion, Brovey Transform, Color Normalized, High-Pass Filter, Modulation, Wavelet transform
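Of the arithmetic combination methods named in the abstract, the Brovey Transform has a widely quoted per-pixel form: each MS band is scaled by the ratio of the PAN value to the sum of the MS bands. The sketch below follows that common definition, which may differ in detail from the paper's formulation; `brovey_pixel` is a hypothetical helper name, and the MS pixel is assumed to be resampled to the PAN grid.

```python
def brovey_pixel(ms, pan):
    # ms: tuple of multispectral band values at one pixel.
    # pan: panchromatic value at the same pixel.
    total = sum(ms)
    if total == 0:
        # Guard against division by zero on fully dark pixels.
        return tuple(0.0 for _ in ms)
    # Each band keeps its share of the total, rescaled to the PAN brightness.
    return tuple(v * pan / total for v in ms)
```

For instance, `brovey_pixel((10, 20, 30), 120)` keeps the 1:2:3 band ratios while the fused bands sum to the PAN value of 120. Because the ratios are preserved but the overall brightness is replaced, this kind of method is often reported to sharpen well at the cost of some spectral distortion.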
