Fernando Giner

An Intrinsic Framework of Information Retrieval Evaluation Measures

Apr 02, 2023

Abstract: Information retrieval (IR) evaluation measures are cornerstones for determining the suitability and task performance efficiency of retrieval systems. Their metric and scale properties make it possible to compare one system against another to establish differences or similarities. Based on the representational theory of measurement, this paper determines these properties by exploiting the information contained in a retrieval measure itself. It establishes the intrinsic framework of a retrieval measure, which is the common scenario when the domain set is not explicitly specified. A method to determine the metric and scale properties of any retrieval measure is provided, requiring knowledge of only some of its attained values. The method establishes three main categories of retrieval measures according to their intrinsic properties. Some common user-oriented and system-oriented evaluation measures are classified according to the presented taxonomy.

A comment to "A General Theory of IR Evaluation Measures"

Mar 28, 2023

Abstract: The paper "A General Theory of IR Evaluation Measures" develops a formal framework to determine whether IR evaluation measures are interval scales. This comment points out some limitations of its conclusions.

On the Metric Properties of IR Evaluation Measures Based on Ranking Axioms

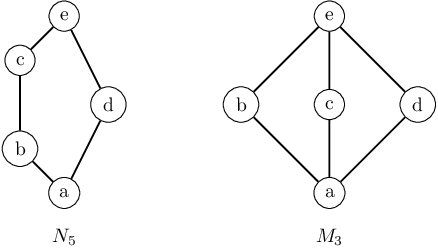

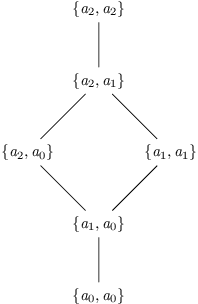

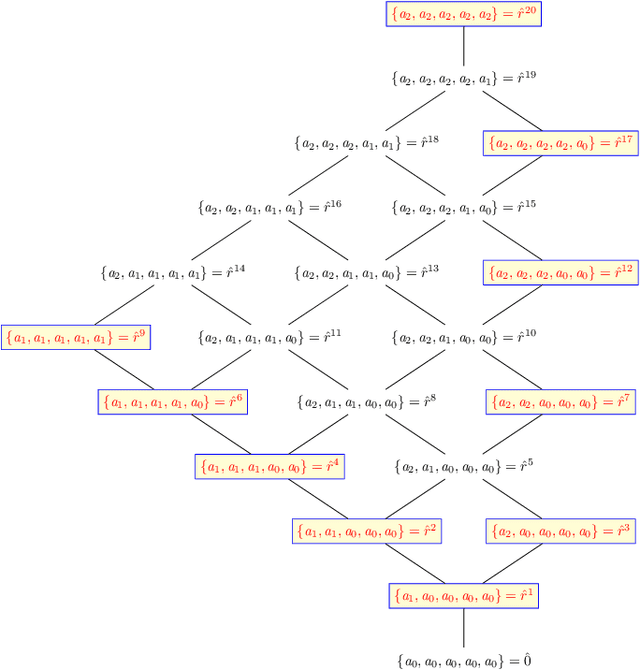

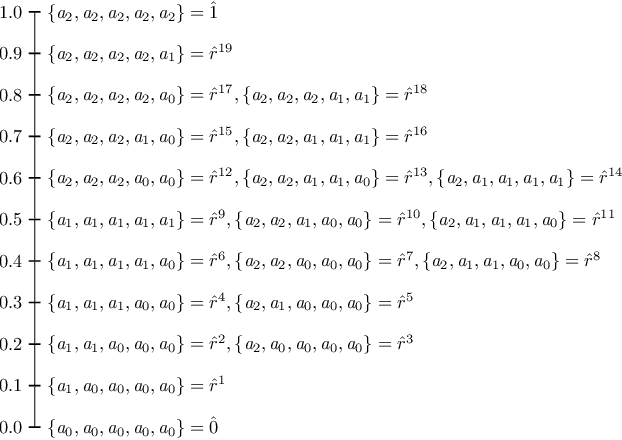

Jul 07, 2022

Abstract: The axiomatic analysis of IR evaluation metrics has contributed to a better understanding of their properties. Some works have modelled the effectiveness of retrieval measures with axioms that capture desirable properties on the set of ranked lists of documents. Recently, it has been shown that three of these axioms lead to some orderings. This work formally explores the metric properties of the set of rankings, endowed with these orderings. Based on lattice theory, the possible metrics and pseudo-metrics defined on these structures are determined. It is found that, when the relevant documents are prioritized, precision, recall, RBP and DCG are metrics on the set of rankings; however, they are pseudo-metrics when the swapping of documents is considered.

On the Effect of Ranking Axioms on IR Evaluation Metrics

Jul 04, 2022

Abstract: The study of IR evaluation metrics through axiomatic analysis enables a better understanding of their numerical properties. Some works have modelled the effectiveness of retrieval metrics with axioms that capture desirable properties on the set of rankings of documents. This paper formally explores the effect of these ranking axioms on the numerical values of some IR evaluation metrics. It focuses on the set of ranked lists of documents with multigrade relevance. The possible orderings in this set are derived from three commonly accepted ranking axioms on retrieval metrics; then, they are classified by their latticial properties. When relevant documents are prioritised, a subset of document rankings is identified: the join-irreducible elements, which have some resemblance to the concept of a basis in a vector space. It is possible to compute the precision, recall, RBP or DCG values of any ranking from their values in the join-irreducible elements. However, this is not the case when the swapping of documents is considered.
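For readers unfamiliar with the four measures named in these abstracts, the following is a minimal sketch of their textbook definitions on a ranked list of graded relevance labels. It is not taken from the papers: the function names, the convention that any grade above 0 counts as relevant for precision, recall and RBP, the `log2(rank + 1)` DCG discount, and the default persistence `p` are all assumptions made for illustration.

```python
import math

def precision_at_k(rels, k):
    """Fraction of the top-k documents that are relevant (grade > 0)."""
    return sum(1 for r in rels[:k] if r > 0) / k

def recall_at_k(rels, k, total_relevant):
    """Fraction of all relevant documents that appear in the top k."""
    return sum(1 for r in rels[:k] if r > 0) / total_relevant

def rbp(rels, p=0.8):
    """Rank-biased precision with persistence p, using binarised relevance."""
    return (1 - p) * sum((1 if r > 0 else 0) * p ** i for i, r in enumerate(rels))

def dcg(rels):
    """Discounted cumulative gain with gain = grade and a log2(rank + 1) discount."""
    return sum(r / math.log2(i + 2) for i, r in enumerate(rels))

# Hypothetical ranking: relevance grades of the documents at ranks 1..4.
ranking = [2, 0, 1, 0]
print(precision_at_k(ranking, 4), recall_at_k(ranking, 4, 2), rbp(ranking, 0.5), dcg(ranking))
```

Each function maps a ranking to a single number, which is the kind of numerical value whose behaviour under the ranking axioms the abstracts above discuss.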
