Fang Qingyun

Fusion Detection via Distance-Decay IoU and weighted Dempster-Shafer Evidence Theory

Dec 06, 2021

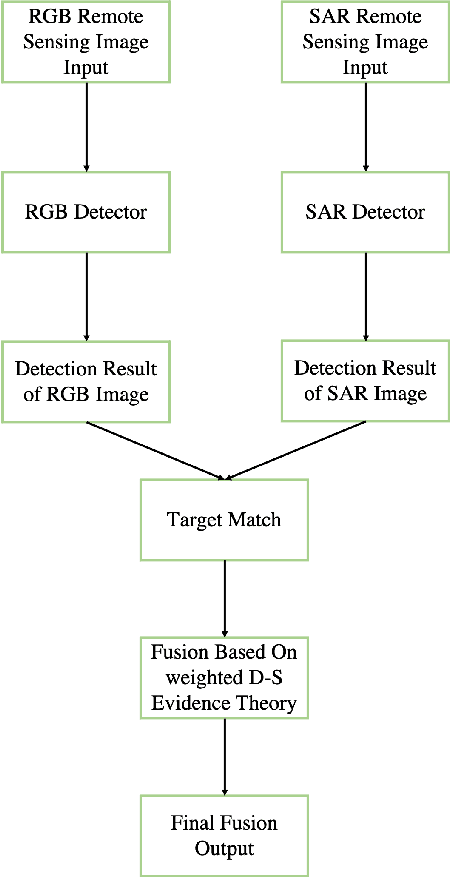

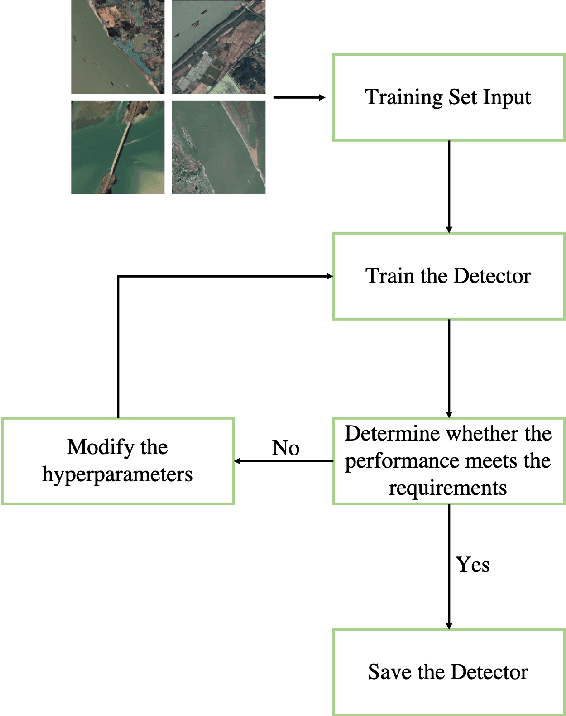

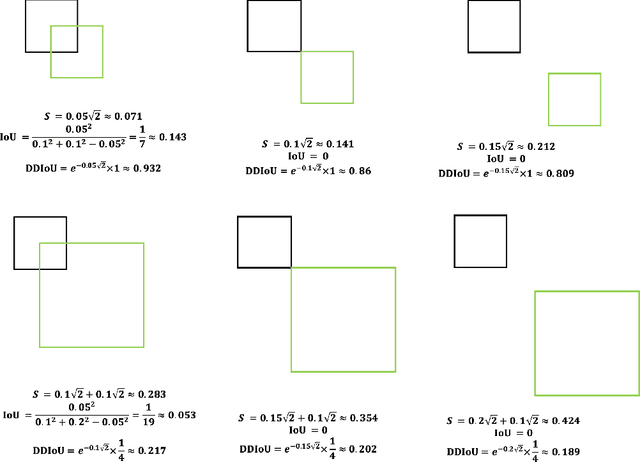

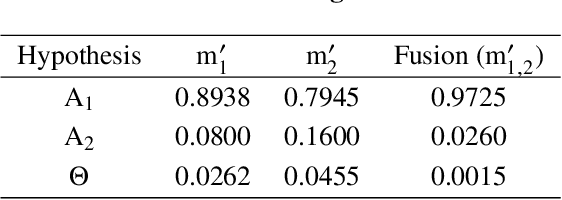

Abstract: In recent years, object detection in remote sensing imagery has received increasing attention. However, traditional optical detection is highly susceptible to illumination changes and weather anomalies. It remains a challenge to effectively utilize cross-modality information from multi-source remote sensing images, especially optical and synthetic aperture radar images, to achieve all-day, all-weather detection with high accuracy and speed. To this end, a fast multi-source fusion detection framework is proposed in this paper. A novel distance-decay intersection over union is employed to encode the shape properties of the targets with scale invariance, so that the same target in multi-source images can be paired accurately. Furthermore, weighted Dempster-Shafer evidence theory is used to combine the optical and synthetic aperture radar detections, which avoids the drawback of feature-level fusion, namely its need for a large amount of paired data. In addition, paired optical and synthetic aperture radar images of the container ship Ever Given, which ran aground in the Suez Canal, are used to demonstrate the fusion algorithm. On a self-built data set, the average precision of the proposed fusion detection framework outperforms optical detection by 20.13%.
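The abstract does not spell out how the evidence is weighted, so the following is only a minimal sketch of decision-level fusion with the classical Dempster rule of combination, assuming a two-hypothesis frame (`target`, `background`) and assuming that the weighting is done by Shafer discounting. The names `discount`, `combine`, the frame, and the example confidences are all hypothetical and are not taken from the paper.

```python
from itertools import product

# Assumed frame of discernment: a paired detection is a target or background.
T = frozenset({"target"})
THETA = frozenset({"target", "background"})  # full frame, i.e. "unknown"


def discount(mass, weight):
    """Shafer discounting (assumed weighting scheme): scale each focal
    element by `weight` and move the remaining mass to the full frame."""
    out = {k: weight * v for k, v in mass.items() if k != THETA}
    out[THETA] = 1.0 - weight + weight * mass.get(THETA, 0.0)
    return out


def combine(m1, m2):
    """Classical Dempster rule: multiply masses, keep non-empty
    intersections, and renormalize by 1 minus the conflicting mass."""
    fused, conflict = {}, 0.0
    for (a, pa), (b, pb) in product(m1.items(), m2.items()):
        inter = a & b
        if inter:
            fused[inter] = fused.get(inter, 0.0) + pa * pb
        else:
            conflict += pa * pb
    if conflict >= 1.0:
        raise ValueError("sources are in total conflict")
    return {k: v / (1.0 - conflict) for k, v in fused.items()}


# Hypothetical detector confidences for one paired detection.
optical = {T: 0.6, THETA: 0.4}
sar = {T: 0.8, THETA: 0.2}

fused = combine(optical, sar)
# Trust the optical detector less, e.g. under cloud cover (assumed weight 0.5).
fused_weighted = combine(discount(optical, 0.5), discount(sar, 1.0))
print(fused[T], fused_weighted[T])
```

In this sketch, two agreeing detectors reinforce each other (the fused belief in `target` is about 0.92, higher than either input), while discounting the optical source lowers the fused belief to about 0.86. Because the fusion operates on detector outputs rather than features, it needs no paired training data, which is the advantage the abstract points to.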

Cross-Modality Fusion Transformer for Multispectral Object Detection

Dec 01, 2021

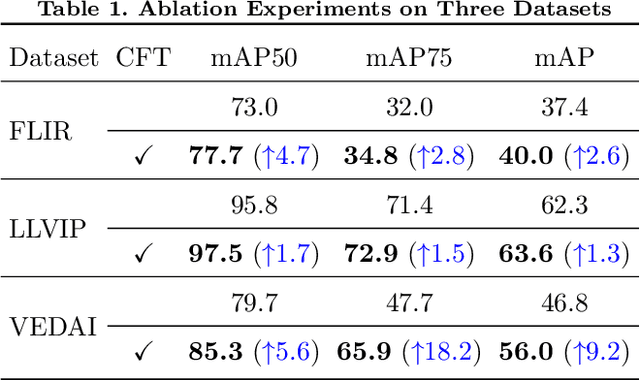

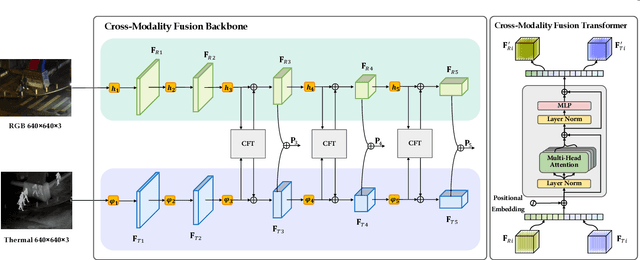

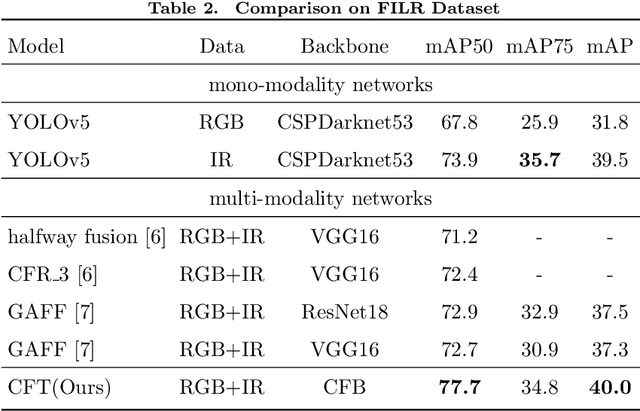

Abstract: Multispectral image pairs can provide combined information, making object detection applications more reliable and robust in the open world. To fully exploit the different modalities, we present a simple yet effective cross-modality feature fusion approach, named Cross-Modality Fusion Transformer (CFT). Unlike prior CNN-based works, our network is guided by the transformer scheme: it learns long-range dependencies and integrates global contextual information in the feature extraction stage. More importantly, by leveraging the self-attention of the transformer, the network can naturally carry out simultaneous intra-modality and inter-modality fusion and robustly capture the latent interactions between the RGB and thermal domains, thereby significantly improving the performance of multispectral object detection. Extensive experiments and ablation studies on multiple datasets demonstrate that our approach is effective and achieves state-of-the-art detection performance. Our code and models are available at https://github.com/DocF/multispectral-object-detection.
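To illustrate why self-attention yields intra-modality and inter-modality fusion at the same time, here is a minimal single-head sketch in plain Python. It assumes that the RGB and thermal feature maps have already been flattened into token lists and that the two lists are simply concatenated before attention; positional embeddings, multiple heads, and learned projections are omitted. The function `fuse_tokens` and the toy values are hypothetical and do not reproduce the released CFT code.

```python
import math


def matmul(a, b):
    """Multiply two matrices given as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)]
            for row in a]


def softmax(row):
    """Numerically stable softmax over one list of scores."""
    m = max(row)
    exps = [math.exp(x - m) for x in row]
    total = sum(exps)
    return [e / total for e in exps]


def fuse_tokens(rgb, thermal, wq, wk, wv):
    """Single-head self-attention over the concatenated token sequence."""
    tokens = rgb + thermal                      # (2N, d) after concatenation
    q, k, v = (matmul(tokens, w) for w in (wq, wk, wv))
    scale = math.sqrt(len(k[0]))
    k_t = [list(col) for col in zip(*k)]        # transpose of K
    attn = [softmax([s / scale for s in row]) for row in matmul(q, k_t)]
    out = matmul(attn, v)
    n = len(rgb)
    return out[:n], out[n:], attn               # split back per modality


# Toy example: two tokens per modality, identity projections (assumed).
identity = [[1.0, 0.0], [0.0, 1.0]]
rgb = [[1.0, 0.0], [0.0, 1.0]]
thermal = [[1.0, 1.0], [0.5, -0.5]]
rgb_out, thermal_out, attn = fuse_tokens(rgb, thermal, identity, identity, identity)
print(len(attn), len(attn[0]))
```

Each row of `attn` spans all tokens of both modalities: the two diagonal blocks weight tokens from the same modality (intra-modality), and the two off-diagonal blocks weight tokens from the other modality (inter-modality). A single attention step therefore mixes both kinds of context.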
