Falak Shah

PoseSync: Robust pose based video synchronization

Aug 24, 2023

Abstract: Pose-based video synchronization has applications in multiple domains, such as gameplay performance evaluation, choreography, and guiding athletes. The subject's actions can be compared and evaluated side by side against those performed by professionals. In this paper, we propose an end-to-end pipeline for synchronizing videos based on pose. The first step crops the region of the image where the person is present, followed by pose detection on the cropped image. Dynamic Time Warping (DTW) is then applied to angle/distance measures between the pose keypoints, leading to a scale- and shift-invariant pose matching pipeline.
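To make the last step concrete, here is a minimal sketch of DTW applied to a joint-angle feature. It is an illustration under assumptions, not the paper's implementation: the abstract does not say which angles or distances are used or which DTW variant is applied, so the helper names `joint_angle` and `dtw_path`, the single-angle feature, and the Euclidean frame cost are all hypothetical choices.

```python
import numpy as np


def joint_angle(a, b, c):
    """Angle (radians) at keypoint b formed by keypoints a-b-c.

    Angles are unchanged when the whole pose is translated or uniformly
    scaled, which is what makes them a shift- and scale-invariant feature.
    """
    v1 = np.asarray(a, dtype=float) - np.asarray(b, dtype=float)
    v2 = np.asarray(c, dtype=float) - np.asarray(b, dtype=float)
    cos = np.dot(v1, v2) / (np.linalg.norm(v1) * np.linalg.norm(v2) + 1e-12)
    return float(np.arccos(np.clip(cos, -1.0, 1.0)))


def dtw_path(x, y):
    """Classic O(n*m) DTW between two per-frame feature sequences.

    x, y: arrays of shape (n,) or (n, d). Returns (total_cost, path), where
    path is a list of (i, j) pairs aligning frame i of x to frame j of y.
    """
    x = np.asarray(x, dtype=float)
    y = np.asarray(y, dtype=float)
    if x.ndim == 1:
        x = x[:, None]
    if y.ndim == 1:
        y = y[:, None]
    n, m = len(x), len(y)

    # cost[i, j] = best cumulative cost aligning x[:i] with y[:j]
    cost = np.full((n + 1, m + 1), np.inf)
    cost[0, 0] = 0.0
    for i in range(1, n + 1):
        for j in range(1, m + 1):
            d = np.linalg.norm(x[i - 1] - y[j - 1])
            cost[i, j] = d + min(cost[i - 1, j - 1], cost[i - 1, j], cost[i, j - 1])

    # Backtrack from the end to recover the frame-to-frame alignment.
    i, j = n, m
    path = []
    while i > 0 and j > 0:
        path.append((i - 1, j - 1))
        candidates = [
            (cost[i - 1, j - 1], i - 1, j - 1),
            (cost[i - 1, j], i - 1, j),
            (cost[i, j - 1], i, j - 1),
        ]
        _, i, j = min(candidates, key=lambda s: s[0])
    path.reverse()
    return float(cost[n, m]), path
```

In use, one would compute one or more angles per frame for each video (for example an elbow angle from shoulder, elbow, and wrist keypoints), stack them into per-frame feature vectors, and read the synchronized frame pairs off the returned path.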

Faster object tracking pipeline for real time tracking

Nov 08, 2020

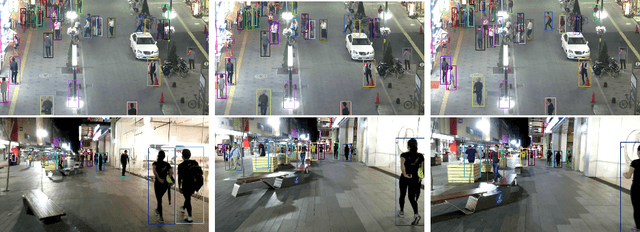

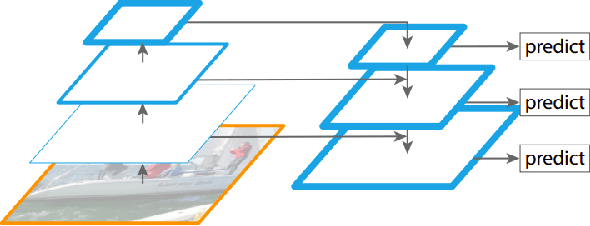

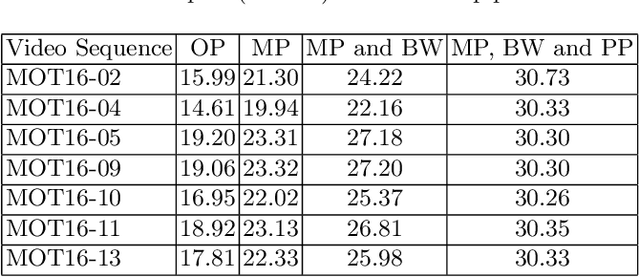

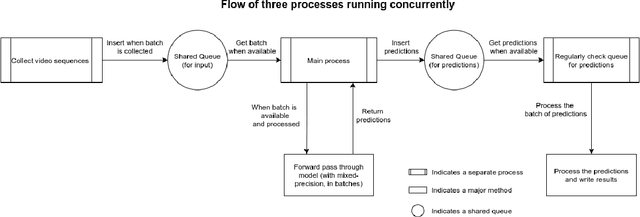

Abstract: Multi-object tracking (MOT) is a challenging practical problem for vision-based applications. Most recent approaches to MOT use precomputed detections from models such as Faster R-CNN, fine-tuning bounding boxes and performing association in subsequent phases. However, this is not suitable for actual industrial applications, where detections are not available upfront. In their recent work, Wang et al. proposed a tracking pipeline that uses a joint detection and embedding model and performs target localization and association in real time. Upon investigating the tracking-by-detection paradigm, we find that the tracking pipeline can be made faster by performing the localization and association tasks in parallel with model prediction. This, together with other computational optimizations such as using a mixed-precision model and performing batchwise detection, results in a speed-up of the tracking pipeline by 57.8% (19 FPS to 30 FPS) at Full HD resolution. Moreover, the speed is independent of the object density in the image sequence. The main contribution of this paper is a generic pipeline that can be used to speed up detection-based object tracking methods. We also review different batch sizes for optimal performance, taking GPU memory usage and speed into consideration.
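The idea of overlapping model prediction with association can be sketched as a simple producer/consumer pipeline. This is an illustrative sketch under assumptions rather than the paper's code: `run_pipeline`, `detect_batch`, and `associate` are hypothetical names, and the sketch assumes the detector releases Python's global interpreter lock while it runs (as GPU inference libraries commonly do), so that the two stages can actually overlap.

```python
import queue
import threading


def run_pipeline(frames, detect_batch, associate, batch_size=4, max_pending=2):
    """Run batched detection in a background thread while the main thread
    associates detections from earlier batches.

    detect_batch(list_of_frames) -> list of per-frame detections (hypothetical)
    associate(detections)        -> per-frame tracking result (hypothetical)
    """
    pending = queue.Queue(maxsize=max_pending)  # bounds memory held in flight
    sentinel = object()
    errors = []

    def producer():
        try:
            for start in range(0, len(frames), batch_size):
                batch = frames[start:start + batch_size]
                pending.put(detect_batch(batch))  # blocks if consumer lags
        except Exception as exc:  # surface detector failures to the caller
            errors.append(exc)
        finally:
            pending.put(sentinel)

    worker = threading.Thread(target=producer, daemon=True)
    worker.start()

    results = []
    while True:
        item = pending.get()
        if item is sentinel:
            break
        # Association stays sequential so track state is updated in frame order.
        for detections in item:
            results.append(associate(detections))

    worker.join()
    if errors:
        raise errors[0]
    return results
```

The bounded queue is the main design choice here: it lets detection run ahead of association by at most `max_pending` batches, which trades a little latency for throughput while keeping memory use predictable. The batch size would need tuning against GPU memory, as the abstract notes.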
