Fabiano Ferreira Luz

Semantic Parsing: Syntactic assurance to target sentence using LSTM Encoder CFG-Decoder

Jul 18, 2018

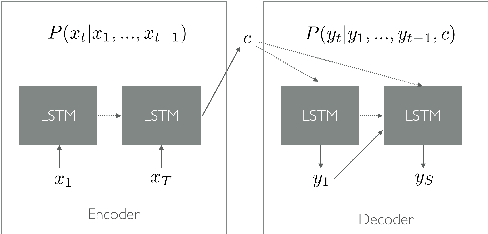

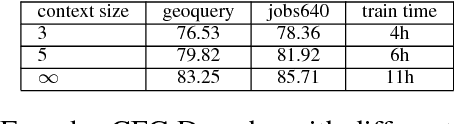

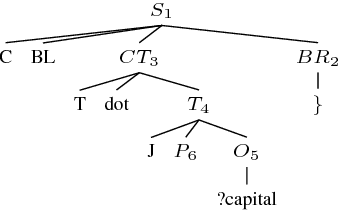

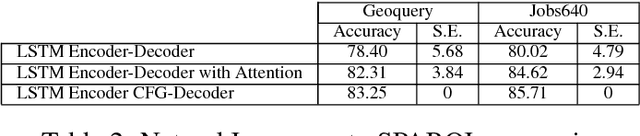

Abstract:Semantic parsing can be defined as the process of mapping natural language sentences into a machine interpretable, formal representation of its meaning. Semantic parsing using LSTM encoder-decoder neural networks have become promising approach. However, human automated translation of natural language does not provide grammaticality guarantees for the sentences generate such a guarantee is particularly important for practical cases where a data base query can cause critical errors if the sentence is ungrammatical. In this work, we propose an neural architecture called Encoder CFG-Decoder, whose output conforms to a given context-free grammar. Results are show for any implementation of such architecture display its correctness and providing benchmark accuracy levels better than the literature.

Semantic Parsing Natural Language into SPARQL: Improving Target Language Representation with Neural Attention

Mar 12, 2018

Abstract:Semantic parsing is the process of mapping a natural language sentence into a formal representation of its meaning. In this work we use the neural network approach to transform natural language sentence into a query to an ontology database in the SPARQL language. This method does not rely on handcraft-rules, high-quality lexicons, manually-built templates or other handmade complex structures. Our approach is based on vector space model and neural networks. The proposed model is based in two learning steps. The first step generates a vector representation for the sentence in natural language and SPARQL query. The second step uses this vector representation as input to a neural network (LSTM with attention mechanism) to generate a model able to encode natural language and decode SPARQL.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge