F. Ratnikov

Generative Adversarial Networks for the fast simulation of the Time Projection Chamber responses at the MPD detector

Mar 30, 2022

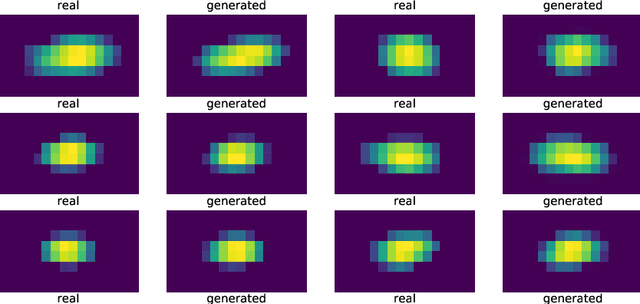

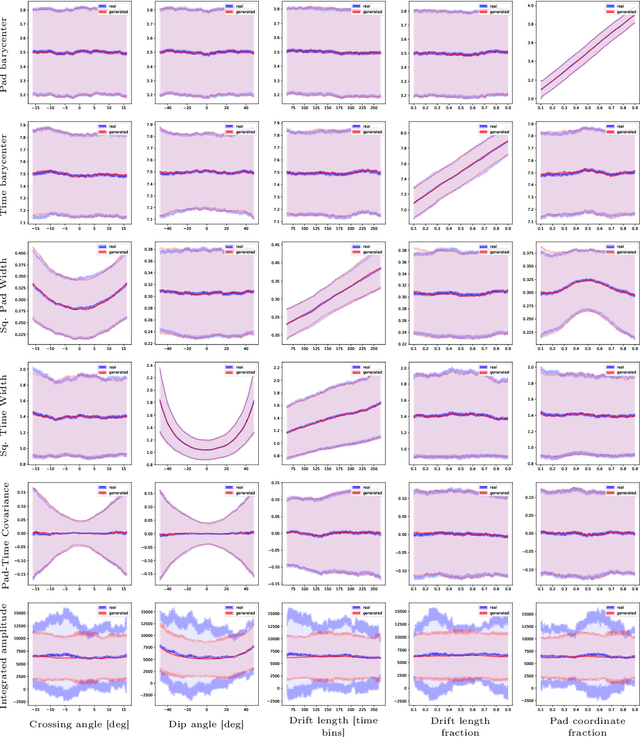

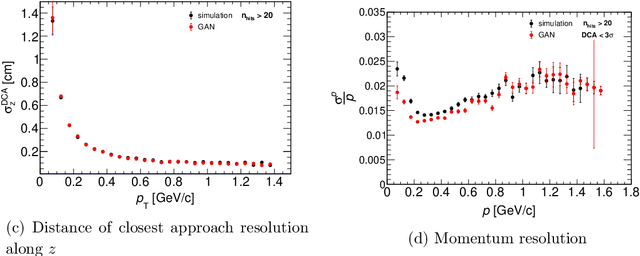

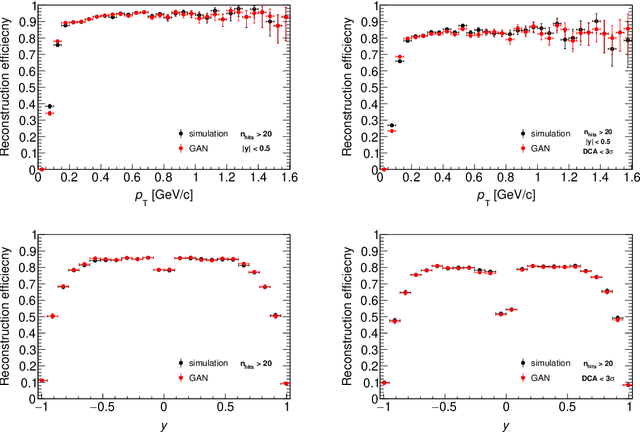

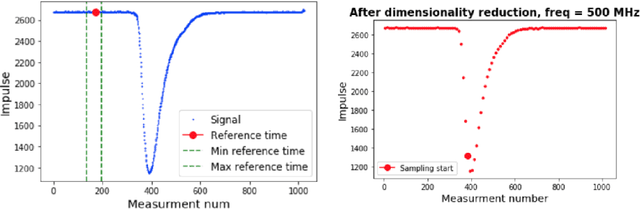

Abstract: Detailed detector simulation models are vital for the successful operation of modern high-energy physics experiments. In most cases, such models require a significant amount of computing resources to run. Often this cannot be afforded, and less resource-intensive approaches are desired. In this work, we demonstrate the applicability of Generative Adversarial Networks (GANs) as the basis for such fast-simulation models for the case of the Time Projection Chamber (TPC) at the MPD detector at the NICA accelerator complex. Our prototype GAN-based model of the TPC runs more than an order of magnitude faster than the detailed simulation, without any noticeable drop in the quality of the high-level reconstruction characteristics of the generated data. Approaches with direct and indirect optimization of the quality metrics are compared.

Simulating the Time Projection Chamber responses at the MPD detector using Generative Adversarial Networks

Dec 08, 2020

Abstract: High-energy physics experiments rely heavily on detailed detector simulation models in many tasks. Running these detailed models typically requires a notable share of the computing time available to the experiments. In this work, we demonstrate a novel approach to speed up the simulation of the Time Projection Chamber tracker of the MPD experiment at the NICA accelerator complex. Our method is based on a Generative Adversarial Network, a deep learning technique that allows implicit non-parametric estimation of the population distribution for a given set of objects. This approach lets us learn, and then sample from, the distribution of raw detector responses conditioned on the parameters of the charged particle tracks. To evaluate the quality of the proposed model, we integrate it into the MPD software stack and demonstrate that it produces high-quality events similar to those of the detailed simulator, with a speed-up of at least an order of magnitude.

Using machine learning to speed up new and upgrade detector studies: a calorimeter case

Mar 11, 2020

Abstract: In this paper, we discuss how advanced machine learning techniques allow physicists to perform in-depth studies of the realistic operating modes of detectors at the design stage. The proposed approach can be applied to both the conceptual design (CDR) and technical design (TDR) phases of future detectors, as well as to upgrades of existing detectors. Machine learning approaches may speed up the verification of possible detector configurations and automate the entire detector R&D cycle, which is often accompanied by a large number of scattered studies. We present an approach to using machine learning for detector R&D and its optimisation cycle, with an emphasis on the electromagnetic calorimeter upgrade project for the LHCb detector \cite{lhcls3}. The spatial reconstruction and time-of-arrival properties of the electromagnetic calorimeter are demonstrated.

Deep learning for inferring cause of data anomalies

Nov 19, 2017

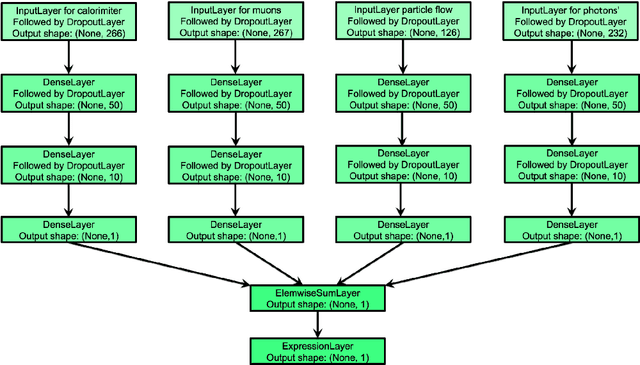

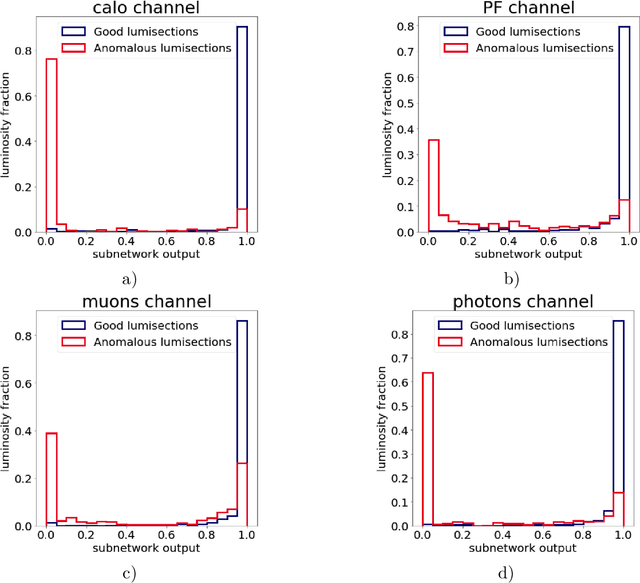

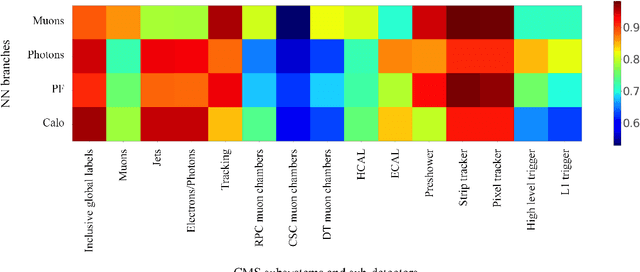

Abstract: Daily operation of a large-scale experiment is a resource-consuming task, particularly from the perspective of routine data quality monitoring. Typically, data come from different sub-detectors, and the global quality of the data depends on the combined performance of each of them. In this paper, we consider the problem of identifying the channels in which anomalies occurred. We introduce a generic deep learning model and prove that, under reasonable assumptions, the model learns to identify the 'channels' affected by an anomaly. Such a model could be used to cross-check and assist data quality managers and to identify good channels in anomalous data samples. The main novelty of the method is that the model does not require ground-truth labels for each channel; only a global flag is used. This distinguishes the model from classical classification methods. Applied to CMS data collected in 2010, the approach demonstrates its ability to decompose anomalies into separate channels.
