Eray Özkural

Zeta Distribution and Transfer Learning Problem

Jun 23, 2018

Abstract: We explore the relations between the zeta distribution and algorithmic information theory via a new model of the transfer learning problem. The program distribution is approximated by a zeta distribution with parameter near $1$. We model the training sequence as a stochastic process. We analyze the upper temporal bound for learning a training sequence and its entropy rates, assuming an oracle for the transfer learning problem. We argue from empirical evidence that power-law models are suitable for natural processes. Four sequence models are proposed. The random typing model resembles the no-free-lunch setting, where transfer learning does not work. The zeta process independently samples programs from the zeta distribution. A model of common sub-programs, inspired by genetics, uses a database of sub-programs. An evolutionary zeta process samples mutations from the zeta distribution. The analysis of stochastic processes inspired by evolution suggests that AI may be feasible in nature, countering no-free-lunch arguments.
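As an illustration of the zeta process described in the abstract, the following is a minimal sketch of independent sampling from a zeta distribution, $P(k) \propto k^{-s}$. It is not taken from the paper: the truncation to a finite support `n_max`, the function names, and the choice `s = 1.1` are assumptions made for the example. The normalizer diverges at $s = 1$, so a parameter "near $1$" is read here as slightly above $1$.

```python
import random
from itertools import accumulate


def truncated_zeta_pmf(s, n_max):
    """Return P(k) for k = 1..n_max with P(k) proportional to k**(-s).

    Truncating the support is an assumption of this sketch; it keeps the
    normalizer finite and cheap to compute for s close to 1.
    """
    weights = [k ** -s for k in range(1, n_max + 1)]
    total = sum(weights)
    return [w / total for w in weights]


def sample_zeta_process(s, n_max, length, seed=0):
    """Draw `length` independent program indices from the truncated zeta pmf."""
    rng = random.Random(seed)
    cumulative = list(accumulate(truncated_zeta_pmf(s, n_max)))
    return rng.choices(range(1, n_max + 1), cum_weights=cumulative, k=length)


if __name__ == "__main__":
    # Hypothetical parameters: s slightly above 1, 1000 candidate programs.
    print(sample_zeta_process(s=1.1, n_max=1000, length=10))
```

With `s` close to 1 the tail is heavy, so low indices dominate but large indices still appear regularly, which is the power-law behavior the abstract appeals to.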

The Foundations of Deep Learning with a Path Towards General Intelligence

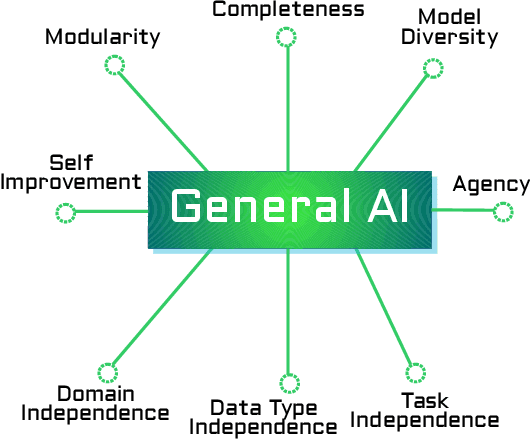

Jun 22, 2018

Abstract: Like any field of empirical science, AI may be approached axiomatically. We formulate requirements for a general-purpose, human-level AI system in terms of postulates. We review the methodology of deep learning, examining the explicit and tacit assumptions in deep learning research. Deep learning methodology seeks to overcome limitations in traditional machine learning research as it combines facets of model richness, generality, and practical applicability. The methodology has so far produced outstanding results due to a productive synergy of function approximation, under plausible assumptions of irreducibility, and the efficiency of the back-propagation family of algorithms. We examine these winning traits of deep learning, and also observe the various known failure modes of deep learning. We conclude by giving recommendations on how to extend deep learning methodology to cover the postulates of general-purpose AI, including modularity and cognitive architecture. We also relate deep learning to advances in theoretical neuroscience research.

Omega: An Architecture for AI Unification

May 16, 2018

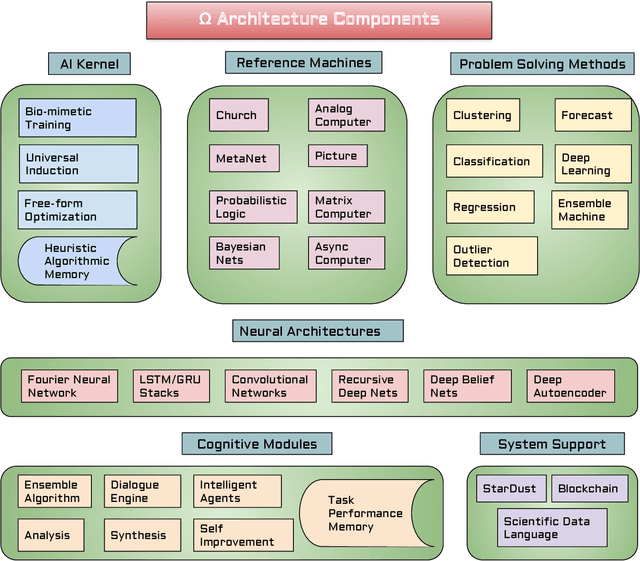

Abstract: We introduce the open-ended, modular, self-improving Omega AI unification architecture which is a refinement of Solomonoff's Alpha architecture, as considered from first principles. The architecture embodies several crucial principles of general intelligence including diversity of representations, diversity of data types, integrated memory, modularity, and higher-order cognition. We retain the basic design of a fundamental algorithmic substrate called an "AI kernel" for problem solving and basic cognitive functions like memory, and a larger, modular architecture that re-uses the kernel in many ways. Omega includes eight representation languages and six classes of neural networks, which are briefly introduced. The architecture is intended to initially address data science automation, hence it includes many problem solving methods for statistical tasks. We review the broad software architecture, higher-order cognition, self-improvement, modular neural architectures, intelligent agents, the process and memory hierarchy, hardware abstraction, peer-to-peer computing, and data abstraction facility.

Ultimate Intelligence Part III: Measures of Intelligence, Perception and Intelligent Agents

Sep 08, 2017

Abstract: We propose that operator induction serves as an adequate model of perception. We explain how to reduce universal agent models to operator induction. We propose a universal measure of operator induction fitness, and show how it can be used in a reinforcement learning model and a homeostasis (self-preserving) agent based on the free energy principle. We show that the action of the homeostasis agent can be explained by the operator induction model.

Gigamachine: incremental machine learning on desktop computers

Sep 08, 2017

Abstract: We present a concrete design for Solomonoff's incremental machine learning system suitable for desktop computers. We use R5RS Scheme and its standard library with a few omissions as the reference machine. We introduce a Levin Search variant based on a stochastic Context Free Grammar together with new update algorithms that use the same grammar as a guiding probability distribution for incremental machine learning. The updates include adjusting production probabilities, re-using previous solutions, learning programming idioms and discovery of frequent subprograms. The issues of extending the a priori probability distribution and bootstrapping are discussed. We have implemented a good portion of the proposed algorithms. Experiments with toy problems show that the update algorithms work as expected.

* This is the original submission for my AGI-2010 paper titled "Stochastic Grammar Based Incremental Machine Learning Using Scheme", which may be found at http://agi-conf.org/2010/wp-content/uploads/2009/06/paper_24.pdf and which presented a partial but general solution to the transfer learning problem in AI. arXiv admin note: substantial text overlap with arXiv:1103.1003
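The idea of a stochastic context-free grammar serving as a guiding probability distribution, with production probabilities adjusted after a solution is found, can be sketched roughly as follows. This is an illustrative toy in Python, not the paper's Scheme implementation: the grammar `GRAMMAR`, the functions `sample` and `reinforce`, the depth cutoff, and the additive update rule are all hypothetical choices made for the example.

```python
import random

# Hypothetical toy grammar over prefix arithmetic expressions. Each nonterminal
# maps to (probability, right-hand side) pairs; rule 0 is always non-recursive.
GRAMMAR = {
    "expr": [
        (0.5, ["num"]),
        (0.25, ["(", "+", "expr", "expr", ")"]),
        (0.25, ["(", "*", "expr", "expr", ")"]),
    ],
    "num": [(0.5, ["1"]), (0.5, ["2"])],
}


def sample(grammar, symbol, rng, depth=0, max_depth=8):
    """Sample a derivation; return (tokens, derivation probability, rules used).

    At the depth cutoff rule 0 is forced so that sampling terminates; this
    truncation is a simplification of the sketch.
    """
    if symbol not in grammar:
        return [symbol], 1.0, []
    rules = grammar[symbol]
    if depth >= max_depth:
        idx = 0
    else:
        idx = rng.choices(range(len(rules)), weights=[p for p, _ in rules])[0]
    prob, rhs = rules[idx]
    tokens, used = [], [(symbol, idx)]
    for child in rhs:
        child_tokens, child_prob, child_used = sample(
            grammar, child, rng, depth + 1, max_depth
        )
        tokens += child_tokens
        prob *= child_prob
        used += child_used
    return tokens, prob, used


def reinforce(grammar, used, rate=0.1):
    """Return a new grammar with the rules in `used` boosted, then renormalized."""
    updated = {}
    for nonterminal, rules in grammar.items():
        weights = [
            p + rate * used.count((nonterminal, i)) for i, (p, _) in enumerate(rules)
        ]
        total = sum(weights)
        updated[nonterminal] = [(w / total, rhs) for w, (_, rhs) in zip(weights, rules)]
    return updated


if __name__ == "__main__":
    rng = random.Random(0)
    tokens, prob, used = sample(GRAMMAR, "expr", rng)
    print(" ".join(tokens), prob)
    # Pretend the sampled program solved a task and reinforce its productions.
    GRAMMAR = reinforce(GRAMMAR, used)
```

In a Levin-style search, candidates with higher a priori probability receive a larger share of the search effort, so shifting probability mass toward productions that appeared in earlier solutions biases later searches toward similar programs.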

Godseed: Benevolent or Malevolent?

Oct 10, 2016

Abstract: It is hypothesized by some thinkers that benign-looking AI objectives may result in powerful AI drives that may pose an existential risk to human society. We analyze this scenario and find the underlying assumptions to be unlikely. We examine the alternative scenario of what happens when universal goals that are not human-centric are used for designing AI agents. We follow a design approach that tries to exclude malevolent motivations from AI agents; however, we see that objectives that seem benevolent may pose significant risk. We consider the following meta-rules: preserve and pervade life and culture, maximize the number of free minds, maximize intelligence, maximize wisdom, maximize energy production, behave like human, seek pleasure, accelerate evolution, survive, maximize control, and maximize capital. We also discuss various solution approaches for benevolent behavior including selfless goals, hybrid designs, Darwinism, universal constraints, semi-autonomy, and generalization of robot laws. A "prime directive" for AI may help in formulating an encompassing constraint for avoiding malicious behavior. We hypothesize that social instincts for autonomous robots, such as attachment learning, may be effective. We mention multiple beneficial scenarios for an advanced semi-autonomous AGI agent in the near future including space exploration, automation of industries, state functions, and cities. We conclude that a beneficial AI agent with intelligence beyond human-level is possible and has many practical use cases.

Ultimate Intelligence Part II: Physical Measure and Complexity of Intelligence

May 11, 2016

Abstract: We continue our analysis of volume and energy measures that are appropriate for quantifying inductive inference systems. We extend logical depth and conceptual jump size measures in AIT to stochastic problems, and physical measures that involve volume and energy. We introduce a graphical model of computational complexity that we believe to be appropriate for intelligent machines. We show several asymptotic relations between energy, logical depth and volume of computation for inductive inference. In particular, we arrive at a "black-hole equation" of inductive inference, which relates energy, volume, space, and algorithmic information for an optimal inductive inference solution. We introduce energy-bounded algorithmic entropy. We briefly apply our ideas to the physical limits of intelligent computation in our universe.

Ultimate Intelligence Part I: Physical Completeness and Objectivity of Induction

Apr 09, 2015

Abstract: We propose that Solomonoff induction is complete in the physical sense via several strong physical arguments. We also argue that Solomonoff induction is fully applicable to quantum mechanics. We show how to choose an objective reference machine for universal induction by defining a physical message complexity and physical message probability, and argue that this choice dissolves some well-known objections to universal induction. We also introduce many more variants of physical message complexity based on energy and action, and discuss the ramifications of our proposals.

What Is It Like to Be a Brain Simulation?

Feb 01, 2014

Abstract: We frame the question of what kind of subjective experience a brain simulation would have in contrast to a biological brain. We discuss the brain prosthesis thought experiment. We evaluate how the experience of the brain simulation might differ from that of the biological brain, according to a number of hypotheses about experience and the properties of simulation. Then, we identify finer questions relating to the original inquiry, and answer them from both a general physicalist and a panexperientialist perspective.

Diverse Consequences of Algorithmic Probability

Nov 07, 2011

Abstract: We reminisce and discuss applications of algorithmic probability to a wide range of problems in artificial intelligence, philosophy and technological society. We propose that Solomonoff has effectively axiomatized the field of artificial intelligence, therefore establishing it as a rigorous scientific discipline. We also relate to our own work in incremental machine learning and philosophy of complexity.
