Dennis Willsch

How to find expressible and trainable parameterized quantum circuits?

Mar 15, 2026

Abstract: Whether parameterized quantum circuits (PQCs) can be systematically constructed to be both trainable and expressive remains an open question. Highly expressive PQCs often exhibit barren plateaus, while several trainable alternatives admit efficient classical simulation. We address this question by deriving a finite-sample, dimension-independent concentration bound for estimating the variance of a PQC cost function, yielding explicit trainability guarantees. Across commonly used ansätze, we observe an anticorrelation between trainability and expressibility, consistent with theoretical insights. Building on this observation, we propose a property-based ansatz-search framework for identifying circuits that combine trainability and expressibility. We demonstrate its practical viability on a real quantum computer and apply it to variational quantum algorithms. We identify quantum neural network ansätze with improved effective dimension using over $6 \times$ fewer parameters, and for VQE on $\mathrm{H}_2$ we achieve UCCSD-like accuracy at substantially reduced circuit complexity.
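
To make the quantity in this abstract concrete, the following is a minimal sketch of estimating the variance of a PQC cost function from a finite number of random parameter draws. It is not the paper's estimator or bound. The toy circuit (one RY rotation per qubit acting on |0...0>, with cost ⟨Z⊗...⊗Z⟩), the uniform parameter distribution, and the names `toy_cost` and `estimate_cost_variance` are all assumptions chosen for illustration. For this toy cost the exact variance is 2^(-n), which gives something to compare the sample estimate against.

```python
import math
import random
import statistics


def toy_cost(thetas):
    """Toy cost: <Z x ... x Z> after RY(theta_i) on each qubit of |0...0>.

    For a single qubit, <Z> = cos(theta), so the product state gives
    the product of cosines. This circuit is an illustrative assumption.
    """
    return math.prod(math.cos(t) for t in thetas)


def estimate_cost_variance(n_qubits, n_samples, seed=0):
    """Sample variance of the toy cost over uniformly random parameters."""
    rng = random.Random(seed)
    costs = [
        toy_cost([rng.uniform(0.0, 2.0 * math.pi) for _ in range(n_qubits)])
        for _ in range(n_samples)
    ]
    return statistics.variance(costs)


if __name__ == "__main__":
    # Compare the finite-sample estimate with the exact value 2^(-n).
    for n in (1, 2, 4, 8):
        print(n, estimate_cost_variance(n, 20000), 0.5 ** n)
```

In this toy setting the variance shrinks exponentially with the number of qubits, which is the kind of behaviour associated with barren plateaus for global cost functions.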

Support vector machines on the D-Wave quantum annealer

Jun 14, 2019

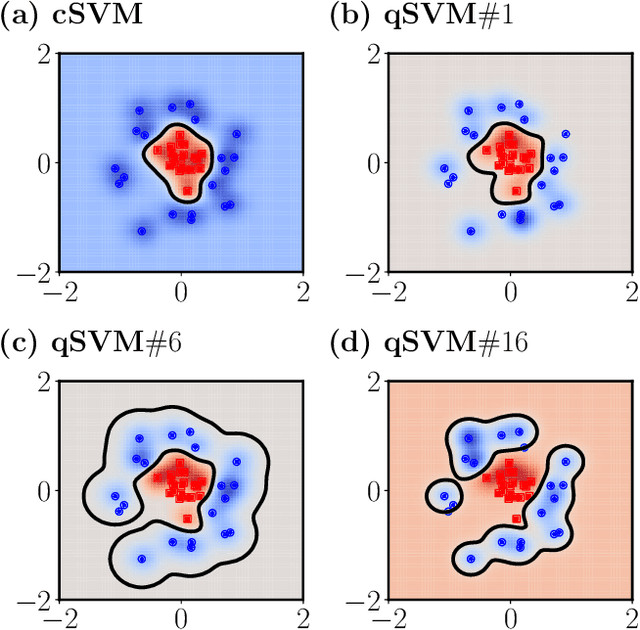

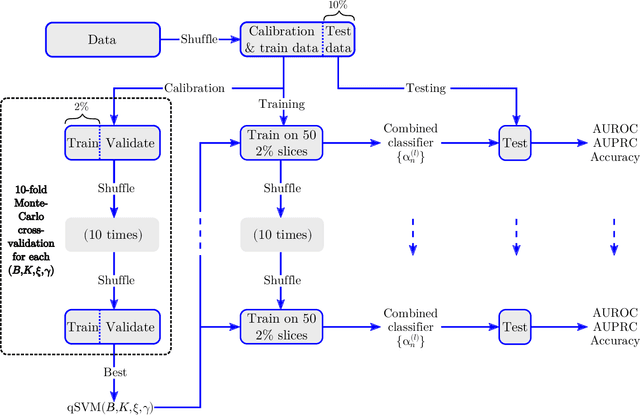

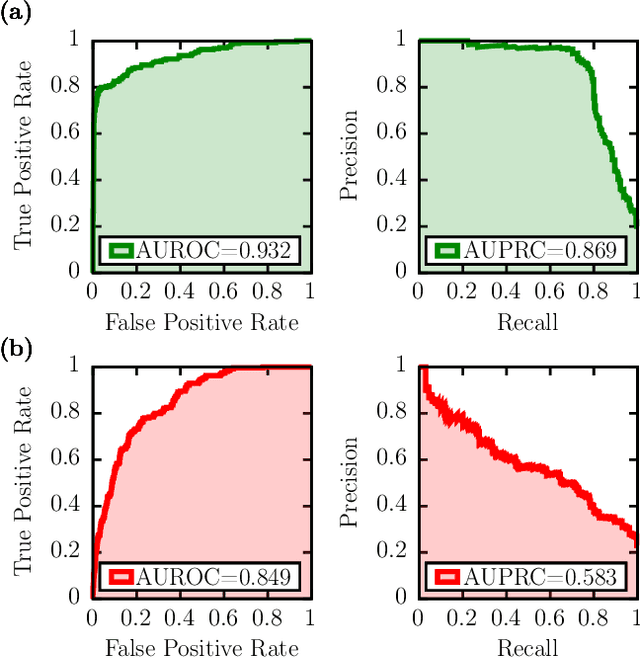

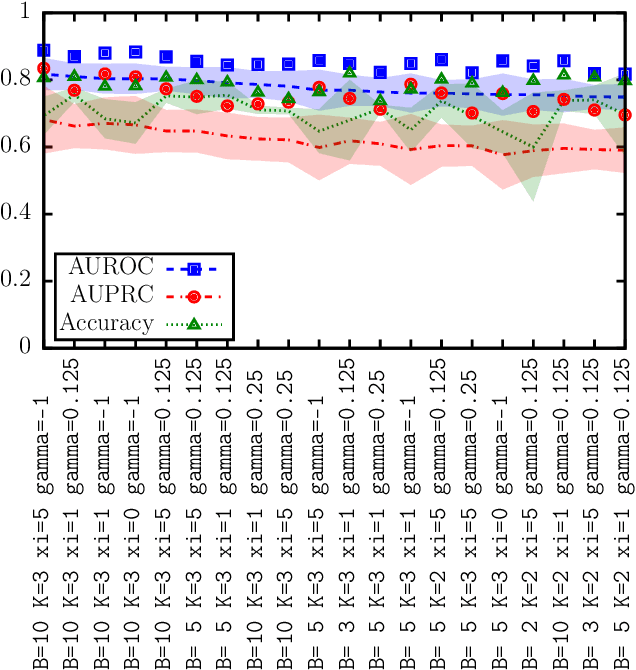

Abstract: Kernel-based support vector machines (SVMs) are supervised machine learning algorithms for classification and regression problems. We present a method to train SVMs on a D-Wave 2000Q quantum annealer and study its performance in comparison to SVMs trained on conventional computers. The method is applied to both synthetic data and real data obtained from biology experiments. We find that the quantum annealer produces an ensemble of different solutions that often generalizes better to unseen data than the single global minimum of an SVM trained on a conventional computer, especially in cases where only limited training data is available. For cases with more training data than currently fits on the quantum annealer, we show that a combination of classifiers for subsets of the data almost always produces stronger joint classifiers than the conventional SVM for the same parameters.
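
As a rough illustration of how SVM training can be cast as a problem over binary variables, which is the form a quantum annealer accepts, here is a generic sketch. It is not taken from the paper. The binary encoding of each coefficient, the penalty weight `xi`, the RBF kernel, the toy data, and all function names are assumptions, and an exhaustive search stands in for the annealer. The bias term is omitted because the toy data is symmetric about zero.

```python
import itertools
import math


def rbf_kernel(x, y, gamma=1.0):
    """Gaussian (RBF) kernel on scalars."""
    return math.exp(-gamma * (x - y) ** 2)


def decode(bits, n_points, n_bits, base=2):
    """Map a flat bit string to one non-negative coefficient per data point."""
    return [
        sum(base ** k * bits[n * n_bits + k] for k in range(n_bits))
        for n in range(n_points)
    ]


def energy(alphas, xs, ts, xi=1.0):
    """Dual SVM objective plus a squared penalty for sum(alpha_n * t_n) = 0."""
    idx = range(len(xs))
    quad = 0.5 * sum(
        alphas[n] * alphas[m] * ts[n] * ts[m] * rbf_kernel(xs[n], xs[m])
        for n in idx for m in idx
    )
    penalty = xi * sum(a * t for a, t in zip(alphas, ts)) ** 2
    return quad - sum(alphas) + penalty


def train_brute_force(xs, ts, n_bits=2):
    """Enumerate every bit string; an annealer would sample low energies instead."""
    best = min(
        itertools.product((0, 1), repeat=len(xs) * n_bits),
        key=lambda bits: energy(decode(bits, len(xs), n_bits), xs, ts),
    )
    return decode(best, len(xs), n_bits)


def predict(x, alphas, xs, ts):
    """Sign of the kernel expansion (bias omitted in this sketch)."""
    score = sum(a * t * rbf_kernel(xn, x) for a, t, xn in zip(alphas, ts, xs))
    return 1 if score >= 0 else -1


if __name__ == "__main__":
    xs, ts = [-2.0, -1.0, 1.0, 2.0], [-1, -1, 1, 1]
    alphas = train_brute_force(xs, ts)
    print(alphas, [predict(x, alphas, xs, ts) for x in (-1.5, 1.5)])
```

Exhaustive search only works for a handful of points. Keeping several low-energy bit strings rather than only the minimum would be one way to mimic the ensemble of solutions the abstract describes.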
