Dennis Singh Moirangthem

EzSQL: An SQL intermediate representation for improving SQL-to-text Generation

Nov 28, 2024

Abstract:The SQL-to-text generation task traditionally uses template base, Seq2Seq, tree-to-sequence, and graph-to-sequence models. Recent models take advantage of pre-trained generative language models for this task in the Seq2Seq framework. However, treating SQL as a sequence of inputs to the pre-trained models is not optimal. In this work, we put forward a new SQL intermediate representation called EzSQL to align SQL with the natural language text sequence. EzSQL simplifies the SQL queries and brings them closer to natural language text by modifying operators and keywords, which can usually be described in natural language. EzSQL also removes the need for set operators. Our proposed SQL-to-text generation model uses EzSQL as the input to a pre-trained generative language model for generating the text descriptions. We demonstrate that our model is an effective state-of-the-art method to generate text narrations from SQL queries on the WikiSQL and Spider datasets. We also show that by generating pretraining data using our SQL-to-text generation model, we can enhance the performance of Text-to-SQL parsers.

Human Action Generation with Generative Adversarial Networks

May 26, 2018

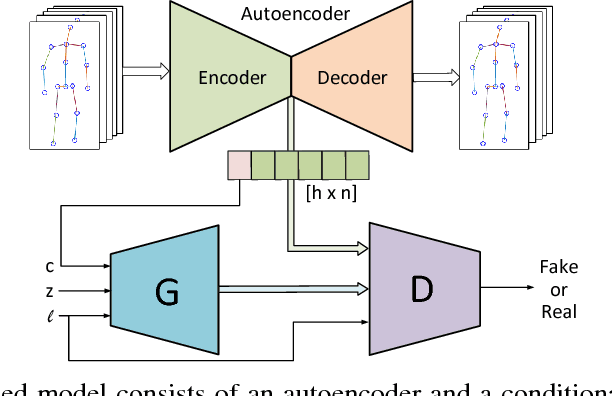

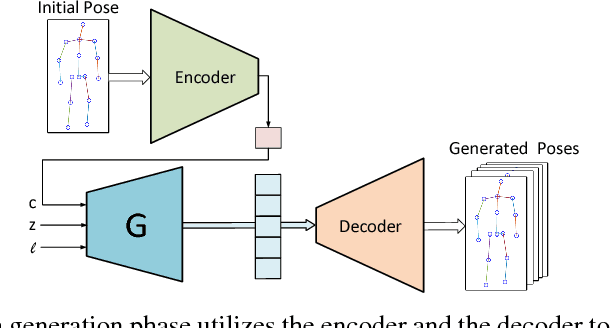

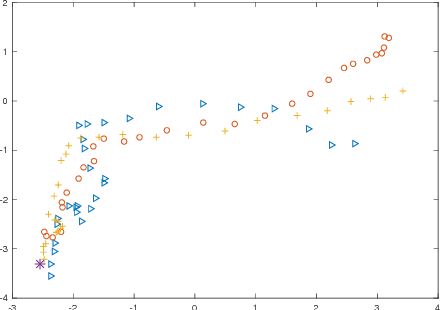

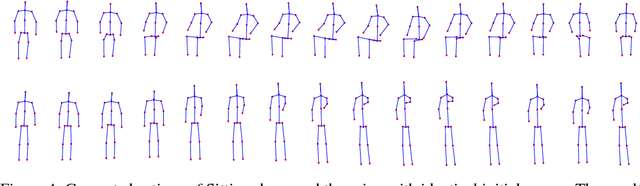

Abstract:Inspired by the recent advances in generative models, we introduce a human action generation model in order to generate a consecutive sequence of human motions to formulate novel actions. We propose a framework of an autoencoder and a generative adversarial network (GAN) to produce multiple and consecutive human actions conditioned on the initial state and the given class label. The proposed model is trained in an end-to-end fashion, where the autoencoder is jointly trained with the GAN. The model is trained on the NTU RGB+D dataset and we show that the proposed model can generate different styles of actions. Moreover, the model can successfully generate a sequence of novel actions given different action labels as conditions. The conventional human action prediction and generation models lack those features, which are essential for practical applications.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge