Dejan Stepec

Vessel and Port Efficiency Metrics through Validated AIS data

Apr 30, 2021

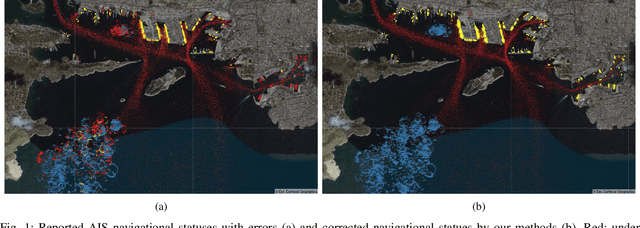

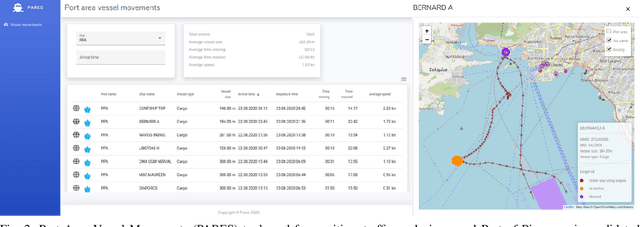

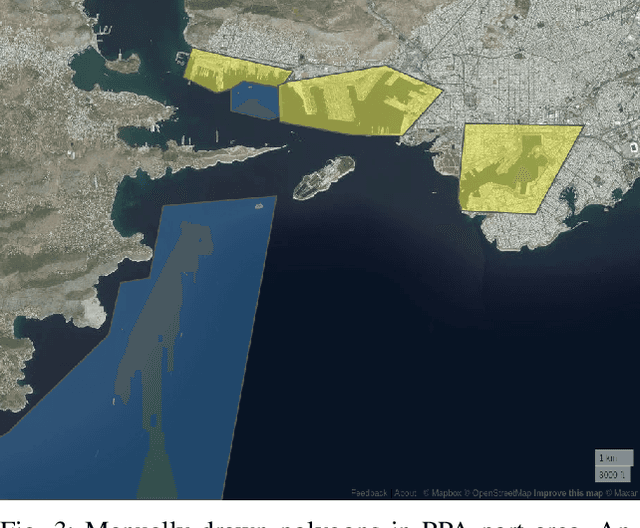

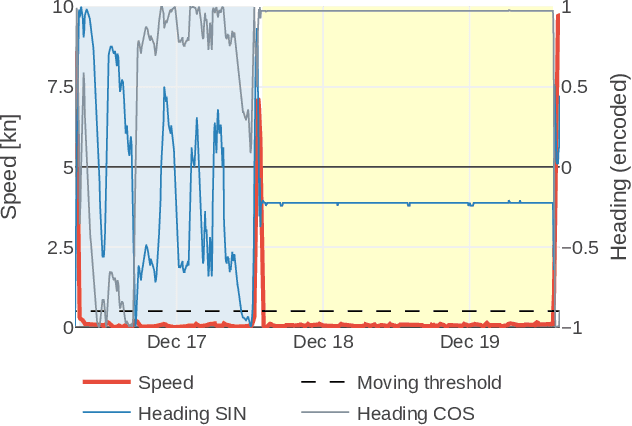

Abstract: Automatic Identification System (AIS) data represents a rich source of information about maritime traffic and offers great potential for data analytics and predictive modeling solutions, which can help optimize logistics chains and reduce environmental impacts. In this work, we address the main limitations in the validity of AIS navigational data fields by proposing a machine learning-based, data-driven methodology to detect and (to the extent possible) correct erroneous data. Additionally, we propose a metric that vessel operators and ports can use to express their business and environmental efficiency numerically across time and spatial dimensions, enabled by the validated AIS data. We also present the Port Area Vessel Movements (PARES) tool, which demonstrates the proposed solutions.

Machine Learning based System for Vessel Turnaround Time Prediction

Apr 28, 2021

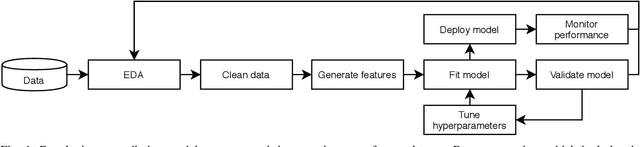

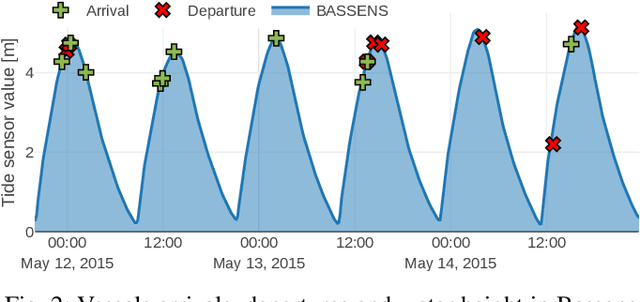

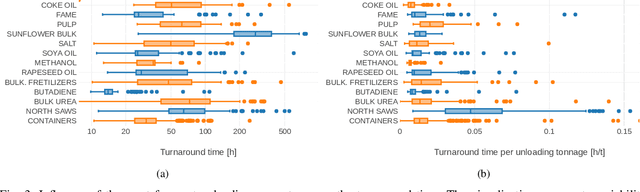

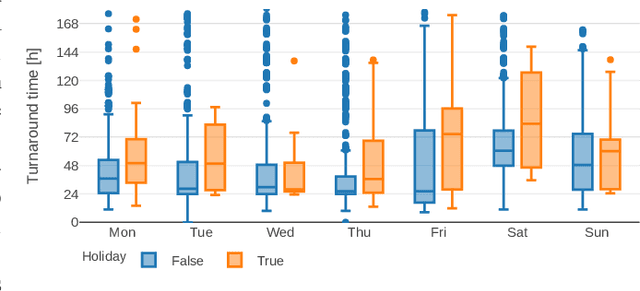

Abstract: In this paper, we present a novel system for predicting vessel turnaround time, based on machine learning and standardized port call data. We also investigate the use of specific external maritime big data to enhance the accuracy of the available data and improve the performance of the developed system. An extensive evaluation is performed at the Port of Bordeaux, where we report results on 11 years of historical port call data and provide verification on live, operational data from the port. The proposed automated, data-driven turnaround time prediction system achieves higher accuracy than the current manual expert-based system at the Port of Bordeaux.

Automated System for Ship Detection from Medium Resolution Satellite Optical Imagery

Apr 28, 2021

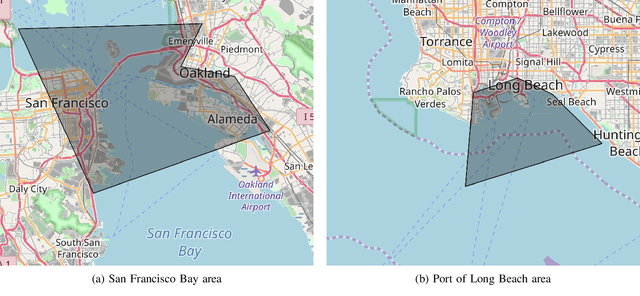

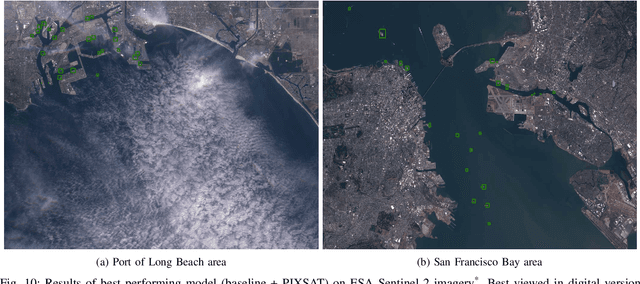

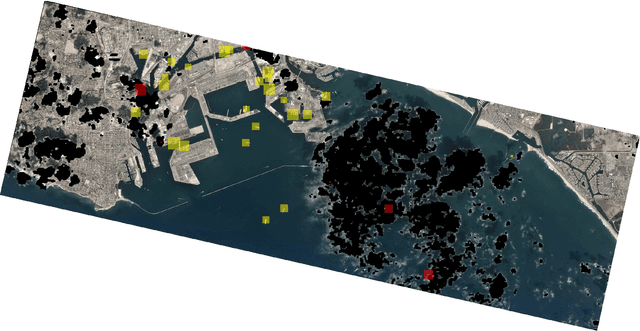

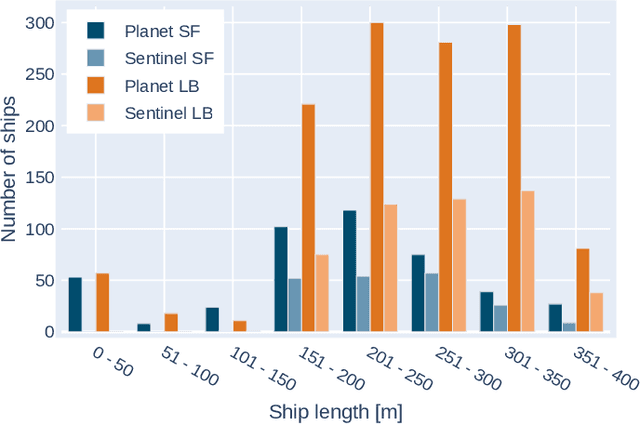

Abstract: In this paper, we present a ship detection pipeline for low-cost medium resolution satellite optical imagery obtained from the ESA Sentinel-2 and Planet Labs Dove constellations. This optical satellite imagery is readily available for any place on Earth and is underutilized in the maritime domain, compared to existing solutions based on synthetic-aperture radar (SAR) imagery. We developed a ship detection method based on a state-of-the-art deep-learning object detection method, which was developed and evaluated on a large-scale dataset that was collected and automatically annotated with the help of Automatic Identification System (AIS) data.

Image Synthesis as a Pretext for Unsupervised Histopathological Diagnosis

Apr 28, 2021

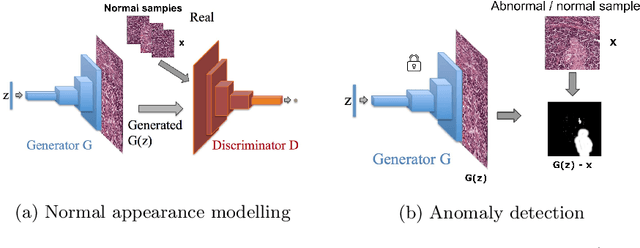

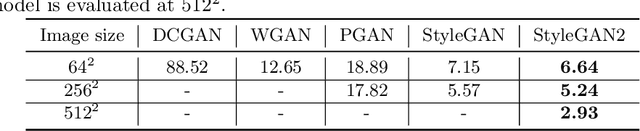

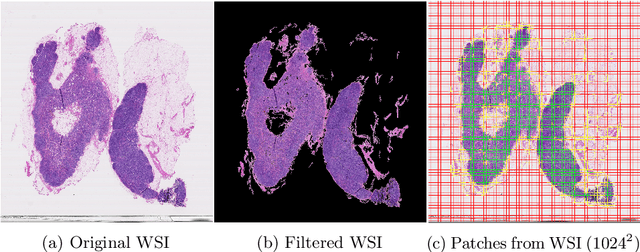

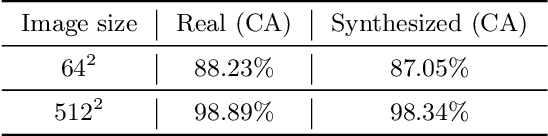

Abstract: Anomaly detection in visual data refers to the problem of differentiating abnormal appearances from normal cases. Supervised approaches have been successfully applied to different domains, but require an abundance of labeled data. Due to the nature of how anomalies occur and their underlying generating processes, it is hard to characterize and label them. Recent advances in deep generative models have sparked interest in applying such methods to unsupervised anomaly detection and have shown promising results in medical and industrial inspection domains. In this work, we evaluate a crucial part of the unsupervised visual anomaly detection pipeline that is needed for normal appearance modeling, as well as the ability to reconstruct the closest-looking normal and tumor samples. We adapt and evaluate different high-resolution state-of-the-art generative models from the face synthesis domain and demonstrate their superiority over currently used approaches in the challenging domain of digital pathology. A multifold improvement in image synthesis is demonstrated in terms of the quality and resolution of the generated images, validated also against the supervised model.

Unsupervised Detection of Cancerous Regions in Histology Imagery using Image-to-Image Translation

Apr 28, 2021

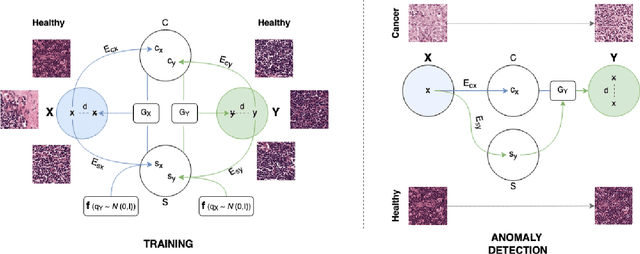

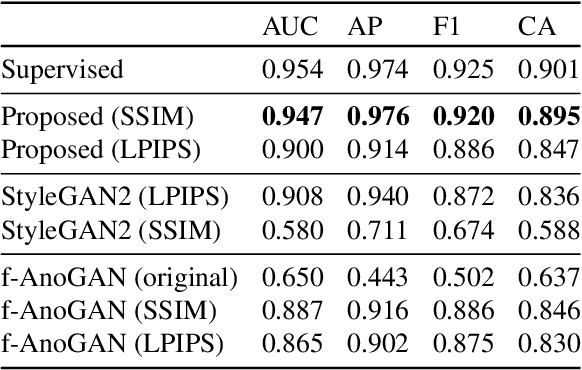

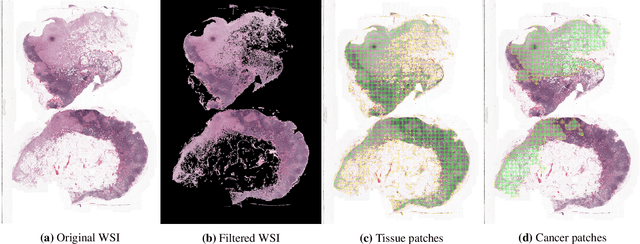

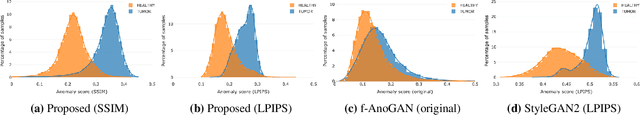

Abstract: Detection of visual anomalies refers to the problem of finding patterns in different imaging data that do not conform to the expected visual appearance and is a widely studied problem in different domains. Due to the nature of anomaly occurrences and underlying generating processes, it is hard to characterize them and obtain labeled data. Obtaining labeled data is especially difficult in biomedical applications, where only trained domain experts can provide labels, which often come in large diversity and complexity. Recently presented approaches for unsupervised detection of visual anomalies omit the need for labeled data and demonstrate promising results in domains where anomalous samples significantly deviate from the normal appearance. Despite promising results, the performance of such approaches still lags behind supervised approaches and does not provide a one-size-fits-all solution. In this work, we present an image-to-image translation-based framework that significantly surpasses the performance of existing unsupervised methods and approaches the performance of supervised methods in the challenging domain of cancerous region detection in histology imagery.
