David Sigtermans

Is Information Theory Inherently a Theory of Causation?

Oct 19, 2020

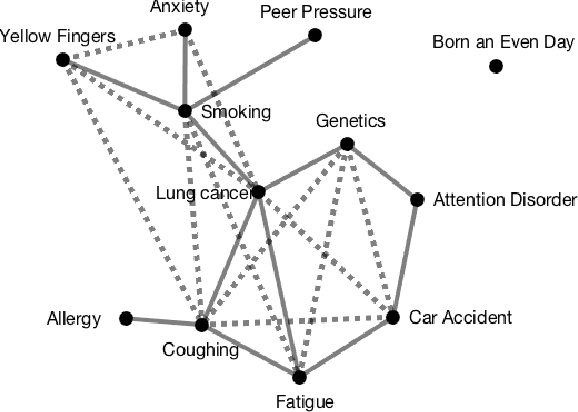

Abstract: Information theory gives rise to a novel method for causal skeleton discovery by expressing associations between variables as tensors. This tensor-based approach reduces the dimensionality of the data needed to test for conditional independence. For systems comprising three variables, this means that the causal skeleton can be determined using the tensors of the pairwise associations.

Transfer Entropy: where Shannon meets Turing

May 25, 2019

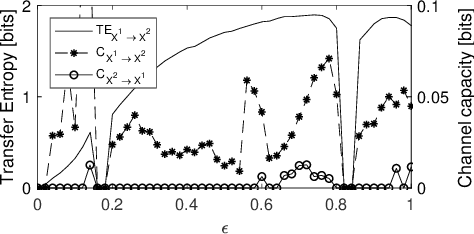

Abstract: Transfer entropy is capable of capturing nonlinear source-destination relations between multivariate time series. It is a measure of association between source data that are transformed into destination data via a set of linear transformations between their probability mass functions. The resulting tensor formalism is used to show that in specific cases, e.g., when the system consists of three stochastic processes, bivariate analysis suffices to distinguish true relations from false relations. This allows us to determine the causal structure as far as it is encoded in the probability mass functions of noisy data. The tensor formalism is also used to derive the Data Processing Inequality for transfer entropy.
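For orientation, here is a minimal Python sketch of the standard plug-in estimate of transfer entropy between two discrete time series. It is not the tensor formalism described in the abstract. The function name `transfer_entropy`, the history length of 1, and the use of raw relative frequencies as probabilities are all assumptions made for illustration.

```python
from collections import Counter
from math import log2


def transfer_entropy(source, dest):
    """Plug-in estimate of TE(source -> dest) in bits, history length 1.

    Hypothetical helper for illustration: probabilities are plain relative
    frequencies, with no bias correction or significance testing.
    """
    if len(source) != len(dest):
        raise ValueError("source and dest must have the same length")

    # Each sample is (y_next, y_prev, x_prev).
    triples = list(zip(dest[1:], dest[:-1], source[:-1]))
    n = len(triples)
    if n == 0:
        return 0.0

    c_full = Counter(triples)                               # (y_next, y_prev, x_prev)
    c_cond = Counter((yp, xp) for _, yp, xp in triples)     # (y_prev, x_prev)
    c_pair = Counter((yn, yp) for yn, yp, _ in triples)     # (y_next, y_prev)
    c_prev = Counter(yp for _, yp, _ in triples)            # y_prev

    te = 0.0
    for (yn, yp, xp), count in c_full.items():
        p_joint = count / n                                 # p(y_next, y_prev, x_prev)
        p_with_source = count / c_cond[(yp, xp)]            # p(y_next | y_prev, x_prev)
        p_without_source = c_pair[(yn, yp)] / c_prev[yp]    # p(y_next | y_prev)
        te += p_joint * log2(p_with_source / p_without_source)
    return te


# Example: dest is a one-step delayed copy of source, so the source's past
# fully determines the next destination value.
source = [0, 1, 1, 0, 1, 0, 0, 1, 1, 1, 0, 0, 1, 0, 1, 1, 0, 0, 0, 1]
dest = [0] + source[:-1]
te_forward = transfer_entropy(source, dest)
```

With a constant source the two conditional probabilities coincide in every term, so the estimate is zero. For short or noisy series the plug-in estimate is biased upward, so small positive values should not be read as evidence of a relation.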
