Daniel Sáez Trigueros

Face Recognition: From Traditional to Deep Learning Methods

Oct 31, 2018

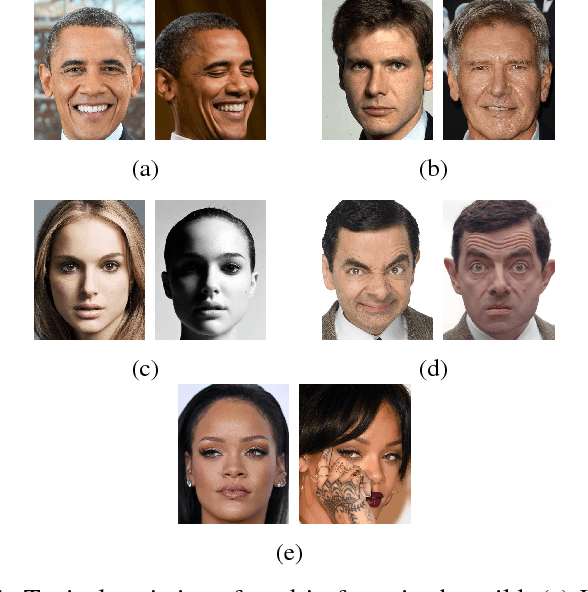

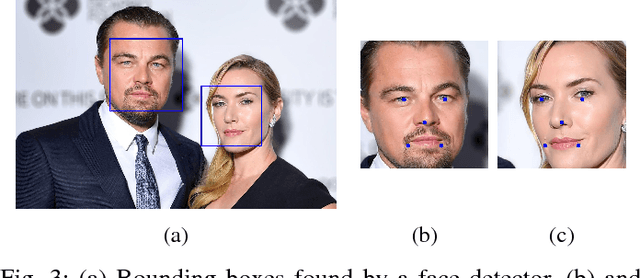

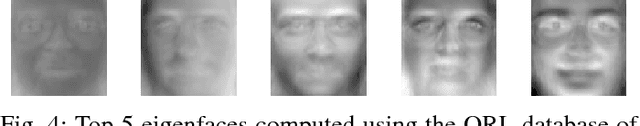

Abstract:Starting in the seventies, face recognition has become one of the most researched topics in computer vision and biometrics. Traditional methods based on hand-crafted features and traditional machine learning techniques have recently been superseded by deep neural networks trained with very large datasets. In this paper we provide a comprehensive and up-to-date literature review of popular face recognition methods including both traditional (geometry-based, holistic, feature-based and hybrid methods) and deep learning methods.

Generating Photo-Realistic Training Data to Improve Face Recognition Accuracy

Oct 31, 2018

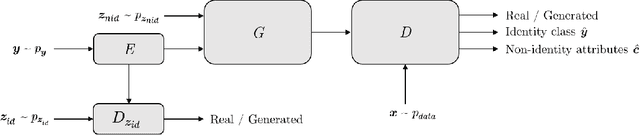

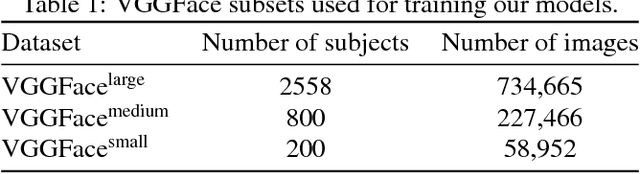

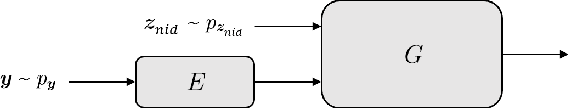

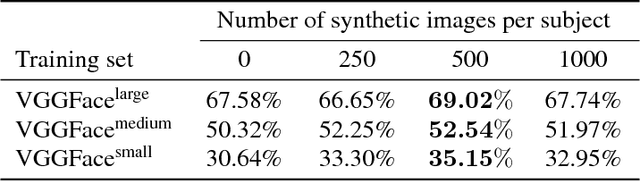

Abstract:In this paper we investigate the feasibility of using synthetic data to augment face datasets. In particular, we propose a novel generative adversarial network (GAN) that can disentangle identity-related attributes from non-identity-related attributes. This is done by training an embedding network that maps discrete identity labels to an identity latent space that follows a simple prior distribution, and training a GAN conditioned on samples from that distribution. Our proposed GAN allows us to augment face datasets by generating both synthetic images of subjects in the training set and synthetic images of new subjects not in the training set. By using recent advances in GAN training, we show that the synthetic images generated by our model are photo-realistic, and that training with augmented datasets can indeed increase the accuracy of face recognition models as compared with models trained with real images alone.

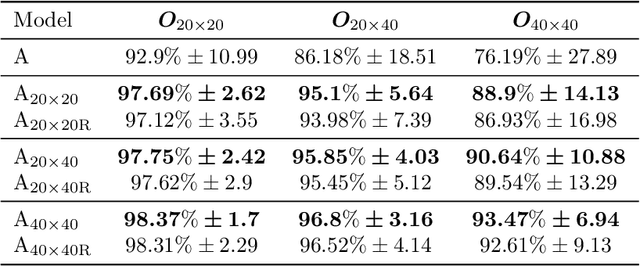

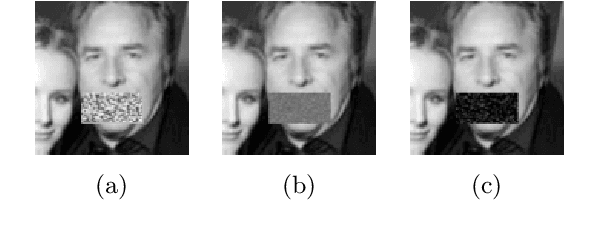

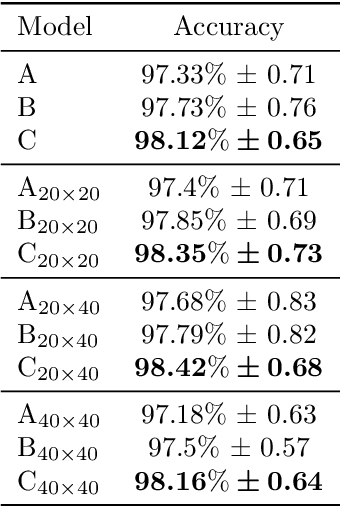

Enhancing Convolutional Neural Networks for Face Recognition with Occlusion Maps and Batch Triplet Loss

Jun 10, 2018

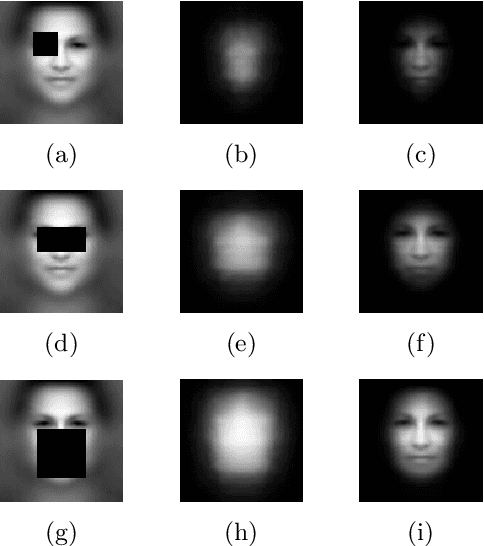

Abstract:Despite the recent success of convolutional neural networks for computer vision applications, unconstrained face recognition remains a challenge. In this work, we make two contributions to the field. Firstly, we consider the problem of face recognition with partial occlusions and show how current approaches might suffer significant performance degradation when dealing with this kind of face images. We propose a simple method to find out which parts of the human face are more important to achieve a high recognition rate, and use that information during training to force a convolutional neural network to learn discriminative features from all the face regions more equally, including those that typical approaches tend to pay less attention to. We test the accuracy of the proposed method when dealing with real-life occlusions using the AR face database. Secondly, we propose a novel loss function called batch triplet loss that improves the performance of the triplet loss by adding an extra term to the loss function to cause minimisation of the standard deviation of both positive and negative scores. We show consistent improvement in the Labeled Faces in the Wild (LFW) benchmark by applying both proposed adjustments to the convolutional neural network training.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge