Daniel J. Bauer

Machine Learning-Based Estimation and Goodness-of-Fit for Large-Scale Confirmatory Item Factor Analysis

Sep 20, 2021

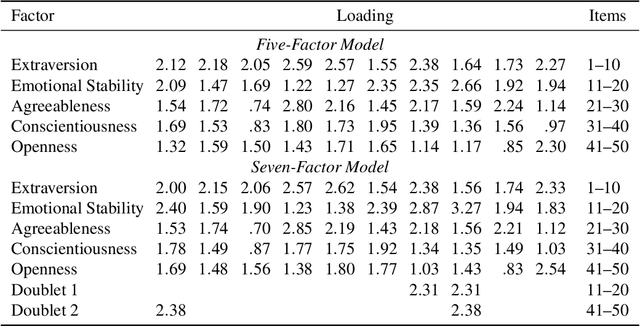

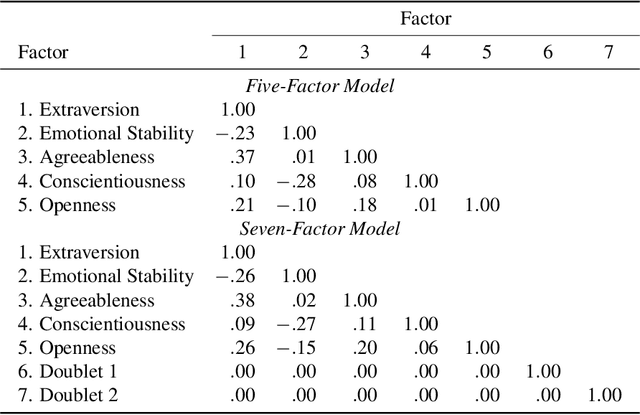

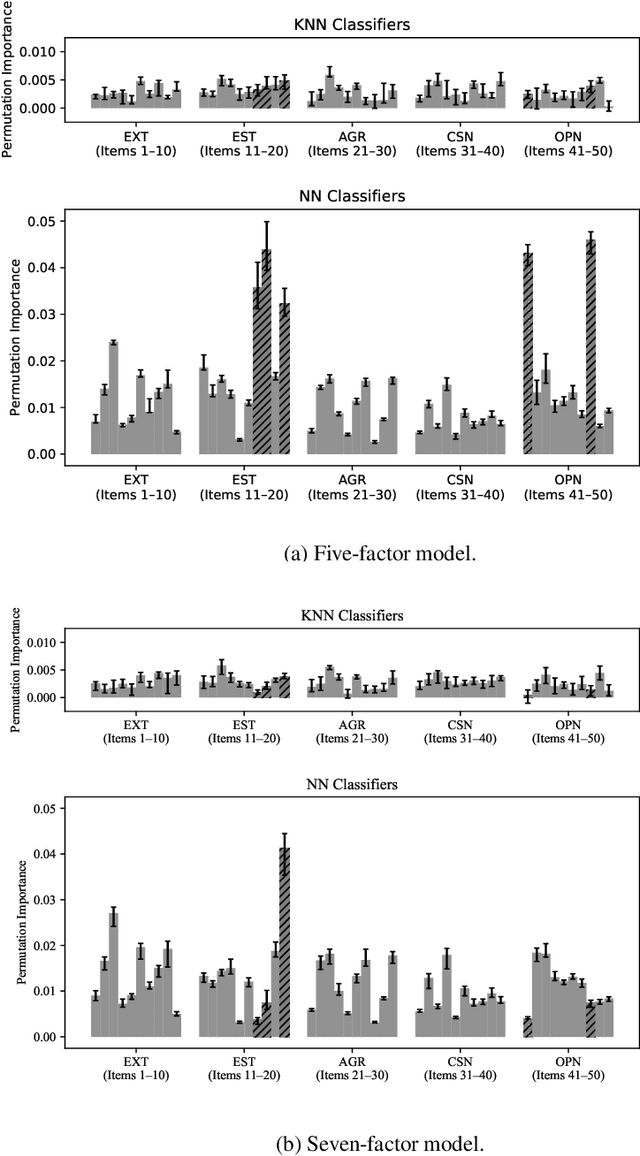

Abstract: We investigate novel parameter estimation and goodness-of-fit (GOF) assessment methods for large-scale confirmatory item factor analysis (IFA) with many respondents, items, and latent factors. For parameter estimation, we extend Urban and Bauer's (2021) deep learning algorithm for exploratory IFA to the confirmatory setting by showing how to handle user-defined constraints on loadings and factor correlations. For GOF assessment, we explore new simulation-based tests and indices. In particular, we consider extensions of the classifier two-sample test (C2ST), a method that tests whether a machine learning classifier can distinguish between observed data and synthetic data sampled from a fitted IFA model. The C2ST provides a flexible framework that integrates overall model fit, piece-wise fit, and person fit. Proposed extensions include a C2ST-based test of approximate fit in which the user specifies what percentage of observed data can be distinguished from synthetic data as well as a C2ST-based relative fit index that is similar in spirit to the relative fit indices used in structural equation modeling. Via simulation studies, we first show that the confirmatory extension of Urban and Bauer's (2021) algorithm produces more accurate parameter estimates as the sample size increases and obtains comparable estimates to a state-of-the-art confirmatory IFA estimation procedure in less time. We next show that the C2ST-based test of approximate fit controls the empirical type I error rate and detects when the number of latent factors is misspecified. Finally, we empirically investigate how the sampling distribution of the C2ST-based relative fit index depends on the sample size.
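The C2ST idea described in the abstract can be illustrated with a minimal sketch. This is not the authors' implementation: the k-nearest-neighbor classifier, the Hamming distance, the half/half train-test split, the normal approximation, and the names `c2st`, `knn_predict`, and `delta` are all assumptions made for illustration. The sketch labels observed rows 1 and synthetic rows 0, trains a classifier on half of the pooled data, and tests whether held-out accuracy exceeds `0.5 + delta`, where `delta = 0` corresponds to a test of exact fit and `delta > 0` to one possible reading of an approximate-fit test.

```python
import math
import random


def hamming(a, b):
    """Number of item responses on which two response patterns differ."""
    return sum(x != y for x, y in zip(a, b))


def knn_predict(train_x, train_y, row, k=5):
    """Majority vote among the k training rows closest to `row`."""
    nearest = sorted(range(len(train_x)),
                     key=lambda i: hamming(train_x[i], row))[:k]
    votes = sum(train_y[i] for i in nearest)
    return 1 if 2 * votes > k else 0


def c2st(observed, synthetic, delta=0.0, k=5, seed=0):
    """Classifier two-sample test (illustrative sketch, assumptions above).

    Returns held-out accuracy and a one-sided p-value for
    H0: accuracy <= 0.5 + delta. Requires 0 <= delta < 0.5.
    """
    rng = random.Random(seed)
    # Label observed rows 1 and synthetic rows 0, then pool and shuffle.
    data = [(r, 1) for r in observed] + [(r, 0) for r in synthetic]
    rng.shuffle(data)
    half = len(data) // 2
    train, test = data[:half], data[half:]
    train_x = [r for r, _ in train]
    train_y = [y for _, y in train]
    correct = sum(knn_predict(train_x, train_y, r, k) == y for r, y in test)
    acc = correct / len(test)
    # Normal approximation to the binomial under the null accuracy p0.
    p0 = 0.5 + delta
    z = (acc - p0) / math.sqrt(p0 * (1 - p0) / len(test))
    p_value = 0.5 * math.erfc(z / math.sqrt(2))  # upper-tail probability
    return acc, p_value
```

In use, `observed` would hold the real response patterns and `synthetic` would hold patterns sampled from the fitted IFA model. A small p-value suggests the classifier separates the two better than the tolerated level, which is evidence of misfit.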

A Deep Learning Algorithm for High-Dimensional Exploratory Item Factor Analysis

Jan 22, 2020

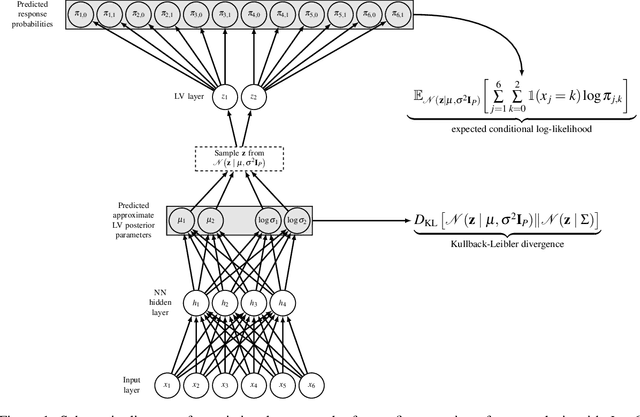

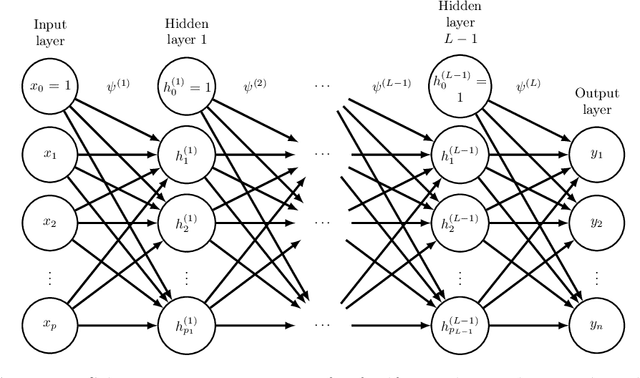

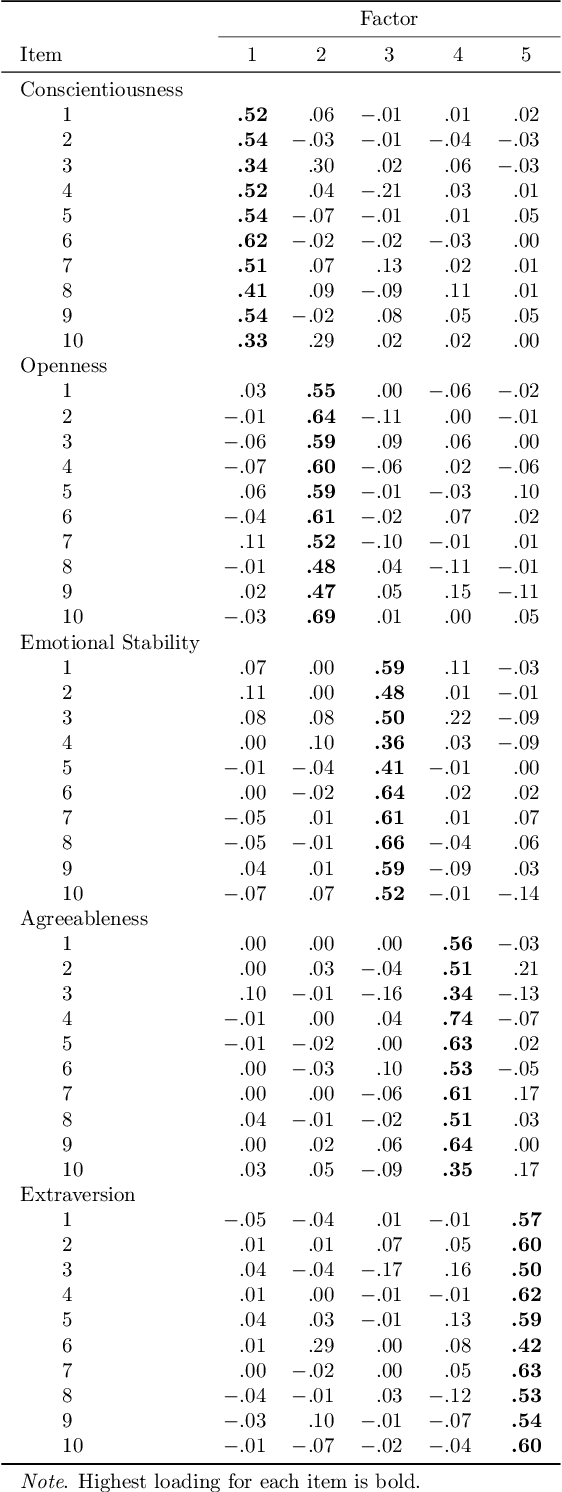

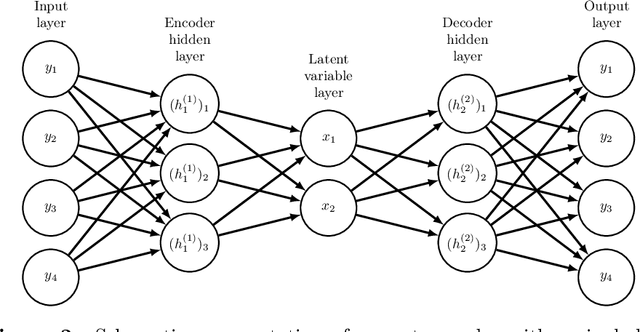

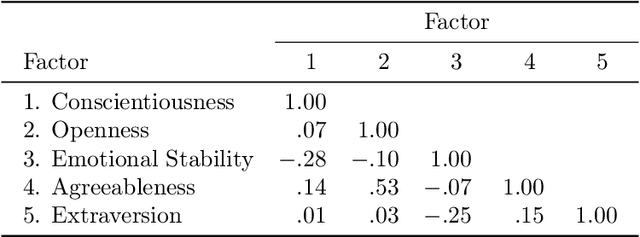

Abstract: Deep learning methods are the gold standard for non-linear statistical modeling in computer vision and in natural language processing but are rarely used in psychometrics. To bridge this gap, we present a novel deep learning algorithm for exploratory item factor analysis (IFA). Our approach combines a deep artificial neural network (ANN) model called a variational autoencoder (VAE) with recent work that uses regularization for exploratory factor analysis. We first provide overviews of ANNs and VAEs. We then describe how to conduct exploratory IFA with a VAE and demonstrate our approach in two empirical examples and in two simulated examples. Our empirical results were consistent with existing psychological theory across random starting values. Our simulations suggest that the VAE consistently recovers the data generating factor pattern with moderate-sized samples. Secondary loadings were underestimated with a complex factor structure and intercept parameter estimates were moderately biased with both simple and complex factor structures. All models converged in minutes, even with hundreds of thousands of observations, hundreds of items, and tens of factors. We conclude that the VAE offers a powerful new approach to fitting complex statistical models in psychological and educational measurement.
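For orientation, the standard objective that a VAE maximizes is the evidence lower bound (ELBO), shown below in generic notation. The symbols are assumptions rather than the paper's own: $x$ is a response pattern, $z$ the latent factors, $q_\phi(z \mid x)$ the encoder network, $p_\theta(x \mid z)$ the decoder (which in an IFA setting would presumably play the role of the item response model), and $p(z)$ the prior over factors. The regularization for exploratory factor analysis mentioned in the abstract is not shown.

```latex
\log p_\theta(x) \;\ge\;
\mathbb{E}_{q_\phi(z \mid x)}\!\left[ \log p_\theta(x \mid z) \right]
\;-\; \mathrm{KL}\!\left( q_\phi(z \mid x) \,\|\, p(z) \right)
```

The first term rewards reconstructing the observed responses from the inferred factors, and the second keeps the approximate posterior close to the prior.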
