Dai-Qiang Chen

Image denoising based on improved data-driven sparse representation

Mar 01, 2016

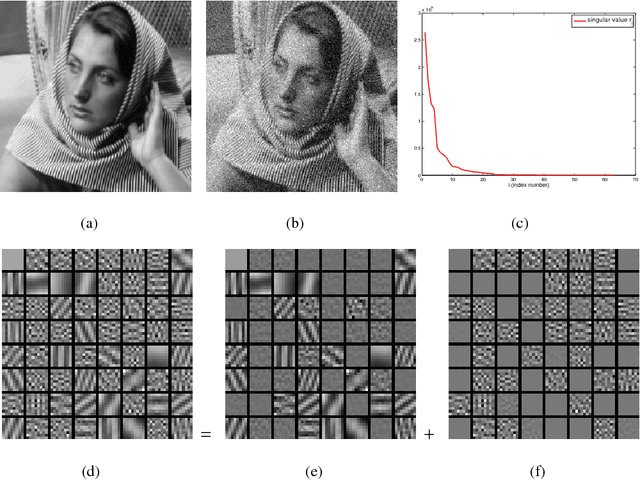

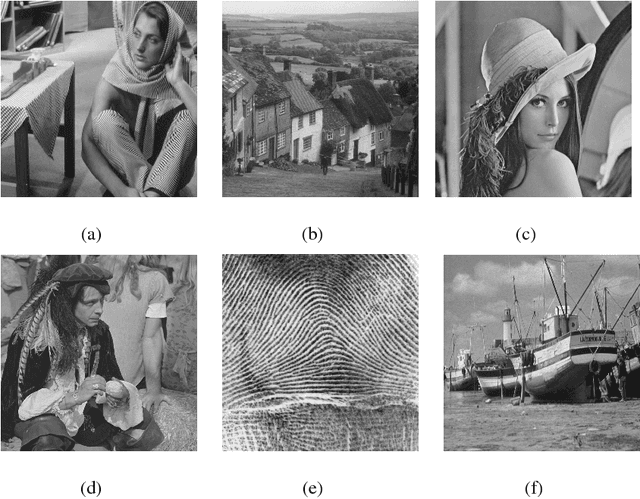

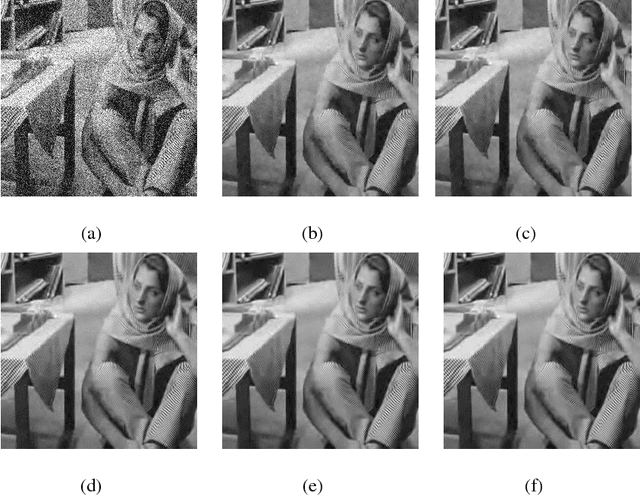

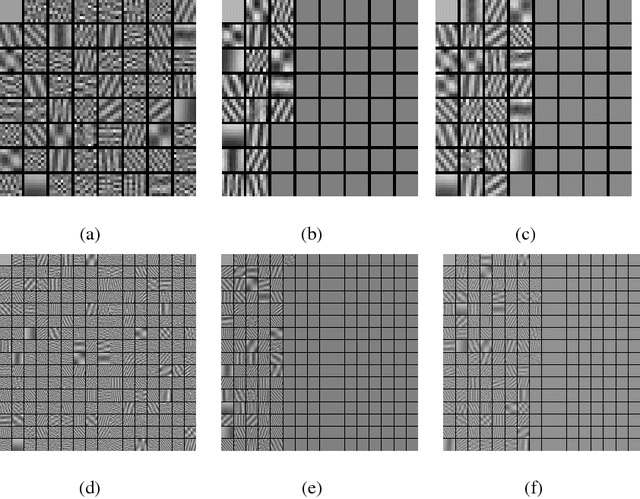

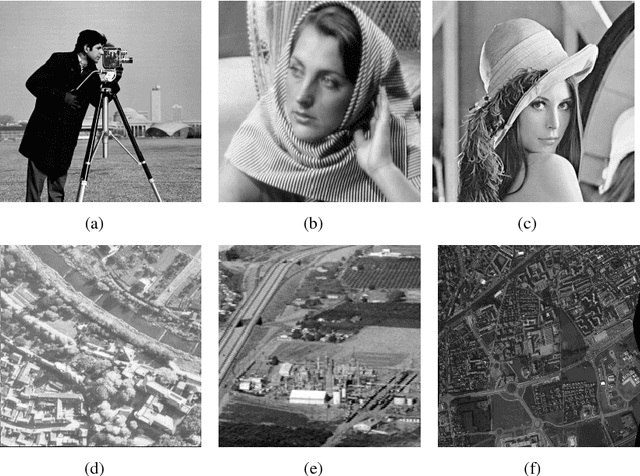

Abstract: Sparse representation of images in a transform domain plays a fundamental role in image restoration tasks. One representative approach is the widely used wavelet tight frame system. Instead of adopting fixed filters to construct a tight frame that sparsely models any input image, a data-driven tight frame was recently proposed for the sparse representation of images and shown to be very efficient for image denoising. However, in this method the number of framelet filters used to construct a tight frame equals the length of the filters. Further investigation shows that some of these filters are unnecessary and even harmful to the recovery quality because of the influence of noise. Therefore, an improved data-driven sparse representation system constructed with far fewer filters is proposed. Numerical results from denoising experiments demonstrate that the proposed algorithm overall outperforms the original data-driven tight frame construction scheme in both recovery quality and computational time.
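
The relationship between filter count and filter length described above can be illustrated with a small sketch. It assumes, for illustration only, that the filters are the columns of an orthogonal matrix acting on vectorized image patches; the random matrix `D`, the patch size `r`, and the subset size `k` are hypothetical stand-ins and do not reproduce the paper's learned filters or its filter selection rule.

```python
import numpy as np

rng = np.random.default_rng(0)
r = 4          # hypothetical filter width; each filter has r * r entries
n = r * r      # in the full system, the number of filters equals n

# Random orthogonal matrix standing in for learned filters (assumption).
D, _ = np.linalg.qr(rng.standard_normal((n, n)))

def analyze(filters, patches):
    """Coefficients of each patch (column) with respect to each filter."""
    return filters.T @ patches

def synthesize(filters, coeffs):
    """Rebuild patches from their filter coefficients."""
    return filters @ coeffs

def hard_threshold(coeffs, t):
    """Zero out coefficients whose magnitude is at most t."""
    return np.where(np.abs(coeffs) > t, coeffs, 0.0)

patches = rng.standard_normal((n, 50))

# With all n filters, analysis followed by synthesis is the identity.
full = synthesize(D, analyze(D, patches))

# With only k < n filters, the same pipeline is an orthogonal projection.
k = 6
D_k = D[:, :k]
partial = synthesize(D_k, analyze(D_k, patches))

# Denoising-style use: threshold small coefficients before synthesis.
denoised = synthesize(D_k, hard_threshold(analyze(D_k, patches), 0.5))
```

Using fewer filters reduces the number of coefficients computed per patch from `n` to `k`, which is one plausible source of the computational saving the abstract mentions.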

Inexact Alternating Direction Method Based on Newton descent algorithm with Application to Poisson Image Deblurring

Mar 16, 2015

Abstract: The recovery of images from observations that are degraded by a linear operator and further corrupted by Poisson noise is an important task in modern imaging applications such as astronomy and biomedicine. Gradient-based regularizers, including the popular total variation semi-norm, have become standard techniques for Poisson image restoration because of their edge-preserving ability. Various efficient algorithms have been developed for solving the corresponding minimization problem with non-smooth regularization terms. In this paper, motivated by the alternating direction minimization algorithm and by Newton's method with its fast convergence rate, we propose inexact alternating direction methods that utilize the proximal Hessian matrix information of the objective function, in a way reminiscent of Newton descent methods. We also investigate the global convergence of the proposed algorithms under certain conditions. Finally, we illustrate through numerical experiments on Poisson image deblurring that the proposed algorithms outperform the current state-of-the-art algorithms.
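
For orientation, a TV-regularized Poisson deblurring model of the kind this abstract refers to is commonly written as below. The symbols are assumptions chosen for illustration ($K$ the linear blur operator, $f$ the observed counts, $u$ the unknown image, $\lambda > 0$ the regularization parameter); the abstract does not state the paper's exact formulation.

$$
\min_{u \ge 0} \; \sum_{i} \bigl( (Ku)_i - f_i \log (Ku)_i \bigr) + \lambda \, \|\nabla u\|_1
$$

The first term is the negative Poisson log-likelihood up to a constant that does not depend on $u$, and the second term is a discrete total variation semi-norm.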

Fixed Point Algorithm Based on Quasi-Newton Method for Convex Minimization Problem with Application to Image Deblurring

Dec 15, 2014

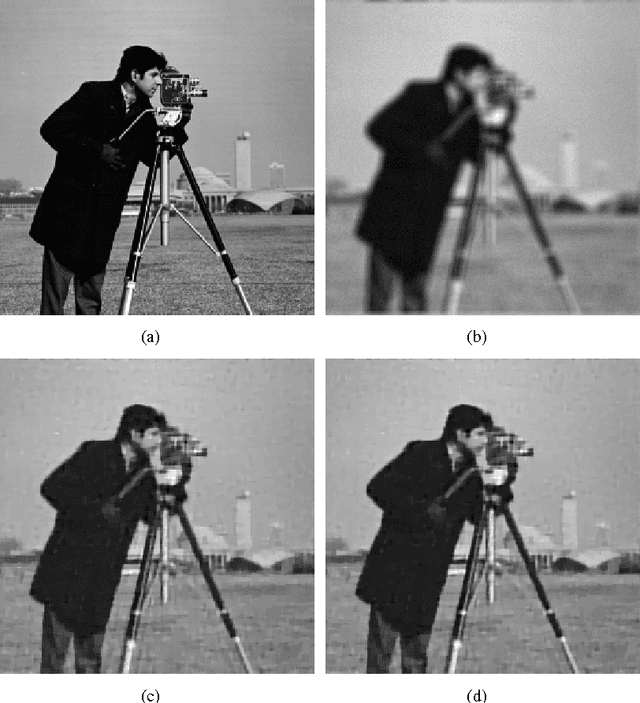

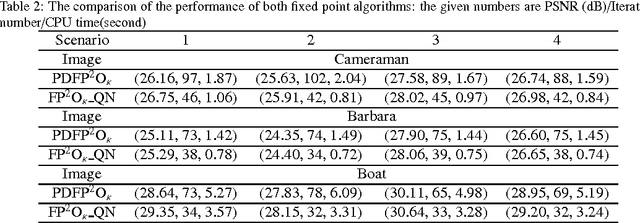

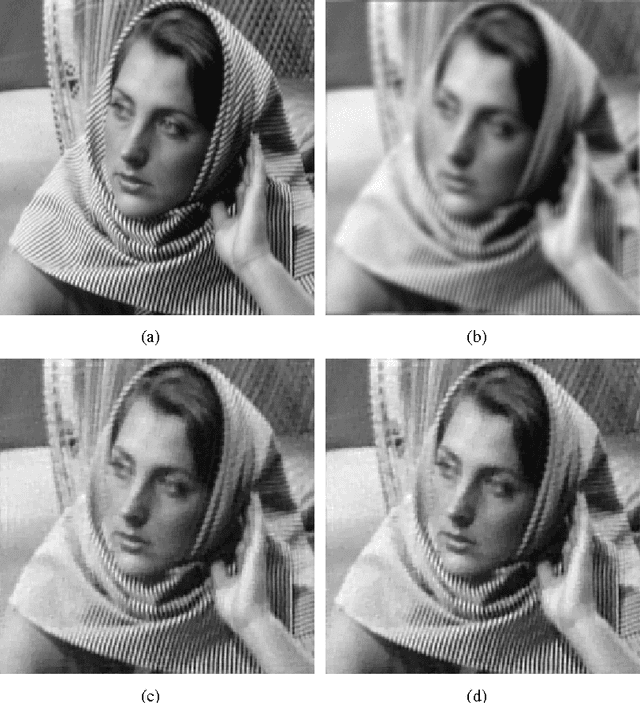

Abstract: Solving an optimization problem whose objective function is the sum of two convex functions has recently received considerable interest in the context of image processing. In particular, we are interested in the scenario where a non-differentiable convex function such as the total variation (TV) norm is included in the objective function, because many variational models established in image processing have this structure. In this paper, we propose a fast fixed-point algorithm based on the quasi-Newton method for solving this class of problems, and apply it to TV-based image deblurring. The novel method is derived from the idea of the quasi-Newton method and from the fixed-point algorithms based on the proximity operator, which have been widely investigated recently. Utilizing the non-expansive property of the proximity operator, we further investigate the global convergence of the proposed algorithm. Numerical experiments on image deblurring problems with additive or multiplicative noise demonstrate that the proposed algorithm is superior to the recently developed fixed-point algorithm in computational efficiency.
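
The two ingredients named in this abstract, a proximity operator and a fixed-point iteration built on it, can be sketched on a simpler problem. The example below is the standard proximal gradient (forward-backward) iteration for an $\ell_1$-regularized least-squares problem, not the paper's quasi-Newton scheme and not a TV model; the matrix `A`, the data `b`, and the parameter `lam` are hypothetical.

```python
import numpy as np

def soft_threshold(x, lam):
    """Proximity operator of lam * ||x||_1 (componentwise shrinkage)."""
    return np.sign(x) * np.maximum(np.abs(x) - lam, 0.0)

def objective(A, b, lam, x):
    """0.5 * ||Ax - b||^2 + lam * ||x||_1."""
    return 0.5 * np.sum((A @ x - b) ** 2) + lam * np.sum(np.abs(x))

def forward_backward(A, b, lam, iters=200):
    """Fixed-point iteration x = prox(x - step * grad f(x))."""
    # Step 1/L, where L = ||A||_2^2 is the Lipschitz constant of the gradient.
    step = 1.0 / np.linalg.norm(A, 2) ** 2
    x = np.zeros(A.shape[1])
    for _ in range(iters):
        grad = A.T @ (A @ x - b)          # gradient of the smooth term
        x = soft_threshold(x - step * grad, step * lam)
    return x

rng = np.random.default_rng(0)
A = rng.standard_normal((30, 60))         # hypothetical linear operator
b = rng.standard_normal(30)               # hypothetical observations
lam = 0.1
x_hat = forward_backward(A, b, lam)

# Non-expansiveness: the prox never increases the distance between two points.
u, v = rng.standard_normal(60), rng.standard_normal(60)
gap_after = np.linalg.norm(soft_threshold(u, lam) - soft_threshold(v, lam))
gap_before = np.linalg.norm(u - v)
```

The non-expansiveness checked at the end is the property that convergence arguments for such fixed-point schemes typically rely on.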

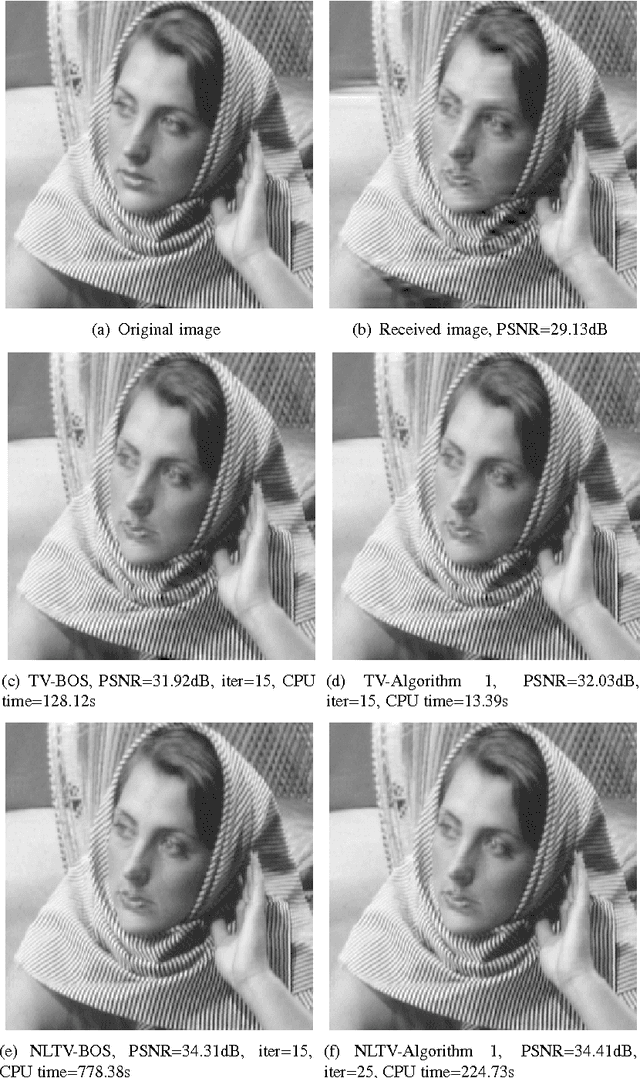

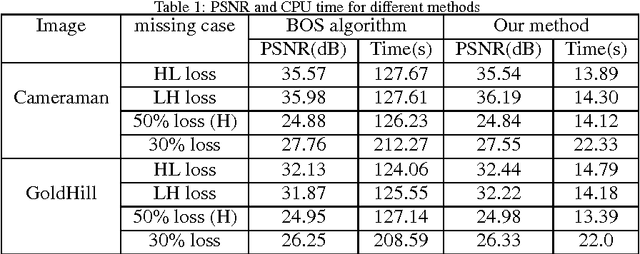

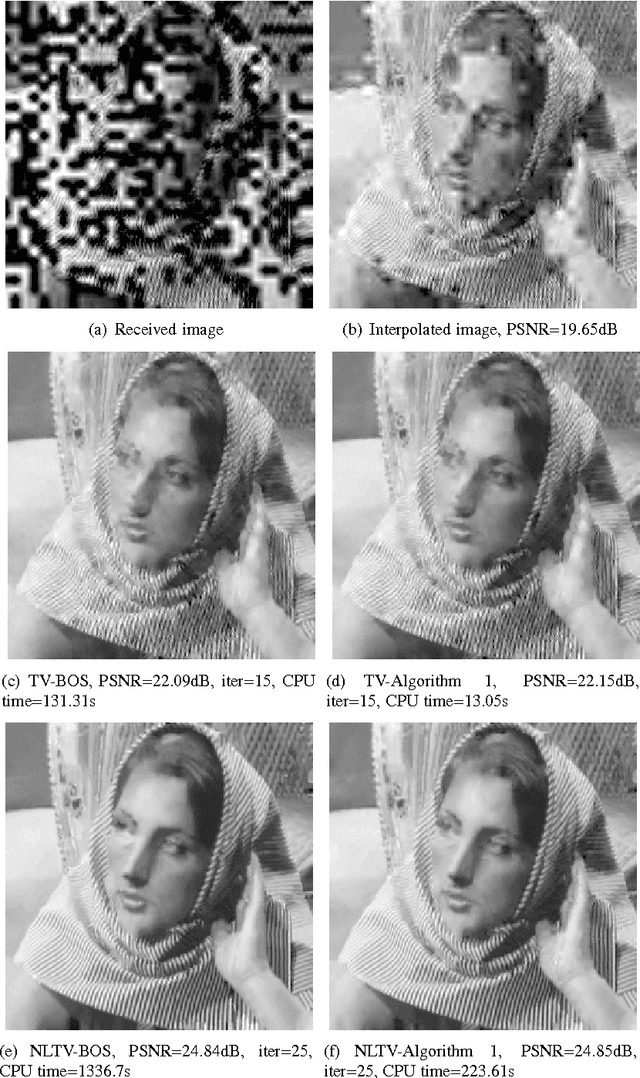

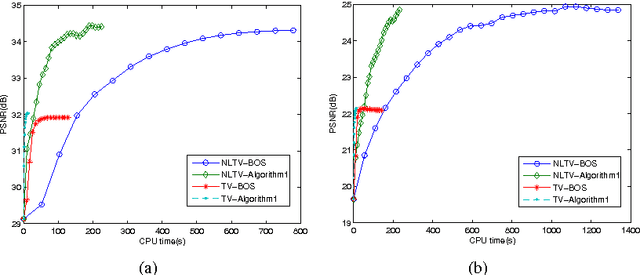

Novel variational model for inpainting in the wavelet domain

May 14, 2013

Abstract: Wavelet domain inpainting refers to the process of recovering coefficients that are lost during the image compression or transmission stage. Recently, an efficient algorithmic framework called Bregmanized operator splitting (BOS) was proposed for solving the classical variational model of wavelet inpainting. However, it is still somewhat time-consuming because of its inner iteration. In this paper, a novel variational model is established to formulate this reconstruction problem from the viewpoint of image decomposition. An efficient iterative algorithm based on the split-Bregman method is then adopted to compute an optimal solution, and it is proved to be convergent. Compared with the BOS algorithm, the proposed algorithm avoids the inner iteration and hence is simpler. Numerical experiments demonstrate that the proposed method is very efficient and outperforms the current state-of-the-art methods, especially in computational time.

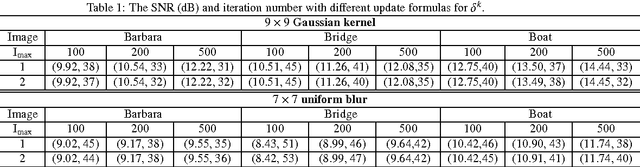

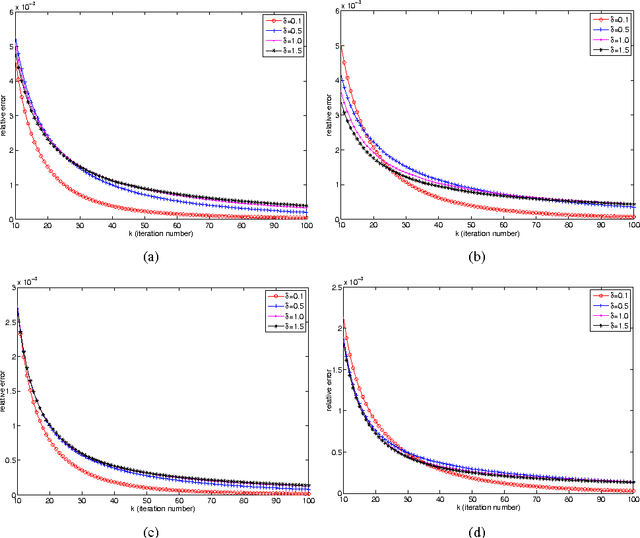

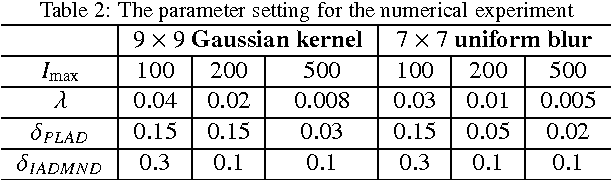

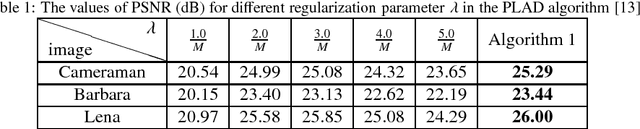

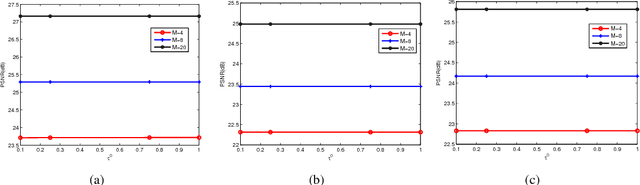

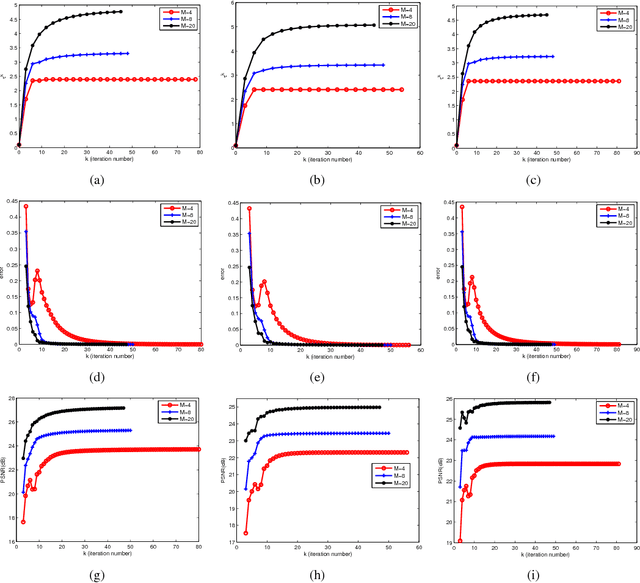

Fast Linearized Alternating Direction Minimization Algorithm with Adaptive Parameter Selection for Multiplicative Noise Removal

May 14, 2013

Abstract: Owing to the edge-preserving ability and low computational cost of the total variation (TV), variational models with TV regularization have been widely investigated in the field of multiplicative noise removal. The successful application of these models depends on two key points: the optimal selection of the regularization parameter, which balances the data-fidelity term against the TV regularizer, and an efficient algorithm to compute the solution. In this paper, we propose two fast algorithms based on the linearization technique, which are able to estimate the regularization parameter and recover the image simultaneously. In each iteration of the proposed algorithms, the regularization parameter is adjusted by a special discrepancy function defined for multiplicative noise. The convergence properties of the proposed algorithms are proved under certain conditions, and numerical experiments demonstrate that the proposed algorithms overall outperform some state-of-the-art methods in PSNR values and computational time.

* 23 pages
