Dae Kyung Sohn

Moving from 2D to 3D: volumetric medical image classification for rectal cancer staging

Sep 13, 2022

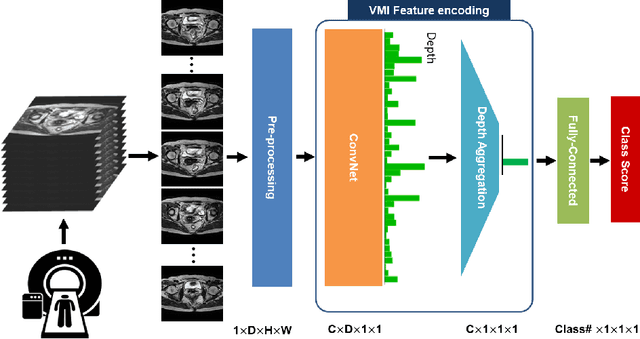

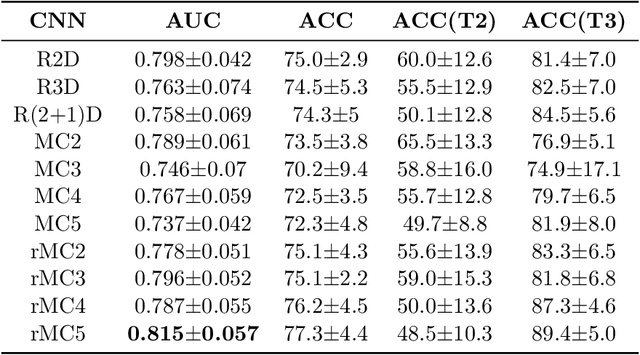

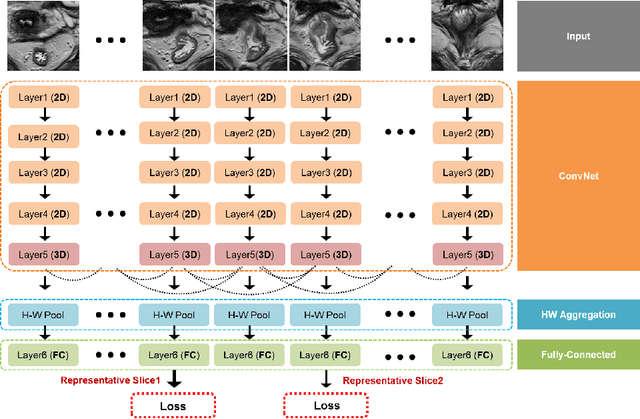

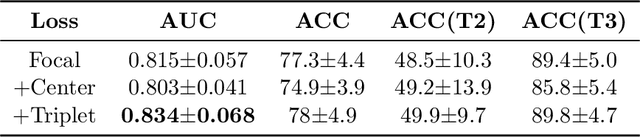

Abstract: Volumetric images from Magnetic Resonance Imaging (MRI) provide invaluable information for the preoperative staging of rectal cancer. Above all, accurate preoperative discrimination between the T2 and T3 stages is arguably both the most challenging and the most clinically significant task in rectal cancer treatment, as chemo-radiotherapy is usually recommended to patients with T3 (or greater) stage cancer. In this study, we present a volumetric convolutional neural network that discriminates T2 from T3 stage rectal cancer using rectal MR volumes. Specifically, we propose 1) a custom ResNet-based volume encoder that models the inter-slice relationship with late fusion (i.e., 3D convolution at the last layer), 2) a bilinear computation that aggregates the resulting features from the encoder to create a volume-wise feature, and 3) a joint minimization of triplet loss and focal loss. With MR volumes of pathologically confirmed T2/T3 rectal cancer, we perform extensive experiments to compare various designs within the framework of residual learning. As a result, our network achieves an AUC of 0.831, which is higher than the reported accuracy of the professional radiologist groups. We believe this method can be extended to other volume analysis tasks.
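The joint objective named in the abstract (triplet loss plus focal loss) can be illustrated with a minimal sketch. This is not the authors' implementation: the abstract does not say how the two terms are weighted, how triplets are mined, or which hyperparameters are used, so `weight`, `gamma`, `alpha`, and `margin` below are assumed placeholders, and the feature vectors stand in for the volume-wise features produced by the encoder.

```python
import math

def focal_loss(prob_pos, label, gamma=2.0, alpha=0.25, eps=1e-12):
    """Binary focal loss for one sample; prob_pos is the predicted P(label == 1).

    gamma and alpha are common default values, assumed here for illustration.
    """
    p_t = prob_pos if label == 1 else 1.0 - prob_pos
    alpha_t = alpha if label == 1 else 1.0 - alpha
    # The (1 - p_t)^gamma factor down-weights samples that are already easy.
    return -alpha_t * (1.0 - p_t) ** gamma * math.log(max(p_t, eps))

def euclidean(u, v):
    """Euclidean distance between two equal-length feature vectors."""
    return math.sqrt(sum((a - b) ** 2 for a, b in zip(u, v)))

def triplet_loss(anchor, positive, negative, margin=1.0):
    """Hinge on d(anchor, positive) - d(anchor, negative) + margin."""
    return max(0.0, euclidean(anchor, positive) - euclidean(anchor, negative) + margin)

def joint_loss(prob_pos, label, anchor, positive, negative, weight=1.0):
    """Weighted sum of the two terms; the weight is an assumed hyperparameter."""
    return focal_loss(prob_pos, label) + weight * triplet_loss(anchor, positive, negative)
```

In this sketch the focal term acts on the classifier output while the triplet term acts on the feature embedding, so a same-stage volume (`positive`) is pulled closer to the anchor than a different-stage volume (`negative`).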

Multi-Task Learning with a Fully Convolutional Network for Rectum and Rectal Cancer Segmentation

Feb 01, 2019

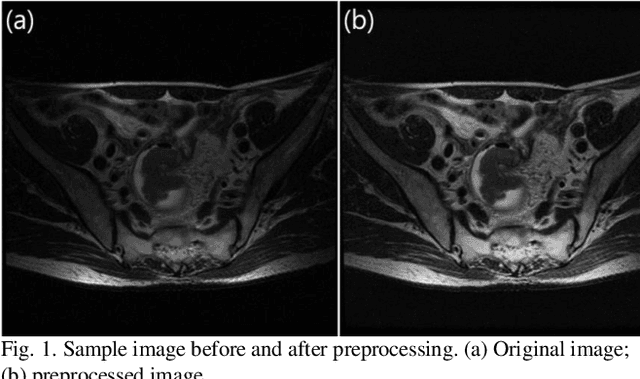

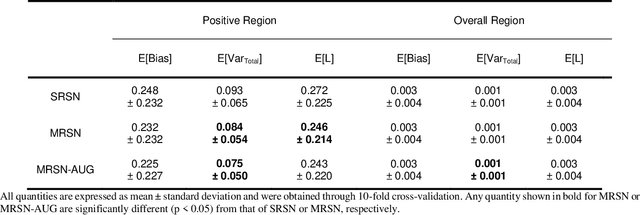

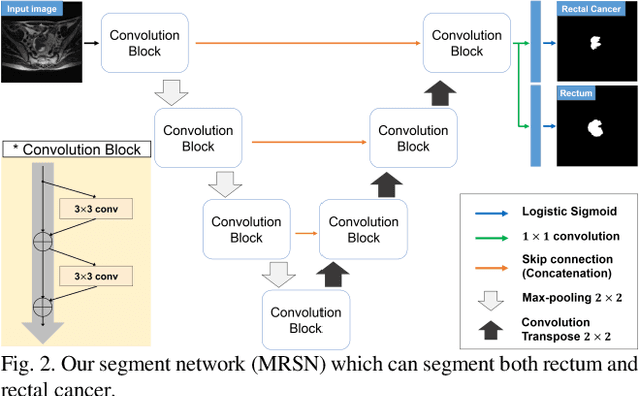

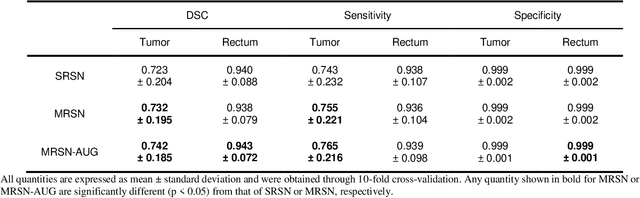

Abstract: In rectal cancer treatment planning, the locations of the rectum and the rectal cancer play an important role. The aim of this study is to propose a fully automatic method to segment both the rectum and rectal cancer from axial T2-weighted magnetic resonance images. We present a fully convolutional network for multi-task learning to segment both the rectum and rectal cancer. Moreover, we propose an assessment method based on bias-variance decomposition to visualize and measure the regional model robustness of a segmentation network. In addition, we suggest a novel augmentation method that can improve the segmentation performance and reduce the training time. Our proposed method is not only computationally efficient due to its fully convolutional nature but also outperforms the current state of the art in rectal cancer segmentation. It also shows high accuracy in rectum segmentation, for which no previous studies exist. We conclude that rectum information benefits the training of a rectal cancer segmentation model, especially concerning model variance.
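The abstract does not spell out its bias-variance assessment, so the following is only a sketch of the textbook squared-error decomposition applied per pixel, assuming several independently trained models each output a probability map for the same image. The function name and the flattened-list input layout are hypothetical choices made for illustration.

```python
def pixelwise_bias_variance(predictions, truth):
    """Per-pixel squared bias and variance across K model outputs.

    predictions: list of K flattened probability maps (lists of floats).
    truth: flattened ground-truth mask (0 or 1 per pixel).
    """
    k = len(predictions)
    n = len(truth)
    # Mean prediction of the K models at each pixel.
    mean = [sum(p[i] for p in predictions) / k for i in range(n)]
    # Squared bias: how far the average prediction is from the ground truth.
    bias_sq = [(mean[i] - truth[i]) ** 2 for i in range(n)]
    # Variance: how much the individual models disagree with their average.
    variance = [sum((p[i] - mean[i]) ** 2 for p in predictions) / k for i in range(n)]
    return bias_sq, variance
```

Under this decomposition, the mean squared error at each pixel equals squared bias plus variance, so the two returned maps can be reshaped to the image grid and viewed side by side to see where models are consistently wrong versus where they merely disagree.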
