Dónal M. McSweeney

A multi-channel cycleGAN for CBCT to CT synthesis

Dec 04, 2023

Abstract: Image synthesis is used to generate synthetic CTs (sCTs) from on-treatment cone-beam CTs (CBCTs), with a view to improving image quality and enabling accurate dose computation to facilitate a CBCT-based adaptive radiotherapy workflow. As this area of research gains momentum, developments in sCT generation methods are difficult to compare due to the lack of large public datasets and sizeable variation in training procedures. To compare and assess the latest advancements in sCT generation, the SynthRAD2023 challenge provides a public dataset and evaluation framework for both MR-to-sCT and CBCT-to-sCT synthesis. Our contribution focuses on the second task, CBCT-to-sCT synthesis. By leveraging a multi-channel input to emphasize specific image features, our approach addresses some of the challenges inherent in CBCT imaging, whilst restoring the contrast necessary for accurate visualisation of patients' anatomy. Additionally, we introduce an auxiliary fusion network to further enhance the fidelity of generated sCT images.
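The abstract does not say how the multi-channel input is constructed. One common way to emphasize different image features is intensity windowing, where each channel holds the same volume clipped to a different intensity range. The sketch below illustrates that general idea only; the function name `window_channels` and the window limits are hypothetical and are not taken from the paper.

```python
import numpy as np

def window_channels(volume, windows):
    """Stack one intensity-windowed copy of `volume` per (low, high) window.

    Assumption: windowing is used here purely as an illustration of a
    multi-channel input; the paper's actual channels are not specified.
    """
    volume = np.asarray(volume, dtype=np.float32)
    channels = []
    for low, high in windows:
        # Clip to the window, then rescale the window to [0, 1].
        clipped = np.clip(volume, low, high)
        channels.append((clipped - low) / (high - low))
    # Channel-first layout: (n_channels, *volume.shape).
    return np.stack(channels, axis=0)

# Hypothetical windows: a wide range and a narrower soft-tissue-like range.
example_windows = [(-1000.0, 1000.0), (-160.0, 240.0)]
example = window_channels(np.array([[-1000.0, 0.0, 1000.0]]), example_windows)
```

Each channel spans the full [0, 1] range over its own window, so a narrow window spreads low-contrast structures across more of the input range than a single wide window would.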

COBRA: Cpu-Only aBdominal oRgan segmentAtion

Jul 21, 2022

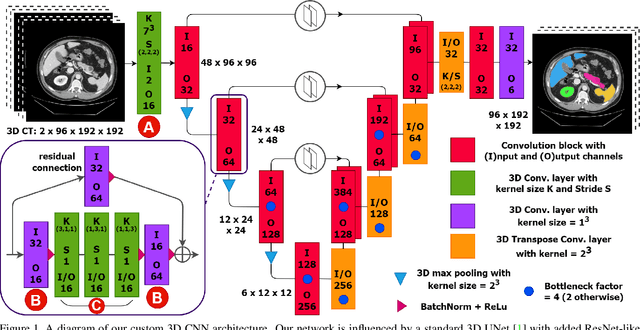

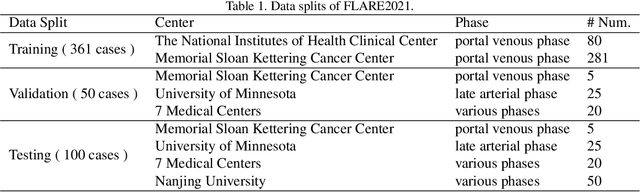

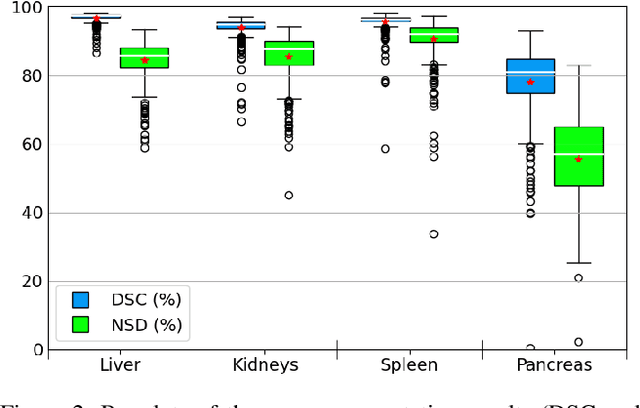

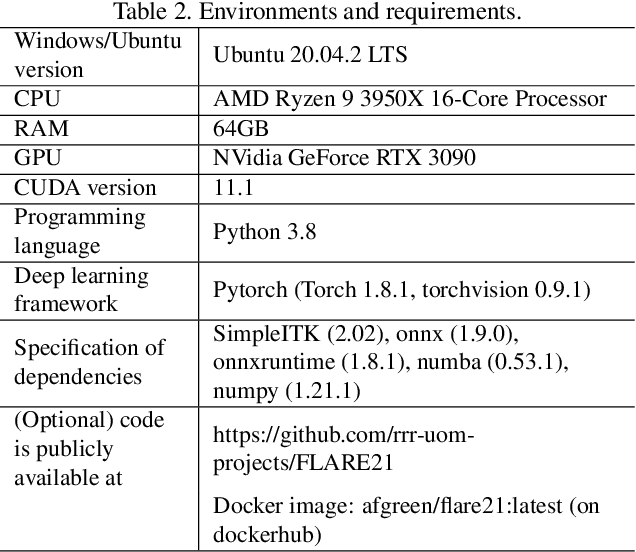

Abstract: Abdominal organ segmentation is a difficult and time-consuming task. To reduce the burden on clinical experts, fully-automated methods are highly desirable. Current approaches are dominated by Convolutional Neural Networks (CNNs); however, the computational requirements and the need for large datasets limit their application in practice. By implementing a small and efficient custom 3D CNN, compiling the trained model and optimizing the computational graph, our approach produces high-accuracy segmentations (Dice Similarity Coefficient (%): Liver: 97.3$\pm$1.3, Kidneys: 94.8$\pm$3.6, Spleen: 96.4$\pm$3.0, Pancreas: 80.9$\pm$10.1) at a rate of 1.6 seconds per image. Crucially, we are able to perform segmentation inference solely on CPU (no GPU required), thereby facilitating easy and widespread deployment of the model without specialist hardware.
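For readers unfamiliar with the metric reported above, the Dice Similarity Coefficient between a predicted mask $A$ and a reference mask $B$ is $2|A \cap B| / (|A| + |B|)$. The following is a minimal sketch of that standard definition for binary masks, not the authors' evaluation code; the function name `dice_coefficient` and the choice to return 1.0 when both masks are empty are assumptions.

```python
import numpy as np

def dice_coefficient(pred, truth):
    """Dice Similarity Coefficient for two binary masks of equal shape."""
    pred = np.asarray(pred, dtype=bool)
    truth = np.asarray(truth, dtype=bool)
    denom = pred.sum() + truth.sum()
    if denom == 0:
        # Assumption: two empty masks are treated as a perfect match.
        return 1.0
    overlap = np.logical_and(pred, truth).sum()
    return float(2.0 * overlap / denom)

# Example: two foreground voxels predicted, one of them correct.
pred_mask = np.array([[1, 1], [0, 0]])
true_mask = np.array([[1, 0], [0, 0]])
score = dice_coefficient(pred_mask, true_mask)  # 2 * 1 / (2 + 1)
```

Multiplying the result by 100 gives the percentage form used in the abstract.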
