Coleman DeLude

A Fast Broadband Beamspace Transformation

Dec 09, 2025

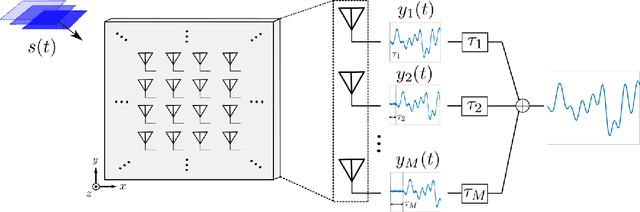

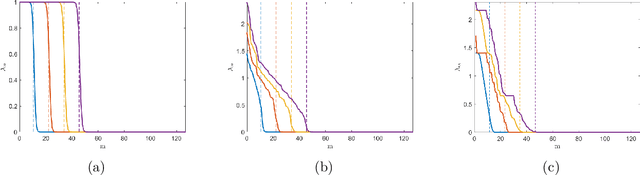

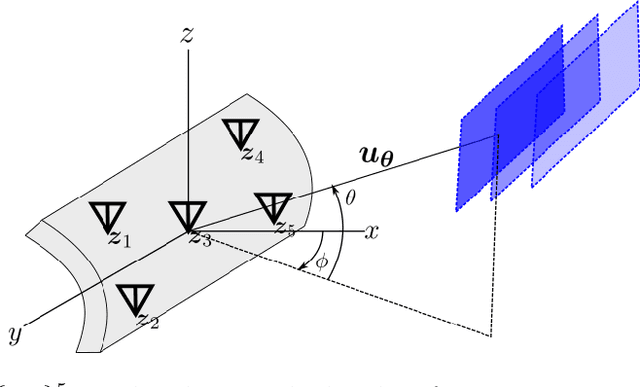

Abstract: We present a new computationally efficient method for multi-beamforming in the broadband setting. Our "fast beamspace transformation" forms $B$ beams from $M$ sensor outputs using a number of operations per sample that scales linearly (to within logarithmic factors) with $M$ when $B\sim M$. While the narrowband version of this transformation can be performed efficiently with a spatial fast Fourier transform, the broadband setting requires coherent processing of multiple array snapshots simultaneously. Our algorithm works by taking $N$ samples from each of the $M$ sensors and encoding the sensor outputs into a set of coefficients using a special non-uniformly spaced Fourier transform. From these coefficients, each beam is formed by solving a small system of equations that has Toeplitz structure. The total runtime complexity is $\mathcal{O}(M\log N+B\log N)$ operations per sample, exhibiting essentially the same scaling as in the narrowband case and vastly outperforming broadband beamformers based on delay-and-sum, whose computations scale as $\mathcal{O}(MB)$. Alongside a careful mathematical formulation and analysis of our fast broadband beamspace transform, we provide a host of numerical experiments demonstrating the algorithm's favorable computational scaling and high accuracy. Finally, we demonstrate how tasks such as interpolating to "off-grid" angles and nulling an interferer are more computationally efficient when performed directly in beamspace.
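To make the narrowband baseline mentioned in the abstract concrete, here is a minimal sketch of a narrowband beamspace transformation computed with a spatial FFT. It covers only the narrowband case, not the broadband algorithm of the paper. The uniform linear array, the choice $B = M$ with beams on the DFT grid, and all function and variable names are assumptions made for illustration.

```python
import numpy as np

def narrowband_beamspace_direct(snapshot):
    """Form M beams by an explicit M x M matrix multiply (O(M^2) operations)."""
    M = snapshot.shape[0]
    m = np.arange(M)
    # Row b holds the conjugate steering vector for spatial frequency b / M
    # (assumed uniform linear array, beams placed on the DFT grid).
    W = np.exp(-2j * np.pi * np.outer(m, m) / M)
    return W @ snapshot

def narrowband_beamspace_fft(snapshot):
    """Form the same M beams with a spatial FFT (O(M log M) operations)."""
    return np.fft.fft(snapshot, axis=0)

rng = np.random.default_rng(0)
M = 64
snapshot = rng.standard_normal(M) + 1j * rng.standard_normal(M)

# The two computations agree up to floating point error.
max_error = np.max(np.abs(narrowband_beamspace_direct(snapshot)
                          - narrowband_beamspace_fft(snapshot)))

# A plane wave at spatial frequency k / M concentrates in beam k.
k = 5
plane_wave = np.exp(2j * np.pi * np.arange(M) * k / M)
peak_beam = int(np.argmax(np.abs(narrowband_beamspace_fft(plane_wave))))
```

The FFT handles a single array snapshot at a time, which is why it does not carry over directly to the broadband setting, where multiple snapshots must be processed coherently.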

Robust Broadband Beamforming using Bilinear Programming

Jun 24, 2024

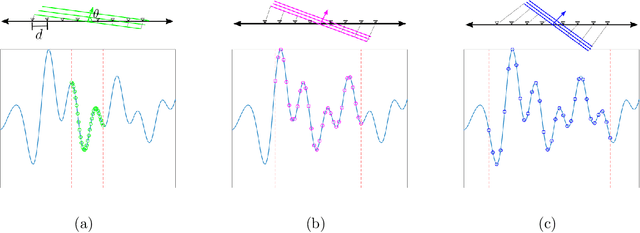

Abstract: We introduce a new method for robust beamforming, where the goal is to estimate a signal from array samples when there is uncertainty in the angle of arrival. Our method offers state-of-the-art performance on narrowband signals and is naturally applied to broadband signals. Our beamformer operates by treating the forward model for the array samples as unknown. We show that the "true" forward model lies in the linear span of a small number of fixed linear systems. As a result, we can estimate the forward operator and the signal simultaneously by solving a bilinear inverse problem using least squares. Our numerical experiments show that if the angle of arrival is known only to lie within an interval of reasonable size, there is very little loss in estimation performance compared to the case where the angle is known exactly.

Real-time Digital RF Emulation -- I: The Direct Path Computational Model

Jun 13, 2024

Abstract: In this paper we consider the problem of developing a computational model for emulating an RF channel. The motivation is that an accurate and scalable emulator has the potential to minimize the need for field testing, which is expensive, slow, and difficult to replicate. Traditionally, emulators are built using a tapped delay line model where long filters modeling the physical interactions of objects are implemented directly. For an emulation scenario consisting of $M$ objects all interacting with one another, the tapped delay line model's computational requirements scale as $O(M^3)$ per sample: there are $O(M^2)$ channels, each with $O(M)$ complexity. In this paper, we develop a new "direct path" model that, while remaining physically faithful, allows us to carefully factor the emulator operations, resulting in an $O(M^2)$ per-sample scaling of the computational requirements. The impact is drastic: a $200$-object scenario sees about a $100\times$ reduction in the number of per-sample computations. Furthermore, the direct path model gives us a natural way to distribute the computations for an emulation: each object is mapped to a computational node, and these nodes are networked in a fully connected communication graph. Alongside a discussion of the model and the physical phenomena it emulates, we show how to efficiently parameterize antenna responses and scattering profiles within this direct path framework. To verify the model and demonstrate its viability in hardware, we provide several numerical experiments produced using a cycle-level C++ simulator of a hardware implementation of the model.
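The scaling argument above can be illustrated with a short calculation. This sketch assumes every hidden constant equals one, which the abstract does not state; under that assumption the ratio of the two counts is exactly $M$. The abstract's reported figure of about $100\times$ for $200$ objects reflects constants that this sketch does not model. The function names are hypothetical.

```python
def tapped_delay_line_ops(num_objects):
    """O(M^2) channels, each with O(M) cost; unit constants are an assumption."""
    num_channels = num_objects ** 2
    cost_per_channel = num_objects
    return num_channels * cost_per_channel

def direct_path_ops(num_objects):
    """O(M^2) per-sample cost; unit constant is an assumption."""
    return num_objects ** 2

M = 200
asymptotic_ratio = tapped_delay_line_ops(M) / direct_path_ops(M)
# With unit constants the ratio is M itself; the true reduction depends on
# constants the abstract does not give.
```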

Real-time Digital RF Emulation -- II: A Near Memory Custom Accelerator

Jun 13, 2024

Abstract: A near memory hardware accelerator, based on a novel direct path computational model, for real-time emulation of radio frequency systems is demonstrated. Our evaluation of hardware performance uses both application-specific integrated circuit (ASIC) and field programmable gate array (FPGA) methodologies. (1) The ASIC testchip implementation, using TSMC 28nm CMOS, leverages distributed autonomous control to extract concurrency in compute as well as low latency. It achieves a $518$ MHz per-channel bandwidth in a prototype $4$-node system. The maximum emulation range supported in this paradigm is $9.5$ km with $0.24$ $\mu$s of per-sample emulation latency. (2) The FPGA-based implementation, evaluated on a Xilinx ZCU104 board, demonstrates a $9$-node test case (two transmitters, one receiver, and $6$ passive reflectors) with an emulation range of $1.13$ km to $27.3$ km at $215$ MHz bandwidth.

Slepian Beamforming: Broadband Beamforming using Streaming Least Squares

Dec 06, 2023

Abstract: In this paper we revisit the classical problem of estimating a signal as it impinges on a multi-sensor array. We focus on the case where the impinging signal's bandwidth is appreciable, so that the array operates in a broadband regime. Estimating broadband signals, often termed broadband (or wideband) beamforming, is traditionally done through filter and summation, true time delay, or a coupling of the two. Our proposed method deviates substantially from these paradigms in that it requires no notion of filtering or true time delay. We use blocks of samples taken directly from the sensor outputs to fit a robust Slepian subspace model using a least squares approach. We then leverage this model to estimate uniformly spaced samples of the impinging signal. Alongside a careful discussion of this model and how to choose its parameters, we show how to fit the model to new blocks of samples as they are received, producing a streaming output. We then show how this method naturally extends to adaptive beamforming scenarios, where we leverage signal statistics to attenuate interfering sources. Finally, we discuss how to use our model to estimate from dimensionality-reducing measurements. Accompanying these discussions are extensive numerical experiments establishing that our method outperforms existing filter-based approaches while being comparable in terms of computational complexity.
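As a rough illustration of fitting a Slepian subspace model by least squares, the sketch below treats a single sensor and a single block of samples. It is not the multi-sensor streaming beamformer of the paper. The block length, bandwidth, basis size, noise level, and test signal are all assumptions chosen for the example, and the basis comes from SciPy's `dpss` window function.

```python
import numpy as np
from scipy.signal.windows import dpss

N = 128                               # block length (assumed)
W = 0.05                              # half bandwidth in cycles/sample (assumed)
K = int(np.ceil(2 * N * W)) + 6       # roughly 2NW basis vectors plus a margin

# Columns are the first K discrete prolate spheroidal (Slepian) sequences.
basis = dpss(N, N * W, Kmax=K).T      # shape (N, K)

rng = np.random.default_rng(1)
n = np.arange(N)
# Assumed test signal: a few sinusoids inside the modeled band.
freqs = rng.uniform(-0.8 * W, 0.8 * W, size=5)
phases = rng.uniform(0.0, 2.0 * np.pi, size=5)
clean = sum(np.cos(2 * np.pi * f * n + p) for f, p in zip(freqs, phases))
noisy = clean + 0.05 * rng.standard_normal(N)

# Least squares fit of the subspace model to the noisy block.
coeffs, *_ = np.linalg.lstsq(basis, noisy, rcond=None)
estimate = basis @ coeffs

rel_error = np.linalg.norm(estimate - clean) / np.linalg.norm(clean)
```

Because the basis has only $K$ columns for $N$ samples, the fit discards most of the out-of-band noise while retaining the bandlimited signal.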

Broadband Beamforming via Linear Embedding

Jun 14, 2022

Abstract: In modern applications, multi-sensor arrays are subject to an ever-present demand to accommodate signals with higher bandwidths. Standard methods for broadband beamforming, namely digital beamforming and true-time delay, are difficult and expensive to implement at scale. In this work, we explore an alternative method of broadband beamforming that uses a set of linear measurements and a robust low-dimensional signal subspace model. The linear measurements, taken directly from the sensors, serve both as a method for dimensionality reduction and as a way to limit the array readout. From these embedded samples, we show how the original samples can be recovered to within a provably small residual error using a Slepian subspace model. Previous work on multi-sensor array subspace models has largely analyzed performance from a qualitative or asymptotic perspective. In contrast, we give quantitative estimates of how well different dimensionality reduction strategies preserve the array gain. We also show how spatial and temporal correlations can be used to relax the standard Nyquist sampling criterion, how recovery can be achieved through fast algorithms, and how "hardware friendly" linear measurements can be designed.
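The idea of recovering samples from a small set of linear measurements can be sketched as follows, again for a single block of samples rather than a full array. The Gaussian measurement matrix, the dimensions, and the test signal are assumptions for illustration; the paper's "hardware friendly" measurement designs are not reproduced here.

```python
import numpy as np
from scipy.signal.windows import dpss

N = 128                               # number of original samples (assumed)
W = 0.05                              # half bandwidth in cycles/sample (assumed)
K = int(np.ceil(2 * N * W)) + 8       # Slepian subspace dimension with margin
num_measurements = 64                 # embedded dimension, K < 64 < N (assumed)

slepian = dpss(N, N * W, Kmax=K).T    # shape (N, K)

rng = np.random.default_rng(2)
n = np.arange(N)
freqs = rng.uniform(-0.7 * W, 0.7 * W, size=4)
phases = rng.uniform(0.0, 2.0 * np.pi, size=4)
samples = sum(np.cos(2 * np.pi * f * n + p) for f, p in zip(freqs, phases))

# Assumed measurement operator: a random Gaussian matrix.
Phi = rng.standard_normal((num_measurements, N)) / np.sqrt(num_measurements)
embedded = Phi @ samples              # only these values are read out

# Recover by least squares restricted to the Slepian subspace.
coeffs, *_ = np.linalg.lstsq(Phi @ slepian, embedded, rcond=None)
recovered = slepian @ coeffs

rel_error = np.linalg.norm(recovered - samples) / np.linalg.norm(samples)
```

Recovery works here because the signal lies close to a $K$-dimensional subspace and the number of measurements exceeds $K$, even though it is well below $N$.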
