Chuanjun Zheng

Efficient Proxy Raytracer for Optical Systems using Implicit Neural Representations

Jul 28, 2025Abstract:Ray tracing is a widely used technique for modeling optical systems, involving sequential surface-by-surface computations, which can be computationally intensive. We propose Ray2Ray, a novel method that leverages implicit neural representations to model optical systems with greater efficiency, eliminating the need for surface-by-surface computations in a single pass end-to-end model. Ray2Ray learns the mapping between rays emitted from a given source and their corresponding rays after passing through a given optical system in a physically accurate manner. We train Ray2Ray on nine off-the-shelf optical systems, achieving positional errors on the order of 1{\mu}m and angular deviations on the order 0.01 degrees in the estimated output rays. Our work highlights the potential of neural representations as a proxy for optical raytracer.

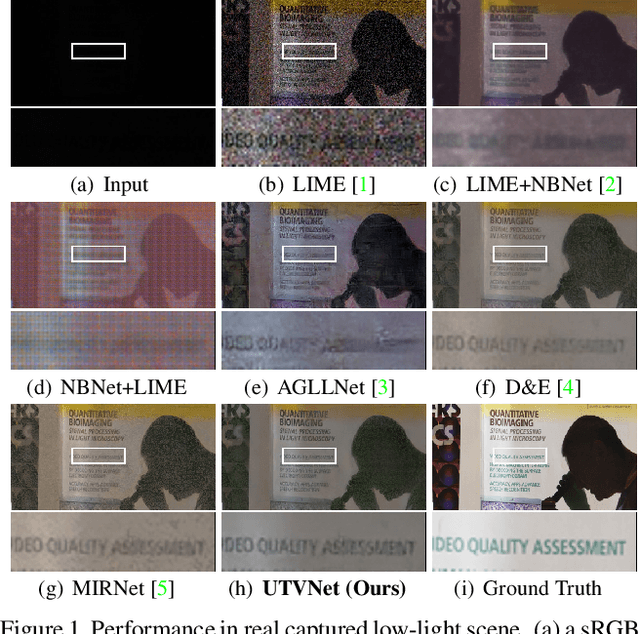

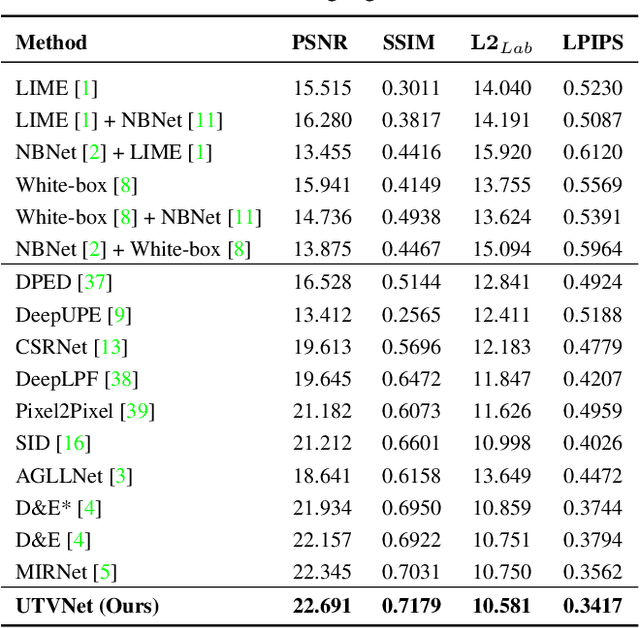

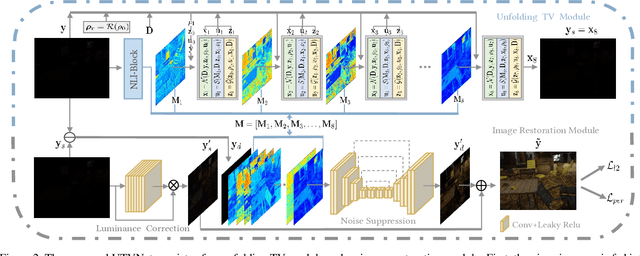

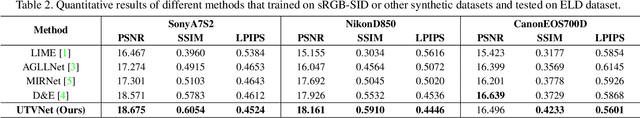

Adaptive Unfolding Total Variation Network for Low-Light Image Enhancement

Oct 07, 2021

Abstract:Real-world low-light images suffer from two main degradations, namely, inevitable noise and poor visibility. Since the noise exhibits different levels, its estimation has been implemented in recent works when enhancing low-light images from raw Bayer space. When it comes to sRGB color space, the noise estimation becomes more complicated due to the effect of the image processing pipeline. Nevertheless, most existing enhancing algorithms in sRGB space only focus on the low visibility problem or suppress the noise under a hypothetical noise level, leading them impractical due to the lack of robustness. To address this issue,we propose an adaptive unfolding total variation network (UTVNet), which approximates the noise level from the real sRGB low-light image by learning the balancing parameter in the model-based denoising method with total variation regularization. Meanwhile, we learn the noise level map by unrolling the corresponding minimization process for providing the inferences of smoothness and fidelity constraints. Guided by the noise level map, our UTVNet can recover finer details and is more capable to suppress noise in real captured low-light scenes. Extensive experiments on real-world low-light images clearly demonstrate the superior performance of UTVNet over state-of-the-art methods.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge