Chuang Ye

Power Control for Wireless VBR Video Streaming: From Optimization to Reinforcement Learning

Mar 31, 2019

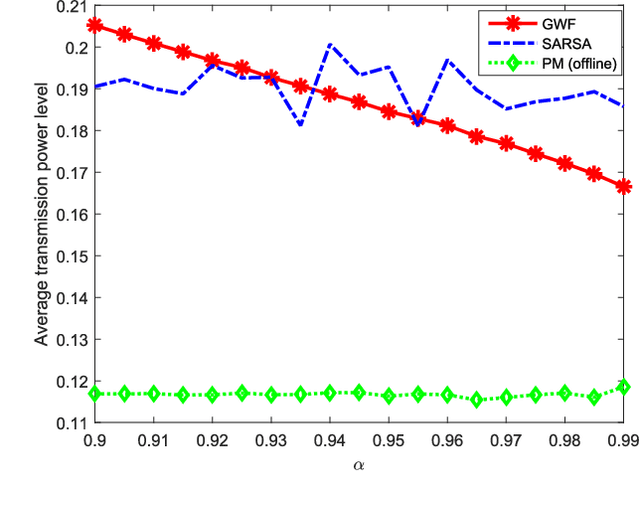

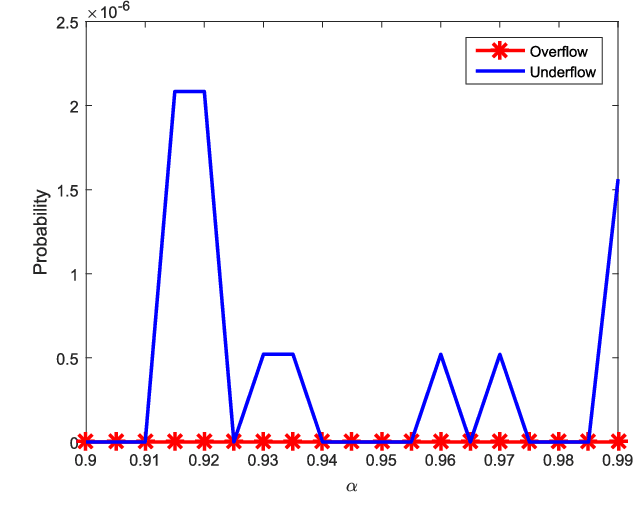

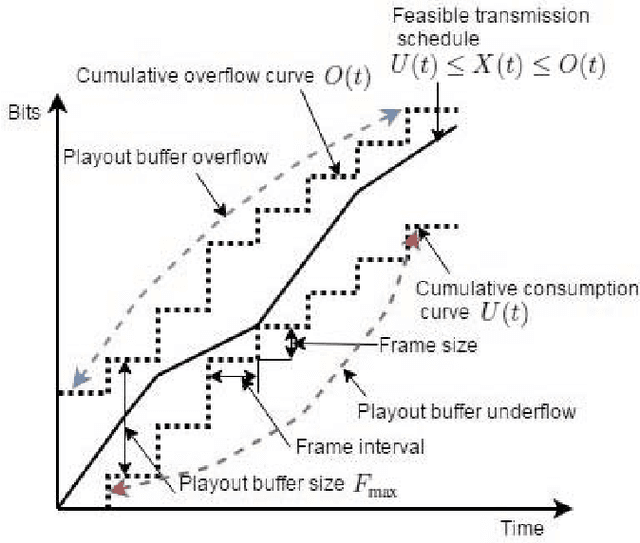

Abstract: In this paper, we investigate the problem of power control for streaming variable bit rate (VBR) videos over wireless links. We adopt a system model in which a transmitter (e.g., a base station) sends VBR video data to a receiver (e.g., a mobile user) equipped with a playout buffer, as used in dynamic adaptive streaming video applications. In this setting, we analyze power control policies with two objectives: 1) minimizing the transmit power consumption, and 2) minimizing the transmission completion time of the communication session. To play the video without interruptions, the power control policy must also ensure that the VBR video data is delivered to the mobile user without causing playout buffer underflow or overflow. A directional water-filling algorithm, which provides a simple and concise interpretation of the necessary optimality conditions, is identified as the optimal offline policy. Following this, two online policies are proposed for power control based on channel side information (CSI) prediction within a short time window. Dynamic programming is employed to implement the optimal offline policy and the first online power control policy, both of which minimize the transmit power consumption in the communication session. A reinforcement learning (RL) based approach is then employed for the second online power control policy. Simulation results show that the optimal offline power control policy that minimizes the overall power consumption leads to substantial energy savings compared to the strategy of minimizing the time duration of video streaming. We also demonstrate that the RL algorithm performs better than the dynamic programming based online grouped water-filling (GWF) strategy unless the channel is highly correlated.
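As a rough illustration of the playout buffer requirement mentioned in the abstract, the Python sketch below checks whether a given per-slot power schedule avoids both underflow and overflow. It is not the paper's algorithm. The Shannon-rate link model, the unit slot length, the choice to measure buffer occupancy at the end of each slot, and the names `buffer_feasible`, `powers`, `gains`, `frame_bits`, `buffer_bits`, and `bandwidth` are all assumptions made for illustration.

```python
import math


def buffer_feasible(powers, gains, frame_bits, buffer_bits, bandwidth=1.0):
    """Check a power schedule against playout buffer underflow and overflow.

    Assumptions (not taken from the paper): each slot has unit length, the
    achievable rate in a slot is bandwidth * log2(1 + gain * power), and the
    buffer occupancy is measured at the end of the slot, after the frame for
    that slot has been played out.
    """
    delivered = 0.0  # cumulative bits received by the user
    consumed = 0.0   # cumulative bits the player has needed so far
    tol = 1e-9       # tolerance for floating-point comparisons

    for power, gain, frame in zip(powers, gains, frame_bits):
        delivered += bandwidth * math.log2(1.0 + gain * power)
        consumed += frame

        # Underflow: the frame due in this slot has not fully arrived.
        if delivered < consumed - tol:
            return False

        # Overflow: more unplayed data than the buffer can hold.
        if delivered - consumed > buffer_bits + tol:
            return False

    return True
```

A schedule that exactly tracks the frame sizes passes even with an empty buffer, while one that delays transmission underflows and one that sends too early can overflow a small buffer. An optimizer such as the one described in the abstract would search among schedules that pass this kind of check.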

Deep Learning Based Power Control for Quality-Driven Wireless Video Transmissions

Oct 16, 2018

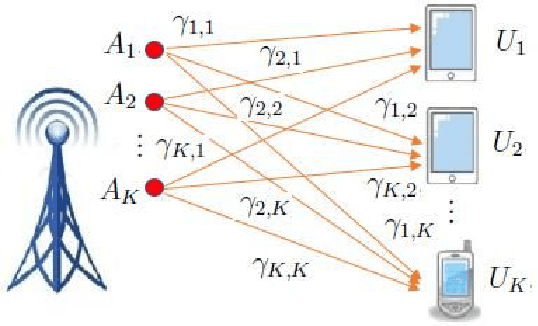

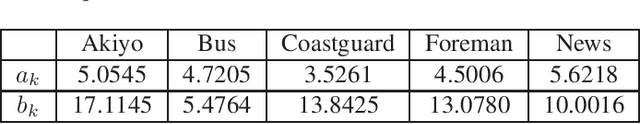

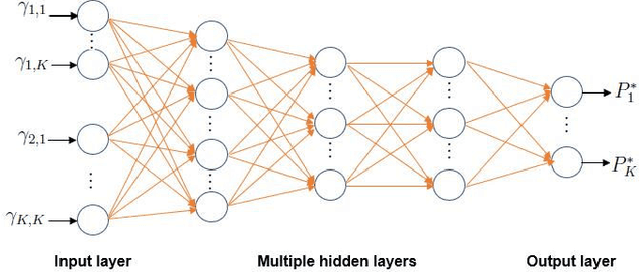

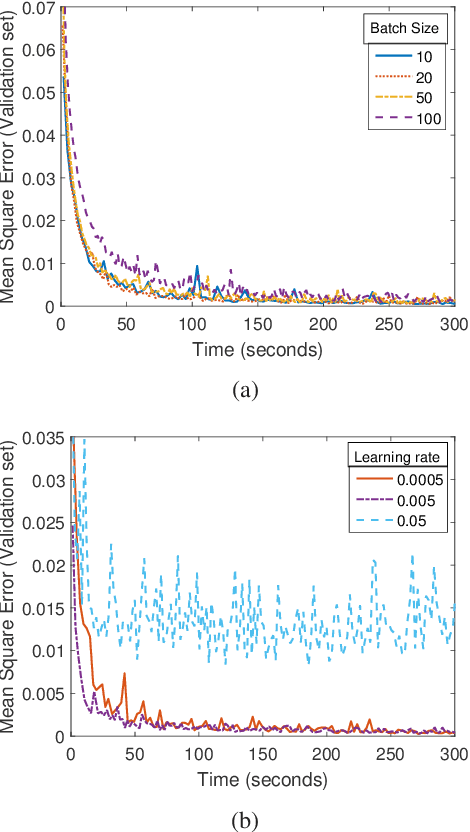

Abstract: In this paper, wireless video transmission to multiple users under total transmission power and minimum required video quality constraints is studied. In order to provide the desired performance levels to the end-users in real-time video transmissions while using the energy resources efficiently, we assume that power control is employed. Due to the presence of interference, determining the optimal power control is a non-convex problem, but it can be solved via a monotonic optimization framework. However, monotonic optimization is iterative and can often entail considerable computational complexity, making it unsuitable for real-time applications. To address this, we propose a learning-based approach that treats the input and output of a resource allocation algorithm as an unknown nonlinear mapping and employs a deep neural network (DNN) to learn this mapping. The mapping learned by the DNN can provide the optimal power level quickly for given channel conditions.
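To show why a learned mapping can be evaluated quickly, the Python sketch below runs the inference step of a small fully connected network that maps channel gains to per-user power levels: a fixed number of multiply-add passes instead of an iterative solver. This is a minimal sketch and not the network from the paper. The layer layout, the ReLU and sigmoid activations, the rescaling step that enforces the total power budget, and the names `predict_powers`, `layers`, and `total_power` are assumptions, and the weights would have to come from training on outputs of the optimization algorithm.

```python
import math


def _sigmoid(z):
    """Numerically stable logistic function."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def _dense(weights, biases, x):
    """One affine layer: weights is a list of rows, one row per output unit."""
    return [sum(w * v for w, v in zip(row, x)) + b
            for row, b in zip(weights, biases)]


def predict_powers(gains, layers, total_power):
    """Map channel gains to per-user powers with a feed-forward network.

    `layers` is a list of (weights, biases) pairs (hypothetical, assumed to
    be already trained). Hidden layers use ReLU; the output layer uses a
    sigmoid so each raw power lies in (0, total_power).
    """
    x = list(gains)
    for weights, biases in layers[:-1]:
        x = [max(0.0, z) for z in _dense(weights, biases, x)]

    weights, biases = layers[-1]
    powers = [total_power * _sigmoid(z) for z in _dense(weights, biases, x)]

    # Scale down if the raw outputs exceed the total power budget.
    used = sum(powers)
    if used > total_power:
        powers = [p * total_power / used for p in powers]
    return powers
```

The cost of one call is fixed by the layer sizes, which is the property that makes this kind of approach attractive for real-time use. Note that the rescaling step only enforces the power budget; it does not guarantee the minimum video quality constraints described in the abstract.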
