Christopher Thron

Lifetime Optimization of Dense Wireless Sensor Networks Using Continuous Ring-sector Model

Apr 12, 2021

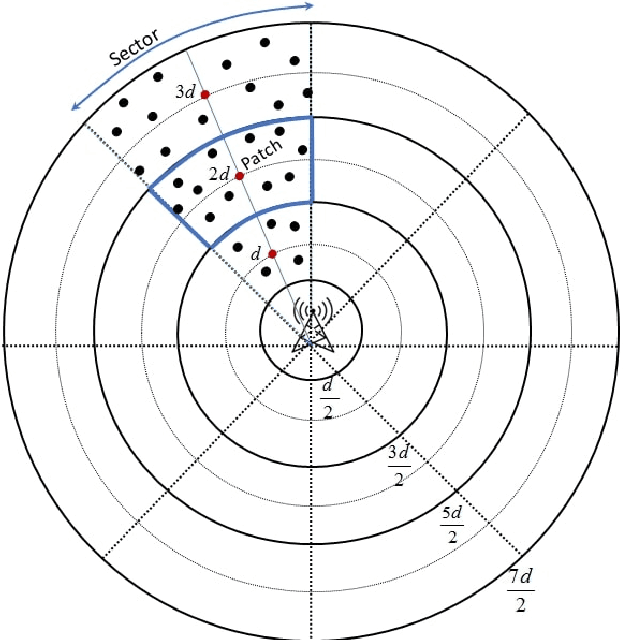

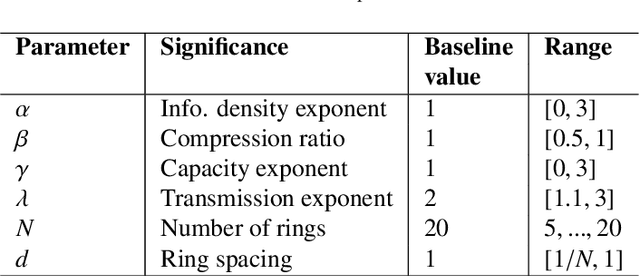

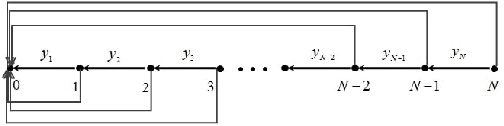

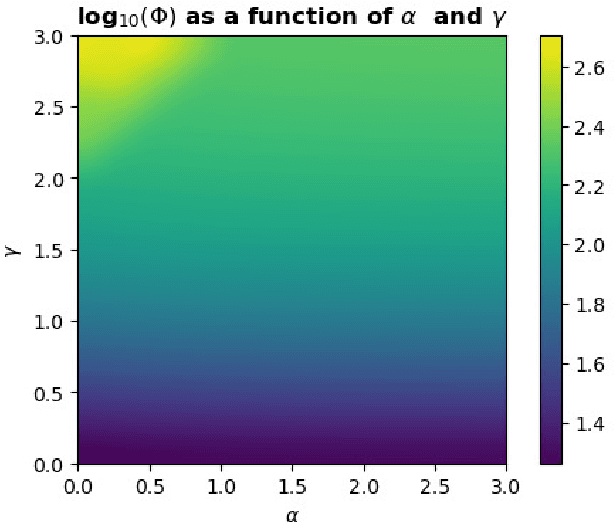

Abstract: Wireless sensor networks (WSNs) are increasingly used in applications that require remote collection of data on environmental conditions. In particular, dense WSNs are emerging as important sensing platforms for the Internet of Things (IoT). WSNs are able to generate huge volumes of raw data, which require network structuring and efficient collaboration between nodes to ensure efficient transmission. In order to reduce the amount of data carried in the network, data aggregation is used in WSNs to define a policy of data fusion and compression. In this paper, we investigate a model for data aggregation in a dense WSN with a single sink. The model divides a circular coverage region centered at the sink into patches which are intersections of sectors of concentric rings, and data in each patch is aggregated at a single node before transmission. Nodes only communicate with other nodes in the same sector. Based on these assumptions, we formulate a linear programming problem to maximize system lifetime by minimizing the maximum proportionate energy consumption over all nodes. Under a wide variety of conditions, the optimal solution employs two transmission mechanisms: direct transmission, in which nodes send information directly to the sink; and stepwise transmission, in which nodes transmit information to adjacent nodes. An exact formula is given for the proportionate energy consumption rate of the network. Asymptotic forms of this exact solution are also derived, and are verified to agree with the linear programming solution. We investigate three strategies for improving system lifetime: nonuniform energy and information density; iterated compression; and modifications of rings. We conclude that iterated compression has the biggest effect in increasing system lifetime.
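As a rough illustration of how "minimizing the maximum proportionate energy consumption" can be posed as a linear program, the standard epigraph form of a min-max problem is sketched below. All symbols are assumptions introduced here and are not taken from the paper: $x_j \ge 0$ are transmission rates along the allowed links, $a_{ij}$ is the energy node $i$ spends per unit of flow $x_j$, $E_i$ is the energy available at node $i$, and $Bx = b$ stands for the flow-conservation constraints that route all aggregated data to the sink.

$$
\begin{aligned}
\min_{x,\,t}\quad & t \\
\text{subject to}\quad & \sum_j a_{ij}\, x_j \le t\, E_i \quad \text{for every node } i, \\
& Bx = b, \\
& x \ge 0 .
\end{aligned}
$$

Under these assumptions, $t$ bounds the largest fraction of its energy that any node consumes per unit time, so minimizing $t$ corresponds to maximizing the time until the first node is depleted.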

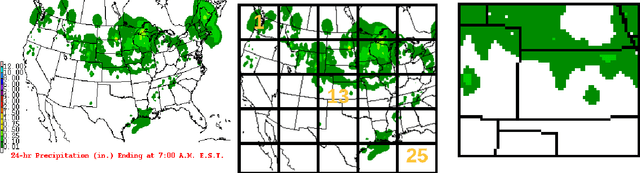

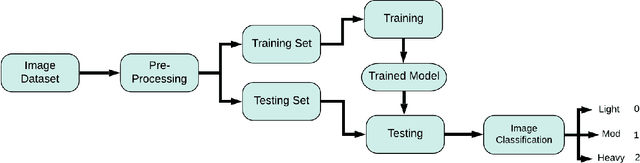

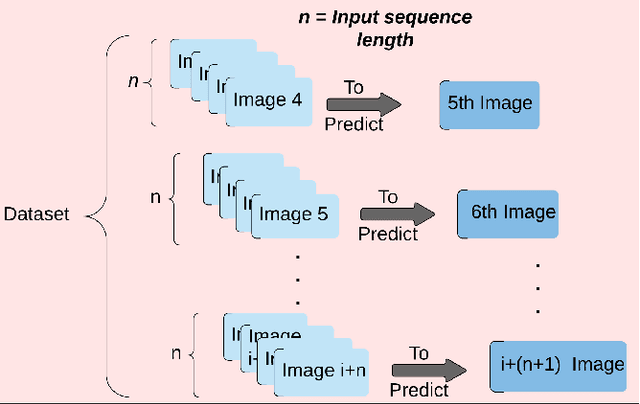

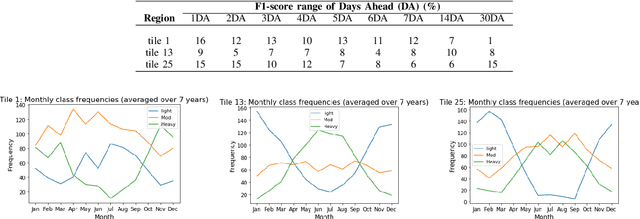

Regional Rainfall Prediction Using Support Vector Machine Classification of Large-Scale Precipitation Maps

Jul 30, 2020

Abstract: Rainfall prediction helps planners anticipate potential social and economic impacts produced by too much or too little rain. This research investigates a class-based approach to rainfall prediction from 1 to 30 days in advance. The study made regional predictions based on sequences of daily rainfall maps of the continental US, with rainfall quantized at three levels: light or no rain; moderate rain; and heavy rain. Three regions were selected, corresponding to three squares from a $5\times5$ grid covering the map area. Rainfall predictions up to 30 days ahead for these three regions were based on a support vector machine (SVM) applied to consecutive sequences of prior daily rainfall map images. The results show that predictions for corner squares in the grid were less accurate than predictions obtained by a simple untrained classifier. However, SVM predictions for a central region outperformed the other two regions, as well as the untrained classifier. We conclude that there is some evidence that SVMs applied to large-scale precipitation maps can under some conditions give useful information for predicting regional rainfall, but care must be taken to avoid pitfall
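The quantization and grid selection described in this abstract can be sketched in plain Python. This is a minimal illustration, not the study's code: the thresholds `MODERATE_MM` and `HEAVY_MM`, the helper names, and the choice to label a region by its mean rainfall are all assumptions made here, since the abstract does not give those details.

```python
from typing import List, Sequence

# Hypothetical cut-offs in mm/day; the abstract does not state the real ones.
MODERATE_MM = 2.5
HEAVY_MM = 10.0


def quantize(rain_mm: float) -> int:
    """Map a rainfall amount to 0 (light or none), 1 (moderate), or 2 (heavy)."""
    if rain_mm >= HEAVY_MM:
        return 2
    if rain_mm >= MODERATE_MM:
        return 1
    return 0


def grid_cell(rain_map: Sequence[Sequence[float]], row: int, col: int,
              n: int = 5) -> List[List[float]]:
    """Return the block of a 2-D map that falls in cell (row, col) of an n x n grid."""
    height, width = len(rain_map), len(rain_map[0])
    r0, r1 = row * height // n, (row + 1) * height // n
    c0, c1 = col * width // n, (col + 1) * width // n
    return [list(line[c0:c1]) for line in rain_map[r0:r1]]


def regional_class(rain_map: Sequence[Sequence[float]], row: int, col: int,
                   n: int = 5) -> int:
    """Label a grid cell by quantizing its mean rainfall (an assumed rule)."""
    values = [v for line in grid_cell(rain_map, row, col, n) for v in line]
    return quantize(sum(values) / len(values))


# Toy 10 x 10 map: heavy rain in the top-left grid cell, dry elsewhere.
toy_map = [[20.0 if r < 2 and c < 2 else 0.0 for c in range(10)]
           for r in range(10)]
corner_label = regional_class(toy_map, 0, 0)   # top-left corner square
center_label = regional_class(toy_map, 2, 2)   # central square
```

Labels produced this way for a chosen square, one per day, would be the targets that a classifier such as an SVM is trained to predict from the preceding sequence of full maps.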
