Christoph Schaefer

Enriching Verbal Feedback from Usability Testing: Automatic Linking of Thinking-Aloud Recordings and Stimulus using Eye Tracking and Mouse Data

Jul 11, 2023

Abstract: The think-aloud method is an important and commonly used tool for usability optimization. However, analyzing think-aloud data can be time-consuming. In this paper, we propose an automatic analysis of verbal protocols and test whether spoken feedback can be linked to the stimulus using eye tracking and mouse tracking. The resulting data (user feedback linked to a specific area of the stimulus) could be used to let an expert review the feedback on specific web page elements or to visualize the parts of the web page on which feedback was given. Specifically, we test whether participants fixate on, or point the mouse at, the web page content they are verbalizing. During testing, participants were shown three websites and asked to give their opinion aloud. Their verbal responses, along with their eye and cursor movements, were recorded. We compared the hit rate, defined as the percentage of verbally mentioned areas of interest (AOIs) that were fixated with the gaze or pointed to with the mouse. The results revealed a significantly higher hit rate for the gaze data than for the mouse data. Further investigation showed that, while the mouse was mostly used passively for scrolling, the gaze was often directed toward relevant AOIs, establishing a strong association between spoken words and stimuli. Therefore, eye tracking data may provide more detailed information and more valuable insight into the verbalizations than mouse data.
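The hit-rate metric defined in the abstract can be sketched as follows. This is a minimal illustration, not the authors' implementation. It assumes rectangular AOIs in page coordinates (scroll offset already applied), verbal mentions that are already transcribed and time-stamped as (AOI id, start, end) intervals, and a hypothetical time tolerance `window_s` around each mention; the names `Rect`, `hit_rate` and `window_s` are illustrative. The same function scores either gaze samples or mouse-cursor samples.

```python
from dataclasses import dataclass
from typing import Dict, List, Tuple

# A sample is (timestamp_s, x, y): a gaze point or a mouse-cursor position
# in page coordinates (scroll offset already applied). Assumed format.
Sample = Tuple[float, float, float]
# A mention is (aoi_id, start_s, end_s): when the participant verbalizes an AOI.
Mention = Tuple[str, float, float]


@dataclass(frozen=True)
class Rect:
    """Axis-aligned AOI bounding box in page coordinates (pixels)."""
    x: float
    y: float
    w: float
    h: float

    def contains(self, px: float, py: float) -> bool:
        return self.x <= px <= self.x + self.w and self.y <= py <= self.y + self.h


def hit_rate(mentions: List[Mention], aois: Dict[str, Rect],
             samples: List[Sample], window_s: float = 2.0) -> float:
    """Percentage of mentioned AOIs hit by at least one sample that lies
    inside the AOI within [start - window_s, end + window_s].
    window_s is a hypothetical tolerance, not a value from the paper."""
    if not mentions:
        return 0.0
    hits = 0
    for aoi_id, start, end in mentions:
        box = aois[aoi_id]
        if any(start - window_s <= t <= end + window_s and box.contains(x, y)
               for t, x, y in samples):
            hits += 1
    return 100.0 * hits / len(mentions)


# Toy data (invented for illustration, not from the study).
aois = {"logo": Rect(0, 0, 200, 80), "search": Rect(600, 20, 300, 40)}
mentions = [("logo", 1.0, 2.0), ("search", 5.0, 6.0)]
gaze = [(1.5, 50, 40), (5.5, 700, 30)]
mouse = [(1.5, 400, 400), (5.5, 400, 400)]  # cursor parked while scrolling

print(hit_rate(mentions, aois, gaze))   # 100.0
print(hit_rate(mentions, aois, mouse))  # 0.0
```

In this toy example a cursor left in one place while the participant scrolls scores no hits, a miniature version of the passive mouse use reported above; the numbers are made up and are not results from the study.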

Robust Dead Reckoning: Calibration, Covariance Estimation, Fusion and Integrity Monitoring

Jan 06, 2018

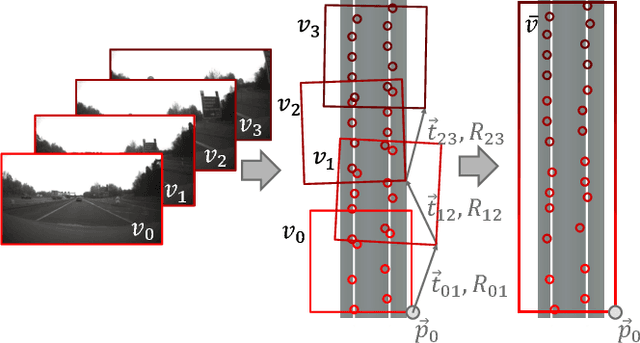

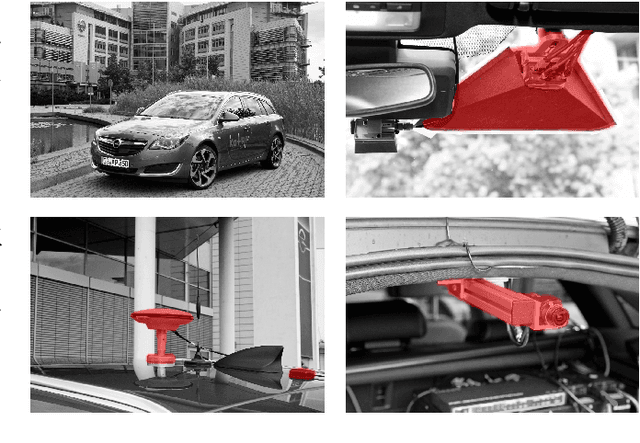

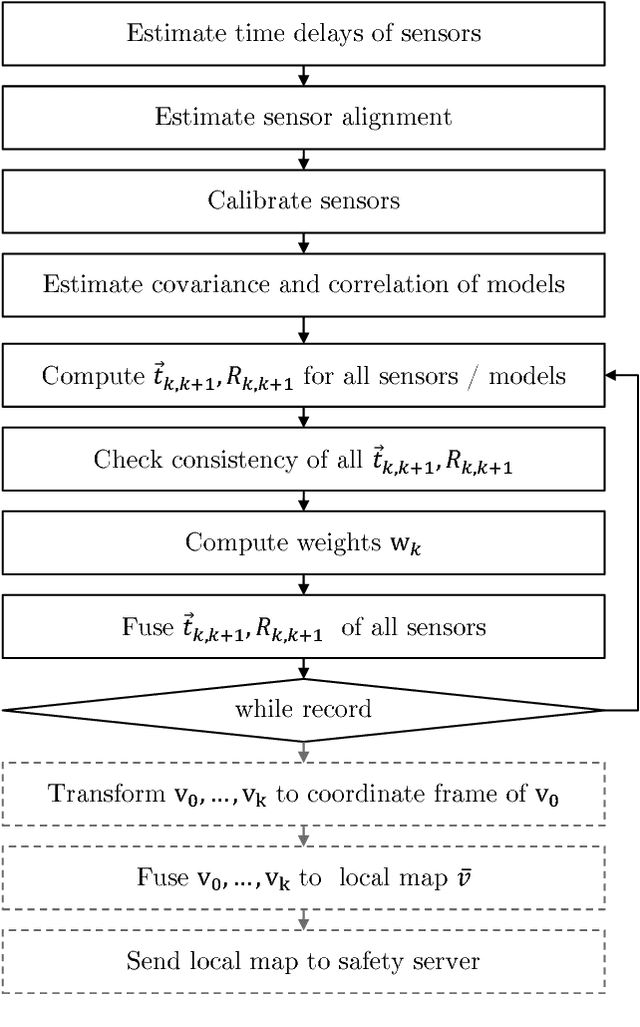

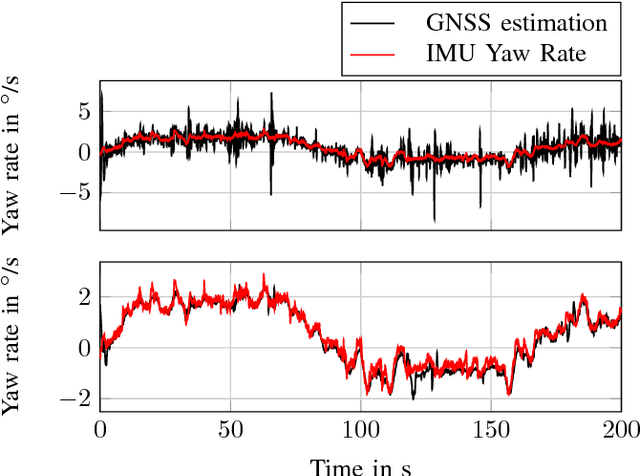

Abstract: Measuring system states and the local environment directly with high precision requires expensive sensors. However, highly accurate system states and environmental perception can also be achieved using data fusion techniques and digital maps. A crucial task in multi-sensor state estimation is to project different sensor measurements into the same temporal, spatial and physical domain, to estimate their covariance matrices, and to exclude erroneous measurements. This paper presents a generic approach for robust estimation of vehicle movement (odometry). We briefly present our calibration procedure, including the estimation of sensor alignments, offset and scaling errors, covariances and correlations, and time delays. An improved algorithm for wheel diameter estimation is presented. Additionally, we show an approach to robust odometry in which odometry estimates are fused under known covariances while outliers are detected using a chi-squared test. Using our robust odometry, local environmental views can be associated and fused. Furthermore, our robust odometry can be used to detect and exclude erroneous position estimates.
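The fusion-and-gating step described in the abstract can be sketched as follows. This is a minimal illustration under stated assumptions, not the authors' algorithm. It assumes that each source delivers an independent estimate of the same two-dimensional quantity (here assumed to be speed and yaw rate) with a known covariance, that estimates are combined by inverse-covariance weighting, and that each source is checked leave-one-out with a Mahalanobis distance against a chi-squared gate at an assumed 99% confidence level. For 2 degrees of freedom the chi-squared quantile has the closed form -2 ln(1 - p), which avoids a statistics dependency. All function names and example numbers are hypothetical.

```python
import math

import numpy as np


def chi2_gate_2dof(confidence: float) -> float:
    """Chi-squared quantile for 2 degrees of freedom: -2 ln(1 - p)."""
    return -2.0 * math.log(1.0 - confidence)


def fuse(estimates, covariances):
    """Inverse-covariance (information-weighted) fusion of independent estimates."""
    infos = [np.linalg.inv(C) for C in covariances]
    P = np.linalg.inv(sum(infos))
    x = P @ sum(W @ z for W, z in zip(infos, estimates))
    return x, P


def robust_fuse(estimates, covariances, confidence=0.99):
    """Repeatedly drop the estimate most inconsistent with the fusion of the
    others (Mahalanobis distance vs. chi-squared gate) until all remaining pass."""
    gate = chi2_gate_2dof(confidence)
    active = list(range(len(estimates)))
    # With two or fewer sources, a conflict cannot be attributed to one of them.
    while len(active) > 2:
        worst, worst_d2 = None, gate
        for i in active:
            others = [j for j in active if j != i]
            x_o, P_o = fuse([estimates[j] for j in others],
                            [covariances[j] for j in others])
            r = estimates[i] - x_o
            S = covariances[i] + P_o  # residual covariance, assuming independence
            d2 = float(r @ np.linalg.solve(S, r))
            if d2 > worst_d2:
                worst, worst_d2 = i, d2
        if worst is None:  # every remaining source passes the gate
            break
        active.remove(worst)
    x, P = fuse([estimates[j] for j in active], [covariances[j] for j in active])
    return x, P, active


# Hypothetical sources, each estimating [speed (m/s), yaw rate (rad/s)].
z = [np.array([10.0, 0.10]), np.array([10.1, 0.11]), np.array([14.0, 0.10])]
R = [np.diag([0.04, 4e-4]), np.diag([0.09, 9e-4]), np.diag([0.04, 4e-4])]
x, P, kept = robust_fuse(z, R)
print(kept)  # [0, 1]: the outlying third source is rejected
```

Removing only the single worst source per iteration, rather than every source that fails the gate at once, is a design choice meant to keep one faulty input from pulling the leave-one-out reference far enough that healthy sources also fail the test.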
