Chris P. Tsokos

Deep and Statistical Learning in Biomedical Imaging: State of the Art in 3D MRI Brain Tumor Segmentation

Mar 09, 2021

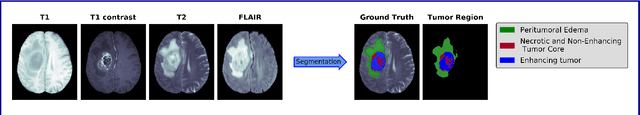

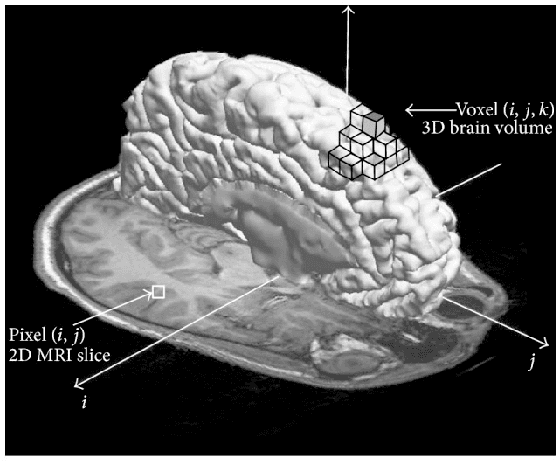

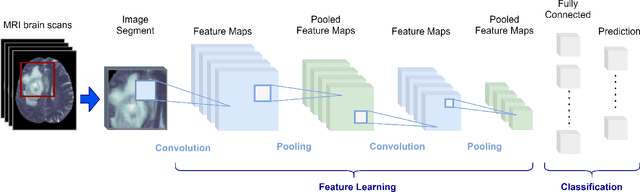

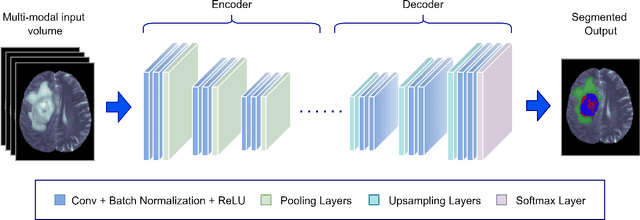

Abstract: Clinical diagnostic and treatment decisions rely upon the integration of patient-specific data with clinical reasoning. Cancer presents a unique context that influences treatment decisions, given its diverse forms of disease evolution. Biomedical imaging allows noninvasive assessment of disease based on visual evaluations, leading to better clinical outcome prediction and therapeutic planning. Early methods of brain cancer characterization predominantly relied upon statistical modeling of neuroimaging data. Driven by breakthroughs in computer vision, deep learning became the de facto standard in the domain of medical imaging. Integrated statistical and deep learning methods have recently emerged as a new direction in the automation of medical practice, unifying multi-disciplinary knowledge in medicine, statistics, and artificial intelligence. In this study, we critically review major statistical and deep learning models and their applications in brain imaging research, with a focus on MRI-based brain tumor segmentation. The results highlight that model-driven classical statistics and data-driven deep learning are a potent combination for developing automated systems in clinical oncology.

Honey Bee Dance Modeling in Real-time using Machine Learning

May 20, 2017

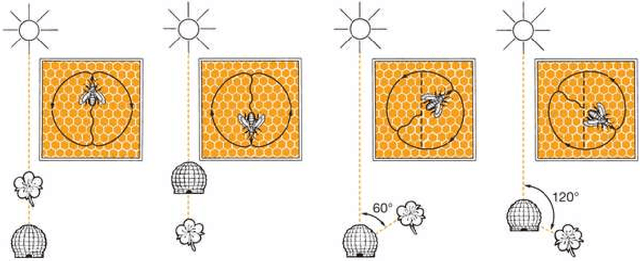

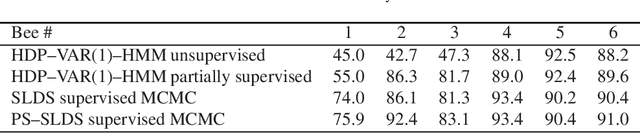

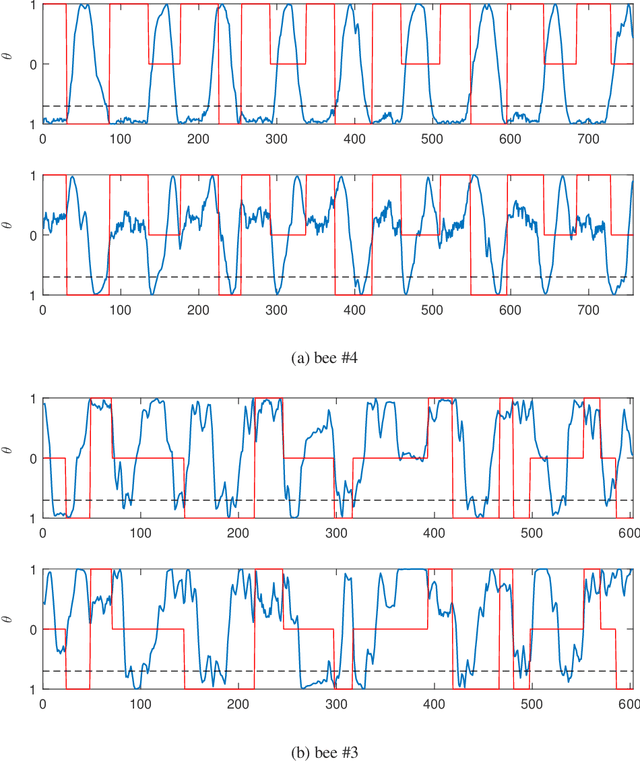

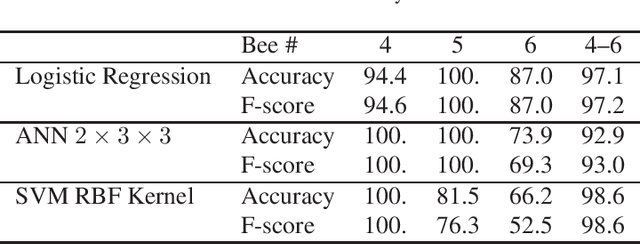

Abstract: The waggle dance that honeybees perform is an astonishing way of communicating the location of a food source. More than 60 years after its discovery, researchers still rely on manual labeling, watching hours of dance videos to detect the transitions between dance components and thereby extract information about the distance and direction to the food source. We propose an automated process to monitor and segment the different components of the honeybee waggle dance. The process is highly accurate, runs in real time, and can use shared information between multiple dances.
