Chirag Sharma

Environment Maps: Structured Environmental Representations for Long-Horizon Agents

Mar 24, 2026

Abstract:Although large language models (LLMs) have advanced rapidly, robust automation of complex software workflows remains an open problem. In long-horizon settings, agents frequently suffer from cascading errors and environmental stochasticity; a single misstep in a dynamic interface can lead to task failure, resulting in hallucinations or trial-and-error. This paper introduces $\textit{Environment Maps}$: a persistent, agent-agnostic representation that mitigates these failures by consolidating heterogeneous evidence, such as screen recordings and execution traces, into a structured graph. The representation consists of four core components: (1) Contexts (abstracted locations), (2) Actions (parameterized affordances), (3) Workflows (observed trajectories), and (4) Tacit Knowledge (domain definitions and reusable procedures). We evaluate this framework on the WebArena benchmark across five domains. Agents equipped with environment maps achieve a 28.2% success rate, nearly doubling the performance of baselines limited to session-bound context (14.2%) and outperforming agents that have access to the raw trajectory data used to generate the environment maps (23.3%). By providing a structured interface between the model and the environment, Environment Maps establish a persistent foundation for long-horizon planning that is human-interpretable, editable, and incrementally refinable.
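The four components named in the abstract can be pictured as a small graph structure. The following is only an illustrative sketch, not the paper's implementation: the class names, field layouts, and the `find_path` helper are assumptions made for this example, and the abstract does not specify how the graph is stored or queried.

```python
from collections import deque
from dataclasses import dataclass, field


@dataclass
class Action:
    """A parameterized affordance leading from one context to another (hypothetical layout)."""
    name: str
    source: str
    target: str
    parameters: tuple = ()


@dataclass
class EnvironmentMap:
    """Hypothetical container for the four components listed in the abstract."""
    contexts: dict = field(default_factory=dict)         # context id -> description
    actions: list = field(default_factory=list)          # edges between contexts
    workflows: dict = field(default_factory=dict)        # task name -> observed action names
    tacit_knowledge: dict = field(default_factory=dict)  # term -> definition or procedure

    def add_action(self, action):
        # Register both endpoints as contexts so the graph stays consistent.
        for ctx in (action.source, action.target):
            self.contexts.setdefault(ctx, "")
        self.actions.append(action)

    def affordances(self, context):
        # Actions available from a given context.
        return [a for a in self.actions if a.source == context]

    def find_path(self, start, goal):
        # Breadth-first search over contexts; returns action names or None.
        queue = deque([(start, [])])
        seen = {start}
        while queue:
            ctx, path = queue.popleft()
            if ctx == goal:
                return path
            for a in self.affordances(ctx):
                if a.target not in seen:
                    seen.add(a.target)
                    queue.append((a.target, path + [a.name]))
        return None


env = EnvironmentMap()
env.add_action(Action("open_orders", "home", "orders"))
env.add_action(Action("open_order", "orders", "order_detail", ("order_id",)))
print(env.find_path("home", "order_detail"))
```

Because the structure is plain data, a person could inspect or edit it directly, which is the kind of interpretability and editability the abstract claims.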

DIGITOUR: Automatic Digital Tours for Real-Estate Properties

Jan 17, 2023

Abstract:A virtual or digital tour is a form of virtual reality technology that allows a user to experience a specific location remotely. Currently, these virtual tours are created by following a two-step strategy. First, a photographer captures 360-degree equirectangular images; then, a team of annotators manually links these images to create the "walkthrough" user experience. The major challenge in the mass adoption of virtual tours is the time and cost involved in manually annotating and linking images. Therefore, this paper presents an end-to-end pipeline to automate the generation of 3D virtual tours from equirectangular images of real-estate properties. We propose a novel HSV-based coloring scheme for paper tags that are placed at different locations before the equirectangular images are captured with 360-degree cameras. These tags have two characteristics: i) they are numbered, which helps the photographer place them in sequence; and ii) they are bi-colored, which allows better learning of the tag detection (using the YOLOv5 architecture) and digit recognition (using a custom MobileNet architecture) tasks. Finally, we link/connect all the equirectangular images based on the detected tags. We show the efficiency of the proposed pipeline on a real-world equirectangular image dataset collected from the Housing.com database.
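The final linking step can be illustrated with a small sketch. The abstract does not state the linking rule, so this example assumes one: two images are connected when the same tag number was detected in both. The function name `link_images`, the file names, and the input format are hypothetical, and the detection and digit-recognition stages are taken as already done.

```python
from collections import defaultdict
from itertools import combinations


def link_images(detections):
    """Connect images that share at least one detected tag number.

    detections: dict mapping image name -> set of tag numbers recognized in it
    (assumed to come from upstream detection and digit-recognition models).
    Returns a dict mapping image name -> sorted list of linked image names.
    """
    # Group images by the tag numbers seen in them.
    images_by_tag = defaultdict(set)
    for image, tags in detections.items():
        for tag in tags:
            images_by_tag[tag].add(image)

    # Every pair of images sharing a tag gets a bidirectional link.
    links = defaultdict(set)
    for images in images_by_tag.values():
        for a, b in combinations(sorted(images), 2):
            links[a].add(b)
            links[b].add(a)

    return {image: sorted(links[image]) for image in detections}


detections = {
    "hall.jpg": {1, 2},
    "kitchen.jpg": {2},
    "bedroom.jpg": {1, 3},
    "balcony.jpg": {4},
}
print(link_images(detections))
```

An image whose tags appear nowhere else ends up with no links, which would flag a missed detection or a misplaced tag under this assumed rule.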

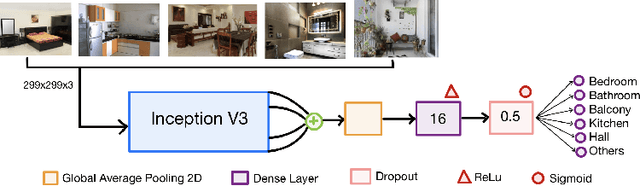

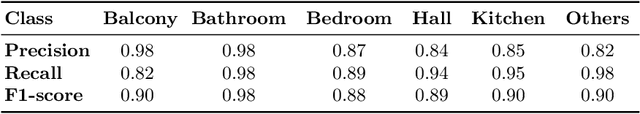

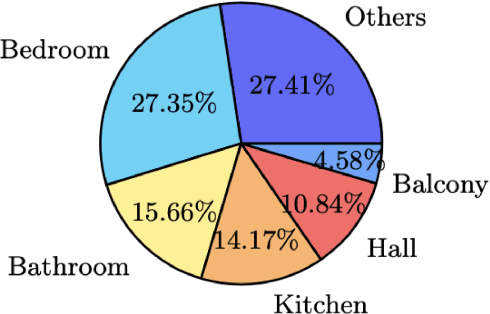

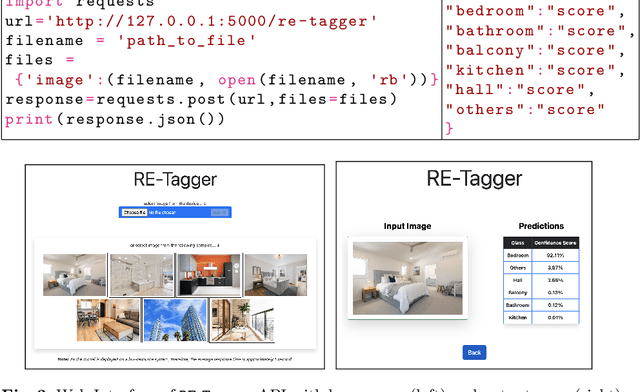

RE-Tagger: A light-weight Real-Estate Image Classifier

Jul 12, 2022

Abstract:Real-estate image tagging is an essential use case that saves the effort involved in manual annotation and enhances the user experience. This paper proposes an end-to-end pipeline (referred to as RE-Tagger) for the real-estate image classification problem. We present a two-stage transfer learning approach using a custom InceptionV3 architecture to classify images into different categories (i.e., bedroom, bathroom, kitchen, balcony, hall, and others). Finally, we released the application as a REST API, hosted as a web application running on a 2-core machine with 2 GB of RAM. The demo video is available here.
