Chih-Chun Wang

Princeton University

Beyond Freshness and Semantics: A Coupon-Collector Framework for Effective Status Updates

Mar 27, 2026

Abstract: For status update systems operating over unreliable energy-constrained wireless channels, we address Weaver's long-standing Level-C question: do my packets actually improve the plant's behavior? Each fresh sample carries a stochastic expiration time -- governed by the plant's instability dynamics -- after which the information becomes useless for control. Casting the problem as a coupon-collector variant with expiring coupons, we (i) formulate a two-dimensional average-reward MDP, (ii) prove that the optimal schedule is doubly thresholded in the receiver's freshness timer and the sender's stored lifetime, (iii) derive a closed-form policy for deterministic lifetimes, and (iv) design a Structure-Aware Q-learning algorithm (SAQ) that learns the optimal policy without knowing the channel success probability or lifetime distribution. Simulations validate our theoretical predictions: SAQ matches optimal Value Iteration performance while converging significantly faster than baseline Q-learning, and expiration-aware scheduling achieves up to 50% higher reward than age-based baselines by adapting transmissions to state-dependent urgency -- thereby delivering Level-C effectiveness under tight resource constraints.

Bandit Problems with Side Observations

Jan 22, 2005

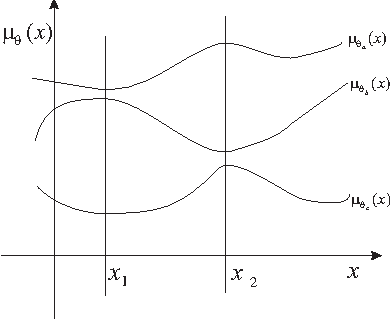

Abstract: An extension of the traditional two-armed bandit problem is considered, in which the decision maker has access to some side information before deciding which arm to pull. At each time t, before making a selection, the decision maker is able to observe a random variable X_t that provides some information on the rewards to be obtained. The focus is on finding uniformly good rules (that minimize the growth rate of the inferior sampling time) and on quantifying how much the additional information helps. Various settings are considered and for each setting, lower bounds on the achievable inferior sampling time are developed and asymptotically optimal adaptive schemes achieving these lower bounds are constructed.
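
To make the setting in this abstract concrete, the following is a minimal simulation sketch, not the scheme constructed in the paper. It assumes a binary side observation X_t, Bernoulli rewards with made-up means, and uses the familiar UCB1 index rule run separately for each value of X_t as a stand-in policy. The function name `simulate` and all parameter values are hypothetical. It counts the inferior sampling time, meaning the number of pulls of the arm that is worse for the observed X_t.

```python
import math
import random


def simulate(horizon=2000, seed=0):
    """Two-armed bandit with a binary side observation X_t.

    Returns the inferior sampling time: how often the arm that is
    worse for the observed X_t was pulled. All numbers are assumed.
    """
    rng = random.Random(seed)
    # Hypothetical reward means, indexed as means[x][arm].
    means = {0: (0.8, 0.2), 1: (0.2, 0.8)}
    counts = {x: [0, 0] for x in means}    # pulls per (x, arm)
    sums = {x: [0.0, 0.0] for x in means}  # total reward per (x, arm)
    inferior = 0

    for _ in range(horizon):
        x = rng.randint(0, 1)  # side observation, seen before choosing
        n = counts[x]
        if 0 in n:
            # Pull each arm once per context before using the index.
            arm = n.index(0)
        else:
            total = n[0] + n[1]
            # UCB1 index: empirical mean plus exploration bonus.
            ucb = [
                sums[x][a] / n[a] + math.sqrt(2.0 * math.log(total) / n[a])
                for a in (0, 1)
            ]
            arm = 0 if ucb[0] >= ucb[1] else 1

        reward = 1.0 if rng.random() < means[x][arm] else 0.0
        counts[x][arm] += 1
        sums[x][arm] += reward

        best = 0 if means[x][0] >= means[x][1] else 1
        if arm != best:
            inferior += 1

    return inferior


if __name__ == "__main__":
    print("inferior sampling time:", simulate())
```

In this toy instance the better arm flips with X_t, so a rule that ignored the side observation would see two arms with equal average reward and could not do better than pulling the inferior arm about half the time. Conditioning on X_t is what lets the inferior sampling time grow slowly here.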
